Pinned Tweet

Nitish

472 posts

Today we are open-sourcing rbot, an end-to-end AMR simulation stack that makes launching ROS 2 with Gazebo or Isaac Sim as easy as three commands.

Try it today - github.com/rlxai/rbot

Robolabs AI@RobolabsAI

Introducing rbot, an open-source AMR simulation stack for ROS 2 Jazzy, Gazebo Harmonic, and Isaac Sim. Repo: github.com/rlxai/rbot Demo: youtu.be/B-d64c-2Mw0 We welcome your valuable feedback and contributions. @OpenRoboticsOrg @ROSIndustrial

Nitish retweeted

Newton Physics Engine: How NVIDIA, Google DeepMind & Disney Are Reshaping Sim-to-Real Robotics

Read our latest blog:

robolabs.ai/resources/blog…

Nitish retweeted

Behind the scenes of a $500B robotic pizza delivery model.

Read part 2 of our Robotics Industry Analysis blog series:

robolabs.ai/resources/blog…

Nitish retweeted

iRobot filed for bankruptcy last week. Anki shut down overnight. Jibo became a paperweight. Three case studies reveal why $300M+ in funding wasn't enough.

Read our latest blog:

robolabs.ai/resources/blog…

@cursor_ai It would be great if we could easily monitor costs right within the editor.

We recently updated our pricing, but missed the mark.

We're refunding affected customers and clarifying how our pricing works.

cursor.com/blog/june-2025…

Nitish retweeted

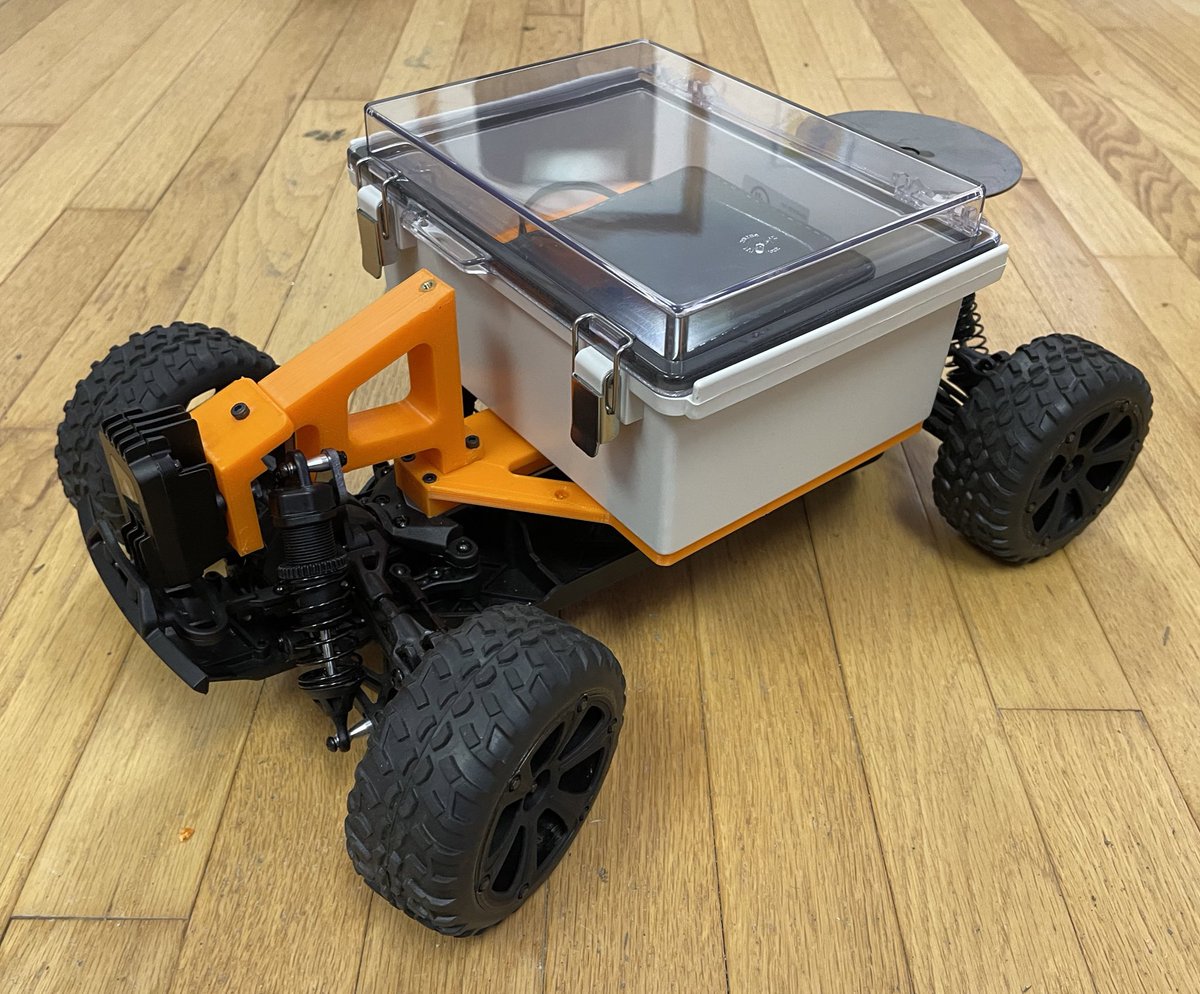

@TheRealFergs I would like to use that mount with attribution, if possible. Do you mind sharing the model files? TIA

#RoboMagellan #robot coming together. Having a super fast 3D printer has made this really fun.

When your insecurities talk

Sheel Mohnot@pitdesi

Zuck on the Apple Vision Pro. He's still got fire in him, love to see it.

Nitish retweeted

I've made that point before:

- LLM: 1E13 tokens x 0.75 word/token x 2 bytes/token = 1E13 bytes.

- 4 year old child: 16k wake hours x 3600 s/hour x 1E6 optical nerve fibers x 2 eyes x 10 bytes/s = 1E15 bytes.

In 4 years, a child has seen 50 times more data than the biggest LLMs.

1E13 tokens is pretty much all the quality text publicly available on the Internet. It would take 170k years for a human to read (8 h/day, 250 word/minute).

Text is simply too low bandwidth and too scarce a modality to learn how the world works.

Video is more redundant, but redundancy is precisely what you need for Self-Supervised Learning to work well.

Incidentally, 16k hours of video is about 30 minutes of YouTube uploads.
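The arithmetic in this post can be re-run as a quick back-of-envelope check. This is only a sketch using the figures quoted above; the variable names are illustrative, and the 365-day year is an assumption the post does not state.

```python
# Back-of-envelope check of the figures quoted in the post.
# All inputs come from the post itself; names are illustrative.

# LLM side: 1E13 tokens x 0.75 word/token x 2 bytes (the post writes "2 bytes/token")
tokens = 1e13
words_per_token = 0.75
bytes_per_unit = 2
llm_bytes = tokens * words_per_token * bytes_per_unit

# Child side: 16k wake hours x 3600 s/hour x 1E6 fibers x 2 eyes x 10 bytes/s
wake_hours = 16_000
child_bytes = wake_hours * 3600 * 1e6 * 2 * 10

# Unrounded ratio of visual data to text data
ratio = child_bytes / llm_bytes

# Reading time: 250 words/minute, 8 hours/day, assuming 365 days/year
reading_years = tokens * words_per_token / 250 / 60 / 8 / 365

# Upload rate implied by "16k hours of video is about 30 minutes of uploads"
implied_upload_hours_per_minute = wake_hours / 30

print(f"LLM text:   {llm_bytes:.2e} bytes")
print(f"Child:      {child_bytes:.2e} bytes")
print(f"Ratio:      {ratio:.1f}x")
print(f"Reading:    {reading_years:,.0f} years")
print(f"Uploads:    {implied_upload_hours_per_minute:.0f} hours of video per minute")
```

Without rounding, the inputs give roughly 1.5E13 bytes of text against 1.15E15 bytes of visual input, so the quoted "50 times" and "170k years" are order-of-magnitude roundings of the same numbers.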

Tom Osman 🐦⬛@tomosman

"A 4-year-old child has seen 50x more information than the biggest LLMs that we have." - @ylecun 20 MB per second through the optical nerve for 16k wake hours 🤯 LLMs may have consumed all available text, but when it comes to other sensory inputs... they haven't even started.

Nitish retweeted

@TheRealFergs Looks great! What camera module are you using up on the front?
