Tarn 🌐🐙

3.3K posts

@somervta

Public Policy PhD student. Math, philosophy and politics nerd, Law nerd, Musical Theatre nerd, Nerd^2. he/him/his

@gmiller Here's a source. Now you retract time.com/6266923/ai-eli…

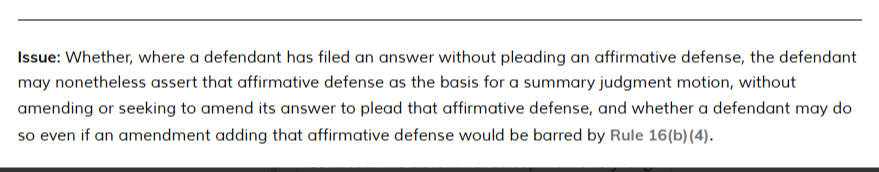

One Supreme Court certiorari grant today, likely to be argued next term. This is a complicated question about when an affirmative defense may be raised. The Eleventh Circuit opinion is very long and thorough, drafted "Per Curiam" (for the Court) by Judges Branch, Luck, and Lagoa.

Planned Parenthood just posted this on Instagram… and I have questions?!?

Here's the average:

In my data, here's the median number of sexual partners by age and gender:

The so-called “Machine Intelligence Research Institute” has never accomplished any research on machine intelligence. It serves two purposes, one of them to pay Eliezer Yudkowsky a fortune every year for doing absolutely nothing of importance, and the second, to help spread his cult’s propaganda. At one time, it pretended to do AI research, but it never accomplished any in spite of spending oceans of donor money.