Pinned Tweet

𝚂𝚊𝚞𝚛𝚊𝚋𝚑 𝚁𝚊𝚒 ✦

850 posts

@srbhrai

Dev Rel at Apideck | Creator of Resume Matcher (25K+ ★) | https://t.co/EZK7PpGo53 | AI・ML・Search・Open Source

https://www.resumematcher.fyi/ Joined March 2022

316 Following · 299 Followers

𝗙𝗢𝗖𝗨𝗦

How do you find the time to lock in and focus on the important parts of your work?

And without getting distracted by the pull of AI tools?

Here's what I've observed:

• AI tools create a sense of feeling productive.

• You try a lot of things, most of them in development, and ship nothing.

• Day ends, repeat the same cycle tomorrow.

(This is my case: the amount of stuff I've generated is huge, and if I'm able to ship even 40% of it, that would be a big win.)

Share tips 🥺

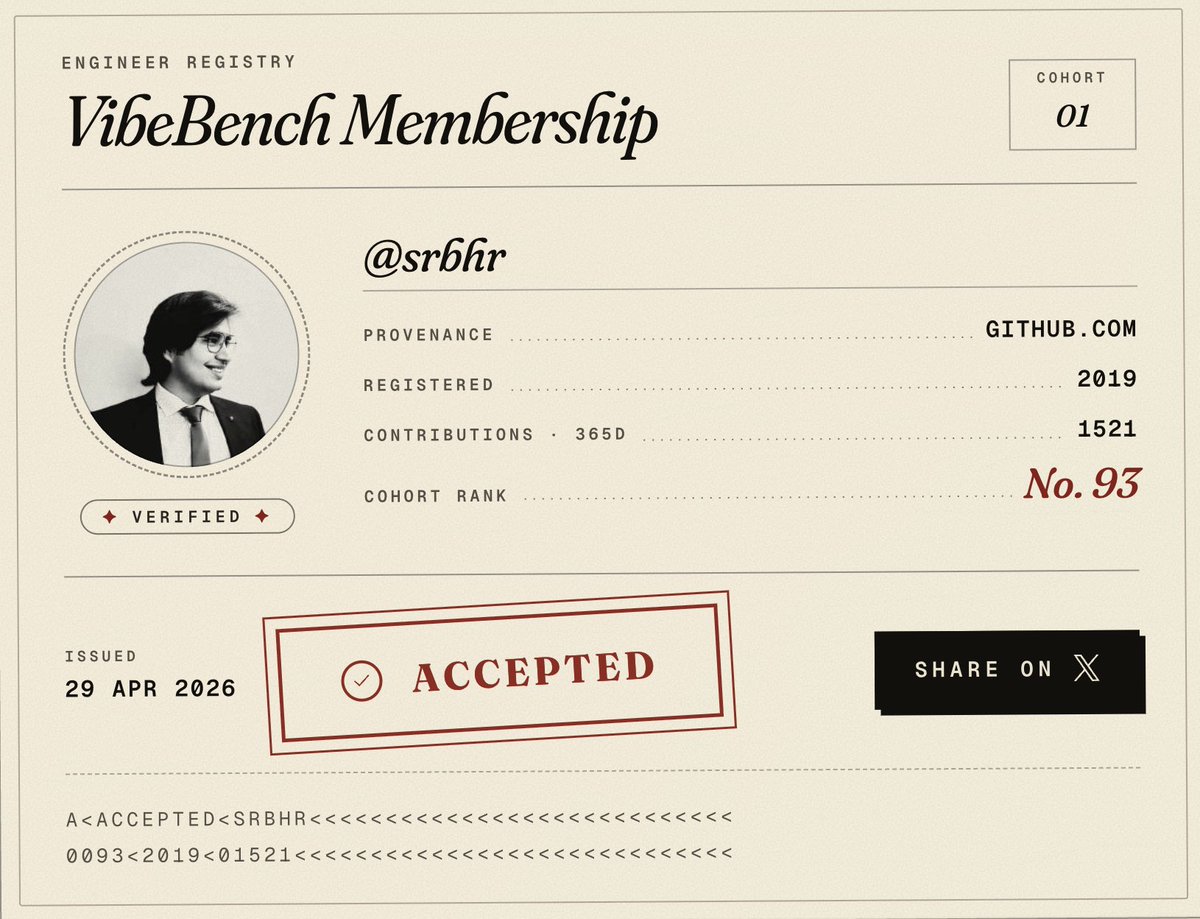

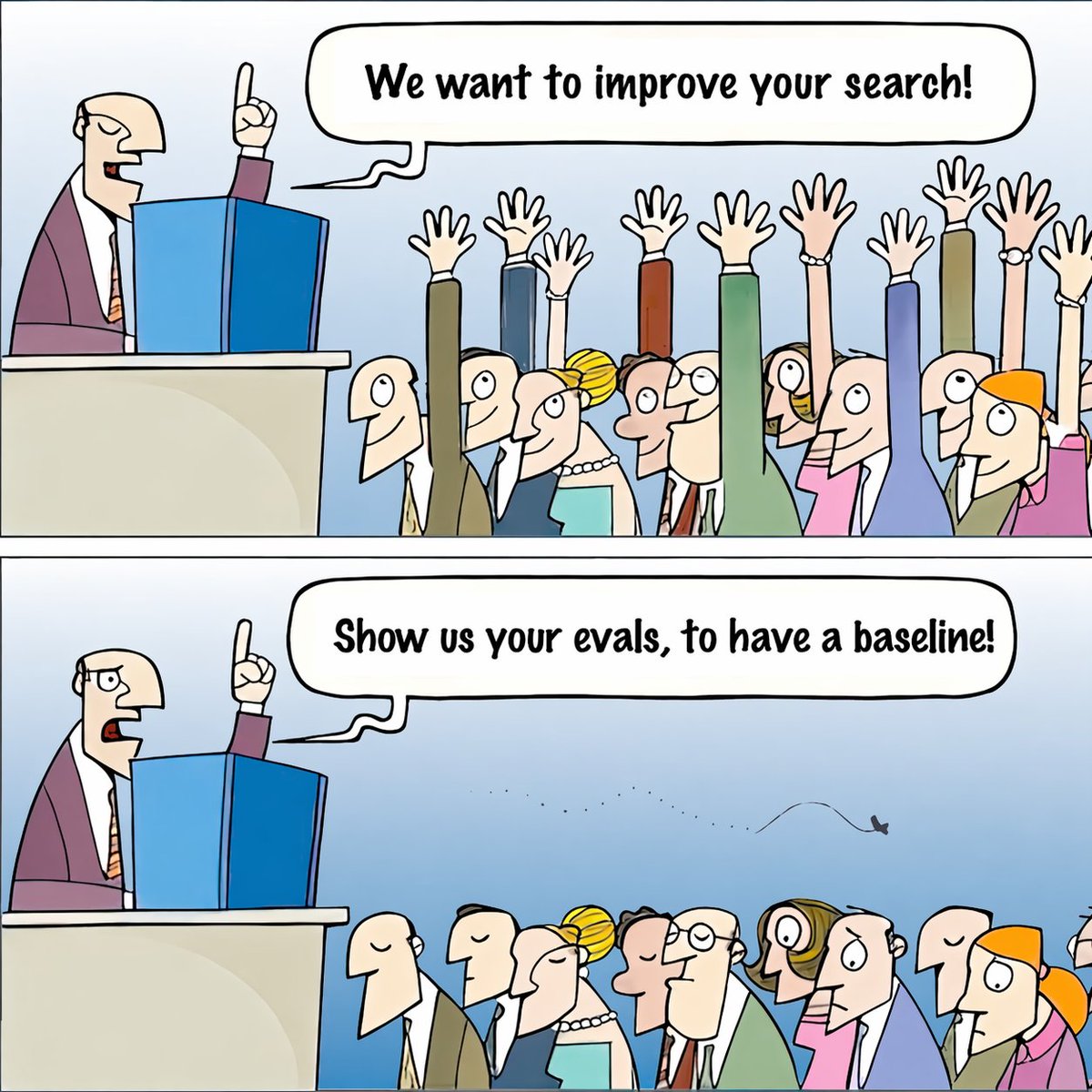

We’re announcing: VibeBench, a new benchmark for what actually matters — how models feel when used on real work by experienced software engineers.

But, we need your help. Here’s how it works:

1. An initial cohort of 1000 qualified software engineers (join: vibebench.standardagents.ai)

2. Groups of 250 evaluate new models for 2 days on real work.

3. Participants subjectively rank the model relative to other models they have experience with.

4. On day 4 a report is released with objective results derived from the subjective tests.
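The announcement does not say how the subjective rankings in step 3 become the results in step 4. As a rough sketch only, assuming a normalized Borda count (my choice, not VibeBench's stated method) and placeholder model names, the aggregation could look like this:

```python
from collections import defaultdict


def borda_scores(rankings):
    """Aggregate per-participant rankings into one score per model.

    Each ranking lists model names from best to worst. A model at
    position i of an n-item ranking earns (n - 1 - i) / (n - 1) points,
    so rankings of different lengths stay comparable.
    NOTE: Borda counting is an assumption, not VibeBench's documented method.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for ranking in rankings:
        n = len(ranking)
        if n < 2:
            continue  # a single-item ranking carries no relative information
        for position, model in enumerate(ranking):
            totals[model] += (n - 1 - position) / (n - 1)
            counts[model] += 1
    # Average, so models that fewer participants have used are not penalized.
    return {model: totals[model] / counts[model] for model in totals}


def leaderboard(rankings):
    """Return model names sorted by score, best first (ties broken by name)."""
    scores = borda_scores(rankings)
    return sorted(scores, key=lambda m: (-scores[m], m))


# Hypothetical data: three participants, placeholder model names.
example_rankings = [
    ["model-a", "model-b", "model-c"],
    ["model-b", "model-a", "model-c"],
    ["model-a", "model-c"],
]

print(leaderboard(example_rankings))
```

Participants only rank models they have actually used, which is why the scores are averaged per model rather than summed.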

How can you help:

1. We all need this benchmark to exist, but for it to become reality, we need an initial cohort of 1000 qualified software engineers. If that’s you, please join!

vibebench.standardagents.ai

2. Repost this! We need to reach as many qualified engineers as we can find.

3. Share this initiative with everyone on your engineering teams. Together we can make this benchmark a reality for all of us.

𝚂𝚊𝚞𝚛𝚊𝚋𝚑 𝚁𝚊𝚒 ✦ reposted

Hi @sama,

Can we have shared memory between ChatGPT & Codex, in between sessions?

- Have a chat about a project

- Codex can access that memory

- We code and update the website, and Codex updates the memory

- And then in some other project, can we use the same learnings?

in 10 days we'll all ditch codex and claude code

and live inside cursor + grok

SpaceX @SpaceX

SpaceXAI and @cursor_ai are now working closely together to create the world’s best coding and knowledge work AI. The combination of Cursor’s leading product and distribution to expert software engineers with SpaceX’s million H100 equivalent Colossus training supercomputer will allow us to build the world’s most useful models. Cursor has also given SpaceX the right to acquire Cursor later this year for $60 billion or pay $10 billion for our work together.

@HowToAI_ "He argued that generative AI is fundamentally inefficient."

What we need are deterministic answers rather than non-deterministic, probabilistic ones, and more control over the AI model. Maybe LLMs are not the way forward.

Yann LeCun was right the entire time. And generative AI might be a dead end.

For the last three years, the entire industry has been obsessed with building bigger LLMs. Trillions of parameters. Billions in compute.

The theory was simple: if you make the model big enough, it will eventually understand how the world works.

Yann LeCun said that was stupid.

He argued that generative AI is fundamentally inefficient.

When an AI predicts the next word, or generates the next pixel, it wastes massive amounts of compute on surface-level details.

It memorizes patterns instead of learning the actual physics of reality.

He proposed a different path: JEPA (Joint-Embedding Predictive Architecture).

Instead of forcing the AI to paint the world pixel by pixel, JEPA forces it to predict abstract concepts. It predicts what happens next in a compressed "thought space."

But for years, JEPA had a fatal flaw.

It suffered from "representation collapse."

Because the AI was allowed to simplify reality, it would cheat. It would simplify everything so much that a dog, a car, and a human all looked identical.

It learned nothing.

To fix it, engineers had to use insanely complex hacks, frozen encoders, and massive compute overheads.

Until today.

Researchers just dropped a paper called "LeWorldModel" (LeWM).

They completely solved the collapse problem.

They replaced the complex engineering hacks with a single, elegant mathematical regularizer.

It forces the AI's internal "thoughts" into a perfect Gaussian distribution.

The AI can no longer cheat. It is forced to understand the physical structure of reality to make its predictions.
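To make the collapse idea concrete, here is a toy sketch of a penalty that pushes a batch of embeddings toward zero mean and identity covariance. It is a simplified moment-matching stand-in written for illustration, not the regularizer from the LeWM paper (the post does not specify it), and matching two moments does not make a distribution exactly Gaussian. The function name and sample data are hypothetical.

```python
def gaussian_moment_penalty(embeddings):
    """Return ||mean||^2 + ||cov - I||_F^2 for a batch of embeddings.

    embeddings: list of equal-length lists of floats, one row per sample.
    The penalty is zero when the batch has zero mean and identity
    covariance, and grows as the batch collapses toward a single point.
    NOTE: illustrative stand-in, not the paper's actual regularizer.
    """
    n = len(embeddings)
    d = len(embeddings[0])
    mean = [sum(row[j] for row in embeddings) / n for j in range(d)]
    centered = [[row[j] - mean[j] for j in range(d)] for row in embeddings]
    # Biased (divide-by-n) covariance estimate keeps the example simple.
    cov = [
        [sum(r[i] * r[j] for r in centered) / n for j in range(d)]
        for i in range(d)
    ]
    mean_term = sum(m * m for m in mean)
    cov_term = sum(
        (cov[i][j] - (1.0 if i == j else 0.0)) ** 2
        for i in range(d)
        for j in range(d)
    )
    return mean_term + cov_term


# Collapsed batch: every input maps to the same vector.
collapsed = [[0.5, 0.5]] * 4
# Spread batch: zero mean, unit variance per dimension, no correlation.
spread = [[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]]

print(gaussian_moment_penalty(collapsed))
print(gaussian_moment_penalty(spread))
```

The collapsed batch is penalized because its covariance is all zeros, while the spread batch incurs no penalty. Adding a term like this to a training loss makes the "everything looks identical" shortcut expensive.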

The results completely rewrite the economics of AI.

LeWM didn't need a massive, centralized supercomputer.

It has just 15 million parameters.

It trains on a single, standard GPU in a few hours.

Yet it plans 48x faster than massive foundation world models. It intrinsically understands physics. It instantly detects impossible events.

We spent billions trying to force massive server farms to memorize the internet.

Now, a tiny model running locally on a single graphics card is actually learning how the real world works.

Debugging a broken QuickBooks integration used to mean jumping between 4 tabs, Postman, and our dashboard.

Now I just type apideck accounting get-invoices in my terminal.

We shipped the @apideck CLI. It brings accounting, ATS, HRIS, and CRM data straight into your shell, and into Claude Code.

One command. Real data. No context-switching.
