
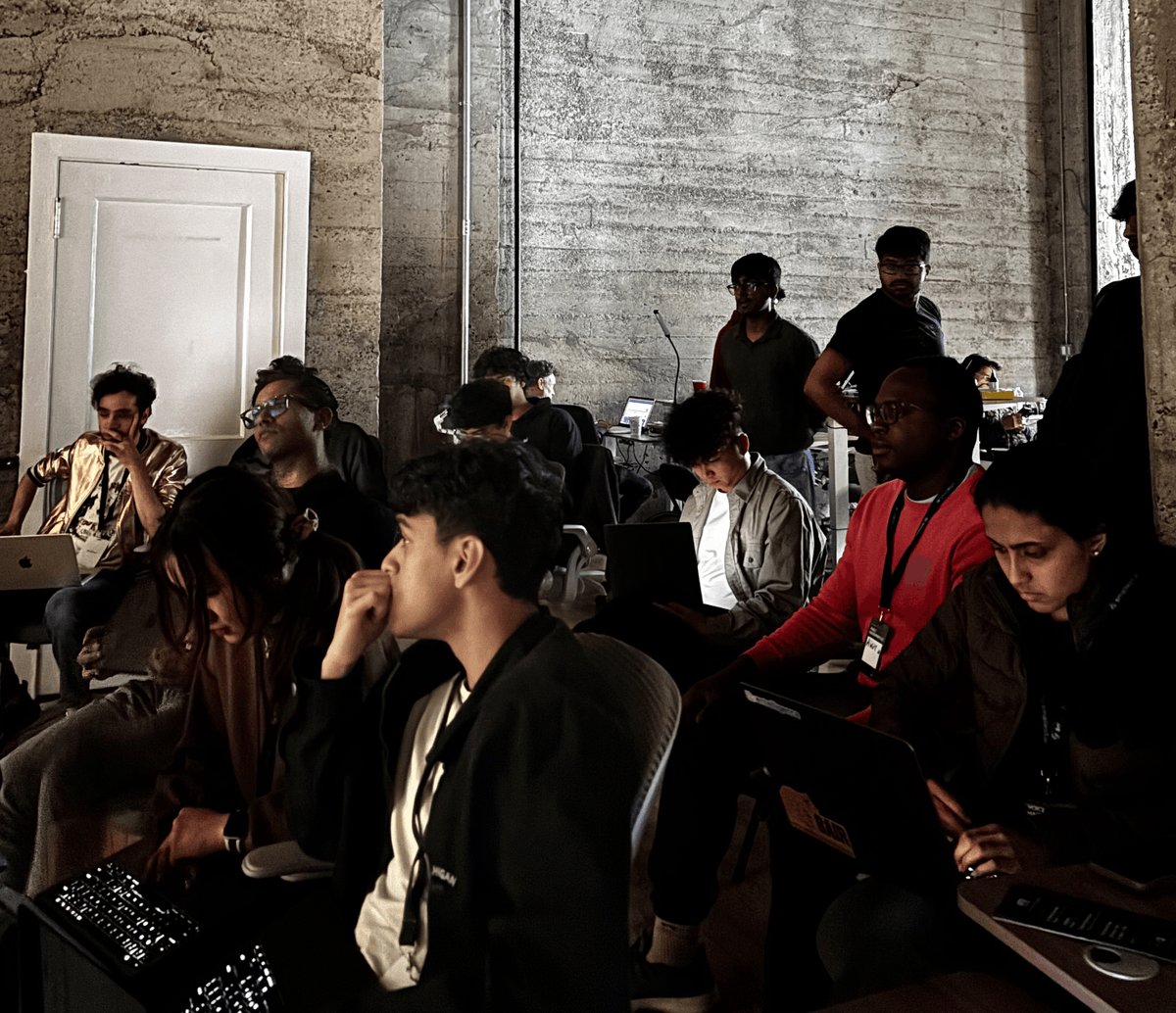

Last weekend we made our first conference appearance in SF, as Title sponsor of the AI+ Renaissance Conference.

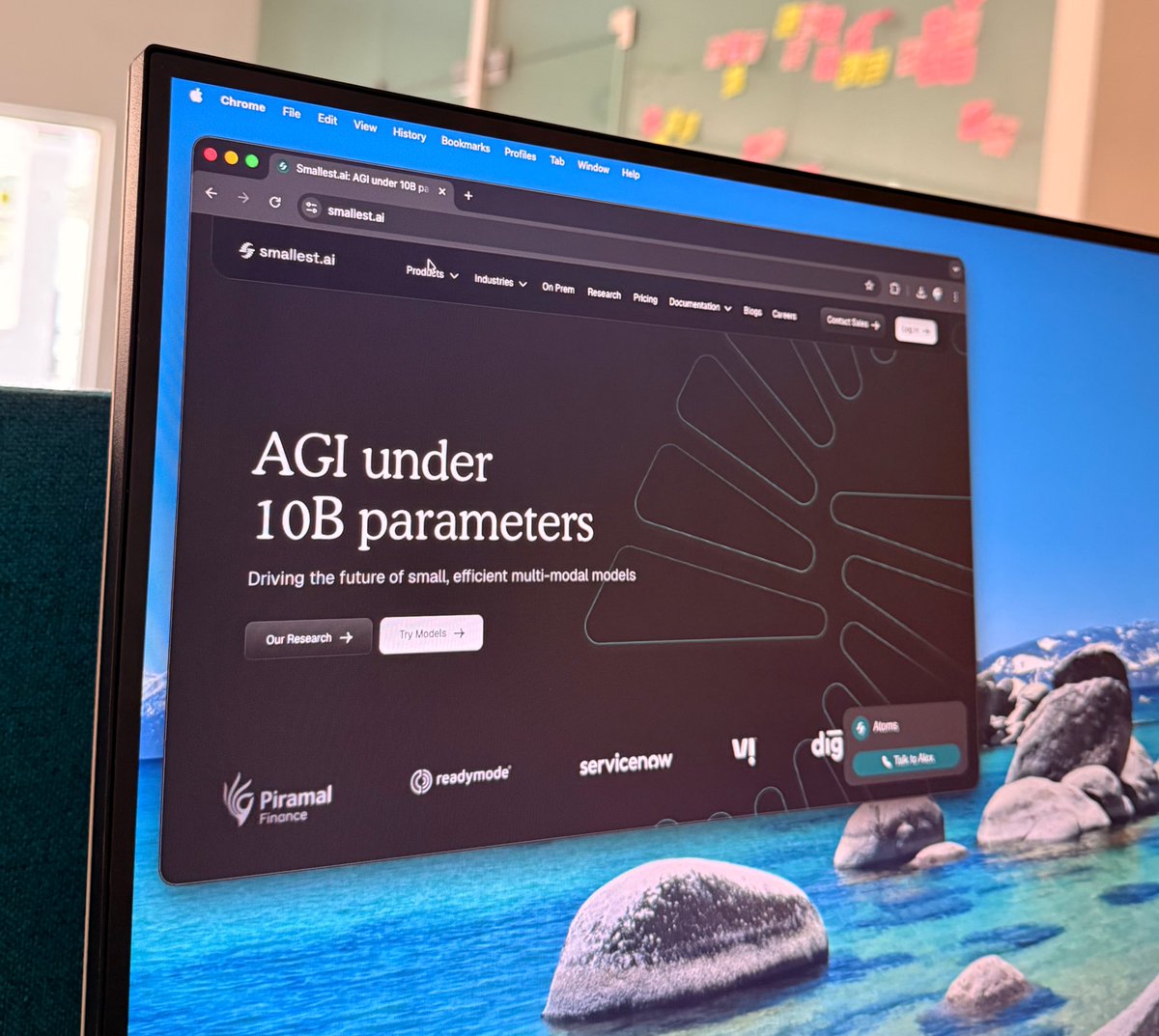

@kamath_sutra took the stage at the Voice AI panel, and we launched Hydra – our Async Thinking Multimodal LLM – live in front of the room.

This is the statement we opened with: “We are not close to passing the Turing test in voice. Not even for a single speaker, in a single language, in a single use case. And that's exactly the problem we're here to solve.”

The gap between AI voice agents and human conversation isn't subtle. Today's agents listen, then think, then respond. Humans do something fundamentally different – they think while listening, act while listening, and respond with contextual emotion. That's not a feature gap. That's an architectural gap. And offline LLMs can't be retrofitted to close it.

That's the conviction behind everything we build at smallest.ai. Small, real-time models – built from the ground up for async inference, partial context, and sub-500ms multimodal response – are the path to human-level voice intelligence. Not bigger models. Faster ones.

Hydra is our step in that direction: an async thinking Speech-to-Speech model that listens and reasons in parallel, with ~50ms latency. Paired with our Lightning TTS, Lightning ASR, and Electron SLM (which outperforms GPT-4.1 on real-time conversational tasks) – the full stack is finally coming together.

A massive thank you to Joshua and @lynn_aisv for building @Aiplus__ into the kind of event where everyone can have meaningful conversations, and learn from those around them.

And to @Sky9Capital and @Topify_AI for co-organizing the afterparty with us – 300+ signups speaks for itself. That kind of momentum doesn't happen without people who care about the ecosystem as much as the technology.

We're just getting started.

The question we left the room with: Attention is all you need – but attention on what?
