Jonathan Staples

34 posts

@staples46198

AI & Business Transformation | Turning hype into workforce wins. Thrivers master AI. Survivors adapt.

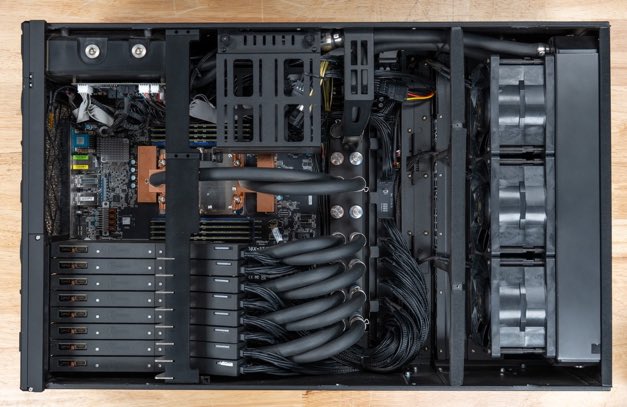

Do you understand how cool this is? On my desk is a DGX Spark running a Hermes agent powered by Qwen 3.6. It runs 24/7/365 doing tasks for me. Doesn't matter if the internet goes out. I have super intelligence running for me at all times. Next step: I want to get a Tesla solar roof so I'm dependent on NOBODY to run my intelligence. Even if they cut off my power, I'll keep going. This is the future. Sovereign intelligence.

Today we're releasing Personal Computer. Personal Computer integrates with the Perplexity Mac App for secure orchestration across your local files, native apps, and browser. We’re rolling this out to all Perplexity Max subscribers and everyone on the waitlist starting today.