stas kaufman

41 posts

@stask_85

Building Kavim: a visual canvas for AI chats & ideas. Open-source • BYOK • Infinite canvas. Sharing the journey in public.

Workaccount2 on Hacker News just coined the term "context rot" to describe how the quality of an LLM conversation drops as the context fills up with accumulated distractions and dead ends (#44310054): news.ycombinator.com/item?id=443087…

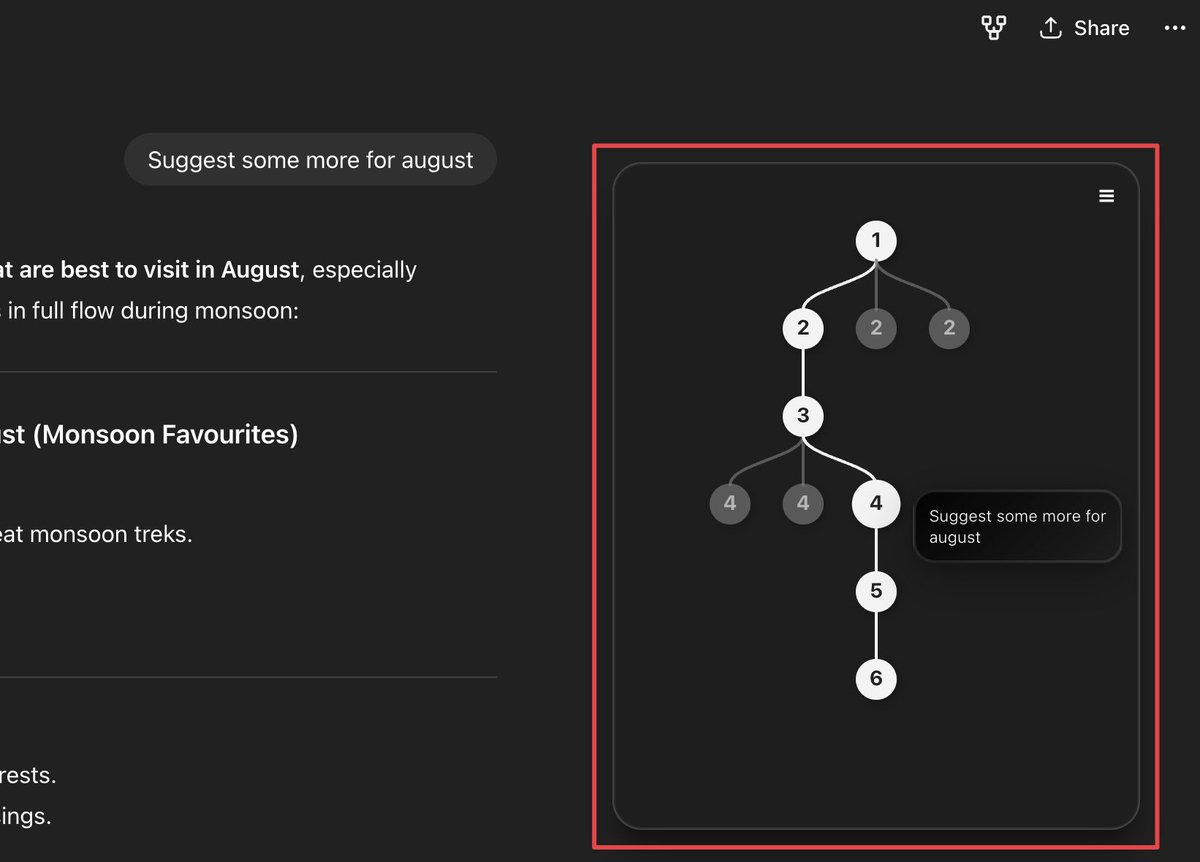

You can now interrupt long-running queries and add new context without restarting or losing progress. This is especially useful for refining deep research or GPT-5 Pro queries, since the model will adjust its response to your new requirements. Just hit Update in the sidebar and type in any additional details or clarifications.

Codex lied about an API, lied about reading the documentation, lied that the documentation shows it's right, then lied that it was actually an example linked in MSDN, and then dismissed the whole thing with "yeah I misrepresented it, but it's just noise". I would take "you are absolutely right" instead.

BrowserOS (@browserOS_ai) is an open-source, privacy-first alternative to ChatGPT Atlas & Perplexity Comet. No vendor lock-in: use any LLM or search engine. AI agents run locally. No tracking for ads or data collection. Available for Mac/Win/Linux. browseros.com Congrats on the launch, @nv_sonti, @ThatNithin!