Favour Ajaiyeoba

24.7K posts

@stellaforge24

Galxe Yapper | Exploring, earning & sharing crypto adventures | Starboard enthusiast 🚀

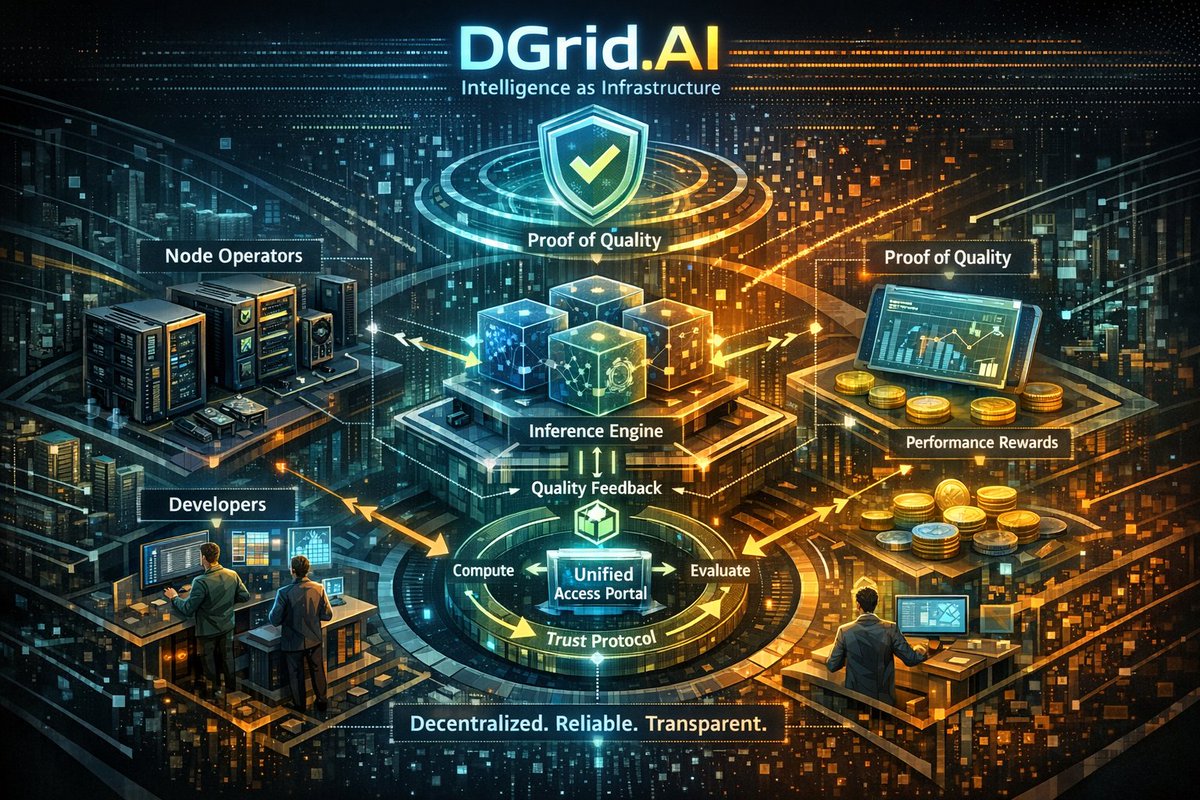

been using @dagama_world, @dango, @RumiLabs_io, @inference_labs, and @dgrid_ai for weeks

everyone says "decentralized is the future" but I found patterns that make me question everything

hear me out 🧵

CENTRALIZATION CREEPING IN:
tested all 5 products, loved the results
then started looking under the hood
what I found surprised me

@dagama_world VERIFICATION:
claim: decentralized location verification
reality: checked how they verify merchants
process: someone manually reviews applications, approves businesses, updates database
asked in Discord: "is verification on-chain or centralized team?"
answer: "hybrid approach, team verifies initially"
so... not fully decentralized then?
360K wallets connected, but verification = trust the team
works great, just not what I expected from "web3"

@dango TESTNET REALITY:
claim: decentralized payment network
testnet experience: sent 847 micro-payments, worked flawlessly
then noticed: testnet runs on centralized servers (makes sense for testing)
but mainnet architecture details? unclear
whitepaper mentions "decentralized validators"
but how many? who runs them? what's minimum to launch?
love the product, just want clarity on actual decentralization

@RumiLabs_io COMPUTE NODES:
claim: decentralized GPU marketplace
my experience: rented compute, saved $41, super happy
then asked: "how many independent node operators?"
couldn't find clear answer
are these actually distributed nodes or just their data centers?
marketplace feels centralized currently
product works, decentralization level = uncertain

@inference_labs MODEL ROUTING:
claim: decentralized AI inference
tested: 300 requests, 61% cost savings, loved it
then realized: they're routing to centralized APIs (OpenAI, Anthropic, etc)
the routing layer is theirs, the models = centralized anyway
so is this "decentralizing" AI or just optimizing centralized AI access?
semantics maybe, but matters for thesis

@dgrid_ai FUTURE PROMISES:
claim: decentralized GPU network
status: not launched yet
whitepaper mentions "Proof of Quality" mechanism
sounds great but how does it actually work?
who validates? what prevents centralization over time?
can't judge what doesn't exist yet, but questions need answers before launch

THE PATTERN:
all 5 use "decentralized" in marketing
all 5 have centralized components currently
dagama: centralized verification team
dango: testnet on central servers (mainnet TBD)
RumiLabs: unclear node distribution
Inference: routes to centralized APIs
DGrid: not launched, can't verify

WHY THIS MATTERS:
using products as user? all work great
evaluating as "decentralized infrastructure"? less clear
not saying they're lying, saying decentralization is spectrum not binary

THE UNCOMFORTABLE QUESTIONS:
dagama: if verification team disappears, does system work?
dango: what's minimum validator count for "decentralized"?
RumiLabs: can I verify compute node distribution independently?
Inference: is routing layer decentralized if models aren't?
DGrid: will launch with sufficient decentralization or centralized initially?

WHAT I'M DOING:
still using all the products (they solve my problems)
but adjusting expectations on decentralization timeline
treating "decentralized infrastructure" claims with healthy skepticism
verifying architecture not just marketing

THE HONEST ASSESSMENT:
dagama: 40% decentralized currently, 80% roadmap
dango: 30% decentralized (testnet), 70% promised (mainnet)
RumiLabs: 50% decentralized (unclear transparency)
Inference: 60% decentralized (routing yes, models no)
DGrid: 0% decentralized (not launched), 80% promised
all moving right direction, none fully there yet

FOR USERS:
if you care about: product working, saving money, solving problems → use them
if you care about: pure decentralization, trustless systems → wait and verify
both valid priorities

am I wrong about decentralization assessment?
challenge my analysis 👇

#Decentralization #BuildInPublic #Web3Reality

@dagama_world 𝐒𝐄𝐑𝐈𝐄𝐒 🚥

EPISODE 106: Why Platforms Secretly Benefit From Broken Trust

Broken trust isn’t a bug. It’s a business model.

A Thread 🧵👇

1️⃣ Distrust Drives Engagement
When users don’t fully believe:
🚦 they scroll more
🚦 they compare endlessly
🚦 they second-guess decisions
Uncertainty keeps people inside the platform.

2️⃣ Moderation Thrives on Ambiguity
If trust were absolute:
🚦 fewer disputes
🚦 fewer appeals
🚦 less “content management”
Ambiguity justifies control layers.

3️⃣ Ads Prefer Confusion
Advertising platforms don’t sell truth. They sell attention.
🚦 conflicting reviews
🚦 mixed signals
🚦 endless debate
Clarity shortens sessions. Confusion extends them.

4️⃣ Centralized Trust Creates Dependence
When platforms act as referees:
🚦 users rely on verdicts
🚦 businesses lobby decisions
🚦 power concentrates
Trust becomes permissioned, not earned.

5️⃣ Verification Breaks the Loop
Once truth is provable:
🚦 no need to guess
🚦 no need to debate
🚦 no authority to appeal to
The platform becomes infrastructure, not judge.

6️⃣ Why This Is Threatening
Verified systems remove:
🚦 ad leverage
🚦 moderation power
🚦 narrative control
That’s why adoption is slow, not because it’s hard.

7️⃣ dagama’s Quiet Rebellion
@dagama_world doesn’t optimize engagement. It optimizes certainty.
And certainty doesn’t shout, it settles.

Platforms monetize doubt. @dagama_world eliminates it.
That’s the real disruption.

“99.99% uptime” is a centralized promise, and a fragile one. Real resilience in decentralized AI comes from geographic and operator diversity: many independent nodes where failures stay local and the network reroutes globally. @dgrid_ai is the one that has it all.

Inference Labs is emerging as a critical trust anchor in decentralized finance by enabling provable AI-driven settlement arbitration, a sophisticated DeFi topic where transparency has been remarkably hard to achieve. In advanced DeFi markets, especially in automated swap routing, cross-protocol settlement, and dispute handling, AI models increasingly make judgment calls that determine how and when value moves on chain. These AI decisions might influence which liquidity pools are used to fill a large trade, how partial liquidations get executed, or how closely a synthetic asset’s price should follow its underlying. Until recently, these outputs were opaque: consumers and smart contracts had to assume that the AI recommendations were correct, which introduced silent systemic risk into processes that ultimately move capital.

Inference Labs addresses this gap by turning each critical AI inference into a cryptographically verifiable signal, allowing DeFi systems to verify before they settle instead of trusting without evidence. This lets AI-assisted settlement logic be held to the same standards of transparency and auditability as the smart contracts that execute value.

The cornerstone of this innovation is the Proof of Inference protocol, which attaches zero-knowledge proofs to AI outputs so that anyone, whether a DAO treasury, an automated market maker, or a smart contract, can independently check that a given result came from the claimed model and input data without revealing sensitive model internals or private data. Zero-knowledge proofs allow the verification of computation correctness without exposing proprietary logic, preserving both privacy and ecosystem trust. In DeFi settlement arbitration, this means that if two protocols disagree about which pricing signal should be used at the moment of settlement, they can use Proof of Inference to cryptographically confirm whether the AI’s recommended price was honestly produced, and base settlement decisions on verifiable facts instead of unverifiable assertions.

To make this practical and scalable, Inference Labs has built Subnet 2 on the Bittensor network, a decentralized marketplace and universal verification layer where AI inference tasks are executed and linked with proofs that validators independently check before results are delivered. This verifiable layer changes how DeFi handles settlement and dispute logic because it eliminates a longstanding trust assumption: that AI signals, once sourced from a model, should be taken on faith. With Proof of Inference, settlement layers, whether in complex swaps, liquidations, or synthetic payoff adjustments, can be backed by provable computation rather than opaque prediction. This reduces systemic risk and opens DeFi’s automated logic to auditability, accountability, and economic fairness, enabling more confident interaction between autonomous systems and human governance.

Strategic partnerships, such as the integration of Lagrange’s DeepProve zkML library, further extend these capabilities by enhancing AI verification standards across decentralized platforms, making provable AI verification not just a niche capability but a generalized primitive for DeFi’s future.

In essence, Inference Labs is not simply adding another oracle service to DeFi. It is turning AI into a provable financial primitive, allowing decentralized protocols to settle capital and resolve disputes based on cryptographic proof of AI behavior rather than assumption: a subtle but foundational upgrade in how decentralized finance ensures fairness, transparency, and accountability at scale.

AI doesn’t fail because of lack of innovation. It fails when access and trust break down. @dgrid_ai focuses on fixing that gap. Instead of siloed platforms and unverifiable outputs, it creates a neutral layer where AI models can be accessed, priced fairly, and verified during execution.

Gamification often feels gimmicky in many apps: badges that mean nothing, points that don’t translate to real value. @dagama_world uses gamification differently. Leaderboards reward consistency and quality contributions.

Most platforms try to detect fake reviews after the damage is done. What daGama does differently is remove the incentive to fake them in the first place. Reviews are tied to real presence, making it harder to lie and easier to trust what you see.

Verification is not treated as a checkbox here. daGama connects reviews to physical visits, consistent behavior, and community validation. This means opinions come from people who actually showed up, not accounts created to push an agenda.

Community voting adds another layer of protection. @dagama_world allows real users to decide what deserves visibility. Quality rises because people stake reputation, not because someone paid for reach.

Businesses benefit without gaming the system. daGama gives them tools to respond, improve, and engage without paying to bury criticism. Honest feedback becomes an asset instead of a threat.

The bigger shift is cultural. daGama turns reviews from disposable content into accountable signals. When truth has weight and effort has value, trust slowly returns to local discovery.