Shaowei Liu

79 posts

@stevenpg8

CS PhD @IllinoisCDS | MSCS @ucsd_cse | BSEE @Tsinghua_uni

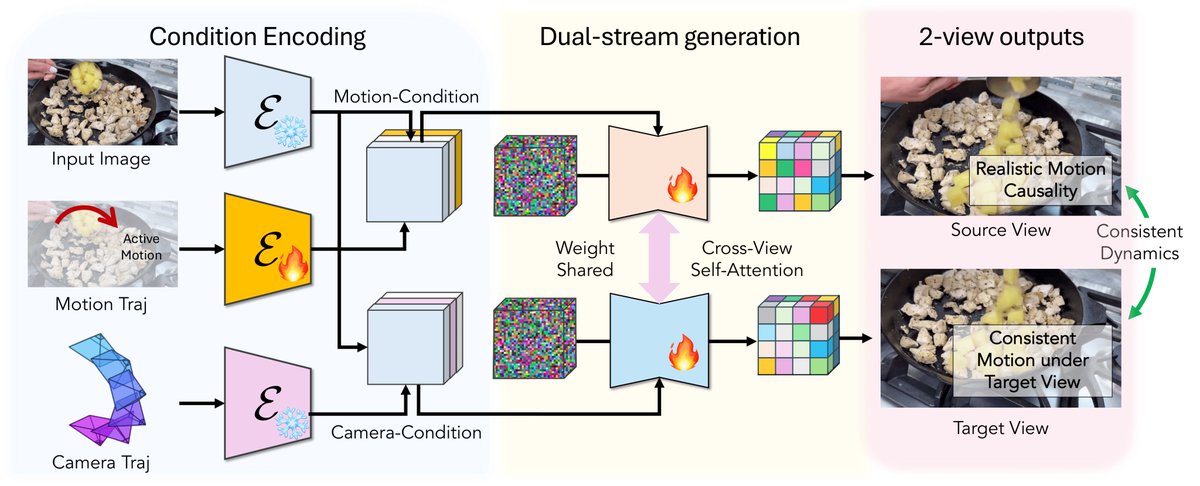

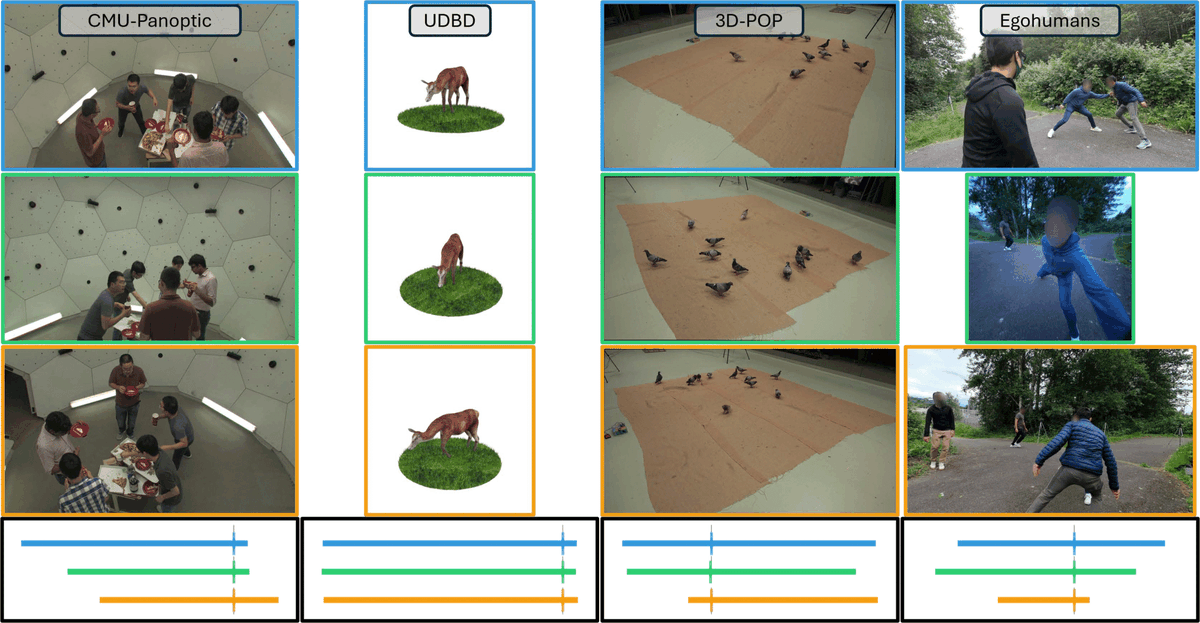

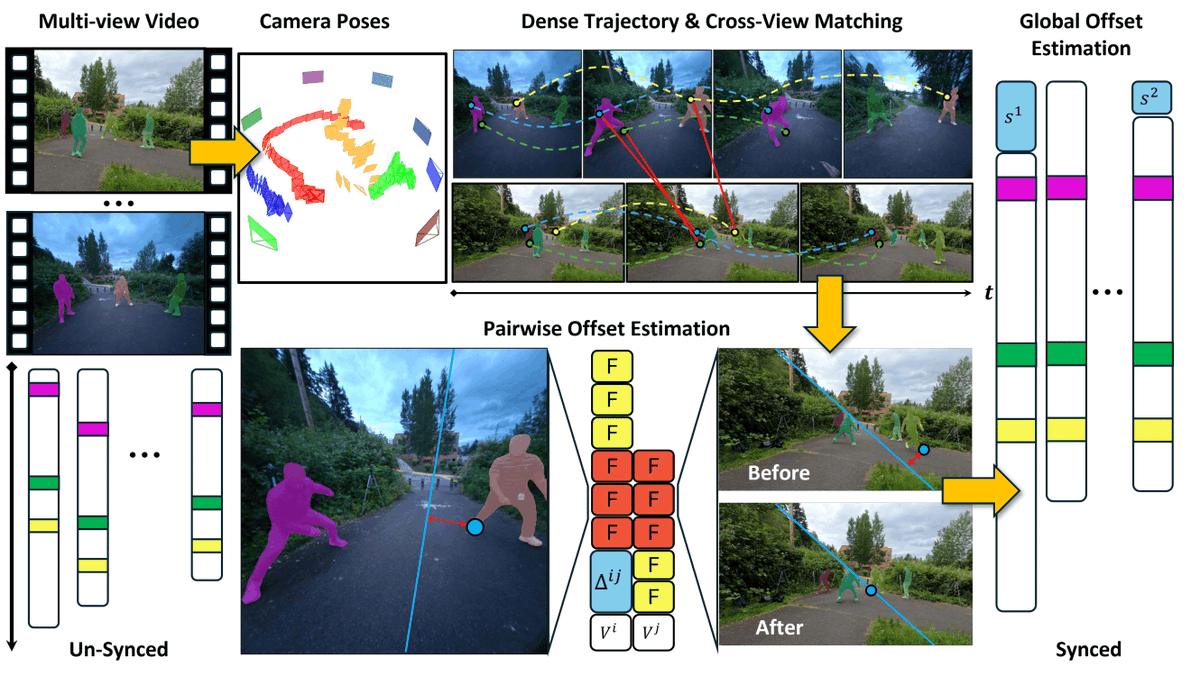

📢 MoRight: Motion Control Done Right

"What if your video model actually understood cause and effect?"

Existing motion-controlled video models entangle camera and object motion, and treat everything as kinematic displacement. MoRight changes both.

🔥 Motion Causality: MoRight decomposes motion into actions & consequences. Give an action → MoRight predicts consequences (aka motion simulation). Give a desired outcome → MoRight recovers the driving action (aka motion planning). Not merely displacing pixels.

🎬 Disentangled Control: MoRight separates camera and object motion, allowing users to independently control each of them. No entanglement.

Project Page: research.nvidia.com/labs/sil/proje…
Paper: arxiv.org/abs/2604.07348