Yoshi Suhara

@suhara

Building Small Language Models @nvidia

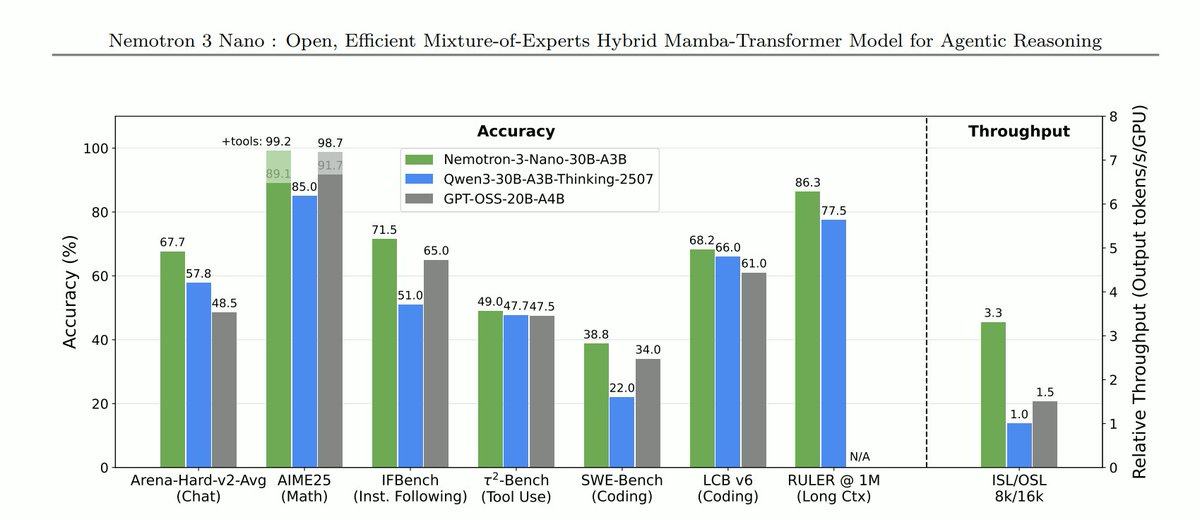

We just launched an ultra-efficient NVFP4 precision version of Nemotron 3 Nano that delivers up to 4x higher throughput on Blackwell B200. Using our new Quantization Aware Distillation method, the NVFP4 version achieves up to 99.4% of BF16 accuracy. Nemotron 3 Nano NVFP4: nvda.ws/4t63z9y Tech Report: nvda.ws/4bj3pp0

🚀 New dataset release: Nemotron-Personas-Japan. We have released the first open Japanese synthetic dataset built to reflect Japan's demographics and culture. Commercial use is also permitted. nvda.ws/47Y6A3O

Thrilled to share my first project at NVIDIA! ✨ Today’s language models are pre-trained on vast and chaotic Internet texts, but these texts are unstructured and poorly understood. We propose CLIMB (Clustering-based Iterative Data Mixture Bootstrapping), a fully automated framework that reorganizes pre-training data into clusters and iteratively searches for the best mixture.
CLIMB does three things:
➤ Embeds and clusters web-scale data semantically.
➤ Searches, iteratively and efficiently, for optimal data mixtures using a lightweight proxy model + predictor loop.
➤ Learns how different domains interact, and how the right mix can unlock downstream performance we didn’t know was possible.
On paper, the gains are real:
➤ Our 1B model, trained on CLIMB mixtures with 400B tokens, outperforms LLaMA 3.2-1B.
➤ In some specific domains, e.g., Social Sciences, we see improvements of up to 5%.
➤ We open-sourced ClimbLab (1.2T tokens across 20 domains) and ClimbMix (400B tokens, outperforming existing baselines under the same budget).
The real win isn’t just the numbers; it’s the idea that we can bootstrap the search 🔎, which improves data efficiency a lot. We hope CLIMB can be a small step toward more transparent, structured, and efficient pretraining, one where we curate not by filtering noise but by discovering signal. We’d love to hear from others exploring the frontiers of data-centric AI. Let’s CLIMB together!
🔗 Read our paper: arxiv.org/abs/2504.13161
📂 Datasets available on Hugging Face: huggingface.co/collections/nv…
🌐 Project page: research.nvidia.com/labs/lpr/climb (check cluster visualizations)
🗨️ Discussion: huggingface.co/papers/2504.13…
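To make the "proxy model + predictor loop" in the CLIMB post concrete, here is a minimal toy sketch of an iterative mixture search. It is not the authors' implementation. Every name in it (`sample_mixture`, `proxy_score`, `predict`, `search_mixture`) is hypothetical, the proxy "training run" is replaced by a synthetic objective with a hidden best mixture, and the predictor is a plain k-nearest-neighbor average chosen only for brevity. The embedding and clustering step is assumed to have already produced `k` clusters.

```python
import random


def sample_mixture(k, rng, center=None, concentration=50.0):
    """Sample cluster weights on the simplex (Dirichlet via gamma draws).

    If `center` is given, samples concentrate around that mixture.
    """
    if center is None:
        alphas = [1.0] * k
    else:
        alphas = [max(c * concentration, 0.05) for c in center]
    draws = [rng.gammavariate(a, 1.0) for a in alphas]
    total = sum(draws)
    return [d / total for d in draws]


def proxy_score(weights, target):
    """ASSUMPTION: stand-in for 'train a small proxy model on this mixture
    and evaluate it'. Here: negative squared distance to a hidden optimum."""
    return -sum((w - t) ** 2 for w, t in zip(weights, target))


def predict(weights, history, n_neighbors=3):
    """ASSUMPTION: toy k-NN predictor; averages the scores of the nearest
    mixtures that were already evaluated with the proxy."""
    def dist(h):
        return sum((a - b) ** 2 for a, b in zip(weights, h[0]))
    nearest = sorted(history, key=dist)[:n_neighbors]
    return sum(score for _, score in nearest) / len(nearest)


def search_mixture(k=5, iterations=4, candidates=200, evals_per_iter=8, seed=0):
    rng = random.Random(seed)
    target = sample_mixture(k, rng)  # hidden optimum of the toy objective

    # Bootstrap: evaluate a few random mixtures with the (expensive) proxy.
    history = []
    for _ in range(evals_per_iter):
        w = sample_mixture(k, rng)
        history.append((w, proxy_score(w, target)))

    for _ in range(iterations):
        best = max(history, key=lambda h: h[1])[0]
        # Propose many cheap candidates near the current best mixture...
        pool = [sample_mixture(k, rng, center=best) for _ in range(candidates)]
        # ...rank them with the predictor, and only run the proxy on the top few.
        pool.sort(key=lambda w: predict(w, history), reverse=True)
        for w in pool[:evals_per_iter]:
            history.append((w, proxy_score(w, target)))

    best_w, best_s = max(history, key=lambda h: h[1])
    return best_w, best_s, target


if __name__ == "__main__":
    weights, score, _ = search_mixture()
    print([round(w, 3) for w in weights], round(score, 5))
```

The point of the loop is that the predictor screens many candidate mixtures cheaply, so the costly proxy evaluation is spent only on the most promising ones in each round.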

🚨New Paper Alert As a game company, @Krafton_AI is actively exploring how to apply LLM agents to video games. We present Orak—a foundational video gaming benchmark for LLM agents! Includes Pokémon, StarCraft II, Slay the Spire, Darkest Dungeon, Ace Attorney, and more in🧵

🤝 Meet NVIDIA Llama Nemotron Nano 4B, an open reasoning model that provides leading accuracy and compute efficiency across scientific tasks, coding, complex math, function calling, and instruction following for edge agents. ✨ Achieves higher accuracy and 50% higher throughput than other leading open models with 8 billion parameters or fewer 📗 Supports hybrid reasoning, optimized for low-cost inference 👨‍💻 Deploy at the edge with NVIDIA Jetson and NVIDIA RTX GPUs, maximizing security, flexibility, and cost Now on @huggingface 📥 huggingface.co/nvidia/Llama-3…