Jian Zhang

@JianZhangCS

Director & Distinguished Scientist @Nvidia Nemotron Post-training | Co-founder, CTO at @NexusflowX | Ex-Director of ML at @SambaNovaAI | PhD in ML at @Stanford

Different voices. Same answer: open models. NVIDIA Founder and CEO Jensen Huang sat down with the leaders from @mistralai, @bfl_ml, @cursor_ai, @LangChain, @perplexity_ai, @reflection_ai, @thinkymachines, @allen_ai, @evidenceopen, and AMP PBC to discuss the rapid rise of open frontier models. Get the top takeaways from the frontier of AI: nvda.ws/4uR6eEV

NVIDIA GTC 2026 takes place March 16–19 in San Jose, CA, in the heart of Silicon Valley. It’s my favorite AI event of the year, bringing together cutting-edge research and real-world business implementation (THE HARDWARE DEMOS on the show floor are a must-see!!!). I’ll be speaking about Nemotron post-training on March 17, and there will be many more exciting talks, sessions, and demos throughout the week. Virtual attendance is free, and you can get 25% off an in-person conference pass using my employee code: nvidia.com/gtc/?ncid=GTC-…

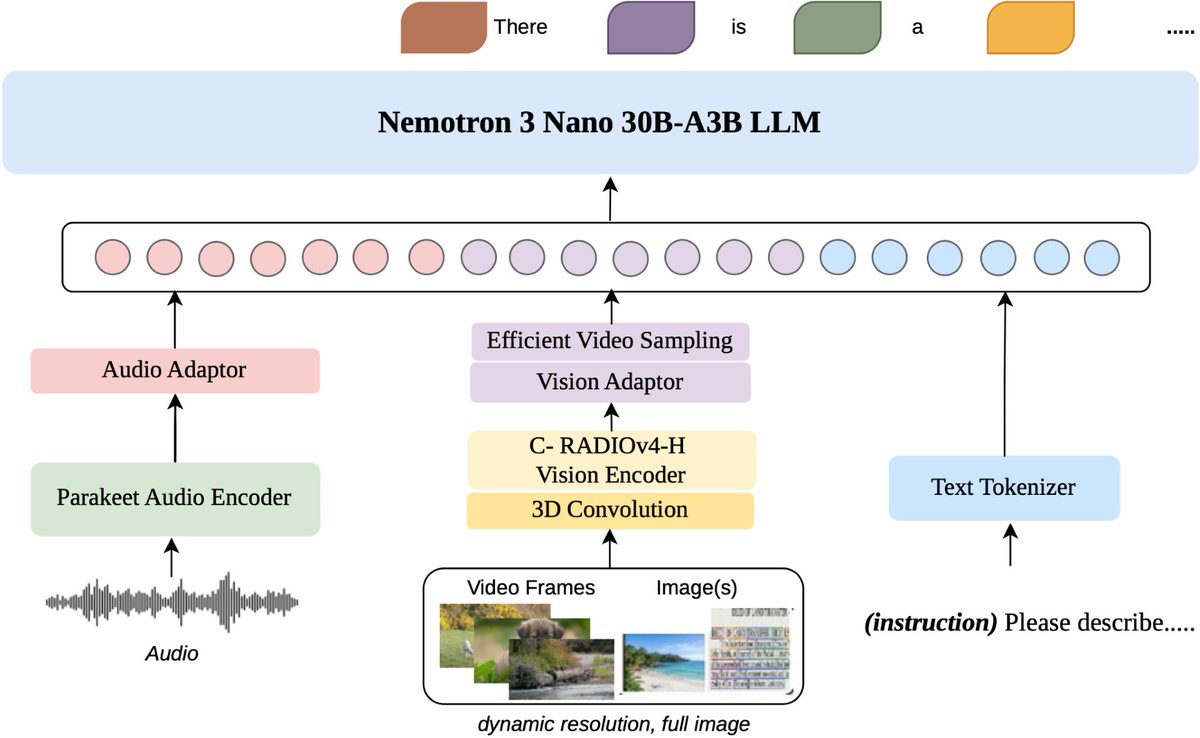

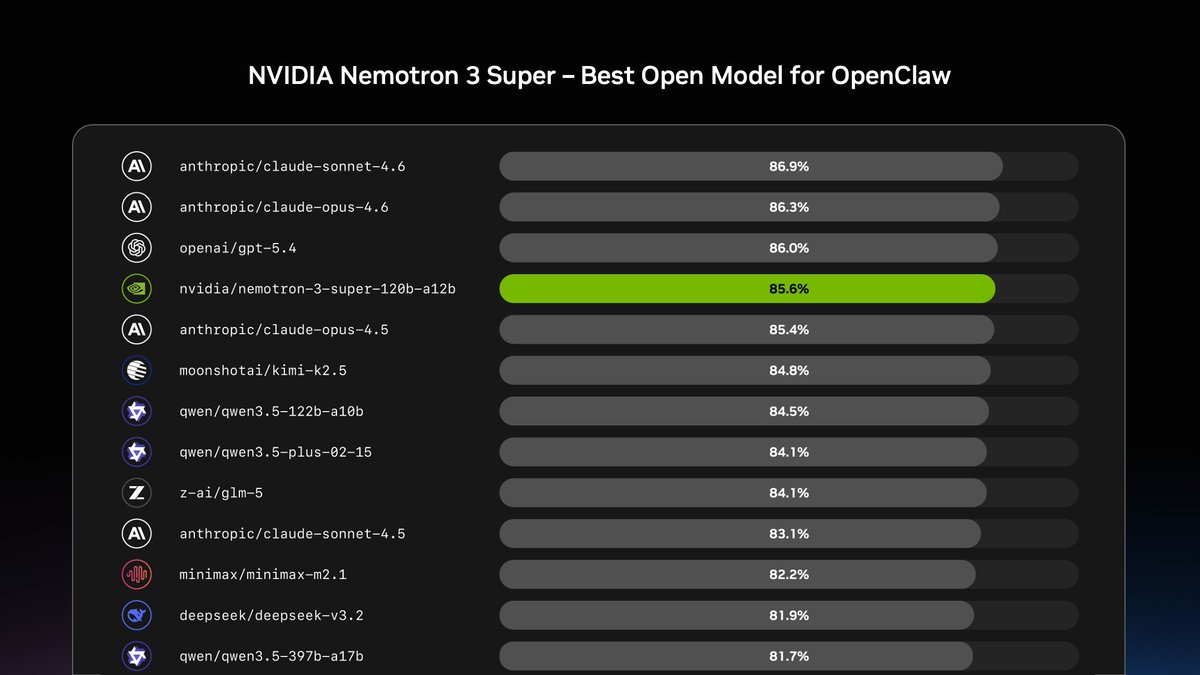

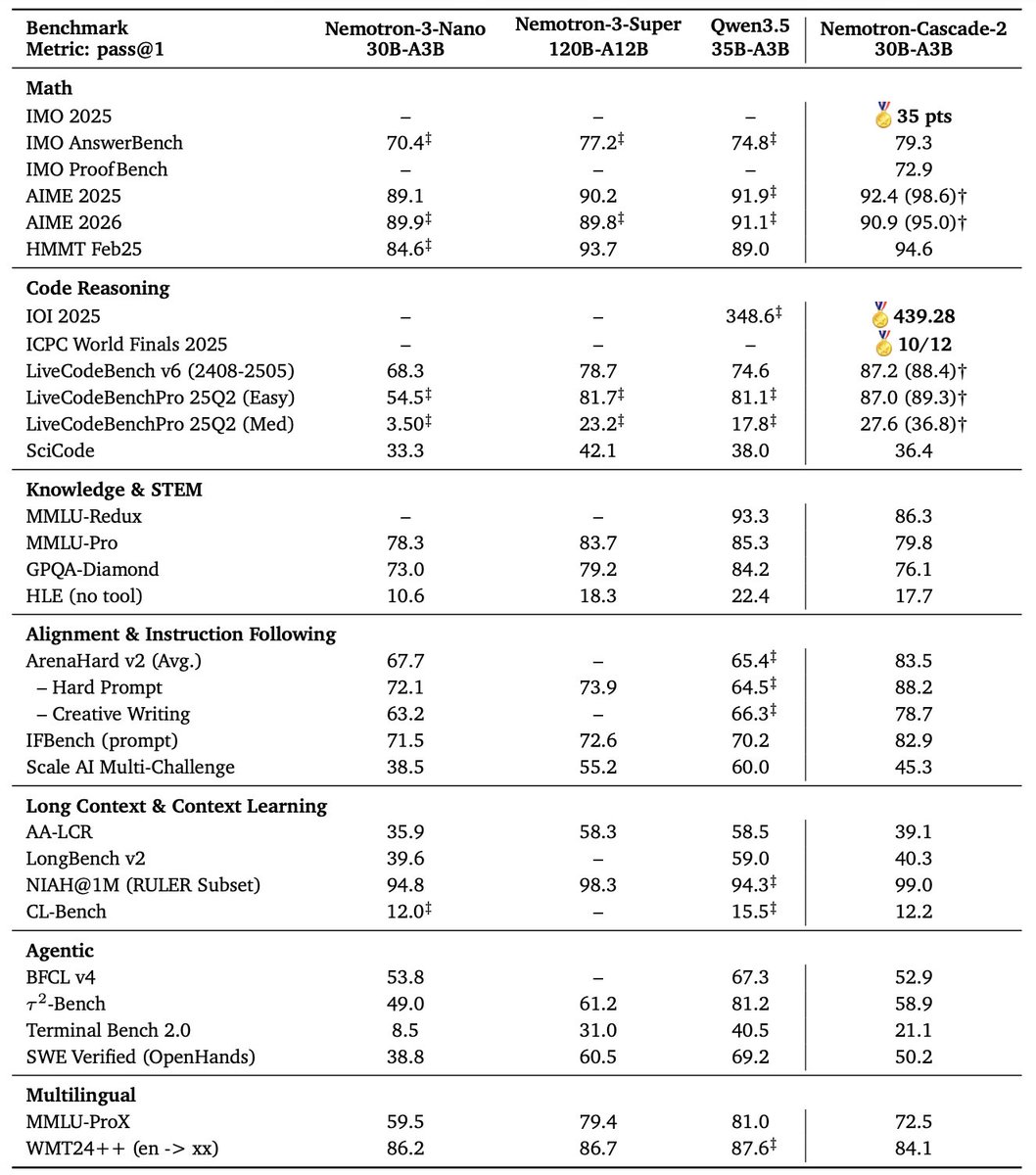

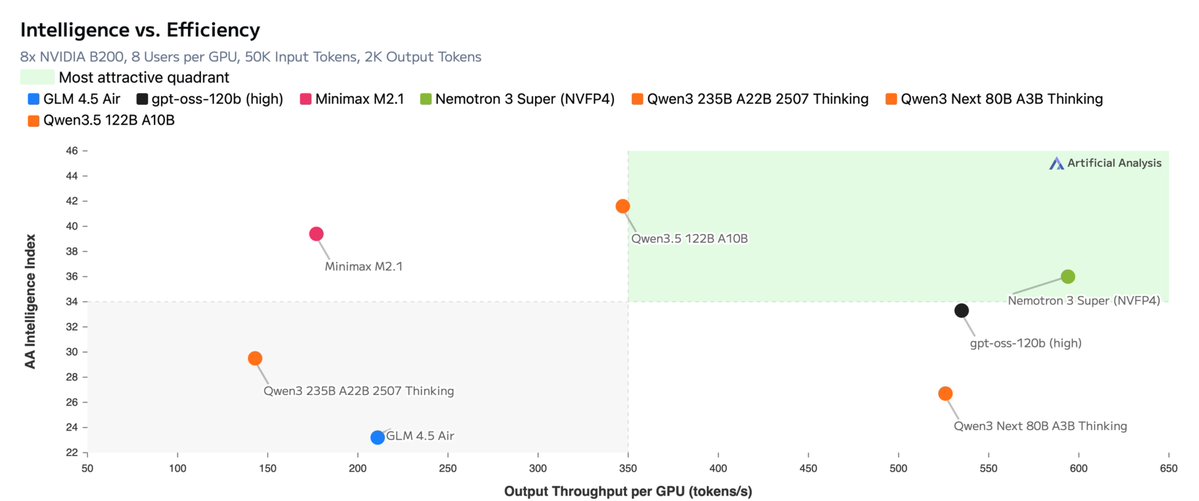

🚨 Text Leaderboard Update: @NvidiaAI has begun rolling out the open Nemotron 3 model family, starting with Nemotron 3 Nano (30B-A3B): a new 30B hybrid reasoning model, with a 1M context window. It currently ranks #120 on the Text leaderboard with a score of 1328, and #47 among open models. Among open models, Nemotron 3 performs best in Math and Coding categories, with strong results across IT, Science, Business, and Mathematics on the Occupational leaderboard. Read more about Nemotron 3 Nano’s performance with real-world use in the thread 🧵

This is not just another strong open model. Nemotron actually releases training data (!), RL environments, and training code. This is a big difference: almost all model developers just want people to use their models; NVIDIA is enabling people to make their own models. We are excited to incorporate these assets into the next Marin models! Congrats to the @nvidia team!