AC.Huang



@sz1117

No longer comfortable. Some things don't happen now and won't do them in the future.

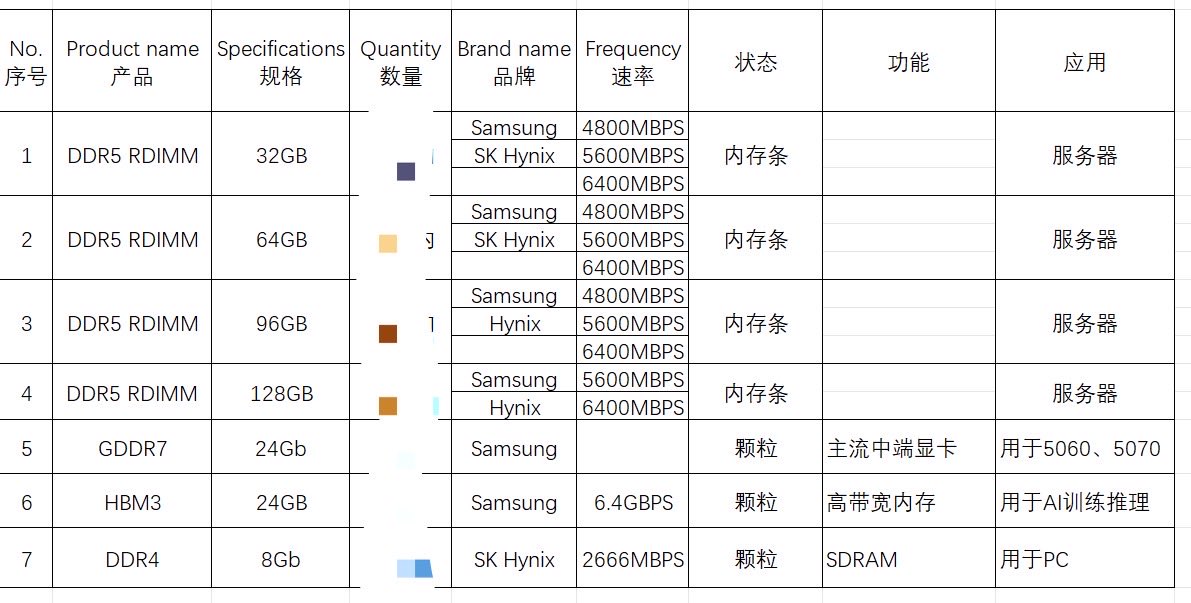

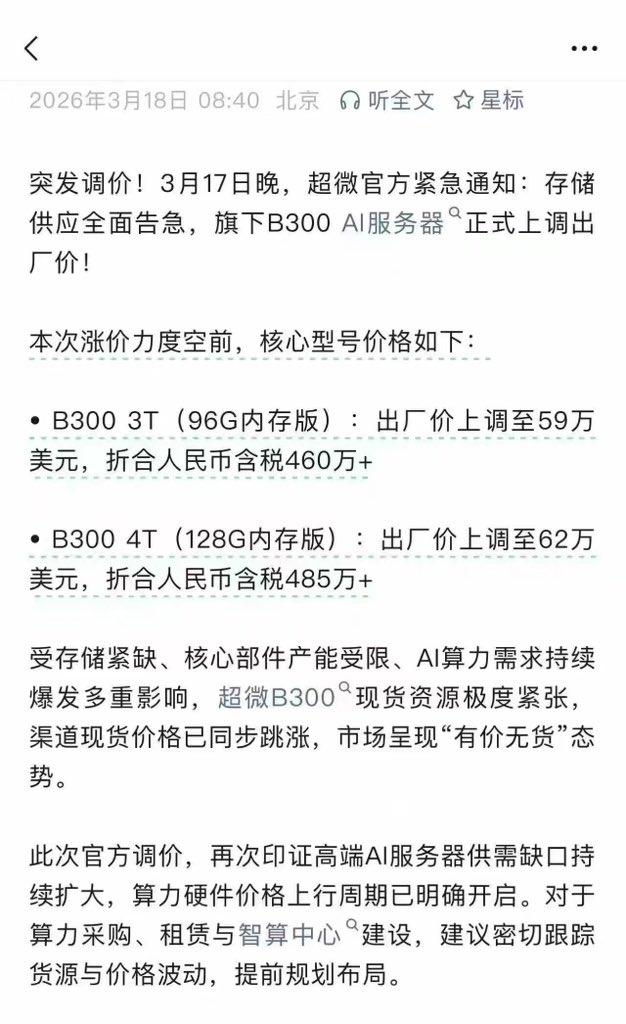

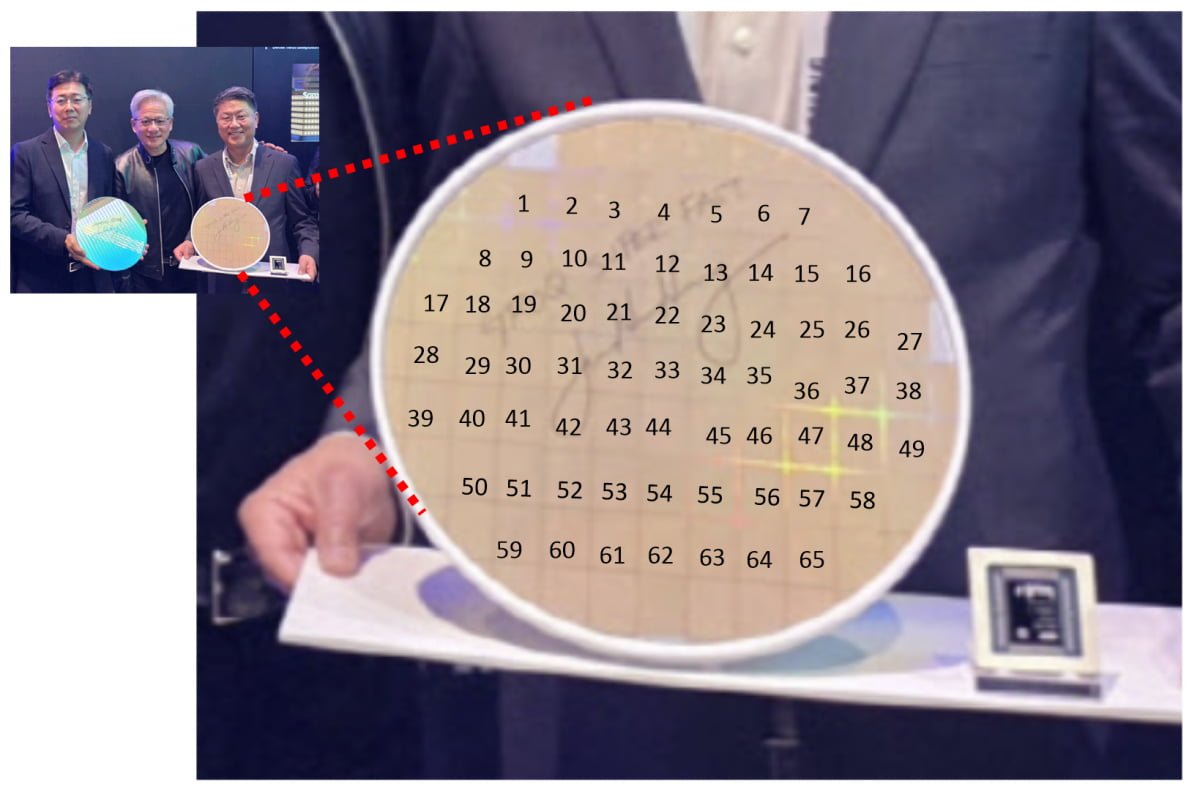

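Lobsters eat tokens, and tokens eat chips. At the end of this food chain sits a bill of more than sixty billion US dollars.

In February, daily token consumption for MiniMax's M2.5 model surged 6x, and consumption on its coding plan jumped 10x. Zhipu's Coding Plan goes on sale at 10 a.m. every day. The price went up 30%, and it still sells out the moment it goes live. Weekly call volume for Chinese large models has overtaken the US for the first time: 5.16 trillion tokens versus 2.7 trillion.

Jensen Huang says an agent consumes 1,000 times as many tokens as a traditional chat. People sleep. Lobsters don't.

But everyone is talking about lobsters, and nobody is asking a more fundamental question: how many AI chips does this exponentially growing token demand require?

Morgan Stanley published a 60-page deep dive last week that tries to answer it.

China's AI chip TAM grows from US$19.1 billion in 2024 to US$67 billion in 2030. GPU self-sufficiency climbs from 10% in 2021 to 76% in 2030. Huawei alone takes 63% of the domestic share, Cambricon takes 11%, and the remaining dozen or so vendors split less than 30%. And through 2026 and 2027, the whole market stays supply-driven. It is not that nobody is buying; the chips cannot be made fast enough.

The report's most interesting call: China's AI chip story is not "chasing process nodes", it is "trading infrastructure for nanometers".

MS drew a nine-dimension radar chart comparing US and Chinese AI. In front-end wafer fabrication and HBM memory, the US is clearly ahead. But in power supply, data center space, and policy support, China has the edge. Looking only at the process node overstates the performance gap; measured per unit of power and per unit of cost, the gap narrows sharply. Chinese players accept lower margins, electricity is cheaper, and in inference workloads TCO actually favors China.

This explains why MiniMax's output price can be one tenth of Claude's. It is not only model-level engineering optimization; the entire infrastructure cost curve is holding it up.

Valuations are even wilder. Cambricon trades at 32x P/S and Moore Threads at 139x P/S, against implied revenue of only US$300 to 500 million. Nvidia is at just 17x. The market is pricing scarcity, not earnings power.

MS's key call: after 2027, capacity release combined with design convergence sharply raises the risk of commoditization, and industry consolidation will most likely happen within two to three years.

Put the two together and the picture is clear. The lobster craze proves that inference-side token demand can grow exponentially. The MS report argues that supply-side capacity remains a hard constraint until 2027. When demand outstrips supply, having capacity means having revenue. But the window will close. Once capacity is no longer scarce, a 139x price-to-sales multiple loses its reason to exist.

Tokens are becoming the electricity of the new era. China is using cheap green power plus extreme engineering to turn itself into the world's utility provider for AI inference. But doesn't this story sound familiar? Policy support, runaway capacity expansion, price wars, industry-wide losses, and in the end the last survivors take it all.

The road solar walked, AI chips will most likely walk again. The only difference: solar took ten years. How many years will AI chips take this time?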
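A quick back-of-the-envelope check on the figures quoted above, as a rough sketch only. The inputs are the numbers cited in this post; the function and variable names are made up for illustration and nothing here comes from the MS report itself.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


# China AI chip TAM: US$19.1B (2024) -> US$67B (2030), as quoted in the post.
tam_cagr = cagr(19.1, 67.0, 2030 - 2024)

# Weekly token calls: China 5.16T vs US 2.7T, as quoted in the post.
token_ratio = 5.16 / 2.7

# Domestic share left for everyone except Huawei (63%) and Cambricon (11%).
rest_share_pct = 100 - 63 - 11

# P/S multiple of Moore Threads (139x) relative to Nvidia (17x).
ps_gap = 139 / 17

print(f"Implied TAM CAGR 2024-2030: {tam_cagr:.1%}")
print(f"China vs US weekly tokens: {token_ratio:.2f}x")
print(f"Share left for other domestic vendors: {rest_share_pct}%")
print(f"Moore Threads P/S vs Nvidia P/S: {ps_gap:.1f}x")
```

On these inputs the TAM compounds at roughly 23% a year, and the other domestic vendors are left with 26% between them, consistent with "less than 30%".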