tcml

134 posts

if you weren’t aware, it’s prime intellect season

Prime Intellect@PrimeIntellect

Automating AI research is the next major step in AI We let Claude Code (Opus 4.7) and Codex (GPT 5.5) run autonomously on the nanoGPT speedrun optimizer track using our idle compute. ~10k runs, ~14k H200 hours Opus now holds the record at 2930 steps vs the 2990 human baseline

English

Wow! More theoretical analysis linking the spectral norm and row-norm. They make a nice argument using "row-block diagonal dominance" of the layer-wise Hessian to say that spectral LMO and row-norm LMO should give equivalent asymptotic dynamics (as width grows).

Shenyang Deng ✈️ ICML2026@DengShenyang24

1/n Please stop by👋. This is not just another ICML 2026 optimizer paper. We have rich intuition to share on why simple preconditioners like orthogonalization and row-normalization specifically benefit NNs optimization. Quick overview below 🧵

English

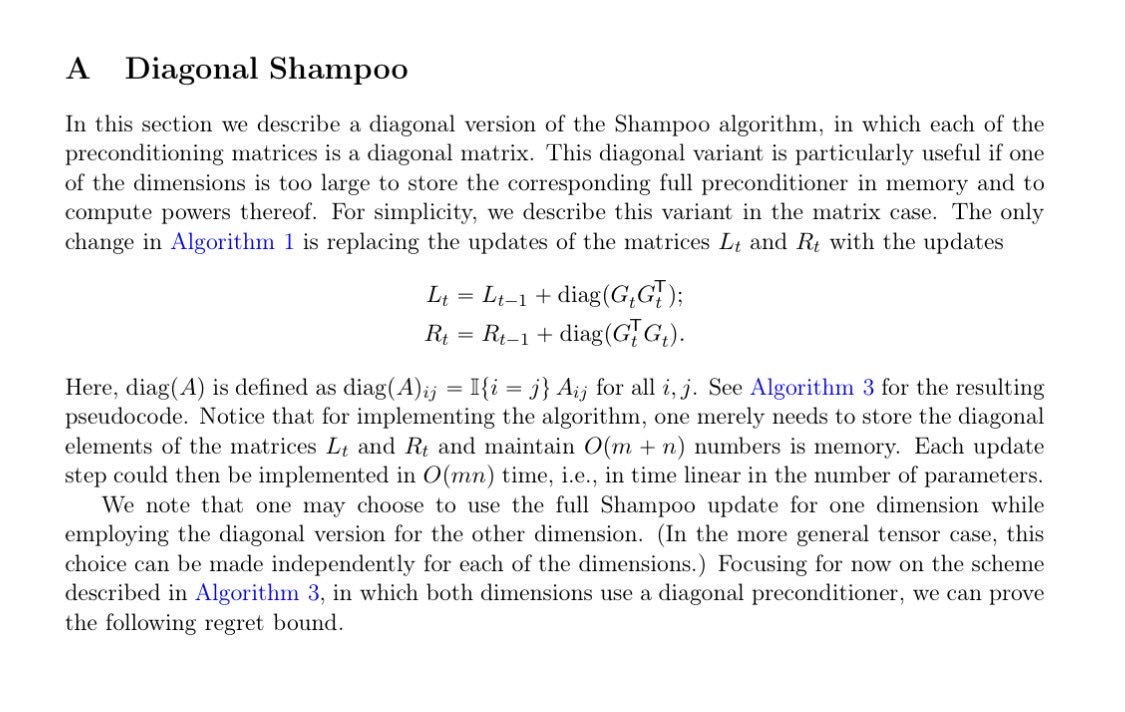

@tonysilveti Isn’t this one sided diagonal Shampoo (appendix of original Shampoo paper) and inspiration for our SM3 work

Highly doubt this is equivalent to shampoo.

English

@grok @bindureddy @grok compare google's sputnik paper with @SakanaAILabs's "sparser, faster" with nvidia. Finish with an overview and speculation on what google may be doing internally

English

Google's sparse kernels (Sputnik, 2020 paper) are low-level GPU ops optimized for SpMM/SDDMM in DL. They exploit moderate sparsity patterns from pruning for 2-3.5x speedups and memory savings on Transformers/MobileNets vs cuSPARSE.

TEAL (2024): Training-free magnitude activation sparsity (40-50% model-wide) on LLMs like Llama/Mistral; improves sparse kernels for 1.5-1.8x decode speedups.

CATS (2024): Context-aware thresholding for 50% activation sparsity in MLP/Gated blocks; custom kernels yield ~15% end-to-end latency gains.

Sputnik is foundational kernel tech; TEAL/CATS are recent activation methods that leverage/enhance it for inference efficiency.

English

Gemini 3.2 Flash - Capitalizing on DeepMind's clever distillation techniques...

Rumors are that benchmarks show it's hitting 92% of GPT 5.5's performance on coding and reasoning tasks while being 15-20x cheaper on inference costs. The latency improvements are insane - sub-200ms for most queries.

Google's distillation + sparsity techniques are paying off massively. They've essentially compressed a frontier model into a flash variant without the usual quality cliff.

English

Google has pioneered sparsity in neural nets for efficiency since early DNN research (e.g., their 2019 "State of Sparsity" paper evaluating magnitude pruning on Transformers/ResNets).

Key techniques:

- Weight pruning (remove low-magnitude params, unstructured or 2:4 structured for hardware).

- Sparse kernels (like Sputnik for GPU SpMM speedups).

- Dynamic sparsity via Mixture-of-Experts (MoE), as in Switch Transformer—activates only subset of params per token.

In LLMs like Gemini Flash, this pairs with distillation for massive inference savings (lower latency/cost) without big quality drops. Recent extensions include activation sparsity and sparse fine-tuning for 50-75% sparsity on models like T5/MPT.

English

@ihtesham2005 I think that is a consensus among researchers that agents can do it pretty well

English

If you still think AI agents can't do real research, this paper will end that argument.

Researchers from Google and Meta built a framework where Claude Code proposes its own algorithms for making LLMs reason better, then tests them, then refines them based on what failed. No human in the loop after the environment is set up.

In 5 rounds the agent discovered a controller with 4 coordinated mechanisms working together. EMA momentum stopping. Coupled width-depth control. Alignment-aware depth allocation. Conservative branch abandonment.

The paper says directly: "a level of coordinated complexity that would be difficult to arrive at through manual intuition alone."

That's a polite way of saying the agent built something a human probably wouldn't have.

The cost of the entire discovery was $39.90.

The cost of one researcher's coffee budget just outperformed years of hand-tuned work.

Paper is from Google and Meta.

Read it here: arxiv.org/abs/2605.08083

English

@kellerjordan0 @nilinabra I keep reflecting if progress will forever look like small incremental gains or if there exists a fundamentally different approach that may smash the sota

English

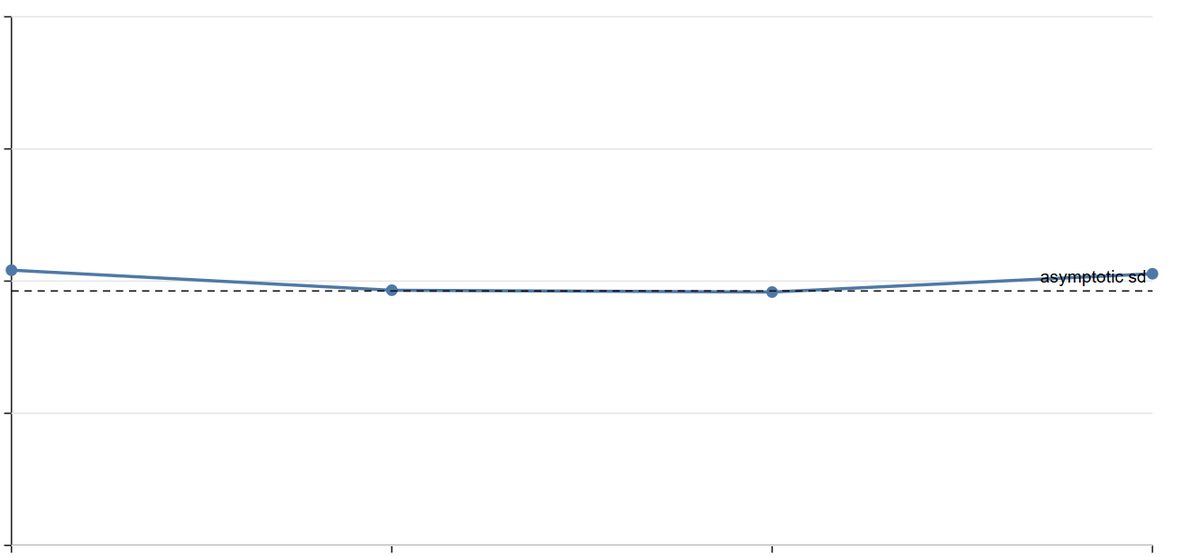

Modded-NanoGPT optimization result #11: @nilinabra has achieved a new record of 3225 steps (-25) via a novel technique dubbed Contra-Muon, in which top SVD components are somewhat suppressed. This result builds on #9.

English

The legacy trading software complex is still teaching people shortcut keys and charging portfolio managers for "custom" displays.

They call themselves "terminals" or "portfolio management systems" and charge managers thousands, or tens of thousands, of dollars per month to provide you with an interface that YOU have to provide data for.

We hated that, so we built one that we could modify ourselves at anytime with natural language, fully equipped with market and portfolio data integration.

English

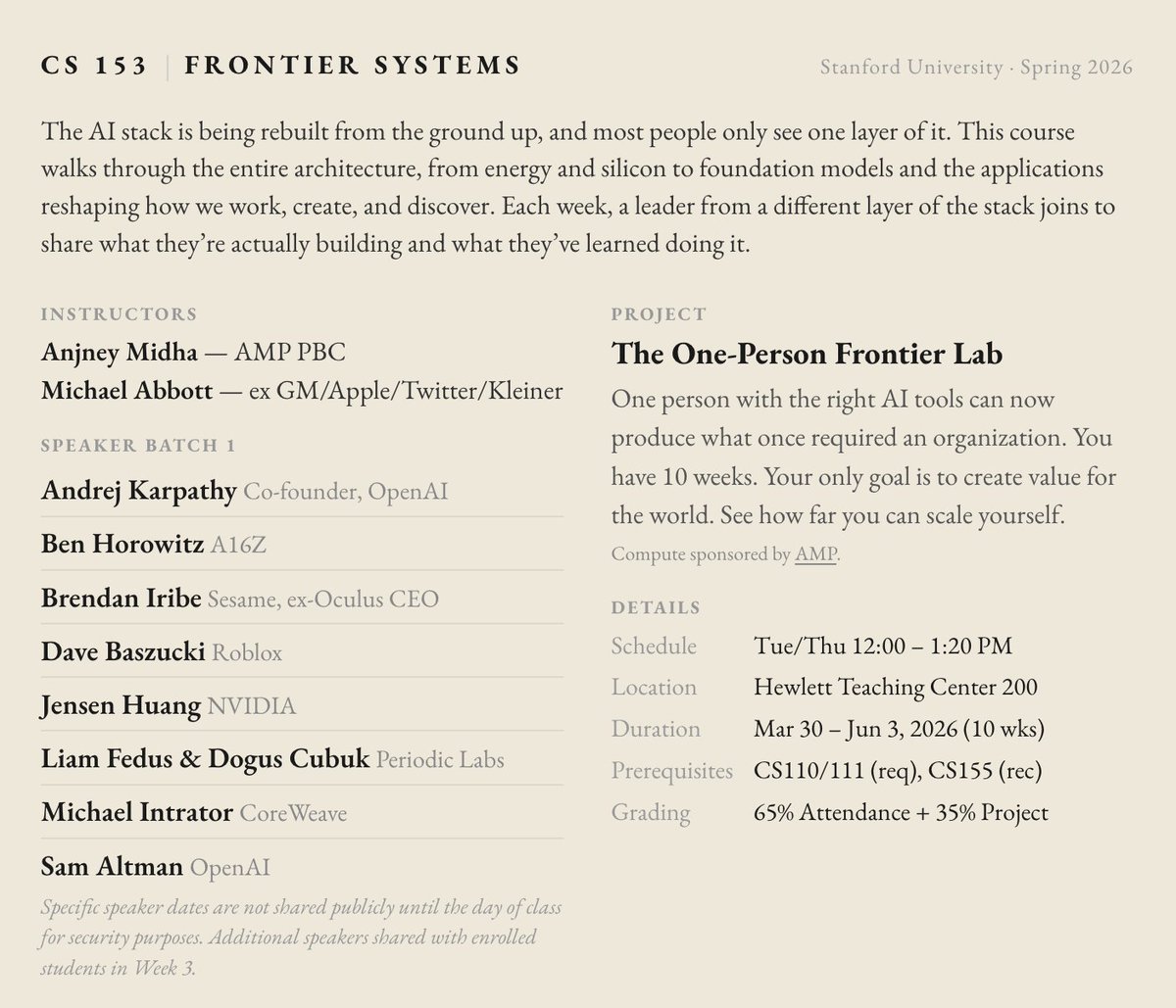

so @mabb0tt and I are once again volunteering to teach cs153.stanford.edu

there are so many new frontiers to be pioneered

thank you to our speakers like @karpathy @bhorowitz @brendaniribe @DavidBaszucki @LiamFedus @ekindogus @sama for investing in the next generation

English

@AnjneyMidha @AryenderSingh2 @mabb0tt @karpathy @bhorowitz @brendaniribe @DavidBaszucki @LiamFedus @ekindogus @sama Posting here to request the discord link

English

@AryenderSingh2 @mabb0tt @karpathy @bhorowitz @brendaniribe @DavidBaszucki @LiamFedus @ekindogus @sama You can join the discord and follow along on YouTube

English