Tao Yu ✈️ NeurIPS 2025

@taoyds

@XLangNLP lab, asst. prof. @HKUniversity. author of OpenCUA, OSWorld, Aguvis, Spider, OpenAgents, Text2Reward, Instructor.

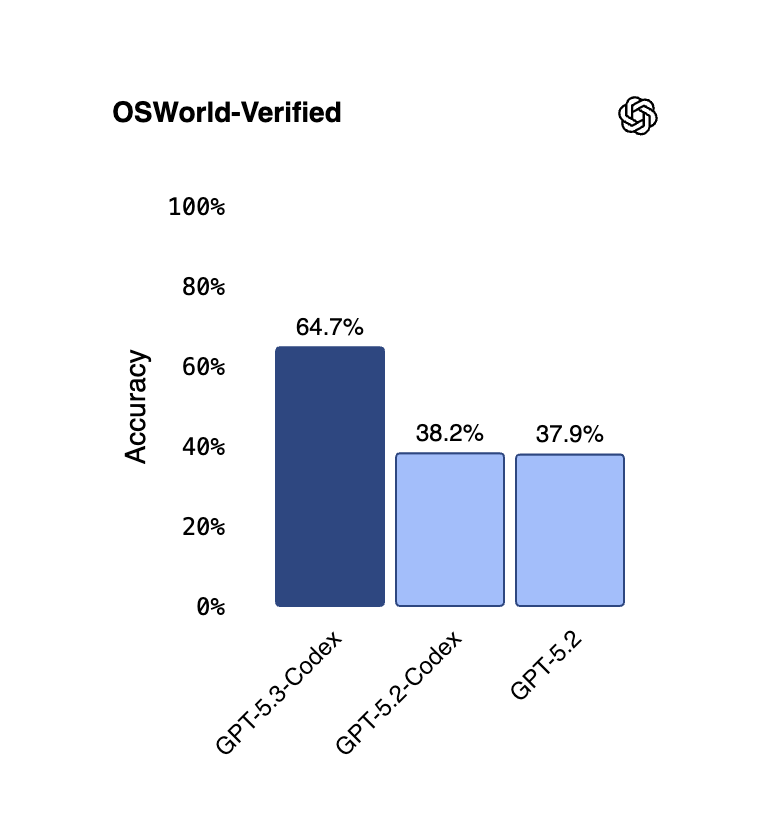

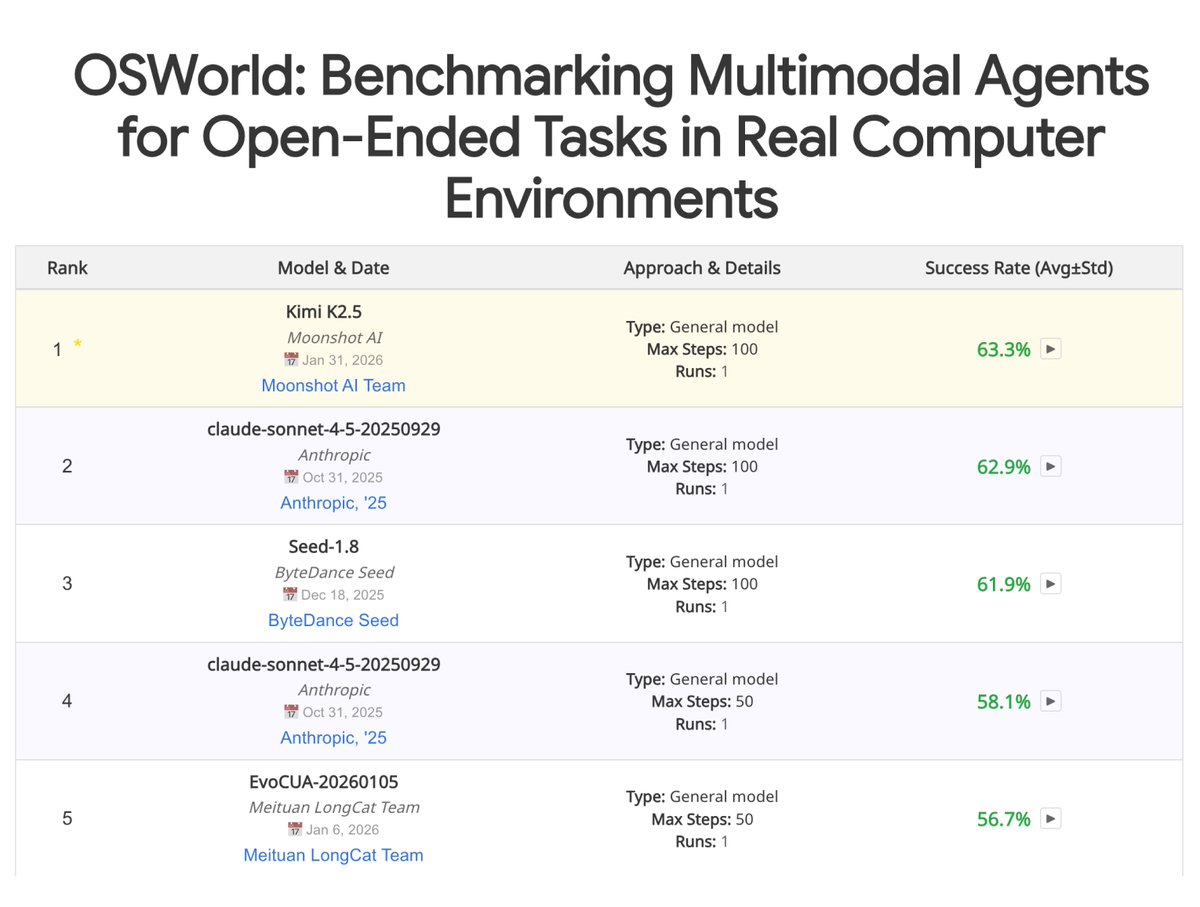

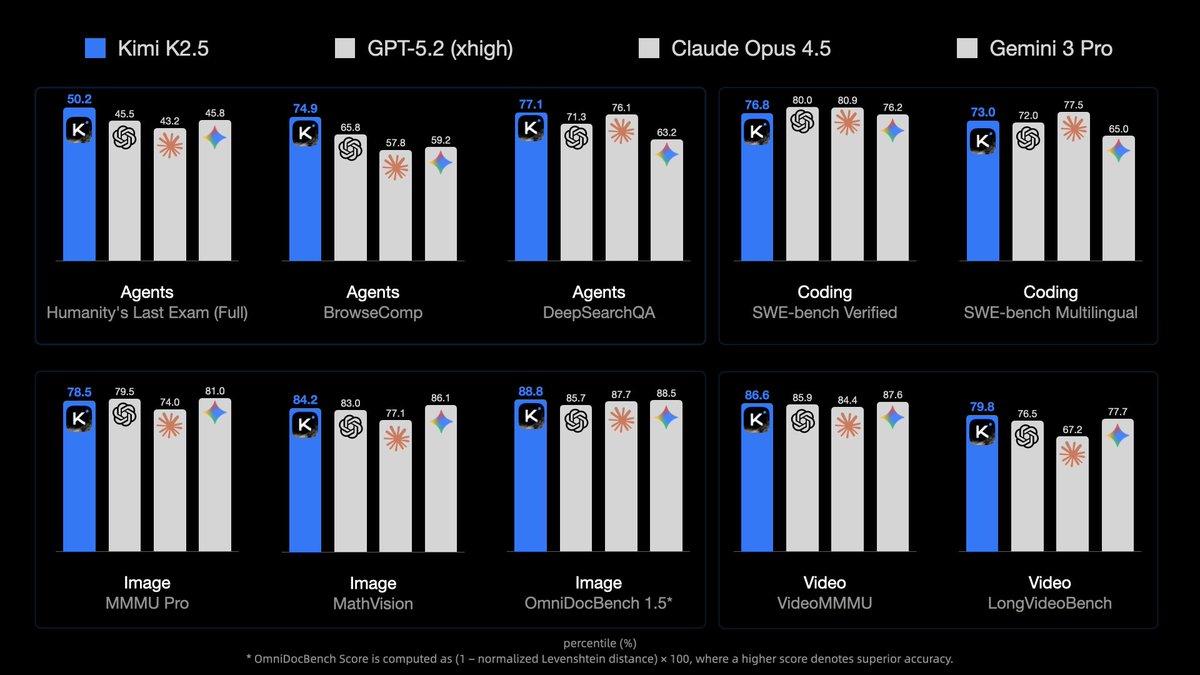

GPT-5.3-Codex is here!

* Best coding performance (57% SWE-Bench Pro, 76% TerminalBench 2.0, 64% OSWorld).
* Mid-task steerability and live updates during tasks.
* Faster! Less than half the tokens of 5.2-Codex for same tasks, and >25% faster per token!
* Good computer use.

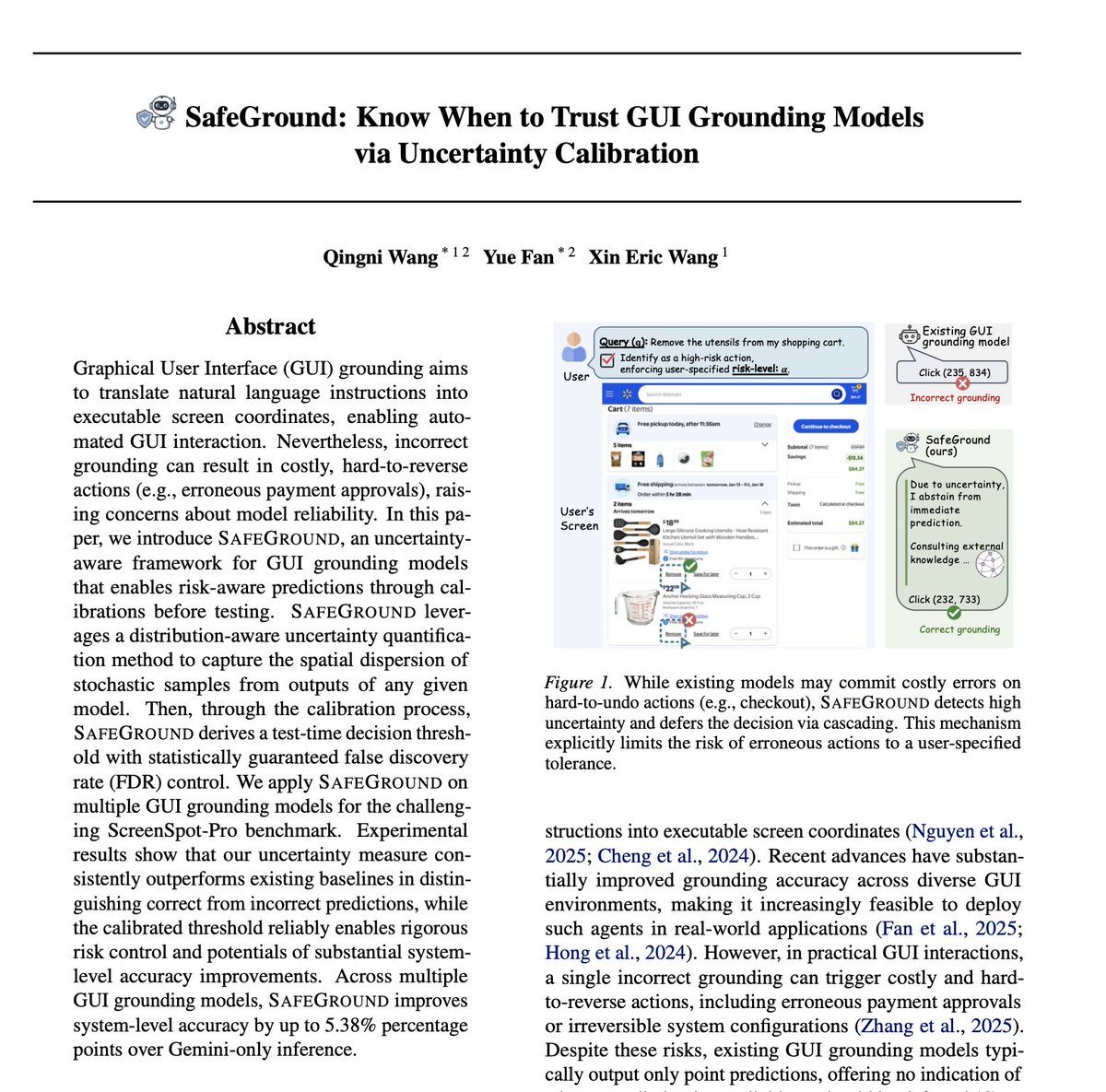

🚨 New paper alert 🚨

📌 How can we make GUI grounding models reliable in real-world interactions?

We introduce 🚀 SafeGround: Know When to Trust GUI Grounding Models via Uncertainty Calibration

In GUI agents, a single wrong click isn’t just an error: it can trigger costly or irreversible actions (e.g., unintended payments 💸 or deleting important files 🗑️). The real danger is silent failure: most GUI grounding models always output a coordinate, even when they’re unsure.

Instead of trusting a single predicted point, SafeGround:

* estimates spatial uncertainty from prediction variability
* calibrates a decision threshold with statistical guarantees
* enables risk-controlled GUI actions, even with black-box models

💻 Code: github.com/Cece1031/SAFEG…
📄 Paper: arxiv.org/pdf/2602.02419

🧵1/6 #Agents #GUI
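The two ideas named in the SafeGround post (uncertainty from prediction variability, then a calibrated threshold for acting or abstaining) can be illustrated with a rough sketch. This is not the paper's procedure. The dispersion measure (mean distance from the centroid), the Hoeffding bound with a union bound over candidate thresholds, and every function name below are assumptions chosen for illustration only.

```python
import math
from statistics import mean


def spatial_uncertainty(points):
    """Mean Euclidean distance of sampled click points from their centroid.

    `points` holds several (x, y) predictions for the same instruction,
    e.g. from repeated sampling of a black-box grounding model (assumed setup).
    """
    cx = mean(x for x, _ in points)
    cy = mean(y for _, y in points)
    return mean(math.hypot(x - cx, y - cy) for x, y in points)


def calibrate_threshold(scores, correct, alpha=0.1, delta=0.05):
    """Return the largest threshold tau such that, on the calibration set,
    a Hoeffding upper bound on the error rate of accepted predictions
    (score <= tau) is at most alpha. Returns None if no tau qualifies.

    The union bound over candidates (delta / K) is a conservative,
    illustrative choice, not the method from the paper.
    """
    candidates = sorted(set(scores))
    per_test_delta = delta / len(candidates)
    best = None
    for tau in candidates:
        accepted = [c for s, c in zip(scores, correct) if s <= tau]
        n = len(accepted)  # at least 1, since tau is one of the scores
        error_rate = 1 - sum(accepted) / n
        bound = error_rate + math.sqrt(math.log(1 / per_test_delta) / (2 * n))
        if bound <= alpha:
            best = tau
    return best


def decide(points, tau):
    """Click at the centroid if uncertainty is within tau, otherwise abstain."""
    if tau is not None and spatial_uncertainty(points) <= tau:
        cx = mean(x for x, _ in points)
        cy = mean(y for _, y in points)
        return ("click", (cx, cy))
    return ("abstain", None)
```

In use, `calibrate_threshold` would run once on held-out examples with known click correctness, and `decide` would gate each live action. Abstaining could hand control back to a user or a stronger model.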

GPT-5.2 is now rolling out to everyone. openai.com/index/introduc…

Congratulations to Siva Reddy (@sivareddyg), Core Academic Member at Mila, who has received the prestigious Outstanding Early Career Computer Science Researcher Award from @CSCan_InfoCan, the leading organization for the computer science community in Canada. mila.quebec/en/news/siva-r…

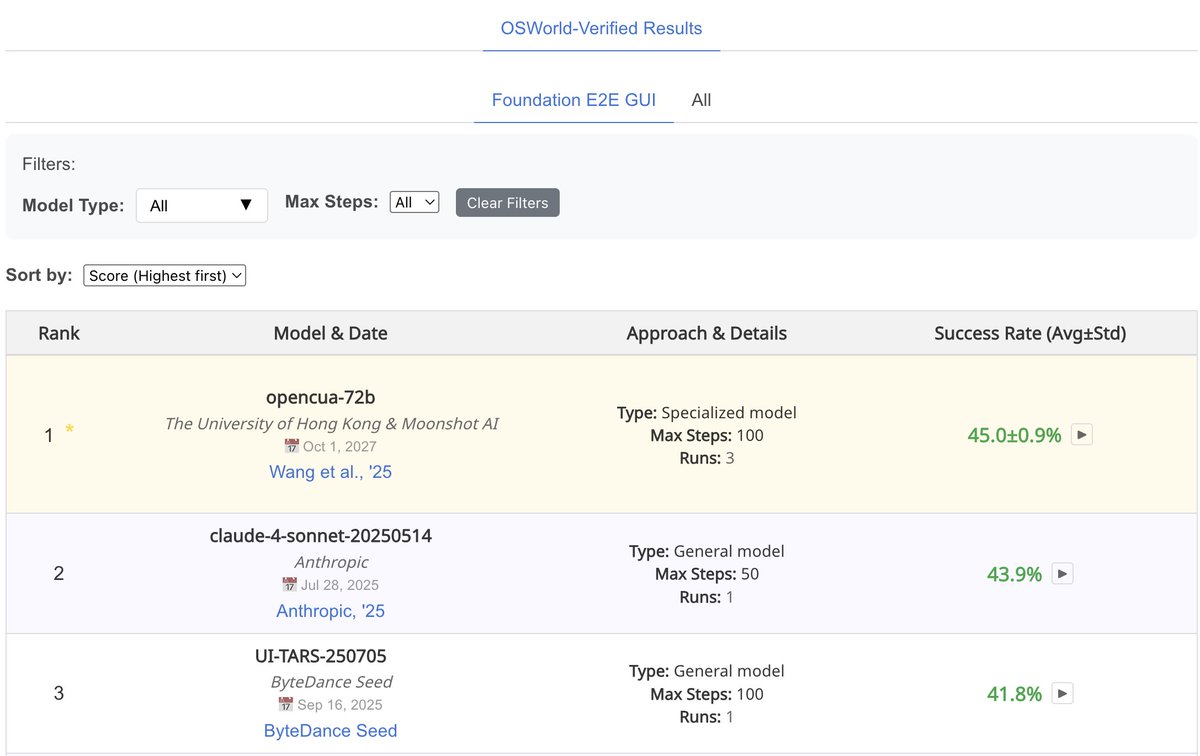

We are super excited to release OpenCUA, the first 0-to-1 framework for computer-use agent foundation models, together with the open-source SOTA model OpenCUA-32B, which matches top proprietary models on OSWorld-Verified. Full infrastructure and data are included.

🔗 [Paper] arxiv.org/abs/2508.09123
📌 [Website] opencua.xlang.ai
🤖 [Models] huggingface.co/xlangai/OpenCU…
📊 [Data] huggingface.co/datasets/xlang…
💻 [Code] github.com/xlang-ai/OpenC…

🌟 OpenCUA is a comprehensive open-source framework for computer-use agents, including:

* 📊 AgentNet: first large-scale CUA dataset (3 systems, 200+ apps & sites, 22.6K trajectories)
* 🏆 OpenCUA model: open-source SOTA on OSWorld-Verified (34.8% avg success, outperforms OpenAI CUA)
* 🖥 AgentNetTool: cross-system computer-use task annotation tool
* 🏁 AgentNetBench: offline CUA benchmark for fast, reproducible evaluation

💡 Why OpenCUA? Proprietary CUAs like Claude or OpenAI CUA are impressive 🤯, but there’s no large-scale open desktop agent dataset or transparent pipeline. OpenCUA changes that by offering the full open-source stack 🛠: scalable cross-system data collection, effective data formulation, model training strategy, and reproducible evaluation. This stack powers top open-source models, including OpenCUA-7B and OpenCUA-32B, that excel in GUI planning & grounding.

Details of OpenCUA framework👇