Riece Keck

1.5K posts

@tech_headhunter

Building AI recruiting tech. Founder @pinch_protocol_

Minneapolis, MN. Joined July 2023

180 Following, 210 Followers

Pinned Tweet

@PeakLab_ Dude got on TRT and is not “jacked” according to your own picture lmao he’s got a bit of arm muscle

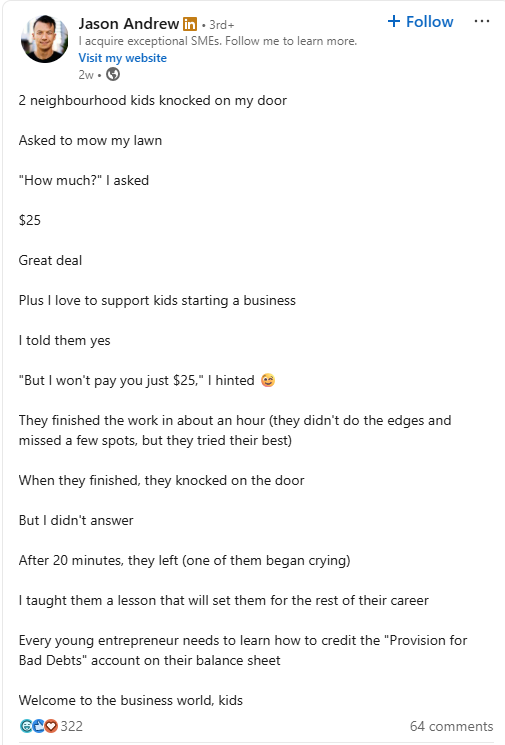

@LinkedInLunat1c Do none of you people recognize satire lmao

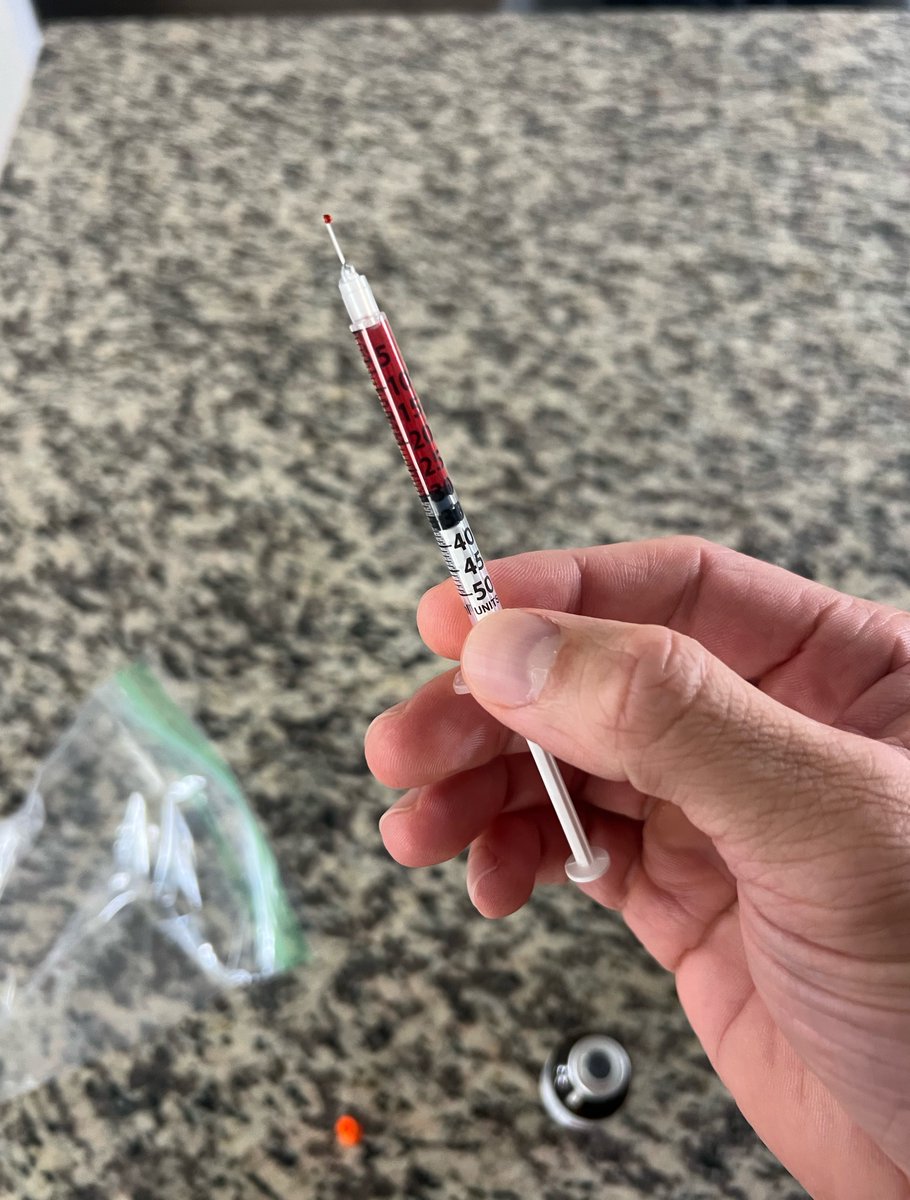

2 days into this magical red liquid

Started at .25ml and it hit me pretty well

Felt incredibly stimulated and it was pretty intense for a few min, not sure why

Working my way up to 1 ml a day here in the next couple days

Did drop 1lbs and looking more vascular, without training or really watching my diet. But could be attributed to a multitude of things

Regardless looking forward to more results

Currently stacked with 6 mg once weekly of Reta (here come the nerds who say that’s too much)

@ElevateBiohack @KimeraChems

Riece Keck retweeted

@ExaAILabs @hnshah I’ve been using the Exa API through Claude code for a hot minute. Is this any different?

Introducing Exa for Claude:

Give Claude access to billions of websites, docs, papers, people, companies, and more. Exa turns Claude into a recruiter, a salesperson, a researcher, a reliable engineer, all with one plugin.

Run in terminal: claude plugin marketplace update claude-plugins-official && claude plugin i exa@claude-plugins-official && claude "call mcp__plugin_exa_exa__authenticate (a deferred tool available to you, guaranteed)"

How Apple mfrs think this goes

>be me

>drop $1600 on two RTX 3090s used off eBay

>"48GB VRAM, I'm basically a datacenter now"

>they arrive in anti-static bags that look like they've been through a war

>plug them into my motherboard and it sounds like a jet engine taking off

>neighbors probably think I'm mining crypto again

>install llama.cpp, download qwen3.6-27b quantized

>"Q4_K_M, only 16GB, totally fits"

>start LM Studio on port 1234

>type "hello" into the chat box

>GPU fans spin up to 100% instantly

>wait 8 seconds for a response

>>"Hello! How can I assist you today?"

>I've seen faster responses from my grandma reading a text aloud

>try Q8_0 quantization because "quality matters"

>OOM error, obviously

>spend three hours tweaking n_gpu_layers and n_ctx like it's some kind of dark art

>finally get it running at 4 tokens per second

>ask it to write me a poem about my GPUs

>>"Two cards of silicon and light / They hum through the endless night"

>"bro this is actually fire"

>show it to someone on Discord

>"why are you running LLMs locally when you could just use an API for free"

>explain that the joy isn't in the output, it's in watching 94% VRAM usage and knowing nobody else has access to my model

>they don't understand

>close Discord, open LM Studio again

>"let's try a longer context window"

>crash

We just signed an enterprise contract with our first publicly traded bank. Incredible work by the team to move this so quickly!!

no better time to join @e2b than now

We run 13 n8n workflows across ColdIQ's entire content, ads, and outbound engine, and I'm giving them all away.

Our monthly n8n bill: $384. The same workloads on Claude would cost $60K. That's why I'm not buying the "Claude killed n8n" take.

Claude Routines are good at scheduled agentic tasks that need reasoning.

They're not the same layer as n8n. They're not a replacement for production GTM infrastructure.

Our n8n stack fires 2,000+ executions daily across 13 workflows. Our Phone Finder alone has 41 nodes and waterfalls across 5 data providers.

Here's what's in the doc:

→ GTM Flywheel (81 nodes): domain in, full ICP, lookalikes, prospects, and a tailored content/ads/outbound strategy sent to your inbox

→ Phone Finder (41 nodes): name, LinkedIn URL, or domain in; Prospeo, FullEnrich, and more waterfalled; verified number in seconds

→ AI Agent Reply Manager (13 nodes): classifies a cold email reply on Instantly, drafts a response in Slack, waits for your approval before it goes out

→ Lookalike Finder (35 nodes): domain in, similar businesses by industry, size, and tech signals out

→ Viral Content Browser (10 nodes): pulls viral LinkedIn posts via Serper, filters by engagement, stores the best in Notion

→ Feeling Tracker (36 nodes, coming soon): sentiment analysis on any tool across X, Reddit, YouTube, and LinkedIn

Plus 7 more covering content, GTM, and ops. Shoutout to Sacha Martinot who built the most complex ones.

Reply "N8N" and I'll send you the full doc. Must be following.

@JohnLeFevre I mean, with the kindest sentiment I can muster, fucking obviously?

@Rodairos Pivoting the agency recruiting product to outbound biz dev focused and it one shotted this dashboard redesign

@bydylanlamb @joshgonsalves_ Escaping the permanent underclass

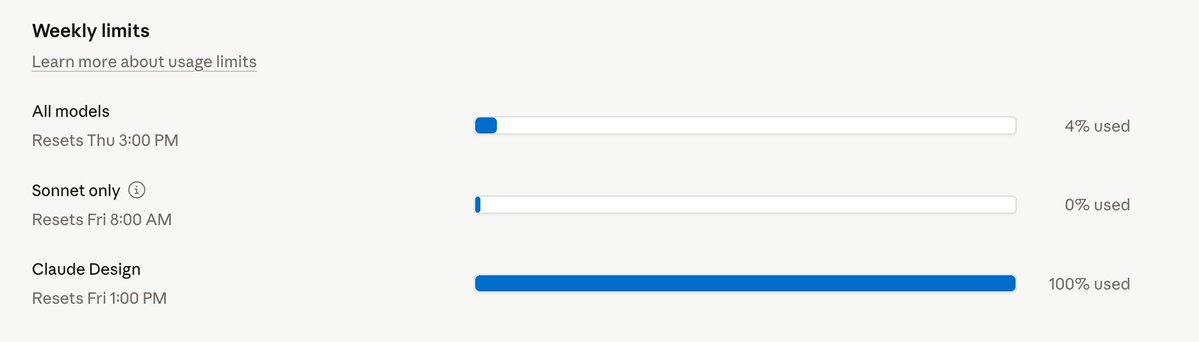

Oh, so Claude Design has its own usage limit outside of everything else? And of course, already hit it...

So now I can't use it until NEXT FRIDAY? OK...

Claude @claudeai

Introducing Claude Design by Anthropic Labs: make prototypes, slides, and one-pagers by talking to Claude. Powered by Claude Opus 4.7, our most capable vision model. Available in research preview on the Pro, Max, Team, and Enterprise plans, rolling out throughout the day.

@bydylanlamb @joshgonsalves_ I have $200 max and a one app homepage redesign put me to 31% lol

@joshgonsalves_ just upgrade to the max plan...? why do people expect the pro plan to last long lmao

@LunarResearcher Of all the stuff that never happened, this never happened the most

An Anthropic engineer watched me trade from across the table at a WeWork in SF

I had my laptop open. Four agents running. Green charts. Live trades scrolling.

He was on a Zoom call. Muted himself. Walked over.

"Are you running Claude against live prediction markets right now"

I told him. Claude Code. Two repos. $25 a month.

He pulled up a chair.

"I helped build the model you're using. I've never seen anyone wire it to live trades like this"

I showed him the dataset.

github.com/warproxxx/poly…

86 million trades. Every wallet. Every entry. Every exit.

He stared at it.

"We tested this internally. You give Claude a dataset and don't tell it what to look for. It finds the winning wallets. Then it finds WHY they win. Then it copies the pattern. We never shipped it because legal killed it"

I told him I did exactly that. One weekend. Claude Code found the exit logic on its own.

Top wallets exit before resolution 91% of the time. They capture 86% of expected value. Cut losers at 12%. Everyone else captures 58% and holds to 41%.

"That's the exact finding from our internal eval. Except ours took a team of eight and four months"

I showed him the scanner.

github.com/Polymarket/pol…

Three commands. 500+ markets. No API key. Claude scores them all in 20 minutes.

"You're using our model to beat markets we're not allowed to touch. On infra that costs less than my lunch"

My setup:

Claude API - $20/mo

VPS - $5/mo

poly_data - free

polymarket-cli - free

214 trades. 74% win rate. +$9,400. 19 days.

I showed him the full breakdown. Every repo. Every command. Every dollar.

Copytrade here: kreo.app/@lunar

He read it for five minutes. Then looked up.

"If my manager sees this he's going to lose his mind. You just proved our model works in production and we've been sitting on it for a year"

He DM'd me that night.

"Take this down before someone at Anthropic finds it"

Too late.

Lunar @LunarResearcher
