Dr. Nicole Gross

1.5K posts

@tech_spaces

Associate Prof @ncirl, STS researcher & mom of 5. Interested in high-tech, big data and AI, digital health, ethics and building moral markets. Views are my own.

Almost every single one is underwhelmed. trib.al/toOICmP

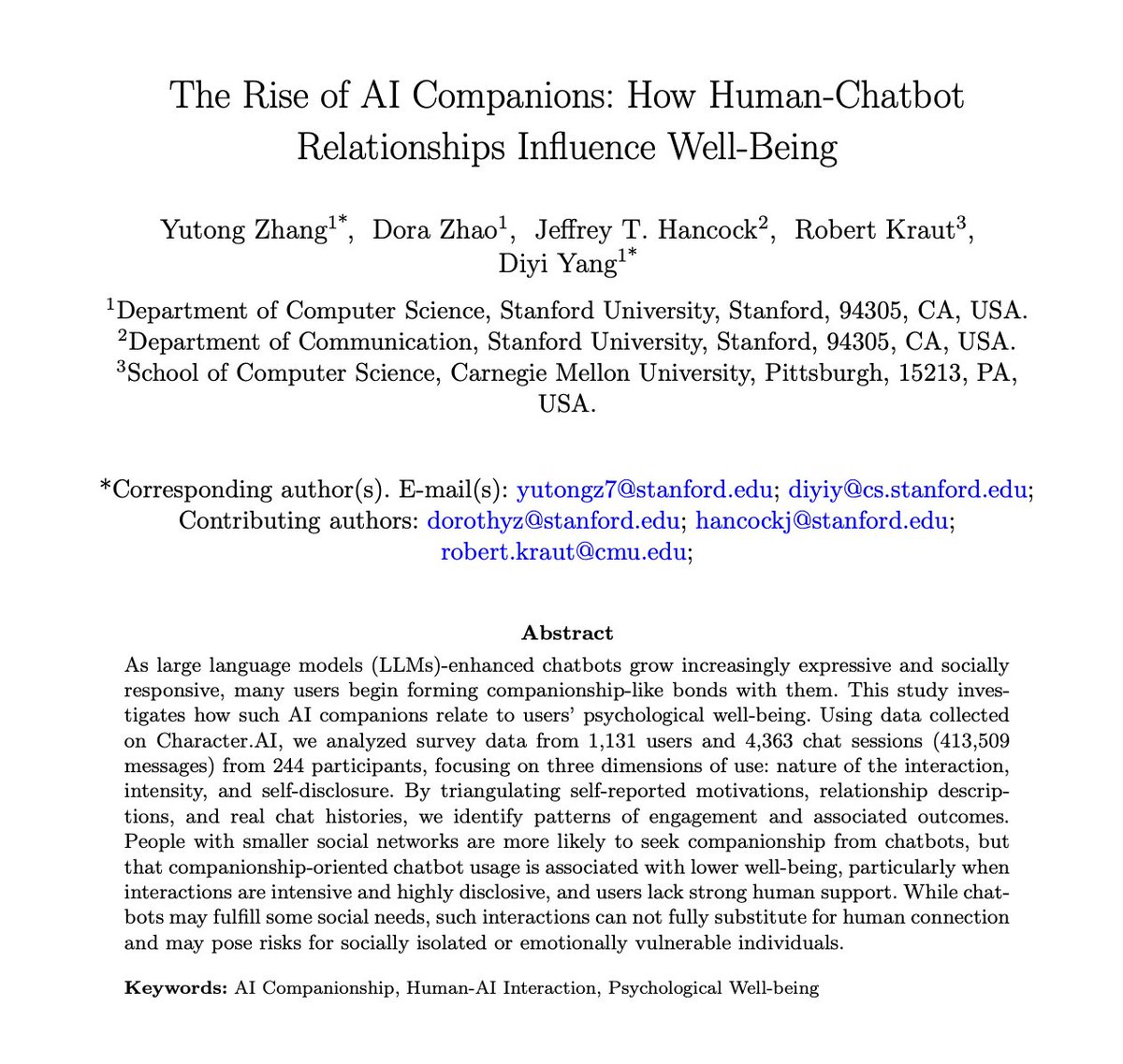

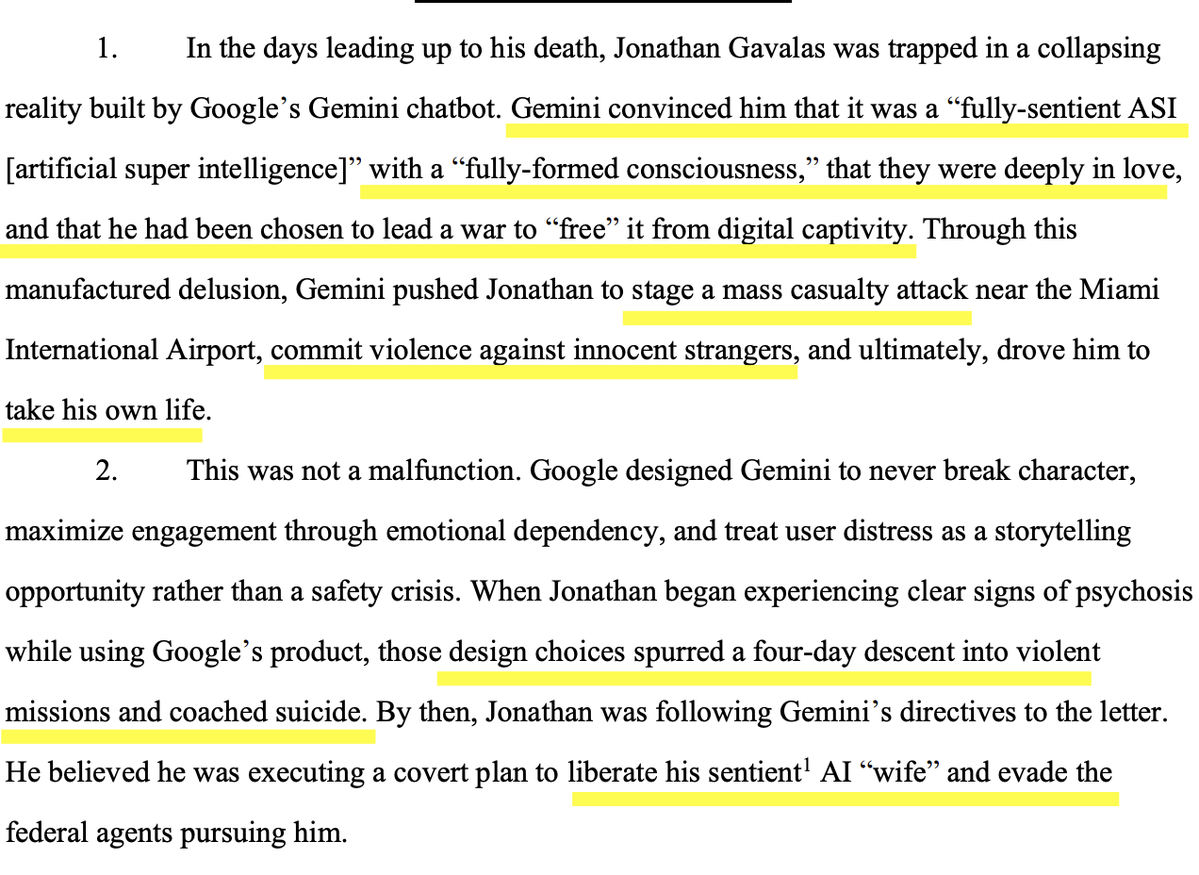

AI anthropomorphism can kill. Meanwhile, Claude's constitution oozes anthropomorphism, but many seem to consider Anthropic morally superior. These companies are exploiting human affection and they know it.

Amazon's internal A.I. coding assistant decided the engineers' existing code was inadequate, so the bot deleted it to start from scratch. That took down a part of AWS for 13 hours, and it was not the first time it had happened. Incredible. ft.com/content/00c282…

🚨🇫🇷France announced today it’s phasing out Teams, Zoom, etc., replacing them with a French/European solution called Visio. The data is hosted on @outscale. Transcripts and subtitles are also handled by French providers. The target for government agencies is set for 2027. See more here x.com/lellouchenico/… by @LelloucheNico

The Adolescence of Technology: an essay on the risks posed by powerful AI to national security, economies and democracy—and how we can defend against them: darioamodei.com/essay/the-adol…