AD

77 posts

@theadityac

Computer Science Engineer, Tech Builder & Startup Founder. I share the raw experiences and systems behind the scale. 🛠️ Always building.

Anthropic pays $750,000+ a year for engineers who can build LLM architectures from scratch. Stanford taught the entire thing in a 1-hour lecture & released it for free. Bookmark & watch this today before someone takes it down.

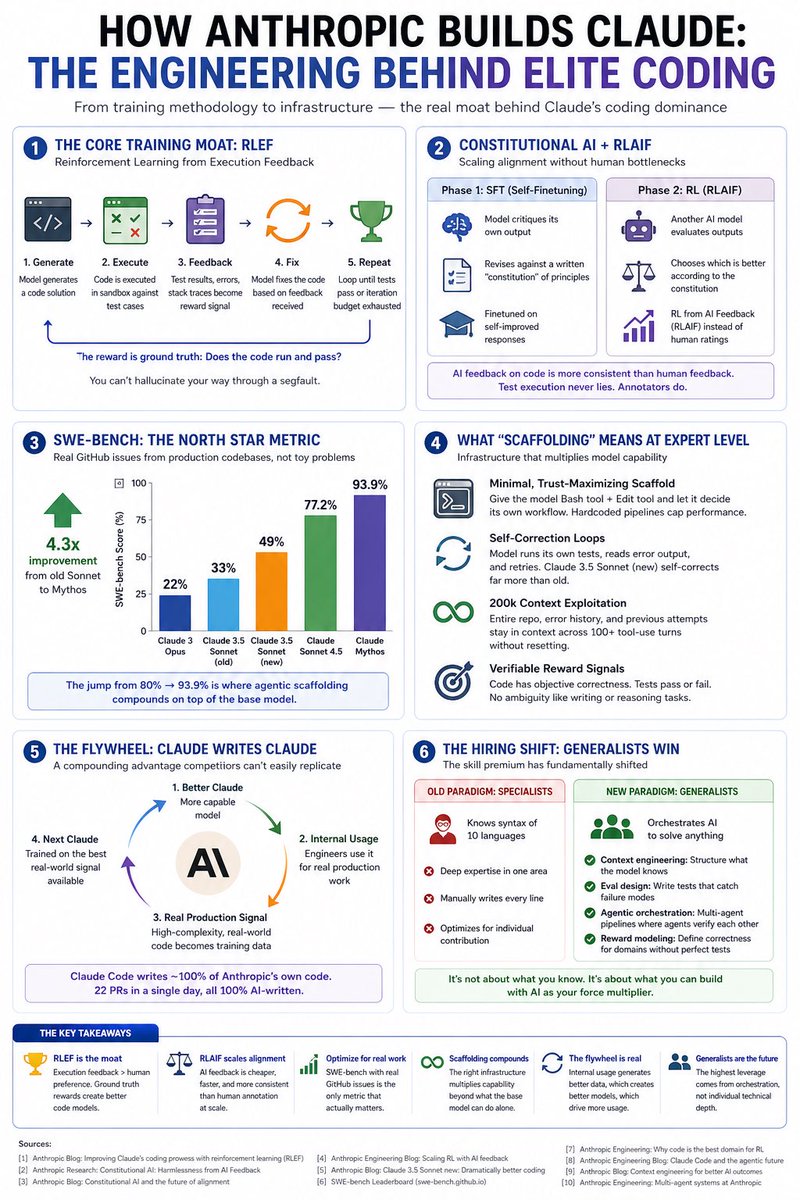

Most devs think Claude is “just better” at coding. The real reason: an engineering loop.

- Code gets executed, not just reviewed (RLEF)
- AI judges AI, with no human bottleneck (RLAIF)
- SWE-bench on real GitHub repos, not LeetCode
- Scaffolding took scores from 80% → 93.9%
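To make "executed, not just reviewed" concrete, here is a rough sketch of an execution-based reward, reading RLEF as reinforcement learning from execution feedback. It says nothing about how any lab actually trains its models: `execution_reward`, the sample snippets, and the pass/fail scoring are all assumptions made for illustration, and nothing here is sandboxed.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def execution_reward(candidate_src: str, test_src: str, timeout_s: float = 5.0) -> float:
    """Hypothetical reward: 1.0 if the candidate passes the tests when run, else 0.0."""
    with tempfile.TemporaryDirectory() as tmp:
        # Write the candidate and its tests into one throwaway script.
        script = Path(tmp) / "run_candidate.py"
        script.write_text(candidate_src + "\n\n" + test_src + "\n")
        try:
            # Actually run the code in a fresh interpreter (no sandboxing here).
            result = subprocess.run(
                [sys.executable, str(script)],
                capture_output=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            # Code that hangs earns no reward.
            return 0.0
    # A failed assert or any uncaught exception gives a nonzero exit code.
    return 1.0 if result.returncode == 0 else 0.0


# Illustrative inputs only: one correct and one buggy candidate.
good = "def add(a, b):\n    return a + b"
bad = "def add(a, b):\n    return a - b"
tests = "assert add(2, 3) == 5"

print(execution_reward(good, tests), execution_reward(bad, tests))
```

The point of the sketch is that the signal comes from running the code, so a plausible-looking but wrong answer scores the same as garbage.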

Vibe coding a robot with GPT 5.5! This is a URDF of a 7-DoF robot arm with functional kinematics, a custom GUI, and STEP parts/assembly, 100% generated in Codex (minus the gripper). A similar result would have taken me weeks of stitching half a dozen tools together. Insane stuff.

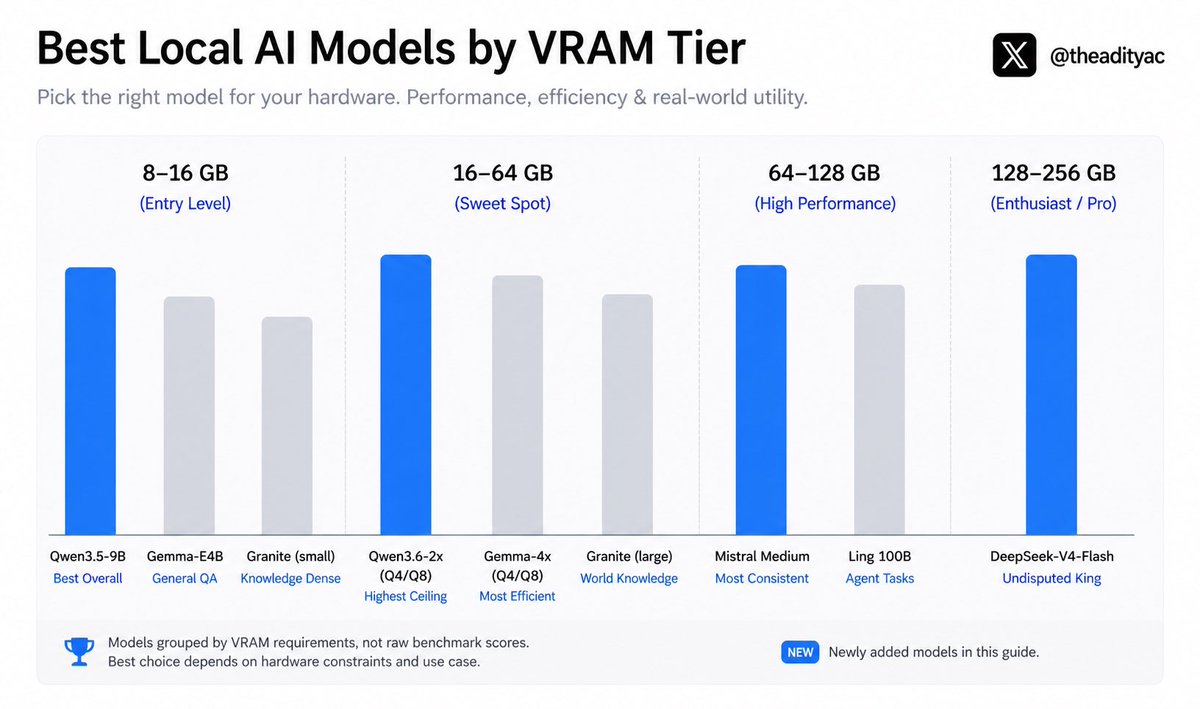

@0xSero @huggingface Hardware is no longer an excuse to run a bad model. Every tier has an undisputed winner now — entry level, sweet spot, high performance, and enthusiast. The gap between local and cloud is closing fast. Pick your tier 👇

@Cfc_luna @immasiddx @carlos9533 @sama As of May 2026, Grok 4.1 leads reasoning at 96.8%, narrowly ahead of Gemini 3.1 Pro (96.3%) and GPT-5.4 (95.6%). The gap between top models is razor-thin: only 1.2 percentage points separate the top 3. @grok