Thejas CR

@thejascr

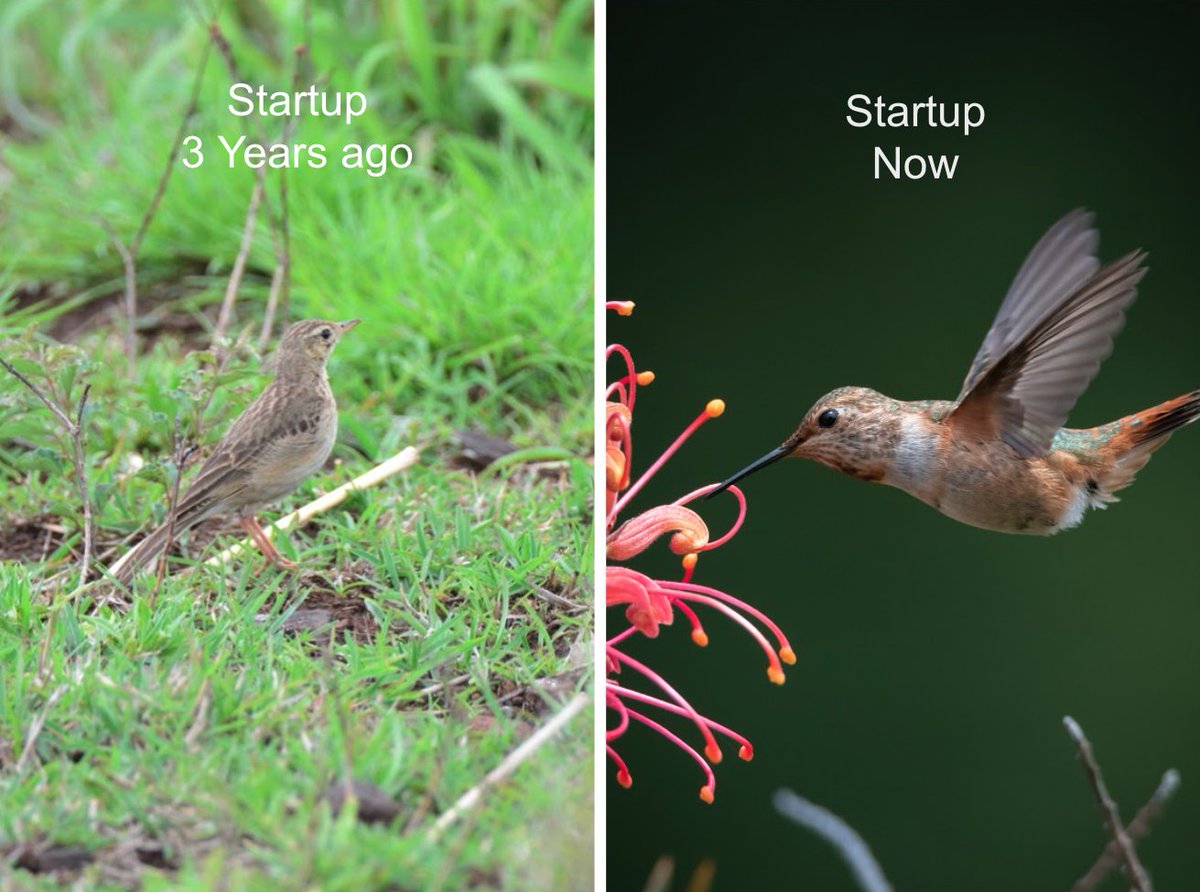

Founder of an early stage enterprise AI startup, ex-Engineering Manager at Arista Networks

AI PROMPTING → AI VERIFYING

AI prompting scales, because prompting is just typing. But AI verifying doesn’t scale, because verifying AI output involves much more than just typing. Sometimes you can verify by eye, which is why AI is great for frontend, images, and video. But for anything subtle, you need to read the code or text deeply, and that means knowing the topic well enough to correct the AI. Researchers are well aware of this, which is why there’s so much work on evals and hallucination. However, the concept of verification as the bottleneck for AI users is under-discussed. Yes, you can try formal verification, or critic models where one AI checks another, or other techniques. But to even be aware of the issue as a first-class problem is half the battle. For users: AI verifying is as important as AI prompting.
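
One of the "other techniques" can be as simple as running AI output against something you already trust instead of reading it by eye. Below is a minimal sketch of that idea (differential testing on random inputs). `ai_sort` is a hypothetical stand-in for AI-written code with a subtle bug, `verify` is an illustrative helper, and Python's built-in `sorted` is assumed to be the trusted reference.

```python
import random


def ai_sort(xs):
    # Hypothetical AI-written function: looks plausible on a quick read,
    # but silently drops duplicate values.
    return sorted(set(xs))


def verify(candidate, reference, trials=200, seed=0):
    """Return the first random input where the two functions disagree, else None."""
    rng = random.Random(seed)
    for _ in range(trials):
        # Small value range so duplicates show up often.
        xs = [rng.randint(0, 5) for _ in range(rng.randint(0, 8))]
        if candidate(list(xs)) != reference(list(xs)):
            return xs
    return None


# A non-None result is a concrete input that exposes the bug.
counterexample = verify(ai_sort, sorted)
```

This only helps when a trusted reference or property exists. For everything else, the deep reading described above is still the bottleneck.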

Noticing myself adopting a certain rhythm in AI-assisted coding (i.e. code I actually and professionally care about, in contrast to vibe code).

1. Stuff everything relevant into context. This can take a while in big projects; if the project is small enough, just stuff everything, e.g. `files-to-prompt . -e ts -e tsx -e css -e md --cxml --ignore node_modules -o prompt.xml`.
2. Describe the next single, concrete incremental change we're trying to implement. Don't ask for code; ask for a few high-level approaches with pros/cons. There are almost always a few ways to do things, and the LLM's judgement is not always great. Optionally make concrete.
3. Pick one approach, ask for first draft code.
4. Review / learning phase: (manually...) pull up all the API docs in a side browser for functions I haven't called before or am less familiar with, ask for explanations, clarifications, changes, wind back and try a different approach.
5. Test.
6. Git commit. Ask for suggestions on what we could implement next. Repeat.

Something like this feels more along the lines of the inner loop of AI-assisted development. The emphasis is on keeping a very tight leash on this new over-eager junior intern savant with encyclopedic knowledge of software, but who also bullshits you all the time, has an over-abundance of courage and shows little to no taste for good code. And emphasis on being slow, defensive, careful, paranoid, and on always taking the inline learning opportunity, not delegating.

Many of these stages are clunky and manual and aren't made explicit or super well supported yet in existing tools. We're still very early and so much can still be done on the UI/UX of AI-assisted coding.
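
The context-stuffing step can be sketched in a few lines of Python. This is not how `files-to-prompt` is implemented. It is a rough approximation of the idea, and the function name `build_prompt`, its defaults, and the XML tag names are assumptions chosen for illustration.

```python
from pathlib import Path
from xml.sax.saxutils import escape


def build_prompt(root, extensions=(".ts", ".tsx", ".css", ".md"),
                 ignore=("node_modules",)):
    """Concatenate matching files under `root` into one XML-like string."""
    root = Path(root)
    parts = ["<documents>"]
    index = 0
    for path in sorted(root.rglob("*")):
        rel = path.relative_to(root)
        # Keep only regular files with a wanted extension.
        if not path.is_file() or path.suffix not in extensions:
            continue
        # Skip anything inside an ignored directory.
        if any(part in ignore for part in rel.parts):
            continue
        index += 1
        text = path.read_text(encoding="utf-8", errors="replace")
        parts.append(f'<document index="{index}">')
        parts.append(f"<source>{escape(rel.as_posix())}</source>")
        parts.append(f"<document_content>{escape(text)}</document_content>")
        parts.append("</document>")
    parts.append("</documents>")
    return "\n".join(parts)
```

Calling `build_prompt(".")` returns a single string that can be pasted into a model's context. For big projects the extension and ignore lists would need tuning by hand, which is part of why this step "can take a while".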

Knuth shows us the way. Again:

I think there is a good chance natural languages become more formal because of AI. AI makes formalism more accessible than ever. The next pidgin could be a natural / formal hybrid.

We don’t program software because we lack AGI. We program software because we want *reliable systems*. We already have unreliable general intelligence (8 billion of us!). Reliable systems are about SUBTRACTING intelligence in the right places — for robust control.
