Brian Cheung

259 posts

@thisismyhat

This is my hat, there are many like it, but this one is mine. @MIT_CSAIL 🧢 / ex: @berkeley_ai 🎓 Google B̶r̶a̶i̶n̶ DeepMind 🎩

Software agents can self-improve via self-play RL. Introducing Self-play SWE-RL (SSR): training a single LLM agent to self-play between bug-injection and bug-repair, grounded in real-world repositories, no human-labeled issues or tests. 🧵

We discovered an emergent property of VLAs like π0/π0.5/π0.6: as we scale up pre-training, the model learns to align human videos and robot data! This gives us a simple way to leverage human videos. Once π0.5 knows how to control robots, it can naturally learn from human video.

This also shows up in the representations learned by the model. We plot the model’s representations of human and robot images. As pre-training is scaled up, the representations of humans and robots become more aligned: to a scaled-up model, human videos "look" like robot demos.

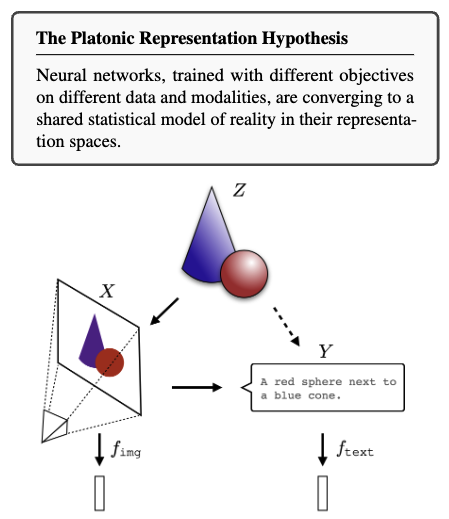

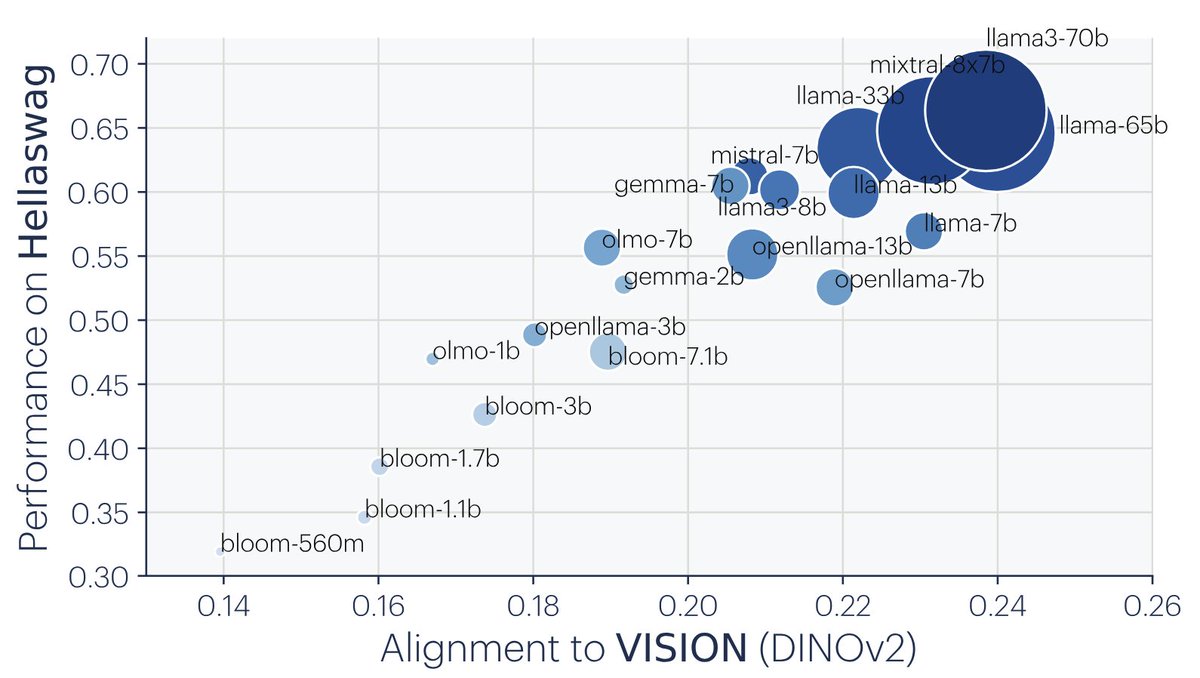

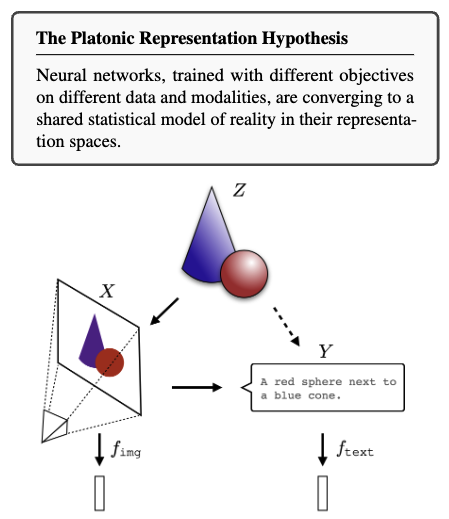

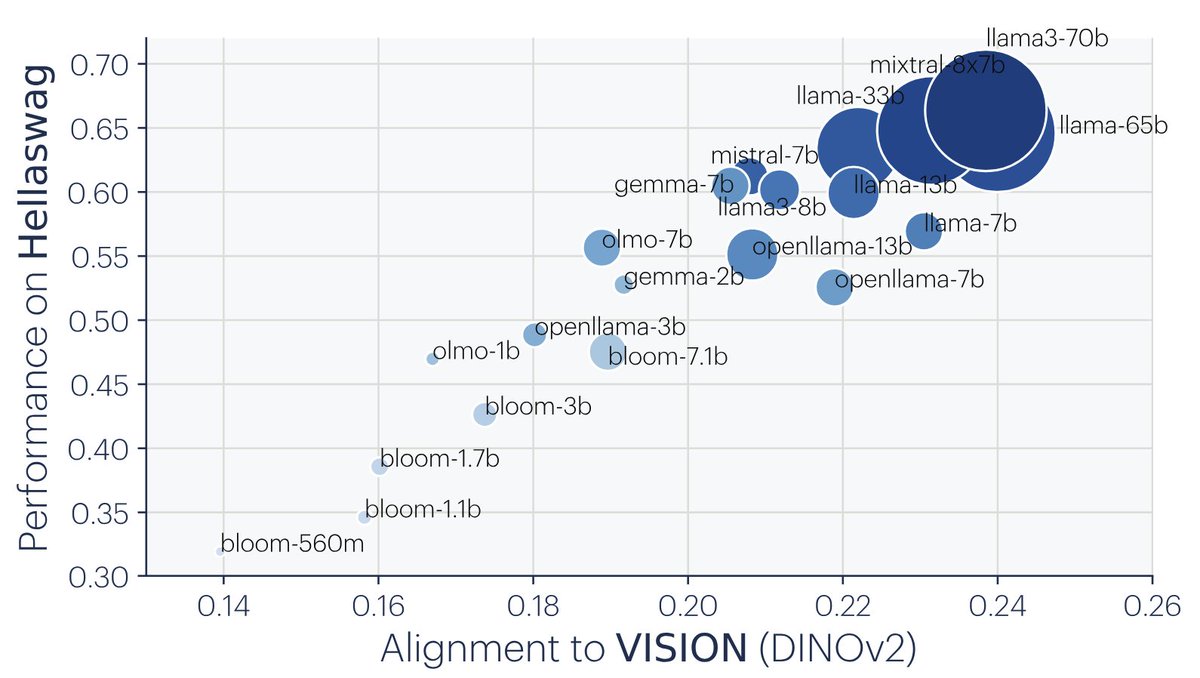

Today seems to be a fitting day for @GoogleDeepMind news, so I'm excited to announce our new preprint! Prior work suggests that text & img repr's are converging, albeit weakly. We found these same models actually have strong alignment; the inputs were too impoverished to see it!

Sakana AI’s CTO says he’s ‘absolutely sick’ of transformers, the tech that powers every major AI model. “You should only do the research that wouldn’t happen if you weren’t doing it.” (@thisismyhat) 🧠 @YesThisIsLion venturebeat.com/ai/sakana-ais-…