@i2cjak The midwit constructs elaborate networks of tubes while the genius knows that we can just dig more wells

thomasg.eth

260 posts

@thomasg_eth

Student of consciousness and coordination. Building open source VTOL aircraft @arrowair_

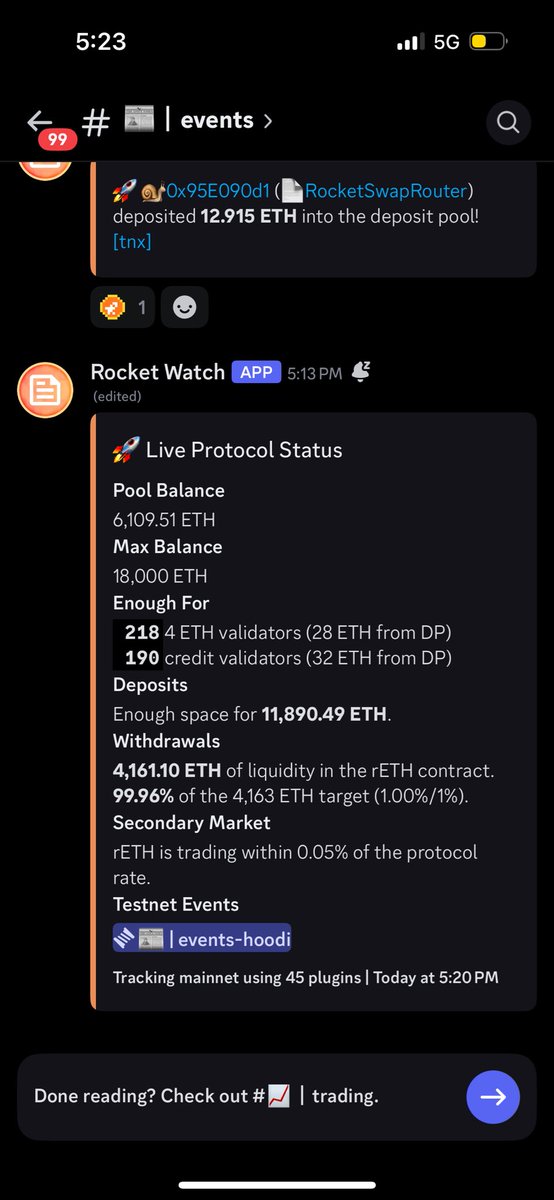

Yummy Yummy in the @Rocket_Pool tummy

The beautiful thing about recording with an old Sony camcorder is that it forces you into what I call digital realism, and it's exactly what it sounds like. December on Camcorder

Feels like this went largely unnoticed, but it’s an important read as OpenAI starts to have an unnerving attitude about its financing

The rocket is stacked. ✅ The Orion spacecraft with its launch abort system is stacked atop the Space Launch System rocket. Launch of the Artemis II mission around the Moon is targeted for early next year.