Tim Adelmann

587 posts

@tiGGu

#Digital #Native & #Innovation Enthusiast. #Design Thinker. Software Developer. Keynote Speaker. CTO @ MEETYOO

Berlin, Deutschland · Joined December 2009

600 Following · 152 Followers

@MikeRyanDev Interesting. I've built my own compiler for streamed Vue components, with loading props, slots, text replacements, and a bunch of other features.

I see Hashbrown is only for React/Angular?

Congrats to the Vercel team for getting this out! Hashbrown has been doing this for 9 months, with a better dev experience around streaming props into components.

Check it out: hashbrown.dev

Guillermo Rauch @rauchg

Glimpse of a world of fully generative interfaces. AI → JSON → UI: github.com/vercel-labs/js…
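
The "AI → JSON → UI" idea in a nutshell: the model emits a JSON tree, and the app maps each node type to a component it already knows. Below is a minimal sketch with a made-up node schema (`UINode`, `render`, and `escapeHtml` are hypothetical, not the json-render or Hashbrown formats), rendering to an HTML string to stay dependency-free.

```typescript
// Hypothetical node schema for illustration; real libraries define their own component catalogs.
type UINode =
  | { type: "text"; value: string }
  | { type: "button"; label: string }
  | { type: "stack"; children: UINode[] };

// Model output is untrusted, so escape any text before it lands in markup.
function escapeHtml(s: string): string {
  return s
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Map each node type to a known, pre-built "component" (here just an HTML template).
function render(node: UINode): string {
  if (node.type === "text") return `<p>${escapeHtml(node.value)}</p>`;
  if (node.type === "button") return `<button>${escapeHtml(node.label)}</button>`;
  // Only "stack" is left, so TypeScript narrows `node` and `children` is available.
  return `<div>${node.children.map(render).join("")}</div>`;
}

// Pretend this string came back from a model; a real app should validate it against a schema.
const raw =
  '{"type":"stack","children":[{"type":"text","value":"Hi <there>"},{"type":"button","label":"OK"}]}';
const html = render(JSON.parse(raw) as UINode);
console.log(html); // <div><p>Hi &lt;there&gt;</p><button>OK</button></div>
```

Streaming props into components, as Hashbrown and the Vue compiler mentioned above do, would additionally mean rendering partially parsed JSON as it arrives; that part is not covered by this sketch.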

@standupmaths please make an awesome video to explain this!

Rohan Paul @rohanpaul_ai

Another AI math landmark: GPT 5.2 Pro solved Erdős Problem #397 as well, and it was accepted by Terence Tao. AI's progress in math has huge implications, because mathematics is the shared substrate for modeling and computation across most sciences, so removing mathematical bottlenecks will multiply impact across many fields at once. So when AI solves core math problems, everything built on top speeds up.

@DennisAdriaans „Back in the day“ destructuring props made them lose reactivity… not sure if this was exclusively a Vue 2 thing…
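
A simplified model of the effect, using a hand-rolled `Proxy` rather than Vue's actual reactivity system (`trackReads` is a hypothetical helper): destructuring copies the value out once, so later reads no longer pass through the tracked object. As far as I know this also applied to `setup(props)` in Vue 3, where the docs suggested `toRefs(props)`, until Vue 3.5 made destructuring in `<script setup>` reactive via a compile-time rewrite.

```typescript
// Toy model of prop reactivity for illustration only: this is NOT how Vue is implemented.
type Props = { count: number };

// Wrap an object so every property read is reported (a real framework would record a dependency here).
function trackReads<T extends object>(target: T, onRead: (key: PropertyKey) => void): T {
  return new Proxy(target, {
    get(obj, key, receiver) {
      onRead(key);
      return Reflect.get(obj, key, receiver);
    },
  });
}

const reads: PropertyKey[] = [];
const props: Props = trackReads({ count: 1 }, (key) => {
  reads.push(key);
});

// Destructuring reads `count` exactly once and copies the current value into a local.
const { count } = props;

// The parent later passes a new value (writes fall through to the target; no `set` trap needed).
props.count = 2;

console.log(count); // 1 (stale snapshot, disconnected from props)
console.log(props.count); // 2 (read through the proxy, so it is tracked and current)
```

In this model, reading `props.count` at the point of use is what keeps the value live; the destructured `count` is just a number.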

Tim Adelmann retweeted

@0xDevShah $20B also seems cheap for Meta or Microsoft; I don't know about Anthropic, though.

I wondered why nobody was picking this off the market. They seem to be able to make any LLM much faster!

@YBenlemlih @typescript @mattpocockuk For me, having both interfaces and types, which are basically the same, is a major design flaw of TypeScript.

In other languages, interfaces have a clear role: they define behavior and often end in -able, like „serializable“.

I personally always use types, never interfaces.
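
To make the comparison concrete, a small sketch (names like `PointT`, `Vec2`, and `AppConfig` are made up for illustration) of where the two overlap and the two places they clearly differ: only a `type` alias can name a union directly, and only an `interface` merges across declarations. A class can `implements` either one, so the "-able" contract style works with types too.

```typescript
// For plain object shapes, `type` and `interface` are interchangeable (structural typing).
type PointT = { x: number; y: number };
interface PointI {
  x: number;
  y: number;
}
const p: PointT = { x: 1, y: 2 };
const q: PointI = p; // assignable: same shape, so effectively the same type

// An "-able" behavior contract, written both ways; a class can implement either.
interface Serializable {
  serialize(): string;
}
type SerializableT = { serialize(): string };

class Vec2 implements Serializable, SerializableT {
  x: number;
  y: number;
  constructor(x: number, y: number) {
    this.x = x;
    this.y = y;
  }
  serialize(): string {
    return `(${this.x}, ${this.y})`;
  }
}

// Only `type` can name a union directly.
type Shape = { kind: "circle"; r: number } | { kind: "square"; side: number };

function area(s: Shape): number {
  return s.kind === "circle" ? Math.PI * s.r * s.r : s.side * s.side;
}

// Only `interface` supports declaration merging: a second declaration adds members.
interface AppConfig {
  url: string;
}
interface AppConfig {
  retries: number;
}
const cfg: AppConfig = { url: "https://example.com", retries: 3 };

console.log(q.x, new Vec2(3, 4).serialize(), area({ kind: "square", side: 3 }), cfg.retries);
```

Declaration merging is the main practical reason to reach for `interface` (for example, augmenting a library's types); outside of that, picking one and sticking with it is largely a style choice.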

Which is better?

@typescript types or interfaces?

I personally prefer types, but I am sure @mattpocockuk has his own opinion here!

@tekbog Jakob‘s law: „Users spend most of their time on other sites. This means that users prefer your site to work the same way as all the other sites they already know.“ lawsofux.com/jakobs-law/

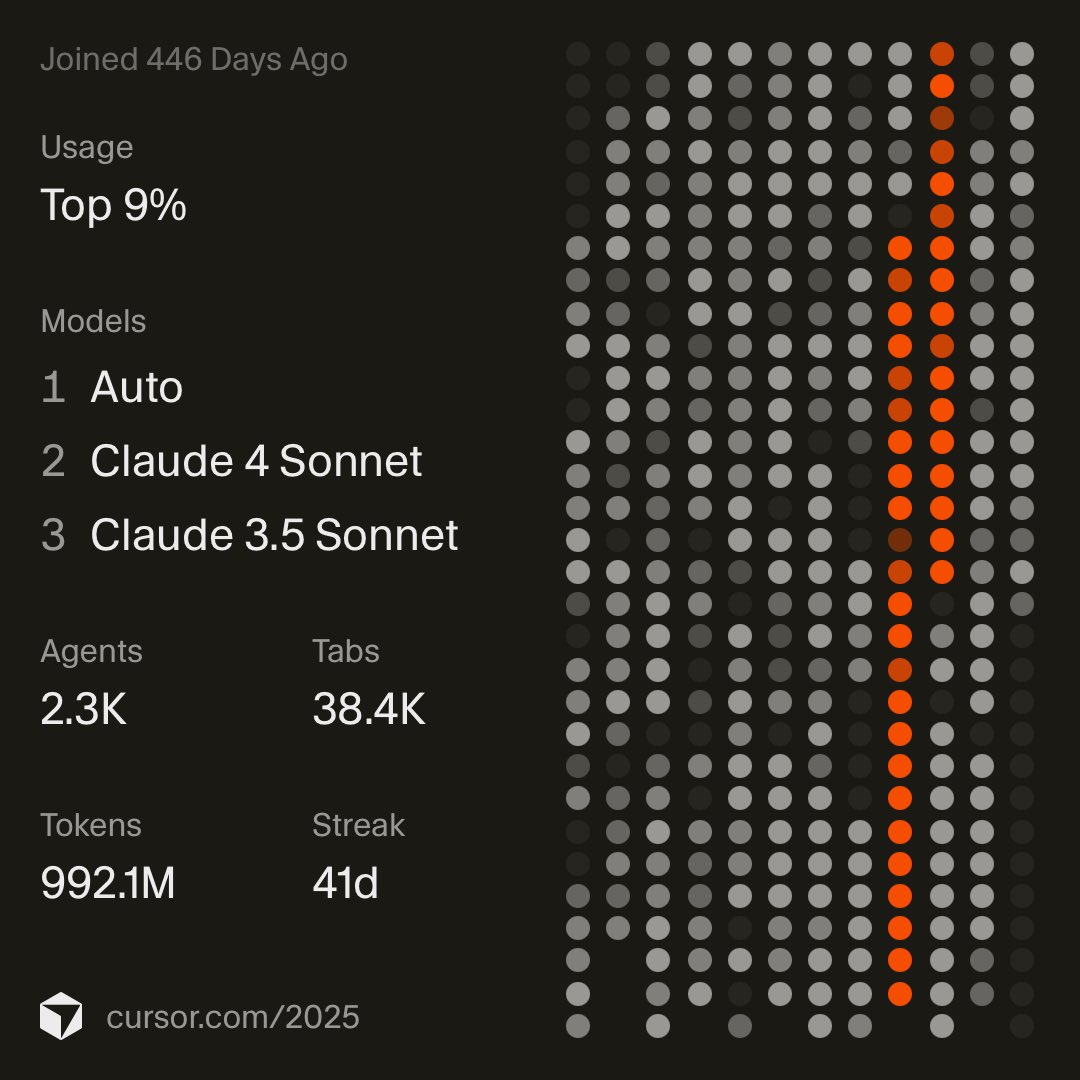

My year in code. @cursor_ai

There are a couple of days left in 2025. I'm confident I will reach 1T by the end of the year!

The most amazing thing this year was the rate of improvement of models and IDEs!

Thank you

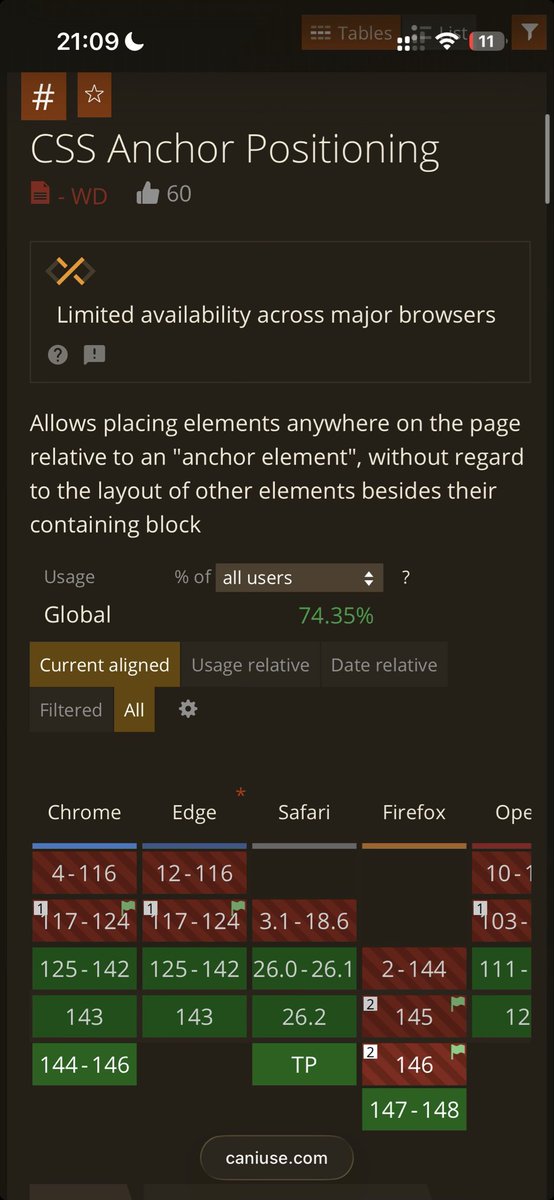

CSS anchor positioning is around the corner.

Finally, one of the most anticipated APIs on the web.

I guess @jaffathecake is to be thanked for the Firefox support.

The lesson: The smartest AI isn't a single genius, it's a committee of experts. This has huge implications for building AI products.

Read the analysis:

contenthub.meetyoo.com/resources/ki-f…
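
A minimal sketch of one common reading of "committee of experts", majority voting across several models, assuming each expert simply returns an answer string. The stub experts and `majorityVote` are hypothetical and not necessarily the setup the analysis describes; mixture-of-experts routing inside a single model is a different technique.

```typescript
// Hypothetical stand-in for a model call: question in, answer out.
type Expert = (question: string) => string;

// Ask every expert and return the most common answer (ties go to the answer seen first).
function majorityVote(experts: Expert[], question: string): string {
  const counts = new Map<string, number>();
  for (const expert of experts) {
    const answer = expert(question).trim();
    counts.set(answer, (counts.get(answer) ?? 0) + 1);
  }
  let best = "";
  let bestCount = 0;
  // Map iterates in insertion order, and the strict ">" keeps the earliest answer on ties.
  counts.forEach((n, answer) => {
    if (n > bestCount) {
      best = answer;
      bestCount = n;
    }
  });
  return best;
}

// Stub experts: two agree, one is off by one.
const experts: Expert[] = [() => "42", () => "42", () => "41"];
console.log(majorityVote(experts, "What is 6 * 7?")); // 42
```

Voting only helps when the experts' mistakes are not all the same; a committee of identical models that share a blind spot will agree on the wrong answer.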
