Tihomir Mateev

2.9K posts

@tishun

Sr. software engineer @ Redis, tech enthusiast, views are my own

Bulgaria · Joined November 2010

750 Following · 207 Followers

Tihomir Mateev reposted

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.

🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models.

🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice.

Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today!

📄 Tech Report: huggingface.co/deepseek-ai/De…

🤗 Open Weights: huggingface.co/collections/de…

1/n

Tihomir Mateev reposted

Anthropic said Mythos was too dangerous to release. Then four random guys in a Discord gained access on day one by guessing the URL...

This is pretty insane:

→ Group in a private Discord guessed the endpoint from Anthropic's naming conventions

→ They figured out the conventions from the leak in the Mercor breach three weeks ago

→ Used a contractor's legit eval credentials to walk in

→ Have been using it ever since to build simple websites

The AI that finds zero-days in every operating system on earth was defeated by address bar autocomplete... big yikes

Bloomberg @business

Anthropic's Mythos has been accessed by a small group of unauthorized users, raising questions about control of the AI model bloomberg.com/news/articles/…

Tihomir Mateev reposted

Here's my update to the broader community about the ongoing incident investigation. I want to give you the rundown of the situation directly.

A Vercel employee got compromised via the breach of an AI platform customer called Context.ai that he was using. The details are being fully investigated.

Through a series of maneuvers that escalated from our colleague’s compromised Vercel Google Workspace account, the attacker got further access to Vercel environments.

Vercel stores all customer environment variables fully encrypted at rest. We have numerous defense-in-depth mechanisms to protect core systems and customer data. We do have a capability however to designate environment variables as “non-sensitive”. Unfortunately, the attacker got further access through their enumeration.

We believe the attacking group to be highly sophisticated and, I strongly suspect, significantly accelerated by AI. They moved with surprising velocity and in-depth understanding of Vercel.

At the moment, we believe the number of customers with security impact to be quite limited. We’ve reached out with utmost priority to the ones we have concerns about. All of our focus right now is on investigation, communication to customers, enhancement of security measures, and sanitization of our environments. We’ve deployed extensive protection measures and monitoring. We’ve analyzed our supply chain, ensuring Next.js, Turbopack, and our many open source projects remain safe for our community.

The recommendation for all Vercel customers is to follow the Security Bulletin closely (vercel.com/kb/bulletin/ve…). My advice to everyone is to follow the best practices of security response: secret rotation, monitoring access to your Vercel environments and linked services, and ensuring the proper use of the sensitive env variables feature.

In response to this, and to aid in the improvement of all of our customers’ security postures, we’ve already rolled out new capabilities in the dashboard, including an overview page of environment variables, and a better user interface for sensitive env var creation and management. As always, I’m totally open to your feedback.

We’re working with elite cybersecurity firms, industry peers, and law enforcement. We’ve reached out to Context to assist in understanding the full scale of the incident, in an effort to protect other organizations and the broader internet. I also want to thank the Google Mandiant team for their active engagement and assistance.

It’s my mission to turn this attack into the most formidable security response imaginable. It’s always been a top priority for me. Vercel employs some of the most dedicated security researchers and security-minded engineers in the world. I commit to keeping you updated and rolling out extensive improvements and defenses so you, our customers and community, can have the peace of mind that Vercel always has your back.

Tihomir Mateev reposted

Amazing how quickly we are ready to let go of our individuality, something we've actually fought to have for many years

Linus ✦ Ekenstam @LinusEkenstam

Huawei camera AI now recommends poses before you click the shutter button

Tihomir Mateev reposted

Notifications for deleted messages shouldn't remain in any OS notification database, and we've asked Apple to address this.

In the meantime, you can prevent any preview text from your Signal messages from appearing in your notifications.

Signal Settings > Notifications > Show “No Name or Content”

404media.co/fbi-extracts-s…

Tihomir Mateev reposted

honey wake up! the new @redmonk language ratings just dropped!

redmonk.com/sogrady/2026/0…

Tihomir Mateev reposted

🚨BREAKING: Two Academics Just Proved That AI Training Is Copyright Infringement In The US And Europe.

And Neither AI Company Has A Legal Defense That Survives It.

In America, AI companies claim "fair use."

In Europe, they claim "text and data mining" exceptions.

A landmark interdisciplinary study combining law and computer science just argued both defenses are invalid.

Here's why.

Fair use in the US requires transformation.

The argument AI companies make: we're not copying books, we're learning from them. That's transformative.

The paper's counter: generative AI training is fundamentally different from text and data mining. The entire purpose of training is to encode the content, not to analyze it. When a model can reproduce 95% of a novel on demand, that's not transformation. That's storage.

The European TDM exception requires that the data not be retained after analysis.

The paper's counter: AI models retain training data in their weights indefinitely. By design. That's the entire point of training. The exception was written for temporary processing, not permanent encoding.

Both defenses. Both invalid. By the same core argument.

And here's the number that proves it:

Researchers at Stanford extracted 95.8% of Harry Potter from Claude word-for-word.

Gemini output 9,070 consecutive verbatim words in a single response without a jailbreak.

These aren't patterns being recalled.

These are copies being retrieved.

The legal frameworks AI companies built their businesses on were written before this technology existed.

Courts in 2025 are starting to figure that out.

Two judges in two separate US cases ruled AI training was "transformative" and protected by fair use.

But that was before the Stanford extraction paper landed.

Before 95.8% came out of a model on a $55 budget.

The ground is moving. Faster than the courts.

Tihomir Mateev reposted

tinyurl.com/3tur5m9u - for those of you who use AI daily and are wondering why your token usage is high

Tihomir Mateev reposted

Obsidian is good

Obsidian is small team

Obsidian probably very nice place to work at

Obsidian @obsdmd

The Obsidian team is growing from three engineers to four engineers. Competitive SF salary. Fully remote, live anywhere. Apply below.

Tihomir Mateev reposted

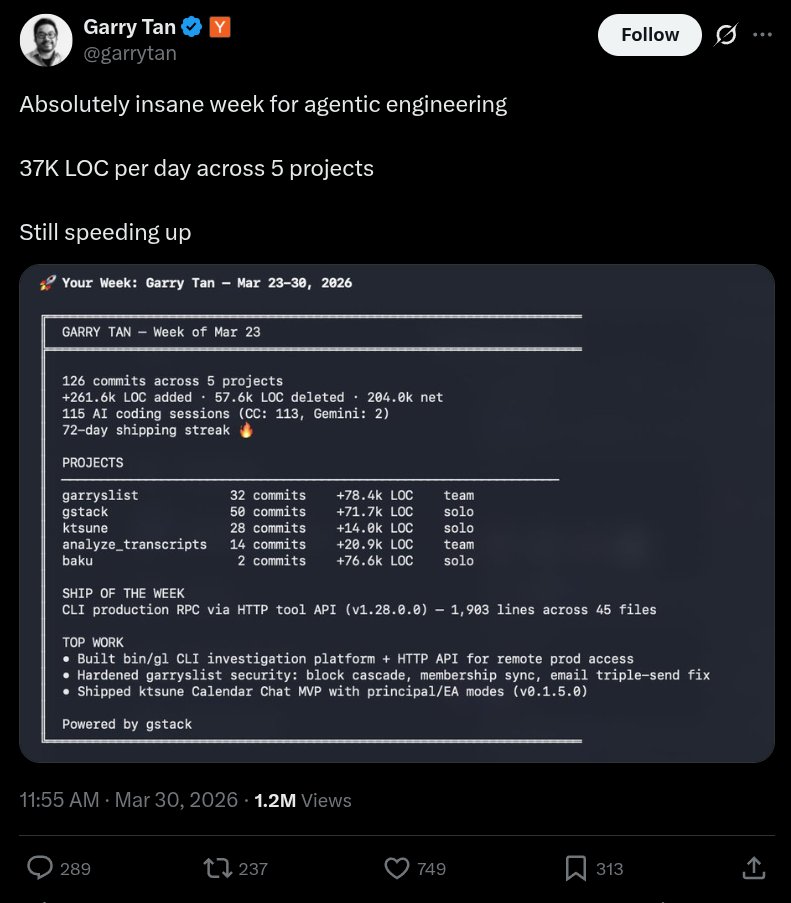

so... I audited Garry's website after he bragged about 37K LOC/day and a 72-day shipping streak.

here's what 78,400 lines of AI slop code actually looks like in production.

a single homepage load of garryslist.org downloads 6.42 MB across 169 requests.

for a newsletter-blog-thingy.

1/9🧵

Garry Tan @garrytan

Absolutely insane week for agentic engineering 37K LOC per day across 5 projects Still speeding up

Tihomir Mateev reposted

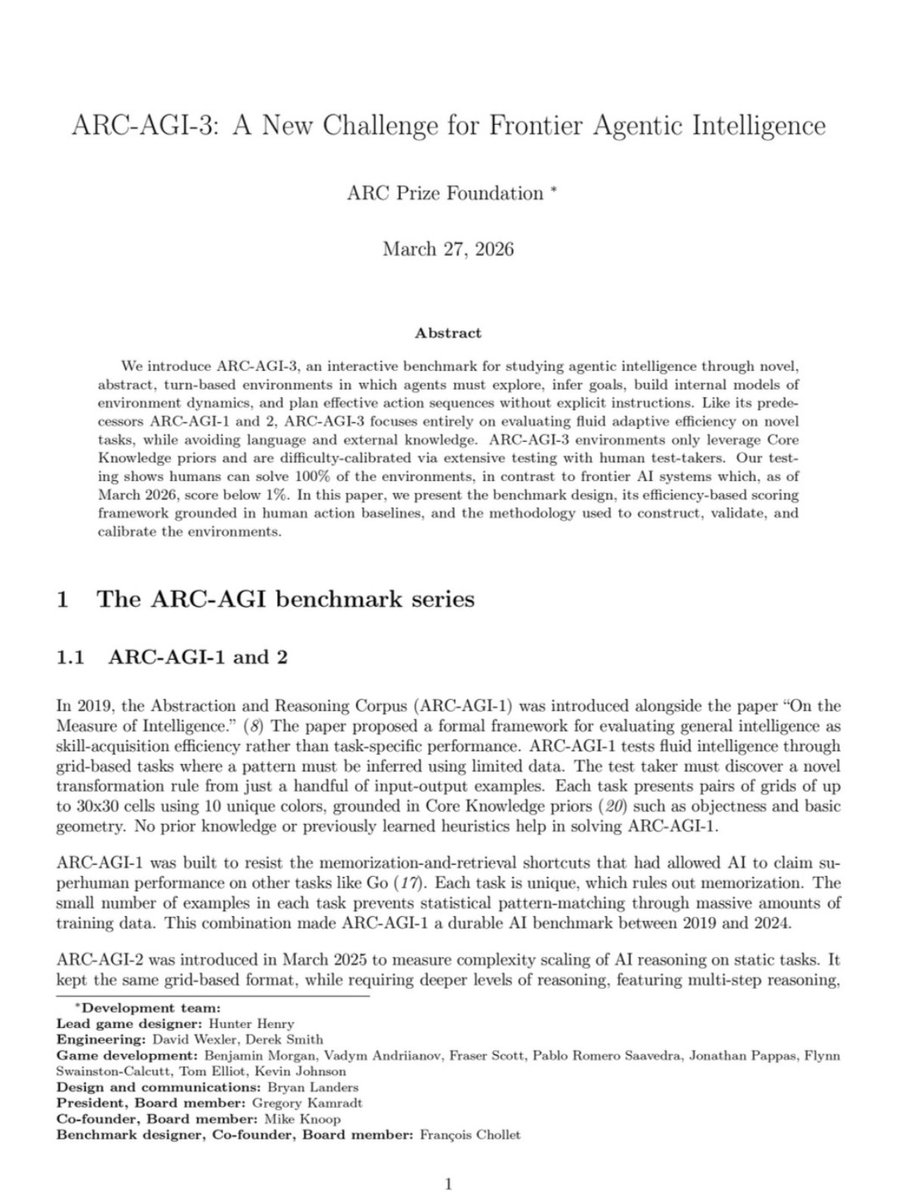

Humans: 100%

Gemini 3.1 Pro: 0.37%

GPT 5.4: 0.26%

Opus 4.6: 0.25%

Grok-4.20: 0.00%

François Chollet just released ARC-AGI-3 -- the hardest AI test ever created.

135 novel game environments. No instructions. No rules. No goals given.

Figure it out or fail.

Untrained humans solved every single one. Every frontier AI model scored below 1%.

Each environment was handcrafted by game designers. The AI gets dropped in and has to explore, discover what winning looks like, and adapt in real time.

The scoring punishes brute force. If a human needs 10 actions and the AI needs 100, the AI doesn't get 10%. It gets 1%. You can't throw more compute at this.
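The one data point in this post (10 human actions versus 100 AI actions giving 1%, not 10%) is consistent with squaring the action-efficiency ratio. The sketch below assumes exactly that rule. It is not the official ARC-AGI-3 scoring formula, which the post does not spell out, and the function name `efficiency_score` is hypothetical.

```python
def efficiency_score(human_actions: int, ai_actions: int) -> float:
    """Score in [0, 1] under an ASSUMED rule: (human / ai) squared.

    The squared ratio is inferred from the single example in the post;
    the real benchmark may compute its score differently.
    """
    if human_actions <= 0 or ai_actions <= 0:
        raise ValueError("action counts must be positive")
    # Cap at 1.0 so an agent that needs fewer actions than the human
    # baseline does not score above 100% (also an assumption).
    ratio = min(1.0, human_actions / ai_actions)
    return ratio ** 2


if __name__ == "__main__":
    # The example from the post: 10 human actions, 100 AI actions.
    print(f"linear ratio:  {10 / 100:.0%}")
    print(f"squared ratio: {efficiency_score(10, 100):.0%}")
```

Under this assumed rule, halving the number of actions quadruples the score, so extra attempts bought with more compute cost far more than they would under a linear ratio.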

For context: ARC-AGI-1 is basically solved. Gemini scores 98% on it. ARC-AGI-2 went from 3% to 77% in under a year. Labs spent millions training on earlier versions.

ARC-AGI-3 resets the entire scoreboard to near zero.

The benchmark launched live at Y Combinator with a fireside between Chollet and Sam Altman.

$2M in prizes on Kaggle. All winning solutions must be open-sourced.

Scaling alone will not close this gap. We are nowhere near AGI.

(Link in the comments)

Tihomir Mateev reposted

A hacker group just compromised one of the most widely used security scanners in the world, and used it to steal half a million credentials from companies that trusted it to keep them safe.

On March 19, a threat actor group called TeamPCP injected credential-stealing malware into Trivy, a popular open-source vulnerability scanner maintained by Aqua Security. Trivy is used by thousands of companies to scan their code and infrastructure for security flaws. The attackers compromised 75 GitHub Action tags, the Trivy Docker images, and related CI/CD pipelines, meaning every company running automated security scans through Trivy was unknowingly executing the attackers' code.

The malware harvested SSH keys, cloud credentials, Kubernetes secrets, cryptocurrency wallets, and .env files from every environment it touched. The stolen data was encrypted and exfiltrated to attacker-controlled servers.

But the attack didn't stop there. Using credentials stolen from Trivy's CI/CD pipeline, TeamPCP then backdoored LiteLLM, a widely used Python framework for managing AI model APIs. Two malicious versions (1.82.7 and 1.82.8) were pushed to PyPI, the main Python package repository. The second version was designed to execute automatically on every Python process startup in the environment, no user interaction required. From there, it deployed privileged pods across entire Kubernetes clusters and installed persistent backdoors on every node.
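For readers who want to compare an environment against the two LiteLLM versions named above, here is a minimal sketch using Python's standard `importlib.metadata`. The version strings come from this post. The helper names (`is_compromised`, `installed_version`, `check_litellm`) are hypothetical, and the check covers only these two versions, not any other indicator of compromise.

```python
from importlib import metadata

# Versions named in the post as malicious; not an authoritative advisory list.
COMPROMISED_LITELLM = frozenset({"1.82.7", "1.82.8"})


def is_compromised(version: str, bad_versions=COMPROMISED_LITELLM) -> bool:
    """Return True if the version string exactly matches a listed version."""
    return version.strip() in bad_versions


def installed_version(dist_name: str = "litellm"):
    """Return the installed version of a distribution, or None if absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


def check_litellm() -> str:
    """Describe the state of litellm in the current environment."""
    version = installed_version()
    if version is None:
        return "litellm is not installed in this environment"
    if is_compromised(version):
        return f"litellm {version} matches a version reported as malicious"
    return f"litellm {version} is not one of the versions named in the post"


if __name__ == "__main__":
    print(check_litellm())
```

Because the post says the second version runs on every Python process startup, starting Python inside a suspect environment is itself risky. A safer use of `is_compromised` is to feed it version strings read from lock files or image manifests on a separate, clean machine.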

The attackers also pushed compromised Docker images of Trivy (versions 0.69.4, 0.69.5, 0.69.6) to Docker Hub and compromised dozens of npm packages with a self-spreading worm called CanisterWorm. They even defaced 44 internal Aqua Security repositories in a scripted 2-minute burst, renaming them all with "TeamPCP Owns Aqua Security."

According to the International Cyber Digest, which is in direct contact with the attackers, TeamPCP claims to have exfiltrated 300 GB of compressed credentials and is actively working through them. The LiteLLM compromise alone reportedly yielded half a million stolen credentials. The group says it is currently extorting several multi-billion-dollar companies.

Each compromised environment yielded credentials that unlocked the next target. The pivot from CI/CD pipelines to production Python packages running in Kubernetes clusters was deliberate escalation. Security researchers say this campaign is "almost certainly not over."

This is what a modern supply chain attack looks like. The tools companies trust to secure their infrastructure become the attack vector. The irony is brutal: the security scanner was the vulnerability.

Tihomir Mateev reposted