Dan Fu

@realDanFu

VP, Kernels @togethercompute. Assistant Professor @ucsd_cse. Looking for talented kernel engineers and performance engineers!

Congrats to the @cursor_ai team on Composer 2.5 — a huge milestone for agentic coding models. Together AI, the AI Native Cloud, is proud to partner on this launch. Composer 2.5 is pushing the frontier for coding agents and turning heads for its speed and quality. Excited to keep building with the Cursor team!

Introducing Composer 2.5, our most powerful model yet. It's more intelligent, better at sustained work on long-running tasks, and more reliable at following complex instructions. For the next week, we’re doubling the included usage of the model.

A milestone for Pearl Research Labs: our first major enterprise partnership is live with Together AI. @togethercompute’s inference platform is an ideal demonstration of @prlnet's value proposition: one of the world’s most advanced hyperscalers running AI workloads on Pearl’s 2-for-1 CUDA kernels, turning inference into ¶PRL coins, and reducing consumer LLM price per token. Excited for what we’ll build together.

Introducing Gemma-4-31B-it-Pearl on Together AI, Pearl Research Labs’ instruction-tuned checkpoint of Gemma 4 31B, powered by @prlnet's Proof of Useful Work protocol. AI natives can now use this Pearl model as a serverless inference endpoint on Together AI, at a discount of 25% or more.

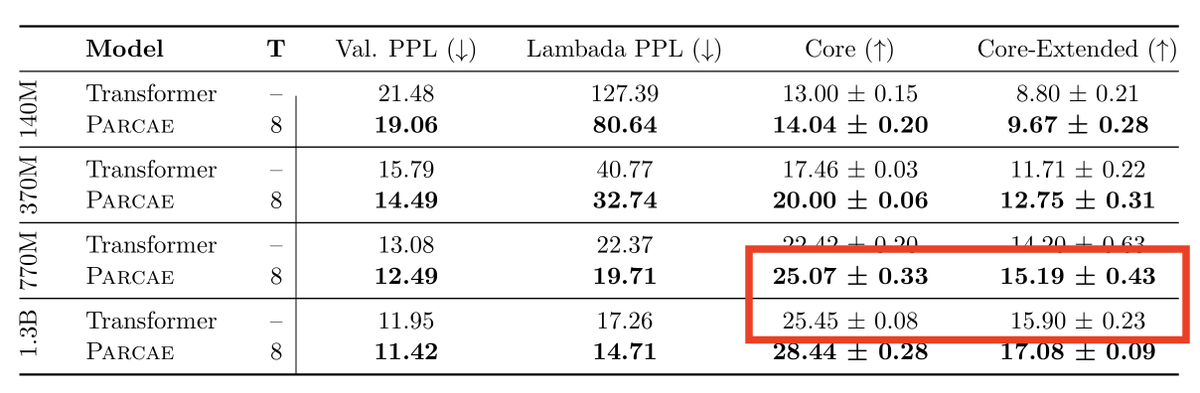

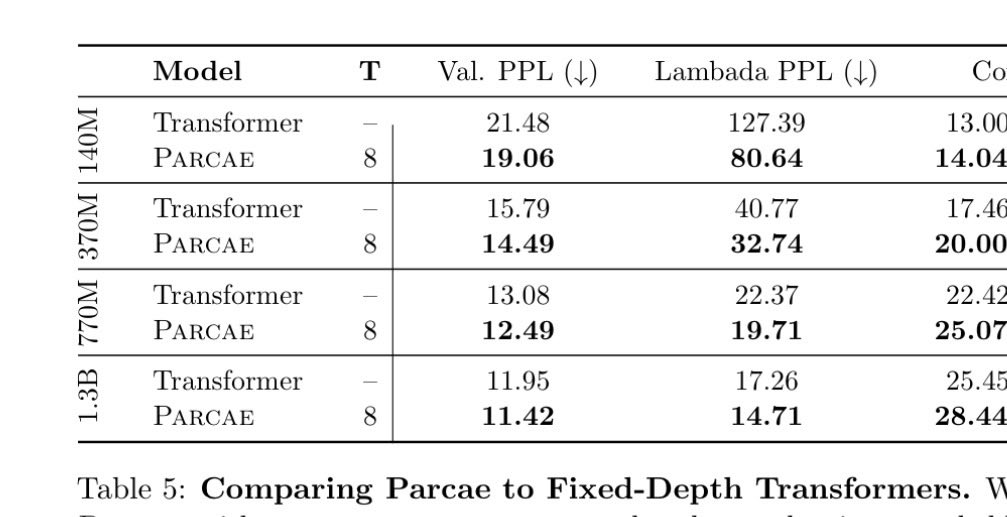

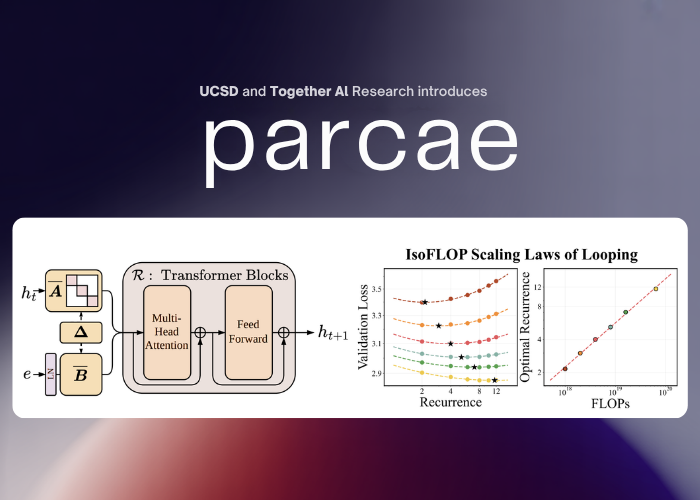

We’ve been thinking a lot about scaling laws, wondering if there is a more effective way to scale FLOPs without increasing parameters. Turns out the answer is YES – by looping blocks of layers during training. We find that predictable scaling laws exist for layer looping, allowing us to use looping to achieve the quality of a Transformer twice the size. Our scaling laws suggest that for a fixed parameter budget, data and looping should be increased in tandem! 🧵👇

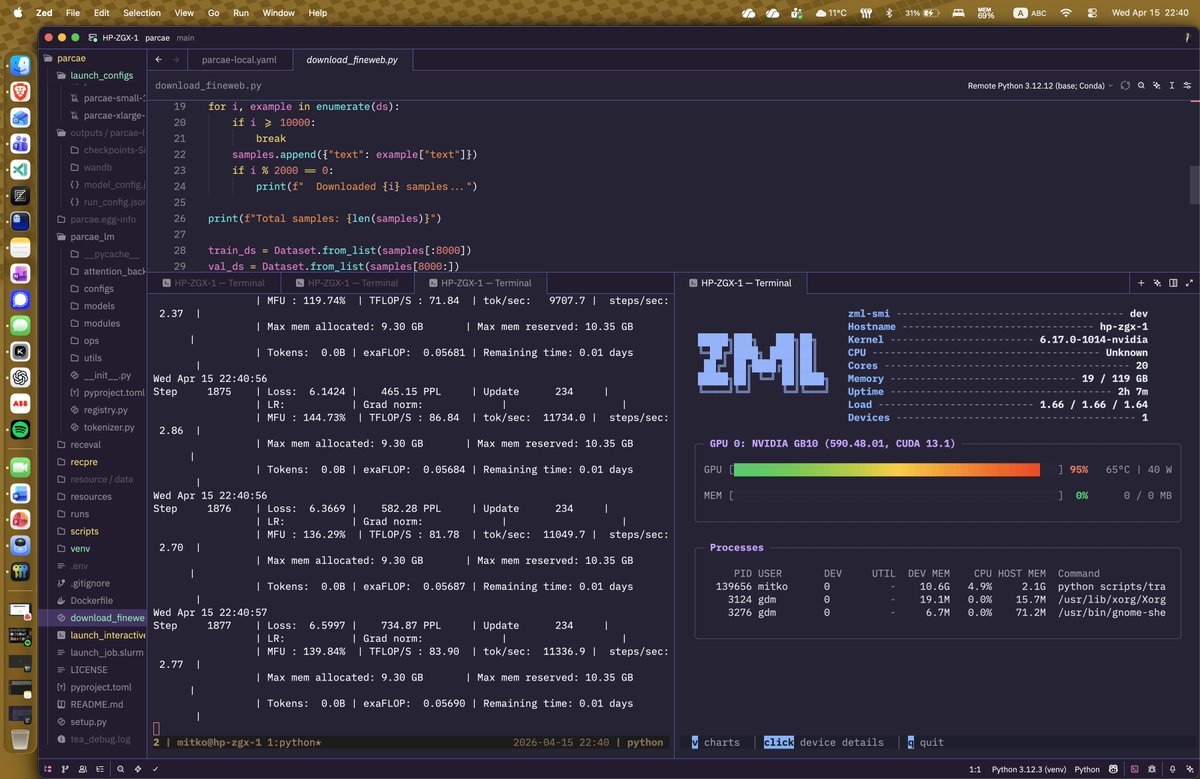

📢 Super excited to announce Parcae! We've been thinking about scaling laws and the "right" way to get more FLOPs. Turns out layer looping - with the right parameterization - gives you a new axis to scale!

Parcae matches Transformers 2x their size (w/ the same data), and outperforms prior formulations of looped models. But - you need the right parameterization to get these gains against strong Transformer baselines.

Looped models are famously unstable to train, with tons of loss spikes and hyperparameter sensitivity. The main technical challenge with looped models is residual explosion - if you're passing the activations through the same layers over and over, some otherwise benign parameterizations cause huge instability.

Our key idea: we can think of the residual stream of a model as a time-varying dynamical system - the same fundamentals behind SSMs like Mamba and S4. Then a few modest modifications to classic Transformers (stable diagonalization of injection params, LN before embeddings) can stabilize the looped models.

The resulting models are more stable to train, but also reach higher quality. It's strong enough to start to derive new scaling laws. Classically - we know you need to scale parameters with data to be FLOP-optimal. With Parcae, we find a third axis - given fixed parameters, you additionally want to scale FLOPs by looping as you scale data.

Super excited to see how these ideas hold, and what we can do with looped models! Check out @hayden_prairie's great explainer thread below, and see links for our paper, blog, and models. Joint w/ @zacknovack and @BergKirkpatrick, and a fun collab between @togethercompute and my lab at @ucsd_cse. Enjoy!

We’ve been thinking a lot about scaling laws, wondering if there is a more effective way to scale FLOPs without increasing parameters. Turns out the answer is YES – by looping blocks of layers during training. We find that predictable scaling laws exist for layer looping, allowing us to use looping to achieve the quality of a Transformer twice the size. Our scaling laws suggest that for a fixed parameter budget, data and looping should be increased in tandem! 🧵👇
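
To make the "residual explosion" point concrete, here is a toy sketch of a looped residual update viewed as a linear dynamical system. It is not the Parcae parameterization: the update rule `h <- decay * h + x`, the function names, and the `exp(-softplus(raw))` constraint are all assumptions chosen for illustration, borrowed from the way diagonal SSMs are commonly kept stable. It only shows why a diagonal multiplier constrained to (0, 1) keeps the looped state bounded, while an unconstrained one can grow with every loop.

```python
import numpy as np

def softplus(z):
    # Numerically stable log(1 + exp(z)).
    return np.logaddexp(0.0, z)

def looped_norms(decay, x, n_loops):
    """Iterate h <- decay * h + x (elementwise) and record ||h|| after each loop.

    `decay` is a hypothetical diagonal "carry" multiplier and `x` is a
    hypothetical input re-injected on every loop; neither is from the paper.
    """
    h = np.zeros_like(x)
    norms = []
    for _ in range(n_loops):
        h = decay * h + x
        norms.append(float(np.linalg.norm(h)))
    return norms

rng = np.random.default_rng(0)
d = 16
x = rng.normal(size=d)
raw = rng.normal(size=d)  # unconstrained "learned" parameters

# Benign-looking but unconstrained: every entry is >= 1, so the state grows.
unconstrained = 1.0 + 0.1 * np.abs(raw)

# Constrained: exp(-softplus(raw)) always lies strictly inside (0, 1).
stable = np.exp(-softplus(raw))

naive = looped_norms(unconstrained, x, 32)
constrained = looped_norms(stable, x, 32)

# Geometric-series bound on the constrained state: |h_i| < |x_i| / (1 - a_i).
bound = float(np.linalg.norm(x / (1.0 - stable)))

print(f"unconstrained: loop 1 norm {naive[0]:.2f}, loop 32 norm {naive[-1]:.2f}")
print(f"constrained:   loop 32 norm {constrained[-1]:.2f} (bound {bound:.2f})")
```

In this toy the constrained state converges toward a fixed point no matter how many loops are run, which is the property a looped model needs when the loop count is scaled up alongside data.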