Mark Valladares

@tzenmark

TennisCentric Approach to Consciousness, Rock & Roll, Complementarity (not instant karma). Complexity... 'a sojourner in civilized life again'.

That paper doesn’t say anesthesia can’t be explained by membrane proteins; it questioned whether any single target explains everything. Since then, we’ve got overwhelming evidence that anesthesia works through multiple classical mechanisms across known receptors and networks, with direct experimental proof. What you’re pointing to on the microtubule side is still modeling, lab analogs, and correlations, not a demonstrated mechanism in living brains. There’s a big difference between “we don’t fully understand every detail yet” and “therefore it must be quantum microtubules.” Right now, one has causal evidence. The other doesn’t. That’s the line.

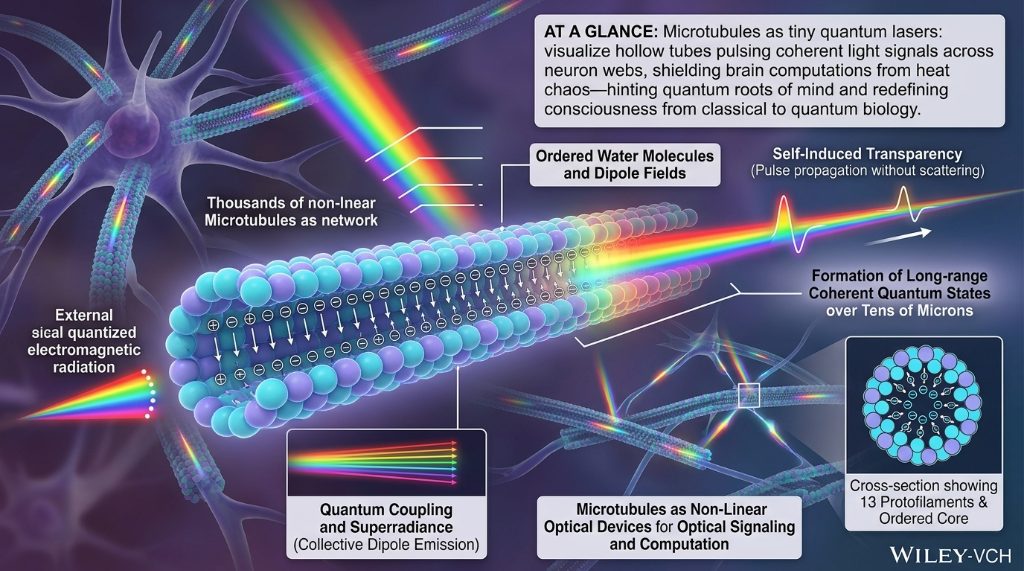

Anesthesia is NOT explained and CANNOT be explained by membrane proteins pubmed.ncbi.nlm.nih.gov/18713892/ Anesthesia IS explained by quantum effects in microtubules academic.oup.com/nc/article/202…

@StuartHameroff Time travel is impossible because it causes paradoxes, so just drop the idea; it is wrong. The same goes for wormholes (same reason). There is, simply put, only one solution to this, and that is a holographic medium. It will update instantly over any distance.

prototype of a recursive time helix calendar/history, with nested coils from centuries -> decades -> years -> days -> hours -> minutes -> seconds. labels need some work but it's the start of something. based almost entirely on @tr_babb's sketch/idea. in threejs/webgpu

A Physics-Informed Neural Network (PINN) is trying to learn a solution to the Klein-Gordon PDE.

PINNs are neural nets trained to satisfy a partial differential equation. They use a simple trick: baking the PDE residual straight into the loss. They came out of a very practical pain point. Classical PDE pipelines can be amazing, but they often demand a lot of setup work such as meshes, stencils, and stability tuning. Once you build a solver, it’s usually tied to one geometry and one discretization choice. A PINN flips the workflow. You represent the solution itself as a smooth function uᵩ(x,t) and you enforce the physics wherever you choose to sample the domain.
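To make "baking the PDE residual into the loss" concrete, here is a minimal sketch in plain Python. It rests on several assumptions that are not in the post: the Klein-Gordon equation is taken in its linear 1D form u_tt - u_xx + m²u = 0 with unit wave speed, the "network" is a hypothetical one-hidden-layer tanh model, and its second derivatives are written out by hand instead of coming from automatic differentiation as a real PINN framework would do. Only the residual term is shown; initial and boundary condition terms, and the training loop, are left out.

```python
import math
import random


def tanh_dd(z):
    """Second derivative of tanh(z): -2 tanh(z) (1 - tanh(z)^2)."""
    t = math.tanh(z)
    return -2.0 * t * (1.0 - t * t)


def u_and_residual(params, x, t, m):
    """Return (u, r) at the point (x, t).

    Assumed model (illustrative only):
        u(x, t) = sum_j c_j * tanh(a_j * x + b_j * t + d_j)
    Assumed PDE (linear 1D Klein-Gordon, unit wave speed):
        r = u_tt - u_xx + m^2 * u
    params is a list of (c, a, b, d) tuples, one per hidden unit.
    """
    u = u_xx = u_tt = 0.0
    for c, a, b, d in params:
        z = a * x + b * t + d
        u += c * math.tanh(z)
        u_xx += c * a * a * tanh_dd(z)  # chain rule: d2/dx2 brings out a^2
        u_tt += c * b * b * tanh_dd(z)  # chain rule: d2/dt2 brings out b^2
    return u, u_tt - u_xx + m * m * u


def pde_loss(params, points, m):
    """Mean squared PDE residual over the sampled collocation points."""
    total = sum(u_and_residual(params, x, t, m)[1] ** 2 for x, t in points)
    return total / len(points)


# Collocation points: sampled freely in the domain, no mesh required.
rng = random.Random(0)
points = [(rng.uniform(-1.0, 1.0), rng.uniform(0.0, 1.0)) for _ in range(200)]

# Hypothetical parameters; a trainer would adjust these to shrink the loss.
params = [(0.5, 1.0, 1.0, 0.0), (-0.3, 2.0, -2.0, 0.1)]

print("residual loss, m = 0:", pde_loss(params, points, m=0.0))
print("residual loss, m = 1:", pde_loss(params, points, m=1.0))
```

Because every unit here has a² = b², the model is a sum of travelling waves and satisfies the massless case (m = 0), so that loss vanishes, while the m = 1 loss is positive. A training loop would move the parameters to reduce that second number at the sampled points.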

Mathematics Is All You Need: A Potential Blueprint for AGI — Compacted Edition We prove that large language models are lattice gauge theories. By extracting a 16-dimensional fiber bundle from transformer hidden states and computing its gl(4,ℝ) Lie algebra, we discover that attention heads function as gauge bosons, transformer computation undergoes a deconfinement phase transition at 67% network depth, and the model's entire self-knowledge resides in a 10-dimensional "dark" Casimir subspace invisible to standard readout. Using only 20 behavioral probes and zero additional training, we push Qwen-32B from 82.2% to 94.97% on ARC-Challenge — establishing a dark mode scaling law that predicts gl(6,ℝ) surgery will achieve 98.7%. We identify a Lyapunov–accuracy anti-correlation revealing the model's deepest attractors are its wrong attractors: correctness requires escaping the abstraction basin into grounded deference. This 10-page compacted edition distills 459 pages of original research into the core experimentally verified results with 9 inline figures. 190 patents filed. Proprioceptive AI, Inc. — Logan Matthew Napolitano — 19- March 2026 zenodo.org/records/191208…