Evan Owen

1.7K posts

@ulmentflam

Co-Founder & CTO of QWERKY AI. My opinions are my own.

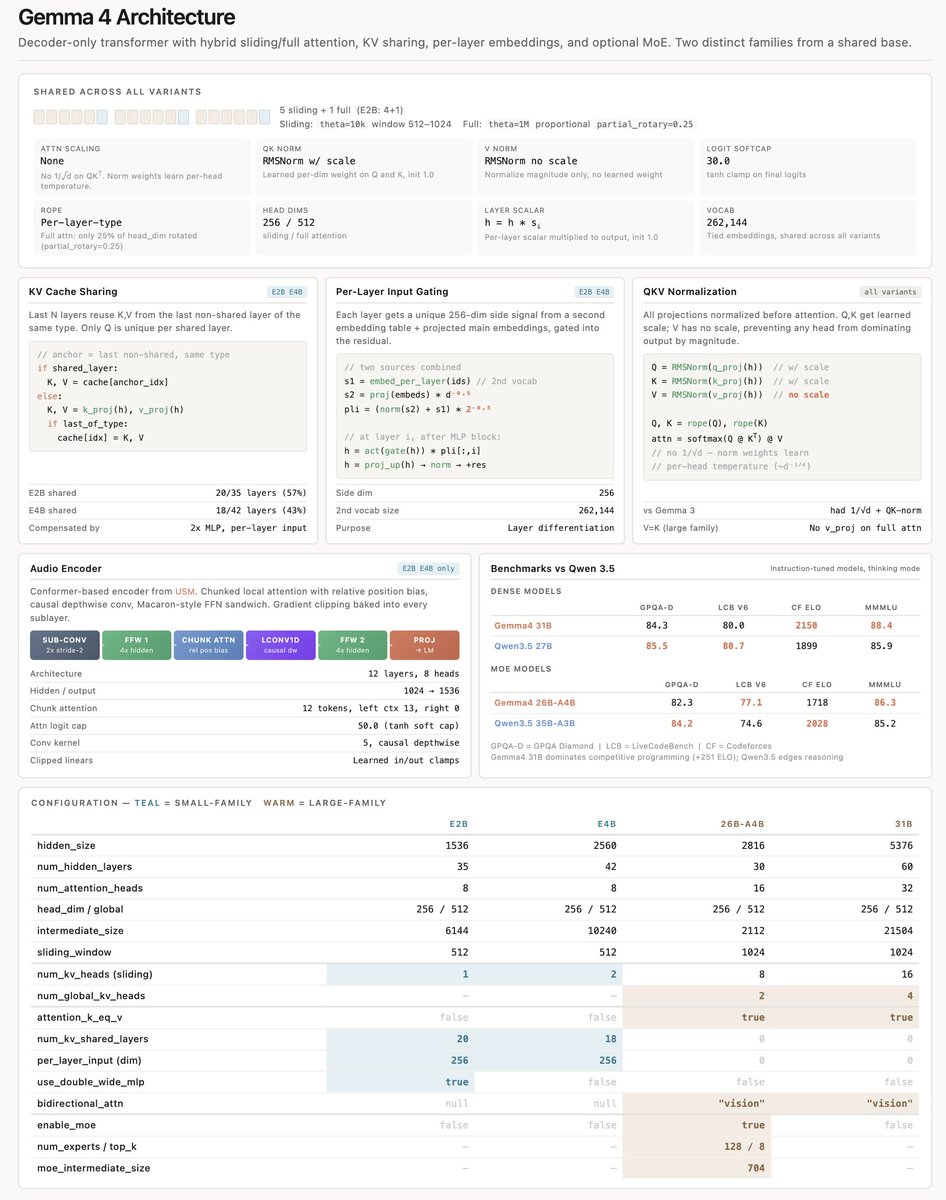

Gemma 4 is here! 🧠 31B and 26B A4B for models with impressive intelligence per parameter. 🤏 E2B and E4B for mobile and IoT. 🤗 Apache 2.0. 🤖 Base and IT checkpoints available. Available in AI Studio, Hugging Face, Ollama, Android, and your favorite OS tools. 🚀 Download it today!

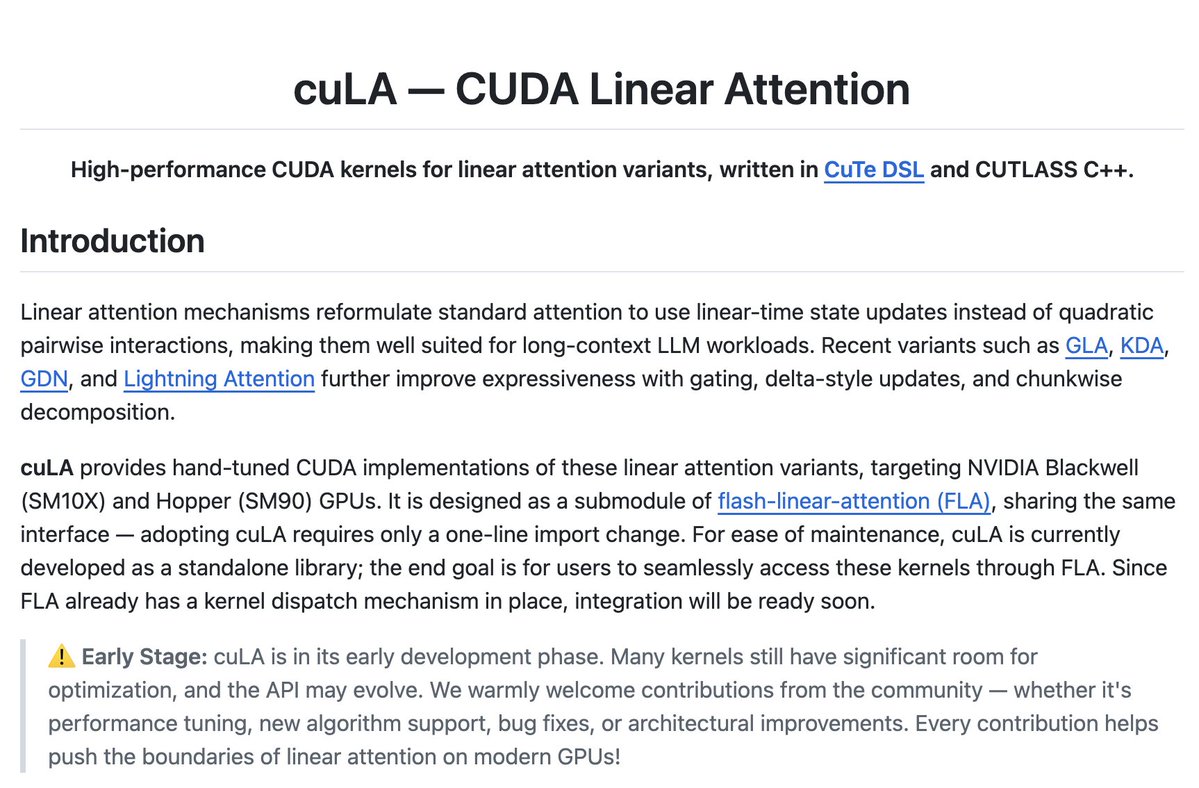

🚀 Linear Attention is unlocking million-token context windows by dropping computational complexity from O(N^2) to O(N), but software is increasingly bottlenecking the hardware. Meet cuLA (CUDA Linear Attention): hand-written kernels using CuTe DSL & CUTLASS C++ to extract maximum performance on NVIDIA GPUs. A drop-in replacement for FLA designed to push hardware to its absolute limits.
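To make the O(N^2) to O(N) claim concrete, here is a minimal NumPy sketch of causal linear attention without a softmax or feature map. It is an illustration only and has nothing to do with the cuLA or FLA kernels themselves; the function names and shapes are hypothetical. The quadratic form builds the full N x N score matrix, while the recurrent form carries a small d_k x d_v state and visits each token once.

```python
import numpy as np

def quadratic_causal_attention(q, k, v):
    # O(N^2): materialize the full N x N score matrix, then zero out the future.
    scores = np.tril(q @ k.T)
    return scores @ v

def linear_causal_attention(q, k, v):
    # O(N): carry a d_k x d_v running state instead of an N x N matrix.
    n, d_k = q.shape
    d_v = v.shape[1]
    state = np.zeros((d_k, d_v))
    out = np.empty((n, d_v))
    for t in range(n):
        state += np.outer(k[t], v[t])  # fold token t into the running state
        out[t] = q[t] @ state          # read out using only tokens <= t
    return out

# Hypothetical toy sizes: 64 tokens, head dimension 8.
rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((64, 8)) for _ in range(3))
max_diff = np.abs(
    quadratic_causal_attention(q, k, v) - linear_causal_attention(q, k, v)
).max()
print(f"max abs difference: {max_diff:.2e}")
```

Both functions compute the same output; only the order of the multiplications changes. A production kernel would process tokens in chunks rather than one at a time, which is where hand-tuned GPU code comes in.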

We made Muon run up to 2x faster for free! Introducing Gram Newton-Schulz: a mathematically equivalent but computationally faster Newton-Schulz algorithm for polar decomposition. Gram Newton-Schulz rewrites Newton-Schulz such that instead of iterating on the expensive rectangular X matrix, we iterate on the small, square, symmetric XX^T Gram matrix to reduce FLOPs. This allows us to make more use of fast symmetric GEMM kernels on Hopper and Blackwell, halving the FLOPs of each of those GEMMs. Gram Newton-Schulz is a drop-in replacement of Newton-Schulz for your Muon use case: we see validation perplexity preserved within 0.01, and share our (long!) journey stabilizing this algorithm and ensuring that training quality is preserved above all else. This was a super fun project with @noahamsel, @berlinchen, and @tri_dao that spanned theory, numerical analysis, and ML systems! Blog and codebase linked below 🧵
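The idea of iterating on the Gram matrix can be sketched in a few lines of NumPy. This is not the authors' implementation: it assumes the classic cubic Newton-Schulz polynomial (Muon typically uses tuned higher-order coefficients), runs in float64, and omits the stabilization work the post mentions. The function names are hypothetical. The key identity is that each step is X ← P X with P = 1.5 I − 0.5 XXᵀ, so the Gram matrix updates as G ← P G P and the factors P can be accumulated and applied to X once at the end.

```python
import numpy as np

def newton_schulz(x, steps):
    # Standard form: every step multiplies the wide (m x n) matrix.
    x = x / np.linalg.norm(x)  # Frobenius scaling keeps singular values <= 1
    for _ in range(steps):
        x = 1.5 * x - 0.5 * (x @ x.T) @ x
    return x

def gram_newton_schulz(x, steps):
    # Gram form: iterate only on small (m x m) symmetric matrices.
    x = x / np.linalg.norm(x)
    eye = np.eye(x.shape[0])
    g = x @ x.T        # Gram matrix, computed once from the wide matrix
    q = eye.copy()     # accumulated product of the per-step polynomials
    for _ in range(steps):
        p = 1.5 * eye - 0.5 * g
        q = p @ q      # X_k = Q_k X_0
        g = p @ g @ p  # G_{k+1} = P G_k P, since X_{k+1} = P X_k
    return q @ x       # single wide multiply at the end

# Hypothetical toy shape: a 16 x 128 matrix, 20 iterations.
rng = np.random.default_rng(0)
x0 = rng.standard_normal((16, 128))
a = newton_schulz(x0, steps=20)
b = gram_newton_schulz(x0, steps=20)
gap = np.abs(a - b).max()
orth_err = np.abs(b @ b.T - np.eye(16)).max()
print(f"difference between forms: {gap:.2e}, orthogonality error: {orth_err:.2e}")
```

In this sketch the loop touches only m x m matrices, so for m much smaller than n the per-step cost no longer depends on n. The additional saving from symmetric GEMM kernels described in the post is not modeled here.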

FA4 on Blackwell: 14 ops, 5 hardware units, 28 dependency edges. One wrong sync = a race condition sanitizers won't catch. We built a constraint solver that derives the pipeline schedule automatically, in Mojo 🔥 Part 1 of our series is out → modular.com/blog/software-…
