Ushnish Sengupta @[email protected]

4.7K posts

@ultush

Award Winning Teacher. Passionate about #SocialJustice #NonProfits #SocialEnterprise #OpenData #OpenGov #Technology4Good #AIEthics @[email protected]

Ezra Klein: "Having AI summarize a book or paper for me is a disaster. It has no idea what I really wanted to know and wouldn't have made the connections I would've made. I'm interested in the thing I will see that other people wouldn't have seen, and I think AI typically sees what everybody else would see. I'm not saying that AI can't be useful, but I'm pretty against shortcuts. And obviously, you have to limit the amount of work you're doing. You can't read literally everything. But in some ways, I think it's more dangerous to think you've read something that you haven't than to not read it at all. I think the time you spend with things is pretty important." @ezraklein

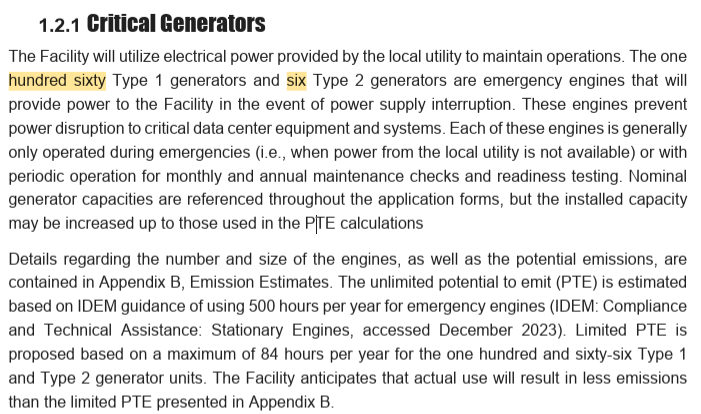

It’s been months and I’m still trying to figure out why AI data centers need fresh water. Not used water. Not recycled water. Fresh water???

🦔 Since Johnson & Johnson added AI to its TruDi Navigation System for sinus surgery in 2021, the FDA has received reports of at least 100 malfunctions and adverse events, up from 8 before the AI was added. At least 10 patients were injured. Two suffered strokes after surgeons accidentally damaged carotid arteries while the system allegedly misinformed them about where their instruments were inside patients' heads.

My Take

Medical device makers are racing to add AI to their products because it looks good in marketing materials and investor presentations. One lawsuit alleges the company pushed AI into TruDi "as a marketing tool" to claim it had "new and novel technology," and set a goal of only 80% accuracy before shipping it. Eighty percent accuracy is fine for a playlist recommendation. It's not fine for software telling a surgeon where his instrument is inside someone's skull.

The FDA has now authorized over 1,350 AI-enabled medical devices, double the number from 2022. Researchers found that 43% of recalls for these devices happened less than a year after approval, twice the rate of non-AI devices.

This is what happens when AI becomes a checkbox for fundraising and marketing instead of a technology you deploy because it actually works better. The rush to put AI on everything is running ahead of anyone's ability to know if it's safe. Patients are the ones finding out.

Hedgie🤗