Justin Bayer

@usuallyuseless

Student, scholar, teacher & builder of learning autonomous systems.

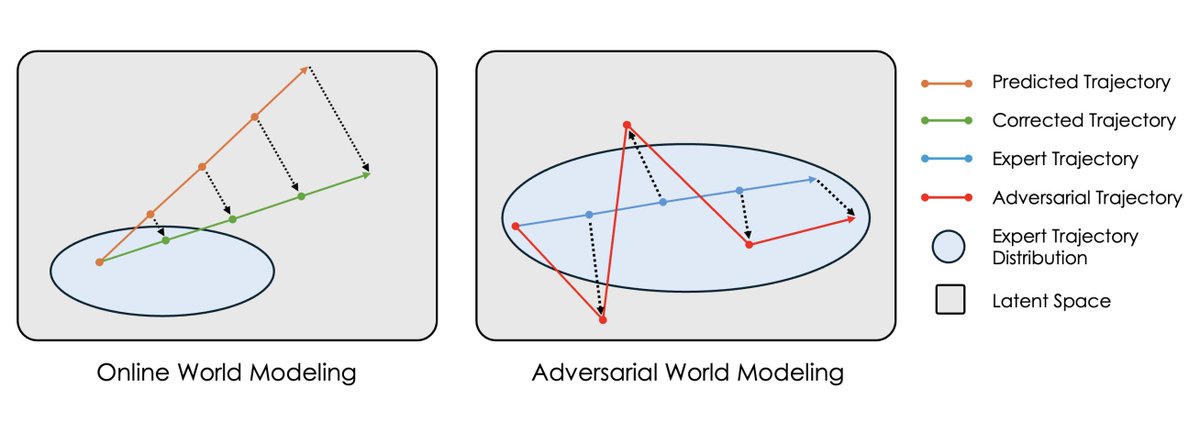

Everyone's freaking out about vibe coding. In the holiday spirit, allow me to share my anxiety about the wild west of robotics. 3 lessons I learned in 2025.

1. Hardware is ahead of software, but hardware reliability severely limits software iteration speed. We've seen exquisite engineering arts like Optimus, e-Atlas, Figure, Neo, G1, etc. Our best AI has not squeezed all the juice out of this frontier hardware. The body is more capable than what the brain can command. Yet babysitting these robots demands an entire operations team. Unlike humans, robots don't heal from bruises. Overheating, broken motors, and bizarre firmware issues haunt us daily. Mistakes are irreversible and unforgiving. My patience was the only thing that scaled.

2. Benchmarking is still an epic disaster in robotics. LLM normies think MMLU & SWE-Bench are common sense. Hold your 🍺 for robotics. No one agrees on anything: hardware platform, task definition, scoring rubrics, simulator, or real-world setups. Everyone is SOTA, by definition, on the benchmark they define on the fly for each news announcement. Everyone cherry-picks the nicest-looking demo out of 100 retries. We gotta do better as a field in 2026 and stop treating reproducibility and scientific discipline as second-class citizens.

3. VLM-based VLA feels wrong. VLA stands for "vision-language-action" model and has been the dominant approach for robot brains. The recipe is simple: take a pretrained VLM checkpoint and graft an action module on top. But if you think about it, VLMs are hyper-optimized to hill-climb benchmarks like visual question answering. This implies two problems: (1) most parameters in VLMs are for language & knowledge, not for physics; (2) visual encoders are actively tuned to *discard* low-level details, because Q&A only requires high-level understanding. But minute details matter a lot for dexterity. There's no reason for VLA performance to scale as VLM parameters scale. Pretraining is misaligned. A video world model seems to be a much better pretraining objective for robot policy. I'm betting big on it.

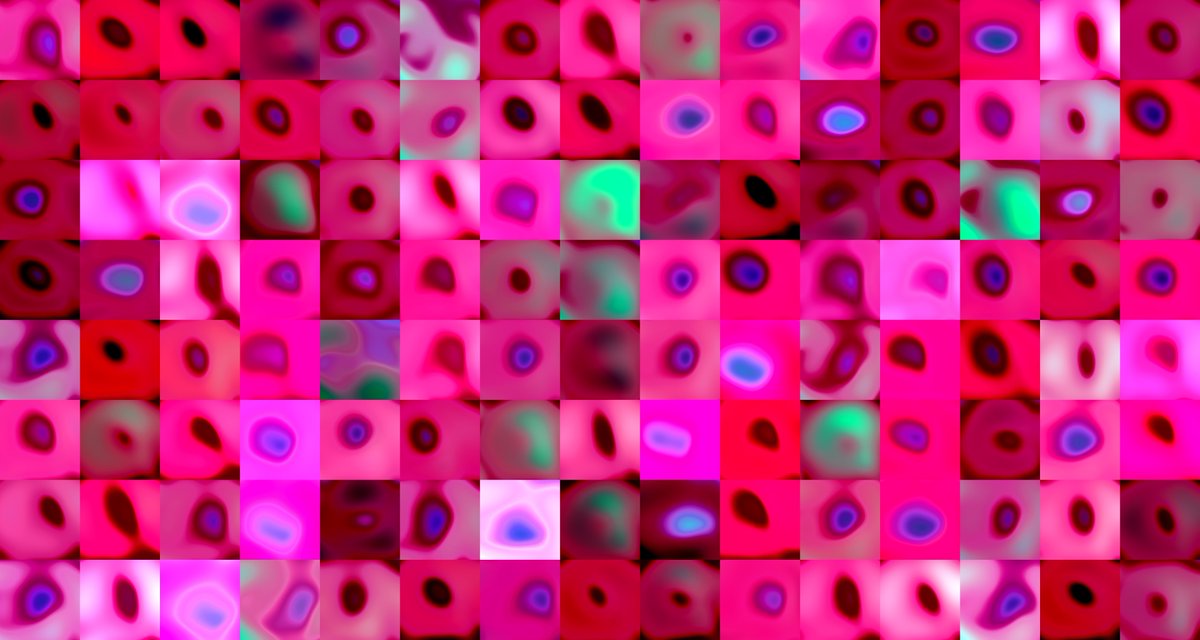

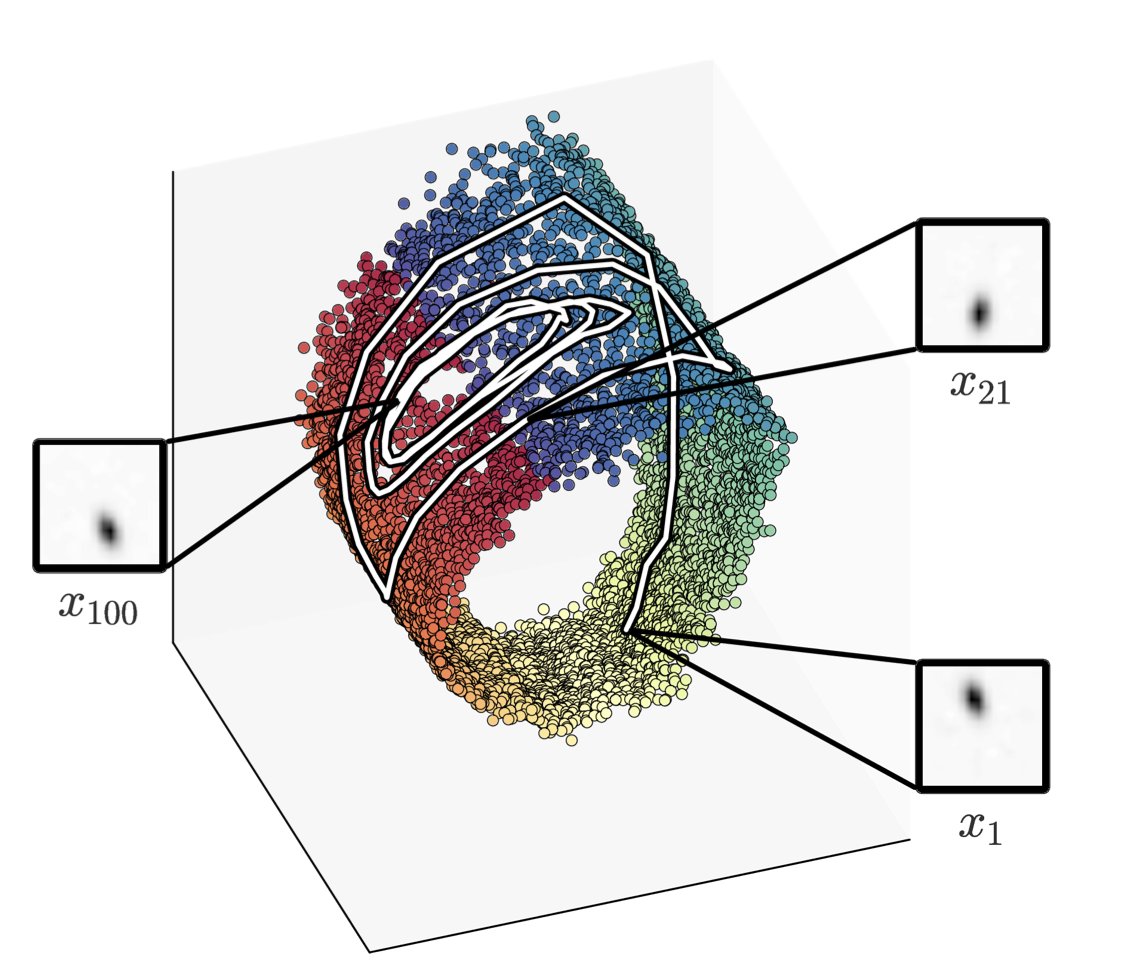

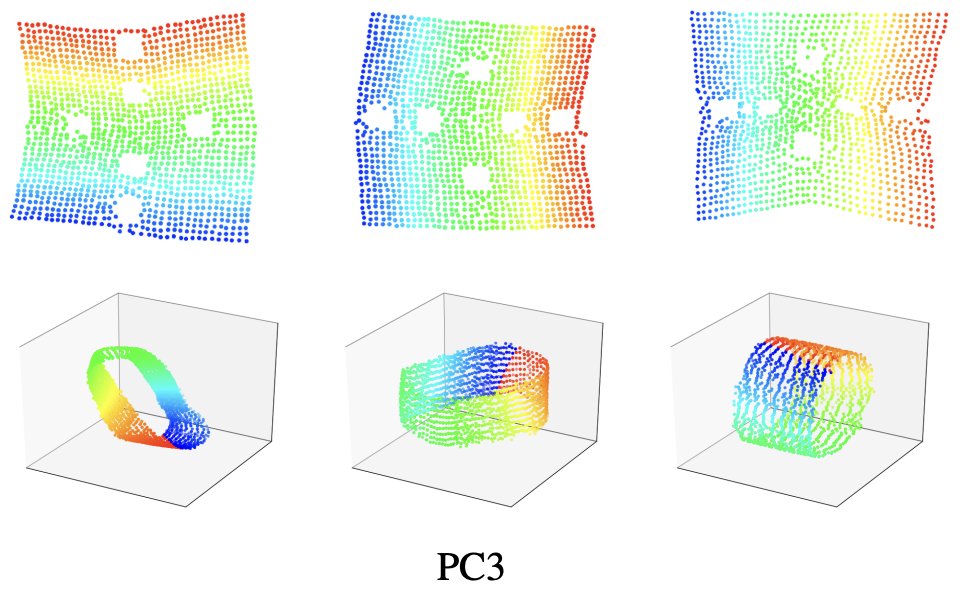

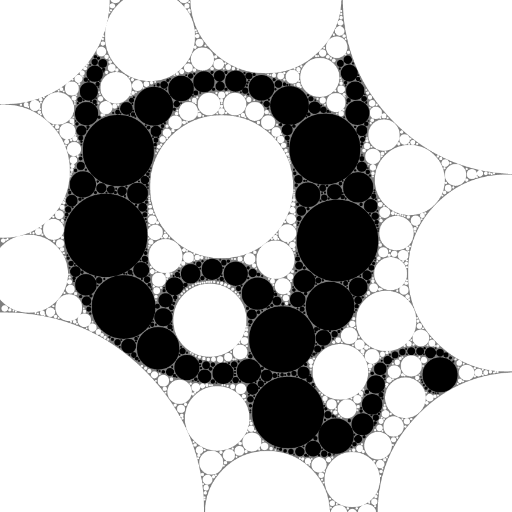

Generative models (diffusion/flow) are taking over robotics 🤖. But do we really need to model the full action distribution to control a robot? We suspected the success of Generative Control Policies (GCPs) might be "Much Ado About Noising." We rigorously tested the myths. 🧵👇

calling a model with a non-commercial license "open weights" is bullshit.