vume

56 posts

How Apple mfrs think this goes

>be me
>drop $1600 on two RTX 3090s used off eBay
>"48GB VRAM, I'm basically a datacenter now"
>they arrive in anti-static bags that look like they've been through a war
>plug them into my motherboard and it sounds like a jet engine taking off
>neighbors probably think I'm mining crypto again
>install llama.cpp, download qwen3.6-27b quantized
>"Q4_K_M, only 16GB, totally fits"
>start LM Studio on port 1234
>type "hello" into the chat box
>GPU fans spin up to 100% instantly
>wait 8 seconds for a response
>>"Hello! How can I assist you today?"
>I've seen faster responses from my grandma reading a text aloud
>try Q8_0 quantization because "quality matters"
>OOM error, obviously
>spend three hours tweaking n_gpu_layers and n_ctx like it's some kind of dark art
>finally get it running at 4 tokens per second
>ask it to write me a poem about my GPUs
>>"Two cards of silicon and light / They hum through the endless night"
>"bro this is actually fire"
>show it to someone on Discord
>"why are you running LLMs locally when you could just use an API for free"
>explain that the joy isn't in the output, it's in watching 94% VRAM usage and knowing nobody else has access to my model
>they don't understand
>close Discord, open LM Studio again
>"let's try a longer context window"
>crash

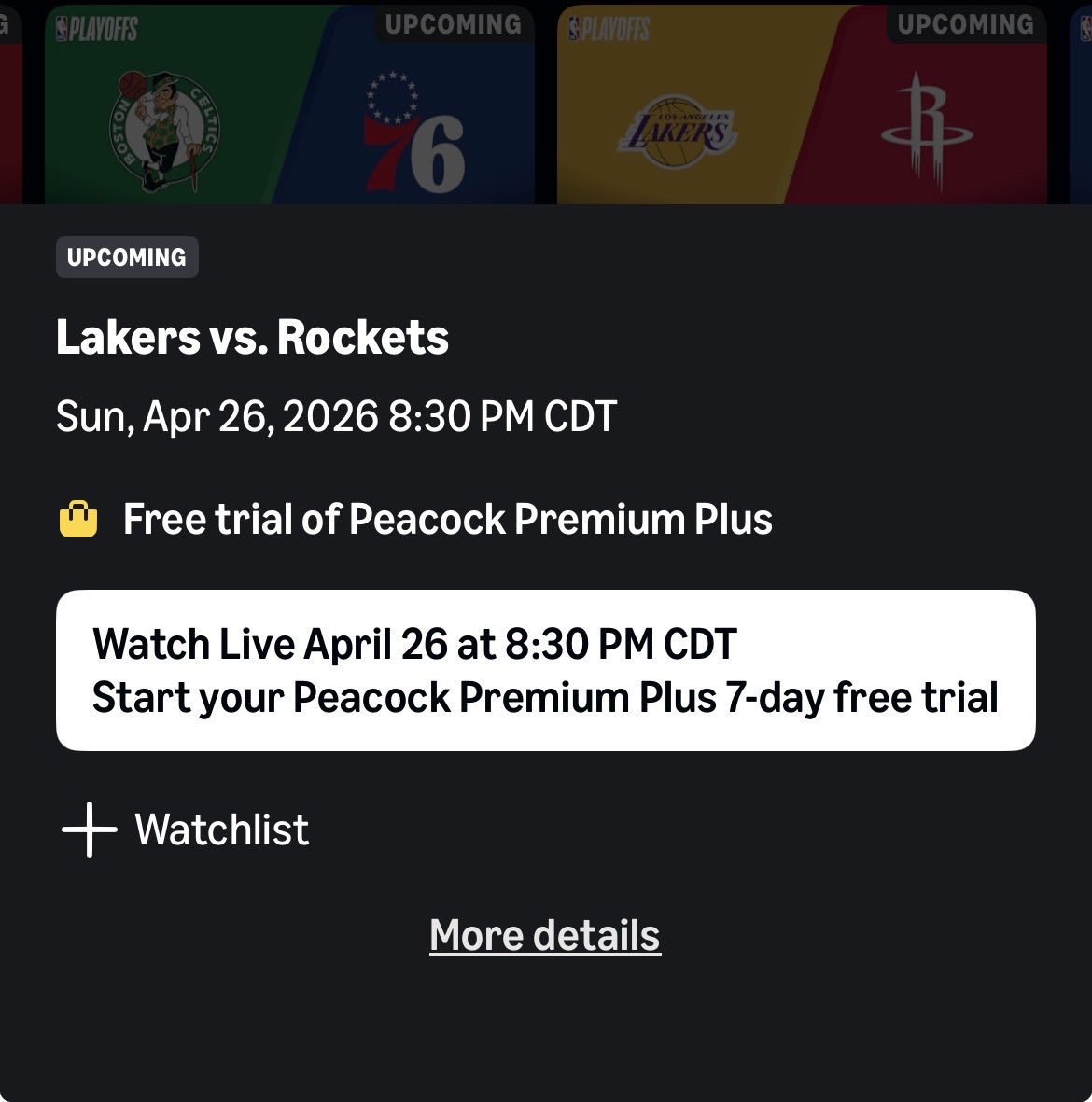

LEBRON IS JUST TOO MUCH, MAN HAHAHAHAHA #NBA #basketball #basquete #nbabrasil