Anurag Verma

2.1K posts

@vanurag12

Product leadership at a $12BN org. Learn how to ace product roles & crack product leadership roles. Author: Product cases playbook 👇

Bangalore, India · Joined September 2013

292 Following · 713 Followers

Anurag Verma retweeted

Axar Patel can bowl with the new ball, gets top-order batters out, plays match-winning cameos, and has taken match-winning catches for India. An all-rounder should be like Bapu. Vice-captain for a reason. @akshar2026

Anurag Verma retweeted

There’s a different kind of joy you feel watching certain batters bat. @IamSanjuSamson is one of them. 97*, 89 and 89 at the biggest stage in knockout games. Moves like silk but is made of steel. 🙌🏼👏🏼 #INDvNZ #t20worldcup2026

Anurag Verma retweeted

Not every inspiring sporting story ends with a trophy. Over the last few days, Lakshya Sen has shown India what courage, resilience and belief truly look like. His run to another All England final, through extraordinary wins and immense physical pain, has been about far more than a result. He has reminded young India that greatness lies not only in winning, but in the honesty of effort, the dignity of the fight and the strength to keep believing. I am proud of you, @lakshya_sen. Very, very proud.

Anurag Verma retweeted

3️⃣2️⃣1️⃣ RUNS 🔥

3️⃣ FIFTIES 👏

8️⃣0️⃣.2️⃣5️⃣ AVERAGE ✨

A performance for the ages from SANJU SAMSON 🫡

#TeamIndia | #T20WorldCup | #MenInBlue | #Final | #INDvNZ | @IamSanjuSamson

Anurag Verma retweeted

While cost/minute is an important metric, what really matters here is the cost/outcome metric (cost per qualified lead, cost per conversion), with a voice AI experience tailored and fine-tuned for the use case at scale.

My experience with voice AI deployments is that the cost/outcome metric doesn't play out even when cost/minute is pretty low, because metrics like lead qualification rate don't work out. Everything needs to be good: interrupt handling, voice modulation, latency, context engineering across multiple calls, enterprise guardrails, privacy compliance, and knowing when to hand over to a real human. Having said that, there are a few players in the market that are getting there.

Rajan Anandan@RajanAnandan

. @YashishDahiya, we are fully able to deliver at Rs2/minute already! Time for @policybazaar to partner with @SarvamAI @pratykumar @vivekrag

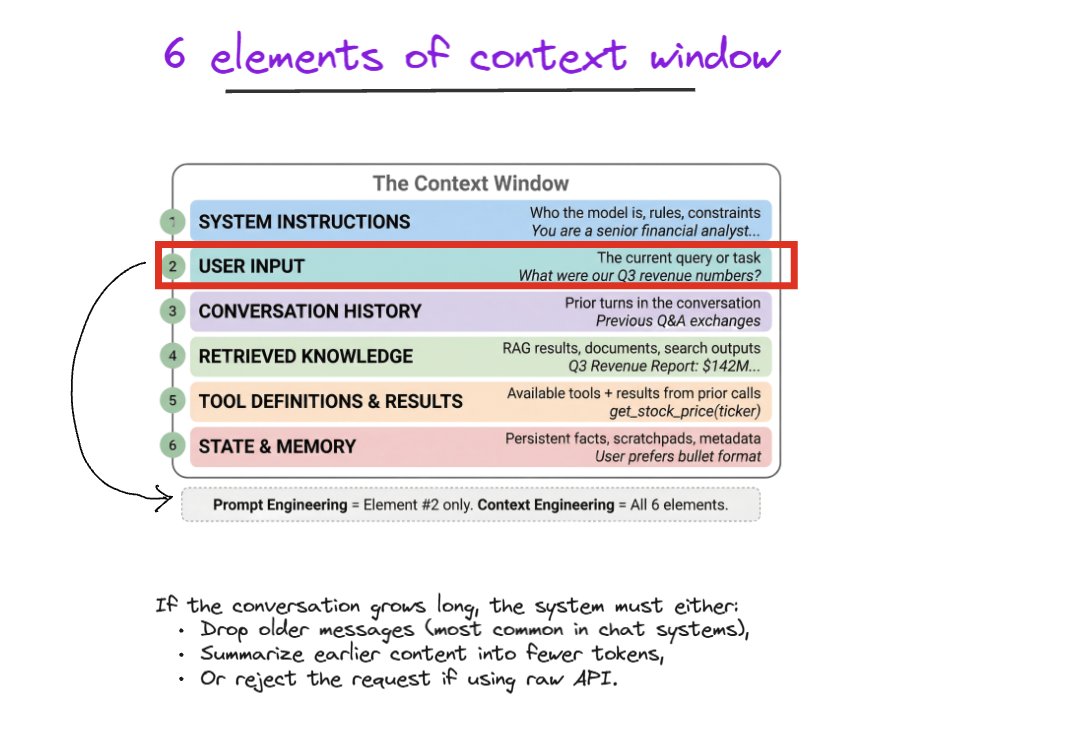

So much talk about AI agents and AI product management, and about how AI can access the browser, hit an API, or access the file system. But ask these self-professed AI PMs a very basic question:

***If all an LLM can do is generate text/images/video, how on earth is it able to launch a browser, execute code to hit an API, or access a file system without a runtime?***

And what you will get is radio silence.

Alright, so for all those self-certified AI PMs, here is the magic:

An LLM never directly uses tools. It only describes tool usage in text.

We build the tools (say, an API to get product prices), tell the LLM which tools are available, and tell it how to request a tool call in text through structured JSON like the one below:

{
  "name": "search_flights",
  "arguments": {
    "from": "string",
    "to": "string",
    "date": "date"
  }
}

Passing this tool description to the LLM at inference time is straightforward, and since LLMs are very good at generating JSON, they can emit requests in this format.

Now, when the LLM actually generates this JSON in response to a user prompt, it is our responsibility to handle the tool call request, get the requested output, and pass it back to the LLM so that it can respond to the end user's request. So something like:

If the LLM response is a tool call
and the tool call function name is search_flights:
    // do the appropriate computation
    // return the result, say "IndiGo 7 AM Del-Bangalore at Rs 7000", and add it to the LLM prompt

Now the LLM is able to respond to the end user saying there is a 7 AM Del-Bangalore flight available.

So an LLM is not magically able to access your file system, crawl a web page, or search flights. We build those tools (that is, we write that code) and pass their output to the LLM at inference time. This is the first pillar of AI agents.
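The handling step described above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's API: `search_flights`, `TOOLS` and `handle_llm_output` are hypothetical names, the flight data is hard-coded from the example in this post, and the "LLM output" is just a string we supply ourselves.

```python
import json

# Hypothetical tool: a real one would call a flights API.
# The returned record is hard-coded from the example in the post.
def search_flights(origin, destination, date):
    return [{"airline": "IndiGo", "departs": "07:00",
             "route": f"{origin}-{destination}", "date": date,
             "price_inr": 7000}]

# Registry of tools the application (not the LLM) knows how to run.
TOOLS = {"search_flights": search_flights}

def handle_llm_output(text):
    """Return the tool result as a JSON string if `text` is a tool call,
    or None if it is an ordinary text answer."""
    try:
        request = json.loads(text)
    except json.JSONDecodeError:
        return None  # plain text, nothing to execute
    if not isinstance(request, dict) or request.get("name") not in TOOLS:
        return None
    args = request.get("arguments", {})
    # "from" is a Python keyword, so it is mapped to `origin` here.
    result = TOOLS[request["name"]](args["from"], args["to"], args["date"])
    # This string is what we would append to the prompt for the next LLM turn.
    return json.dumps(result)

llm_output = ('{"name": "search_flights", '
              '"arguments": {"from": "DEL", "to": "BLR", "date": "2026-03-20"}}')
print(handle_llm_output(llm_output))
```

The point of the sketch is the division of labour: the model only produces the JSON string, and our own code decides whether and how to run anything.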

Follow me for high-quality content: practical insights, not product philosophy.

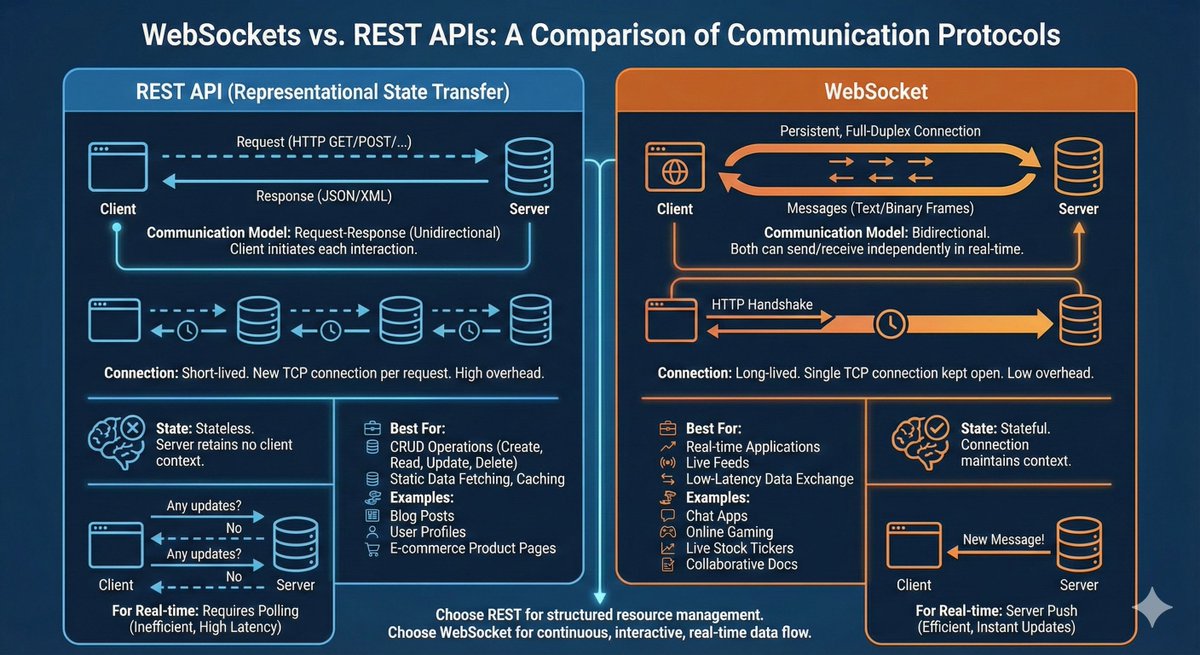

With the advent of AI, tech-challenged PMs will soon become history. However, most intros to tech for product managers end at (REST) APIs over HTTP/HTTPS. Let's now talk about the many other ways in which a client and server can interact.

What is an API? The simplest clear explanation is that it is a URL/endpoint that gives you raw data that you can munge as per your requirement. This is the classic request-response model: the client requests, the server responds.

There are more options within the request-response model, e.g. the newer GraphQL as an alternative to REST, and Remote Procedure Calls in their new avatar, gRPC. However, I digress.

Let’s switch gears. If you follow the simple Socratic way of reasoning you can easily think of scenarios where the request-response model doesn't work. All you have to do is look around.

For example, there are very common scenarios where a server would like to push updates without waiting for the client to request anything. Still unsure?

***Think chat apps, collaborative editing such as Google Docs, and multiplayer games.*** In fact, in all these cases you want the client and server to push updates to each other at any point in time, in a bidirectional manner. ***For this the classic REST APIs don't work; what you need are WebSockets.***

Never heard of WebSockets? Well, time to upgrade.

The above example is bidirectional communication.

What are the other possibilities? Think of scenarios where the server pushes updates to the client in one direction only. Which scenarios, you ask? ***Here are some: live news, sports scores, stock tickers.***

In these scenarios, all you want is for the client to establish a connection and for the server to push information whenever it has a new update. The client doesn't request data every single time. ***This paradigm is called Server-Sent Events (SSE).***
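To make SSE less abstract, here is a rough sketch of what the server actually writes onto that long-lived connection. SSE is plain text served with the `text/event-stream` content type: each message is a few `field: value` lines followed by a blank line. The helper names `format_sse` and `parse_sse` and the sample payloads are made up for illustration, and the parser is deliberately simplified (it assumes `\n` line endings and ignores the `id` and `retry` fields).

```python
def format_sse(data, event=None):
    """Serialize one message in the text/event-stream wire format."""
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    # A multi-line payload is sent as one "data:" line per line of text.
    for part in data.split("\n"):
        lines.append(f"data: {part}")
    # A blank line marks the end of the message.
    return "\n".join(lines) + "\n\n"

def parse_sse(stream_text):
    """Parse event-stream text into a list of (event, data) tuples."""
    messages = []
    for block in stream_text.split("\n\n"):
        if not block.strip():
            continue
        event, data_lines = "message", []  # "message" is the default event type
        for line in block.split("\n"):
            field, _, value = line.partition(":")
            if value.startswith(" "):
                value = value[1:]  # one leading space after the colon is dropped
            if field == "event":
                event = value
            elif field == "data":
                data_lines.append(value)
        messages.append((event, "\n".join(data_lines)))
    return messages

# Two pushes from the server: a named event and a default one (sample data).
stream = format_sse("IND 180/4 (18.2)", event="score") + format_sse("NIFTY 22450.10")
print(parse_sse(stream))
```

In a browser the parsing side is handled by the built-in `EventSource` object, so client code only subscribes to events.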

If you think further, there are lots of other scenarios, such as 1:1 video streaming and broadcast streaming, which use other paradigms that we can cover in a subsequent post.

@lennysan Which also means that having amazing commercial sense and a deep understanding of how product metrics translate into top line/bottom line will also become super important.

Hi @lennysan, given that the role is evolving rapidly and PMs can now prototype and test multiple options within the solution space at a high pace, I believe spending a lot more time in the problem space, identifying high-quality problems whose solutions will deliver material outcomes, becomes even more important. A corollary is that PMing, even at junior levels, will be more about the problem space than the solution space. A high-agency, high-quality PM, even at a junior level, should be able to drive really large outcomes.

A super intuitive guide to ***how attention works in LLMs*** for product managers and early-career AI professionals. Why learn about attention? Because it is the most important foundation of how LLMs work.

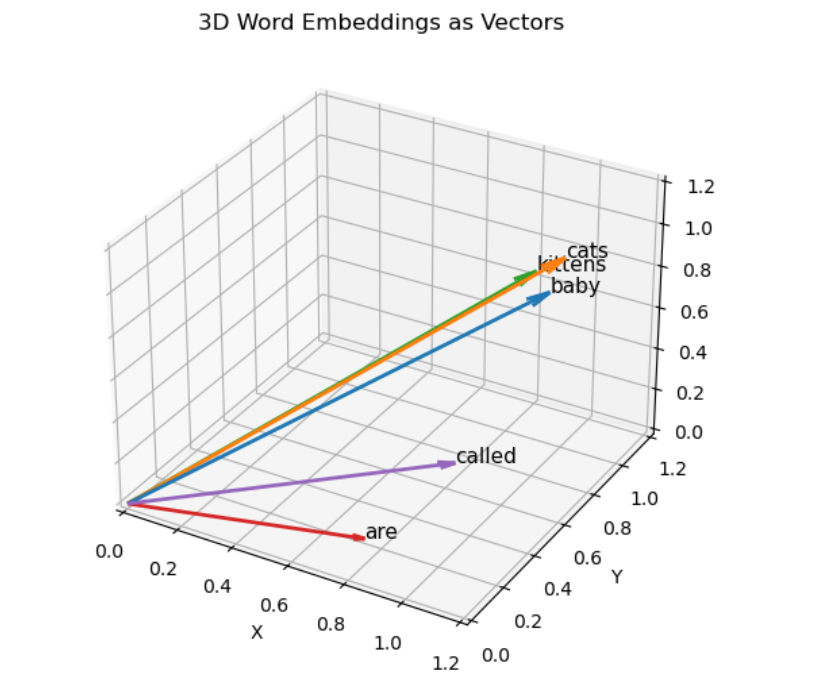

Imagine the sentence "Baby cats are called kittens". If you understand token embeddings, you know that words/tokens can be represented as vectors that also capture the semantic similarity between them.

So in the above example, think of every word as one token, each represented by a vector. (For the sake of simplicity I have used a 3-dimensional embedding.)

| Word | Embedding |
|---|---|
| baby | [1.0, 0.9, 0.8] |
| cats | [1.0, 1.0, 0.9] |
| are | [0.8, 0.1, 0.1] |
| called | [1.0, 0.3, 0.4] |
| kittens | [0.9, 1.0, 0.8] |

If you plot these vectors, you can see intuitively that baby, cats and kittens are semantically similar. (See the chart, generated using a Jupyter notebook.)

Self-attention answers one question: which other input tokens should I pay the most attention to while evaluating the current token? Say I am currently focusing on "cats". To represent "cats" in a more context-aware manner, I need to know which other tokens in the text to focus on.

The intuitive mechanism for this is the ***dot product between the "cats" token vector and the other token vectors.***

Query vector: q(cats) = [1.0, 1.0, 0.9]

Dot product with all keys (all tokens):

| Key word | Dot product | Value (attention score) |
|---|---|---|
| baby | 1×1 + 1×0.9 + 0.9×0.8 | 2.62 |
| cats | 1×1 + 1×1 + 0.9×0.9 | 2.81 |
| are | 1×0.8 + 1×0.1 + 0.9×0.1 | 0.99 |
| called | 1×1 + 1×0.3 + 0.9×0.4 | 1.66 |
| kittens | 1×0.9 + 1×1 + 0.9×0.8 | 2.62 |

The dot products tell you which other tokens to pay the most attention to.

So, besides "cats" itself, "baby" and "kittens" are the ones to pay the most attention to when evaluating "cats".

***Next, we normalize these attention scores so that they sum to 1, and then use them to compute a context-aware vector representation for "cats".***

**Rest of the steps**

1. Scale by sqrt(dimension), in this case sqrt(3)

| Word | Scaled score |
|---|---|
| cats | 2.81/sqrt(3) ≈ 1.62 |
| baby | 1.51 |
| kittens | 1.51 |
| called | 0.96 |
| are | 0.57 |

2. Softmax → attention weights (softmax is nothing but taking exponentials and normalizing)

Exponentials:

e¹·⁶² ≈ 5.05 (cats), e¹·⁵¹ ≈ 4.53 (baby), e¹·⁵¹ ≈ 4.53 (kittens), e⁰·⁹⁶ ≈ 2.61 (called), e⁰·⁵⁷ ≈ 1.77 (are)

Sum ≈ 18.49

So the attention weight for cats = 5.05/18.49 ≈ 0.27, for baby = 4.53/18.49 ≈ 0.25, and so on.

Finally, the context-aware vector for cats = sum of (attention weight × embedding vector) = 0.25·baby + 0.27·cats + 0.10·are + 0.14·called + 0.25·kittens ≈ [0.96, 0.79, 0.70]

This embedding vector is now a contextual embedding: still strongly "cat-like", but enriched by baby and kittens.
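If you want to check the arithmetic yourself, the whole walkthrough fits in a short pure-Python script. It is a sketch of this toy example only: the function names `dot` and `attend` are mine, and like the walkthrough it uses the raw embeddings directly as queries, keys and values, whereas real transformer layers first pass them through learned projection matrices.

```python
import math

# Toy 3-dimensional embeddings from the table above.
embeddings = {
    "baby":    [1.0, 0.9, 0.8],
    "cats":    [1.0, 1.0, 0.9],
    "are":     [0.8, 0.1, 0.1],
    "called":  [1.0, 0.3, 0.4],
    "kittens": [0.9, 1.0, 0.8],
}

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def attend(query_word):
    """Return (attention weights, context vector) for one query token."""
    q = embeddings[query_word]
    d = len(q)
    # Step 1: dot product with every key, scaled by sqrt(dimension).
    scaled = {w: dot(q, k) / math.sqrt(d) for w, k in embeddings.items()}
    # Step 2: softmax, i.e. exponentiate and normalize so weights sum to 1.
    exps = {w: math.exp(s) for w, s in scaled.items()}
    total = sum(exps.values())
    weights = {w: e / total for w, e in exps.items()}
    # Step 3: weighted sum of the embedding vectors.
    context = [sum(weights[w] * embeddings[w][i] for w in embeddings)
               for i in range(d)]
    return weights, context

weights, context = attend("cats")
print({w: round(v, 2) for w, v in weights.items()})
print([round(x, 2) for x in context])
```

Swapping the query word (say, `attend("are")`) is a quick way to see how the weights shift for a token that is not semantically close to the others.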

Follow me

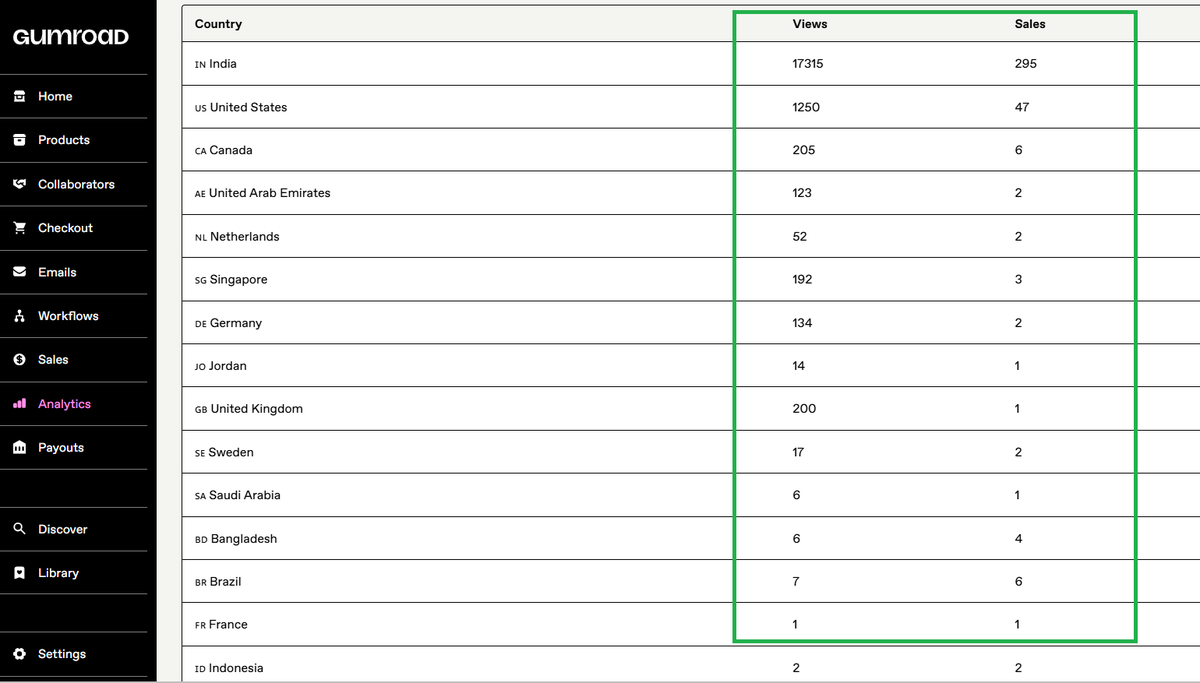

@vanurag12 This is underrated advice. My side project taught me more about pricing psychology in 3 months than any PM course ever did. Nothing humbles you like watching 90% of users abandon at the pricing page.
