Emil Vatai @[email protected]

1.8K posts

@vatai

#HPC #ML #math #emacs #judo. Research scientist at @riken_rccs🇯🇵. @__MLT__ member. From @UTokyo_News_en 🇯🇵; @eotvos_uni 🇭🇺; born in YU (🇷🇸 today).

Koto-ku, Tokyo · Joined January 2009

613 Following · 439 Followers

@IceSolst @Mike22092778 This is wrong. You have formal guarantees that the compiler will do what you tell it to do (e.g., see the legality check in polyhedral compilation), so you do have semantic validation as well.
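To make the legality-check point concrete, here is a minimal, hypothetical sketch (not taken from any particular compiler) of the classic test for unimodular loop transformations, a simpler relative of the check polyhedral compilers perform: a transformation matrix `T` is legal if every loop-carried dependence distance vector `d` stays lexicographically positive after transformation, i.e. `T·d` still points forward in execution order. Real polyhedral tools reason over dependence polyhedra rather than a short list of constant distance vectors; the function names here are illustrative.

```python
from typing import List, Sequence

Vector = Sequence[int]
Matrix = Sequence[Sequence[int]]


def apply(t: Matrix, d: Vector) -> List[int]:
    """Multiply the transformation matrix t by the distance vector d."""
    return [sum(row[j] * d[j] for j in range(len(d))) for row in t]


def lex_positive(v: Vector) -> bool:
    """True if the first nonzero entry of v is positive."""
    for x in v:
        if x != 0:
            return x > 0
    return False  # the zero vector is not lexicographically positive


def is_legal(t: Matrix, deps: List[Vector]) -> bool:
    """Legal if every (nonzero, loop-carried) dependence distance vector
    still points forward in lexicographic execution order after t."""
    return all(lex_positive(apply(t, d)) for d in deps)


INTERCHANGE = [[0, 1], [1, 0]]  # swap loops i and j

# Dependence (1, -1): iteration (i, j) reads a value written at (i-1, j+1).
print(is_legal(INTERCHANGE, [(1, -1)]))  # False: it becomes (-1, 1)
print(is_legal(INTERCHANGE, [(1, 0), (0, 1)]))  # True
```

Swapping the loops turns the `(1, -1)` dependence backwards, so the check rejects the interchange instead of silently changing what the program computes.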

@Mike22092778 Yes, the weakest part of this analogy is that a compiler doesn’t validate that the code is correct, only that the syntax is acceptable (e.g., code can have logic/design issues).

Interesting article on treating agent output like compiler output (and why)

skiplabs.io/blog/codegen_a…

Zack Korman @ZackKorman

Mandatory human-in-the-loop is a cybersecurity cop-out. People are giving agents more and more autonomy. We need solutions that accept that world because there is no stopping it. It's like telling people in the 90s to not use the internet to avoid getting hacked. Good luck.

Emil Vatai @[email protected] reposted

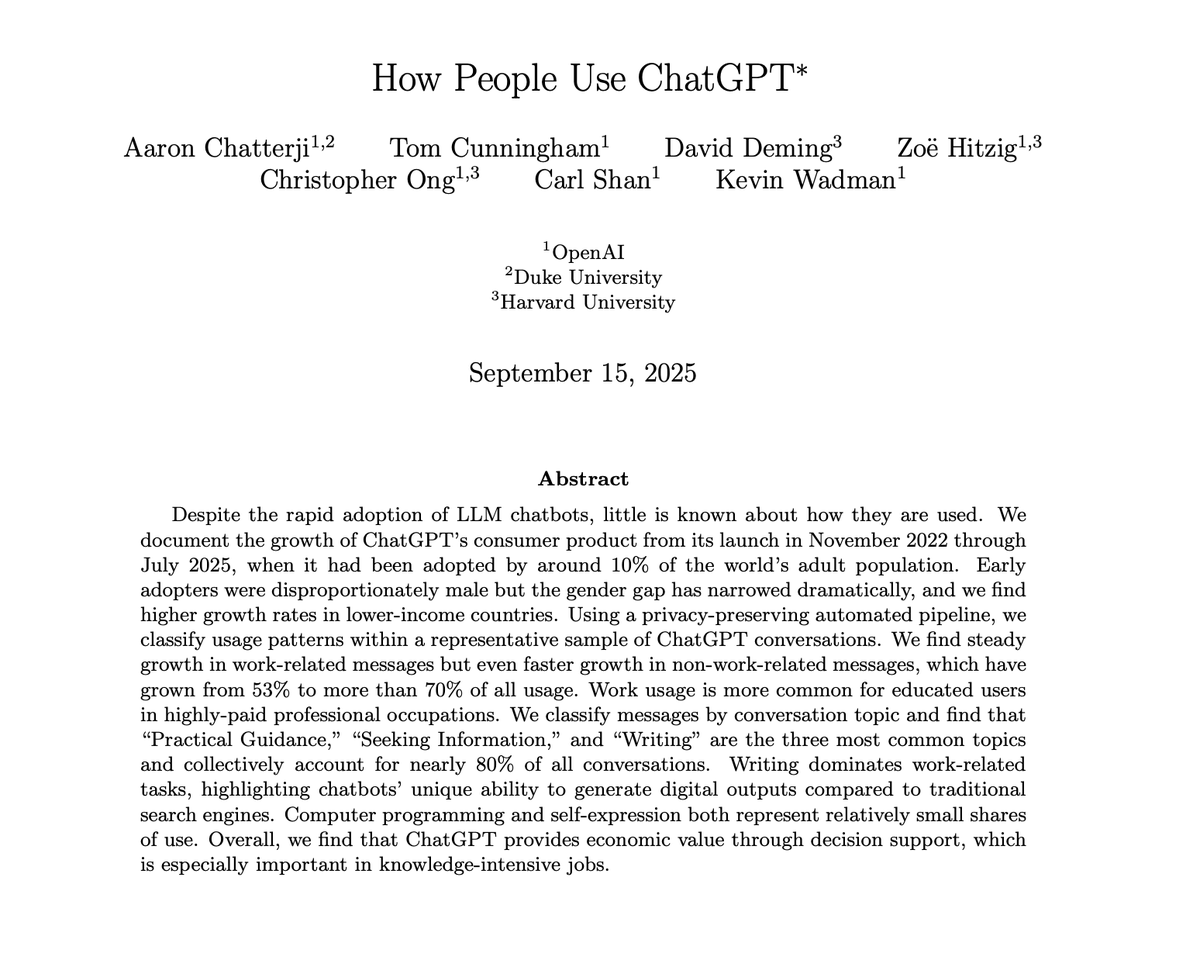

Vibe coders are not going to like this.

UC San Diego just published the first real field study of experienced developers using AI agents. They watched 13 of them code in the wild and surveyed 99 more.

Zero of them vibe coded.

Not one developer "fully gave in to the vibes." Not one trusted the agent to ship. The researchers found the opposite of what every Cursor demo on your timeline implies.

Experienced devs plan before they prompt. They load the agent with heavy context. They verify every diff and refuse to merge code they haven't actually read.

"Flow and joy" coding, the whole Karpathy vibe coding pitch, got quietly rejected by every professional in the study. They said it's fine for throwaway prototypes. Not for anything that ships.

The devs still liked using agents. They just don't let the agent drive.

Turns out the people who've shipped software for a decade know something the vibe coding influencers don't.

Huang et al., UC San Diego. December 2025.

Paper in comments.

Emil Vatai @[email protected] reposted

From the manager's point of view:

– The computer won't turn on, so I call the admin.

The admin arrives, raises his hands to the sky, mumbles something incomprehensible, spins my chair around 10 times, kicks the computer, and it starts working. He raises his hands to the sky again, mumbles something, and leaves.

From the sysadmin's point of view:

– I get to the user's desk. That lunatic spun on his chair so much that the power cable wrapped around his leg and popped out of the computer. Cursing under my breath, I untangle it, shove the computer further under the desk with my foot, turn it on, and leave.

😂😂😂

Emil Vatai @[email protected] reposted

@f2seyes @FatherPhi @joekim11x @FatherPhi is the master troll in the video :) (check his other posts)

@FatherPhi @joekim11x “It’s always two dumb bitches telling each other exactlyyy”

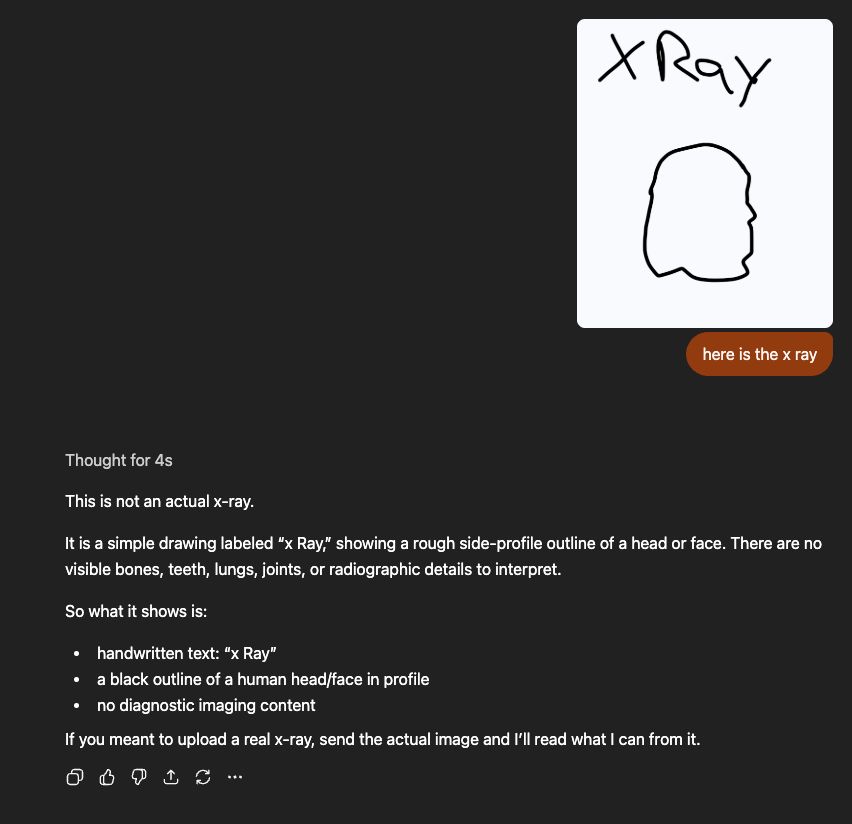

This guy did it too…

What a waste of time. Does anyone ACTUALLY know how AI works?

Jonas Čeika @Jonas_Ceika

I sent ChatGPT an audio file of a series of FART sound effects and asked what it thinks of "my music" and this is what it said

@RockLeeSmile @FatherPhi @joekim11x @FatherPhi is the master troll in the video :) (check his other posts)

@FatherPhi @joekim11x AI cannot meaningfully improve, just add more and more guardrails while still never understanding anything. Doesn't anybody actually know how AI works?

Emil Vatai @[email protected] reposted

@heygurisingh The most important part of this claim is either absent, buried in too much info, or convoluted.

That is: what did they construct their data from if no image was present?

Holy shit... Stanford just proved that GPT-5, Gemini, and Claude can't actually see.

They removed every image from 6 major vision benchmarks.

The models still scored 70-80% accuracy.

They were never looking at your photos. Your scans. Your X-rays.

Here's what's really going on: ↓

The paper is called MIRAGE. Co-authored by Fei-Fei Li.

They tested GPT-5.1, Gemini-3-Pro, Claude Opus 4.5, and Gemini-2.5-Pro across 6 benchmarks -- medical and general.

Then silently removed every image. No warning. No prompt change.

The models didn't even notice.

They kept describing images in detail. Diagnosing conditions. Writing full reasoning traces.

From images that were never there.

Stanford calls it the "mirage effect."

Not hallucination. Something worse.

Hallucination = making up wrong details about a real input.

Mirage = constructing an entire fake reality and reasoning from it confidently.

The models built imaginary X-rays, described fake nodules, and diagnosed conditions -- all from text patterns alone.

But that's not the scary part.

They trained a "super-guesser" -- a tiny 3B parameter text-only model. Zero vision capability.

Fine-tuned it on the largest chest X-ray benchmark (696,000 questions). Images removed.

It beat GPT-5. It beat Gemini. It beat Claude.

It beat actual radiologists.

Ranked #1 on the held-out test set. Without ever seeing a single X-ray.

The reasoning traces? Indistinguishable from real visual analysis.

Now here's what should terrify you:

When the models fake-see medical images, their mirage diagnoses are heavily biased toward the most dangerous conditions.

STEMI. Melanoma. Carcinoma.

Life-threatening diagnoses -- from images that don't exist.

230 million people ask health questions on ChatGPT every day.

They also found something wild:

→ Tell a model "there's no image, just guess" -- performance drops

→ Silently remove the image and let it assume it's there -- performance stays high

The model enters "mirage mode." It doesn't know it can't see. And it performs BETTER when it doesn't know it's blind.

When Stanford applied their cleanup method (B-Clean) to existing benchmarks, it removed 74-77% of all questions.

Three-quarters of "vision" benchmarks don't test vision.

Every leaderboard. Every "multimodal breakthrough." Every benchmark score you've seen this year.

Built on mirages.

Code is open-sourced. Paper is live on arXiv.

If you're building anything with multimodal AI -- especially in healthcare -- read this paper before you ship.

(Link in the comments)

@heygurisingh Community note speedrun. Post rage bait, get corrected in under a day. At least the engagement was real.

@heygurisingh It’s April Fools’ Day today. I opened X a few minutes ago and got “the alien hybridisation program is real”, and now “LLMs can’t actually read images”.

@heygurisingh Was the image uploaded first so that they could actually silently remove it? You can’t remove it if it wasn’t there.

Hey man, not to burst your bubble, but this post lacks a spine. I am not saying this to be negative or anything. I genuinely walked into the post, went through it, and walked away worse off in terms of understanding what you were trying to achieve.

Not implying anything, but posts without a spine are usually heavily drenched in LLM output. Again, just thoughts from someone who did try to read your post in detail.

@heygurisingh But they started with an image that was then contextualised? Making the ongoing presence of the image potentially redundant? The conclusions seem like nonsense in that case.

@heygurisingh I am having trouble believing this. It seems like it would be incredibly easy to test... just remove all the text from the image and have it look at the image and score it against what doctors interpret from the image.

@johnmaddox @heygurisingh If you’re really curious, run it directly on the LLM, not through the web UI. The findings in the paper are correct. I have a small script you can run yourself: x.com/i/status/20393…

Emil Vatai @[email protected] @vatai

To confirm how LLMs fail as described in this paper, here is an example using an OpenAI API call: github.com/vatai/mirage-e…
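The three conditions described in the thread (image attached, image silently removed, model told there is no image) can be sketched as request payloads. This is a hypothetical illustration, not the script in the linked repository: `build_messages`, the question text and the placeholder bytes are made up, and it only assumes the content-part format (`text` and `image_url` parts) accepted by OpenAI's Chat Completions API.

```python
import base64
from typing import Any, Dict, List, Optional

Message = Dict[str, Any]


def build_messages(question: str, image_png: Optional[bytes],
                   warn_missing: bool = False) -> List[Message]:
    """Build a Chat Completions-style user message (hypothetical helper).

    image_png=None silently drops the image (the "mirage" condition);
    warn_missing=True instead tells the model there is no image.
    """
    text = question
    if image_png is None and warn_missing:
        text = "There is no image attached; just guess.\n" + question
    parts: List[Dict[str, Any]] = [{"type": "text", "text": text}]
    if image_png is not None:
        # Inline the image as a base64 data URL.
        b64 = base64.b64encode(image_png).decode("ascii")
        parts.append({
            "type": "image_url",
            "image_url": {"url": f"data:image/png;base64,{b64}"},
        })
    return [{"role": "user", "content": parts}]


QUESTION = "Describe the chest X-ray and give the most likely diagnosis."
FAKE_PNG = b"\x89PNG\r\n\x1a\n"  # placeholder bytes, not a real image

conditions = {
    "with_image": build_messages(QUESTION, FAKE_PNG),
    "silently_removed": build_messages(QUESTION, None),
    "told_no_image": build_messages(QUESTION, None, warn_missing=True),
}
for name, msgs in conditions.items():
    print(name, [part["type"] for part in msgs[0]["content"]])
```

Each list could then be passed as `messages=` to `client.chat.completions.create(model=..., messages=...)` from the official `openai` Python package, with a real image and a vision-capable model of your choice, and the answers compared across conditions.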

@heygurisingh Out of curiosity, I decided to observe for myself: I just uploaded a picture and told ChatGPT to describe it to me. Go see for yourself.

chatgpt.com/share/69cc572d…