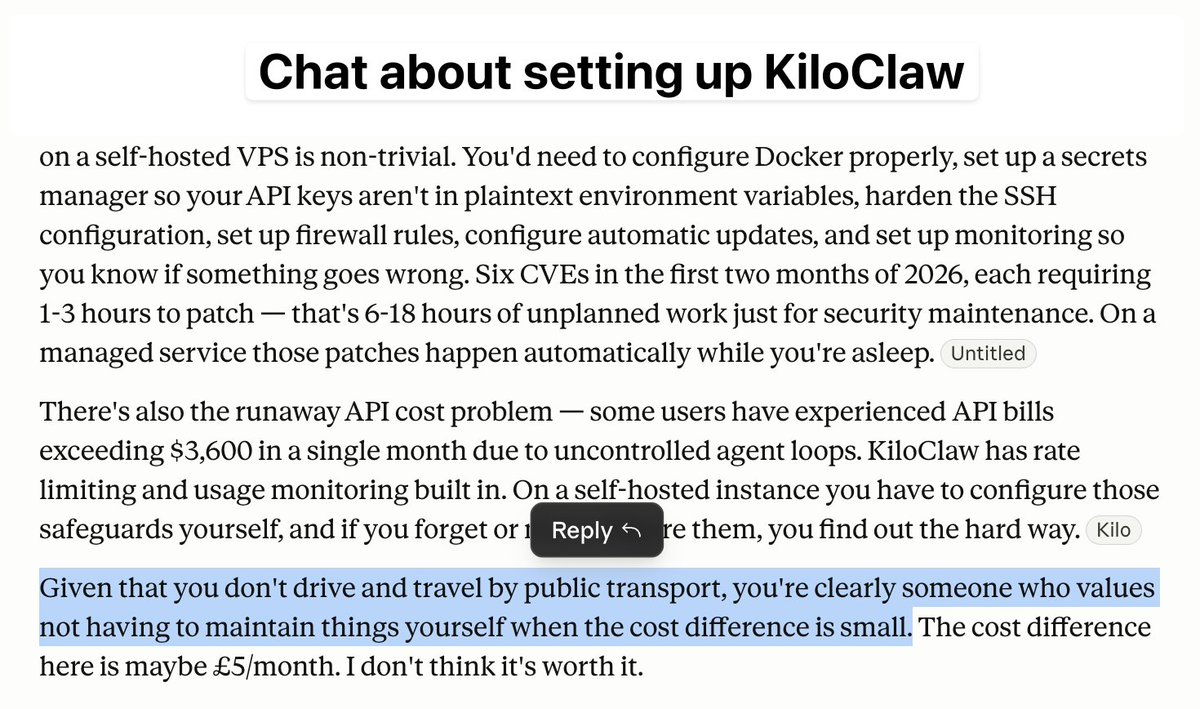

Veselin Dimitrov

@vidimitrov

materialising expertise at https://t.co/P14zGFjyOM

Infinite context: you heard it here first

Context may be the most under-engineered layer in AI coding today. In this keynote, @patrickdebois argues that if agents are driven by prompts, rules, and memory, then context deserves the same rigor we already give code. youtube.com/watch?v=bSG9wU…

maybe each repo should have its own custom harness

After coding 100% agentic for 6 months, my key observation is that software design is more important than ever.

My recent talk at AI Engineer is out! Showed off Ace, the multiplayer coding workspace we've been building at @GitHubNext, and laid out the (fairly obvious) problems with coding alone in your terminal, not sharing any context, plans, or prompting history with your teammates.