Vrushank Desai

533 posts

@vrushankdes

don’t take life too seriously, nobody gets out alive anyway

The π*0.6 training recipe:
1️⃣ Train a VLA on demonstration data
2️⃣ Roll out the VLA to collect on-policy data (with optional human corrections)
3️⃣ Learn a value function
4️⃣ Train an advantage-conditioned policy
Iterate. For café, 414 autonomous episodes + 429 correction episodes
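Steps 3️⃣ and 4️⃣ of the recipe above can be illustrated with a toy sketch. This is not the π*0.6 implementation: the post does not say how the value function is learned or how the policy is conditioned, so everything here is an assumption for illustration. The "value function" is just the mean return-to-go at each timestep, and the helper names `returns_to_go` and `label_advantages` are hypothetical. The idea it shows is only that each step of a rollout gets an advantage (return minus value) and a binary "better than expected" flag that a policy could be conditioned on.

```python
from statistics import mean


def returns_to_go(rewards, gamma=1.0):
    """Discounted sum of future rewards at every step of one episode."""
    out, g = [], 0.0
    for r in reversed(rewards):
        g = r + gamma * g
        out.append(g)
    return out[::-1]


def label_advantages(episodes, gamma=1.0):
    """Label each step with (advantage, is_positive).

    `episodes` is a non-empty list of per-step reward lists.
    Assumption: the value baseline is the mean return-to-go at the same
    timestep across episodes, a stand-in for a learned value function.
    """
    rtg = [returns_to_go(ep, gamma) for ep in episodes]
    max_len = max(len(r) for r in rtg)
    # Toy value function: average return-to-go per timestep index.
    value = [mean(r[t] for r in rtg if t < len(r)) for t in range(max_len)]
    labeled = []
    for r in rtg:
        # Advantage = observed return minus baseline; the flag is what an
        # advantage-conditioned policy would receive as an extra input.
        labeled.append([(g - value[t], g - value[t] > 0) for t, g in enumerate(r)])
    return labeled


# One successful rollout (reward 1 at the end) and one failed rollout.
labels = label_advantages([[0, 0, 1], [0, 0, 0]])
```

In this toy setup every step of the successful rollout is flagged positive and every step of the failed one negative; training would then condition the policy on that flag and request the positive flag at inference time.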

We’ve developed a new approach to training models, Harmonic Reasoning, which creates a "harmonic" interplay between asynchronous, continuous-time streams of sensing and acting tokens. ⚙️🎵 Watch GEN-0 pack a camera. 🤖📸

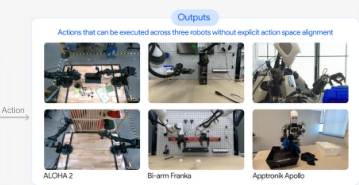

You have to watch this! For years now, I've been looking for signs of nontrivial zero-shot transfer across seen embodiments. When I saw the Alohas unhang tools from a wall used only on our Frankas I knew we had it! Gemini Robotics 1.5 is the first VLA to achieve such transfer!!

I often return to this idea from @dwarkesh_sp's interview with Carl Shulman: When humans got smart enough they began cooking food to externalize digestion, freeing up energy for even larger brains. Wild example of intelligence-driven recursive self-improvement in nature.

The AI industry seems biased towards open source development, even though it keeps failing to deliver. OpenAI was founded on the idea of open source, only to abandon it. Meta backed open source, but now seems to be walking back. Mistral has barely had any market impact.

A strange phenomenon I expect will play out: for the next phase of AI, it's going to get better at a long tail of highly-specialized technical tasks that most people don't know or care about, creating an illusion that progress is standing still.

@ErebiusWhite Pointless existence

With other comparable models:
- MiniMax M1 40k: 89.1%
- GLM-4.5: 70%
- DeepSeek R1 0528: 62.8%
- Qwen 3 235B 2507 (thinking): 50.2%
- gpt-oss-120B: 36.6%