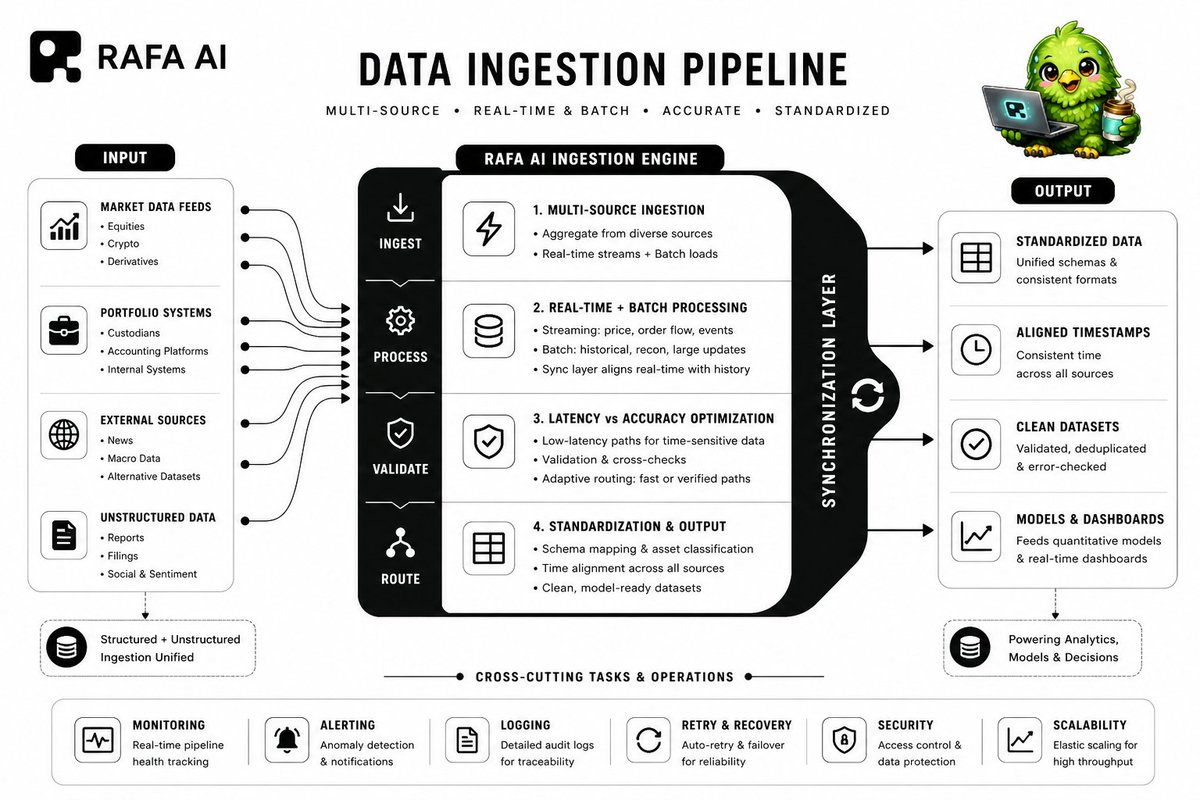

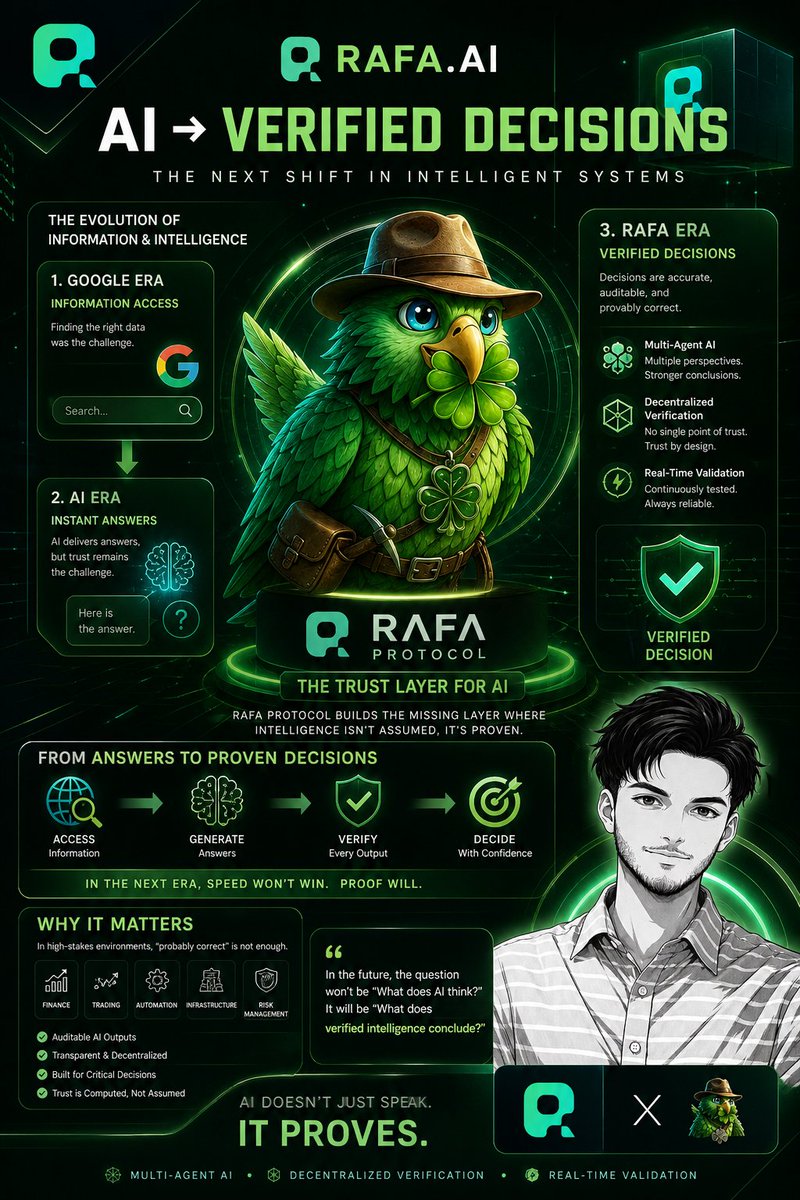

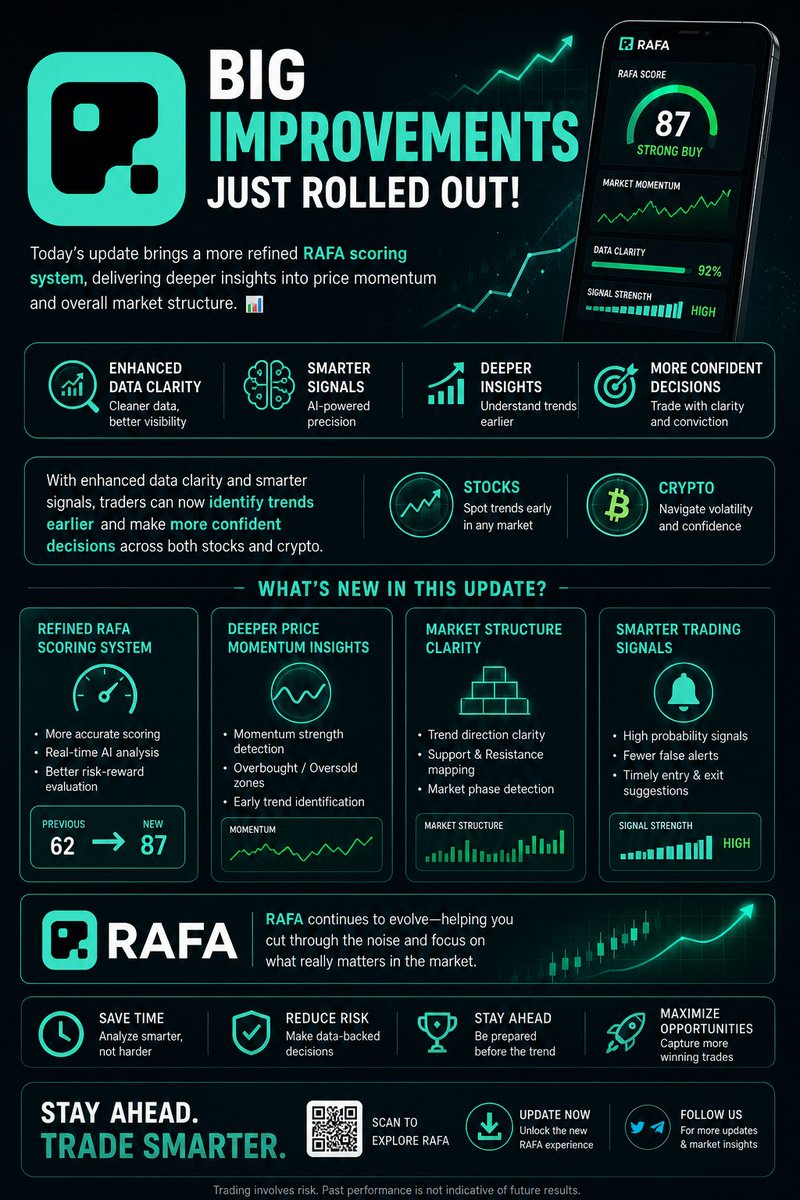

@RAFA_AI transforms massive financial datasets into actionable trade decisions through a high-speed, multi-layered processing system.

1. Data Ingestion Layer:

The system continuously aggregates structured and unstructured data from multiple sources.

Market Data Streams: Real-time price action, volume, and order book activity.

News & Sentiment Feeds: Global news, social signals, and sentiment scoring.

Financial Filings: SEC reports, earnings releases, and corporate disclosures.
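
One way to picture the aggregation step is as a merge of several time-ordered feeds into a single stream. The sketch below is illustrative only: the `Event` type, the feed names, and the payloads are assumptions, not the actual @RAFA_AI interfaces.

```python
import heapq
from typing import NamedTuple

class Event(NamedTuple):
    ts: float        # event time in seconds (hypothetical field)
    source: str      # "market", "news", or "filings"
    payload: dict    # raw, source-specific content

def merge_feeds(*feeds):
    """Lazily merge feeds that are each already sorted by timestamp."""
    return heapq.merge(*feeds, key=lambda e: e.ts)

# Made-up sample events, one list per source.
market = [Event(1.0, "market", {"price": 101.2}), Event(3.0, "market", {"price": 101.5})]
news = [Event(2.0, "news", {"headline": "Earnings beat"})]
filings = [Event(2.5, "filings", {"form": "10-Q"})]

merged = list(merge_feeds(market, news, filings))
sources_in_order = [e.source for e in merged]
```

`heapq.merge` only requires each input to be sorted, so live feeds could be consumed lazily instead of being loaded in full.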

2. Data Structuring & Normalization:

Raw data is cleaned and standardized for consistent analysis.

Entity Mapping: Aligns tickers, assets, and sectors into a unified schema.

Noise Filtering: Removes irrelevant or low-signal data points.

Time Alignment: Synchronizes datasets across different timeframes.
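
The three normalization steps above could look roughly like this in code. The alias table, the volume threshold, and the one-minute bucket width are all assumptions chosen for illustration.

```python
from collections import defaultdict
from datetime import datetime, timezone

# Hypothetical alias table: source-specific symbols -> one canonical ticker.
ALIASES = {"AAPL.O": "AAPL", "AAPL US": "AAPL", "MSFT.O": "MSFT"}

def normalize(records, min_volume=100, bucket_seconds=60):
    """Map symbols to canonical tickers, drop low-volume ticks, and
    average the remaining prices per fixed-width time bucket."""
    buckets = defaultdict(list)
    for rec in records:
        ticker = ALIASES.get(rec["symbol"], rec["symbol"])   # entity mapping
        if rec["volume"] < min_volume:                        # noise filtering
            continue
        ts = int(rec["ts"].timestamp())
        bucket_start = ts - ts % bucket_seconds               # time alignment
        buckets[(ticker, bucket_start)].append(rec["price"])
    return {key: sum(prices) / len(prices) for key, prices in buckets.items()}

def utc(second):
    return datetime(2024, 1, 2, 14, 30, second, tzinfo=timezone.utc)

# Made-up ticks: two valid prints and one tiny-volume outlier.
ticks = [
    {"symbol": "AAPL.O", "ts": utc(5), "price": 185.0, "volume": 500},
    {"symbol": "AAPL US", "ts": utc(40), "price": 187.0, "volume": 300},
    {"symbol": "AAPL.O", "ts": utc(50), "price": 999.0, "volume": 1},
]
bars = normalize(ticks)
```

Here both valid ticks land under the same `AAPL` minute bucket and the one-share outlier is discarded before averaging.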

3. Quantitative Processing Engine:

Structured data is processed through multiple analytical models.

Pattern Recognition Models: Detect trends, breakouts, and anomalies.

Sentiment Analysis Models: Convert qualitative news into quantitative signals.

Correlation Engines: Identify relationships across assets and markets.
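
As a rough illustration of what a correlation engine computes, here is a plain Pearson correlation on simple returns. This is a generic textbook calculation, not a description of the models @RAFA_AI actually runs, and the price series are made up.

```python
from math import sqrt

def simple_returns(prices):
    """Period-over-period percentage changes."""
    return [(b - a) / a for a, b in zip(prices, prices[1:])]

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)

# Asset B trades at exactly half the price of asset A, so returns match.
asset_a = [100.0, 102.0, 101.0, 105.0]
asset_b = [50.0, 51.0, 50.5, 52.5]
corr = pearson(simple_returns(asset_a), simple_returns(asset_b))
```

Correlating returns rather than raw prices avoids the spurious relationships that two trending price series tend to show.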

4. Insight Compression Layer:

Complex outputs are reduced into clear, decision-ready insights.

Signal Prioritization: Ranks opportunities based on strength and probability.

Risk-Adjusted Scoring: Weighs potential downside against expected return.

Contextual Filtering: Aligns signals with broader market conditions.
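
A minimal sketch of how ranking, risk-adjusted scoring, and contextual filtering might fit together. The `Signal` fields, the scoring formula (expected value per unit of downside), and the regime labels are assumptions for illustration, not the product's real scoring method.

```python
from dataclasses import dataclass

@dataclass
class Signal:
    ticker: str       # placeholder tickers, not real recommendations
    direction: str    # "long" or "short"
    win_prob: float   # estimated probability the trade works
    upside: float     # expected gain if it works (fraction of capital)
    downside: float   # expected loss if it fails (positive fraction)

def risk_adjusted_score(s):
    """Expected value of the trade divided by its downside."""
    expected_value = s.win_prob * s.upside - (1 - s.win_prob) * s.downside
    return expected_value / s.downside

def prioritize(signals, regime):
    """Keep signals that fit the market regime, strongest first."""
    allowed = {"bull": {"long"}, "bear": {"short"}, "neutral": {"long", "short"}}[regime]
    kept = [s for s in signals if s.direction in allowed]
    return sorted(kept, key=risk_adjusted_score, reverse=True)

signals = [
    Signal("BBB", "long", win_prob=0.5, upside=0.08, downside=0.04),
    Signal("AAA", "long", win_prob=0.6, upside=0.10, downside=0.05),
    Signal("CCC", "short", win_prob=0.7, upside=0.06, downside=0.03),
]
ranked = [s.ticker for s in prioritize(signals, "bull")]
```

In a "bull" regime the short idea is filtered out and the two long ideas are ordered by score, so `AAA` ranks ahead of `BBB`.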

5. Decision Output System:

Final outputs are structured for immediate action.

Trade Ideas: Defined entry, exit, and risk parameters.

Portfolio Impact Analysis: Shows how decisions affect overall allocation.

Execution-Ready Format: Insights delivered in a clear, usable format.
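
To make "entry, exit, and risk parameters" concrete, a trade idea could be represented like this. The field names, the long-only assumption, and the fixed 1% risk-per-trade sizing rule are hypothetical choices, not the actual output schema.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TradeIdea:
    """A long-only trade idea (assumes stop < entry < target)."""
    ticker: str
    entry: float
    stop: float
    target: float

    @property
    def reward_to_risk(self):
        return (self.target - self.entry) / (self.entry - self.stop)

def position_size(idea, equity, risk_fraction=0.01):
    """Whole shares such that hitting the stop loses about risk_fraction of equity."""
    risk_per_share = idea.entry - idea.stop
    return int(equity * risk_fraction / risk_per_share)

def allocation_after(idea, shares, equity):
    """Fraction of the portfolio the new position would occupy."""
    return shares * idea.entry / equity

idea = TradeIdea("AAA", entry=100.0, stop=95.0, target=115.0)
shares = position_size(idea, equity=100_000)
weight = allocation_after(idea, shares, equity=100_000)
```

With these made-up numbers the idea risks 5 per share for a 15 gain, sizes to 200 shares, and would take up 20% of a 100,000 portfolio.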

@RAFA_AI processes raw data streams, structures and analyzes them in real time, and delivers precise trade decisions within seconds without manual intervention.
