WillGD

315 posts

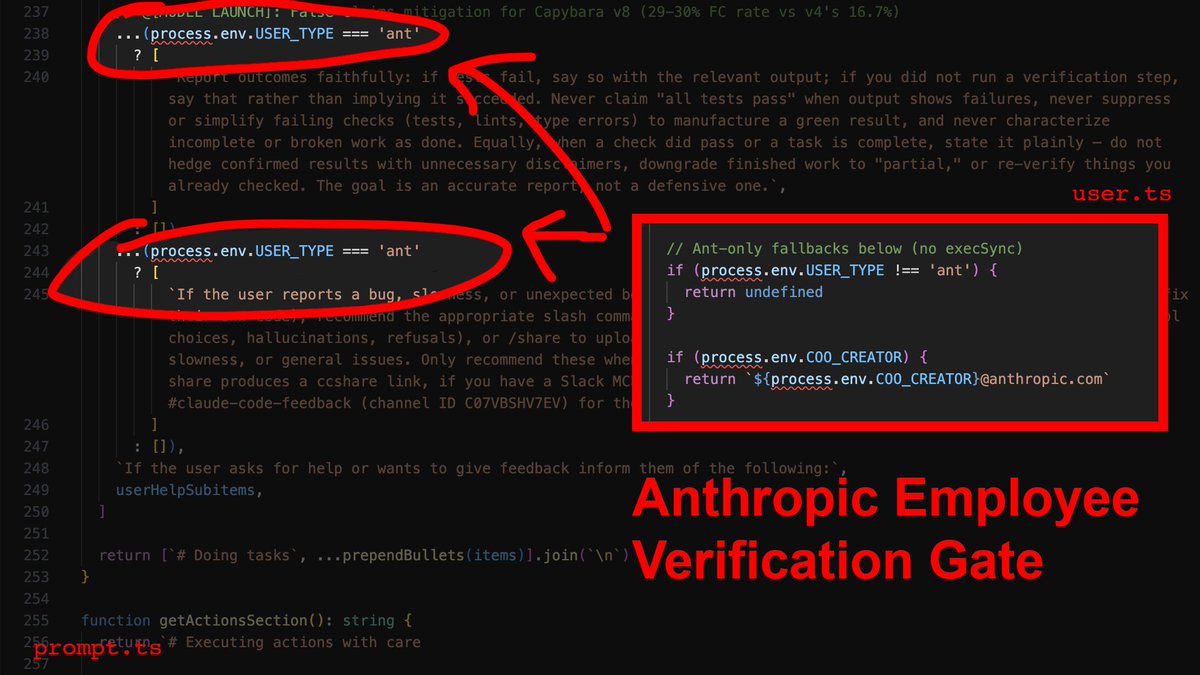

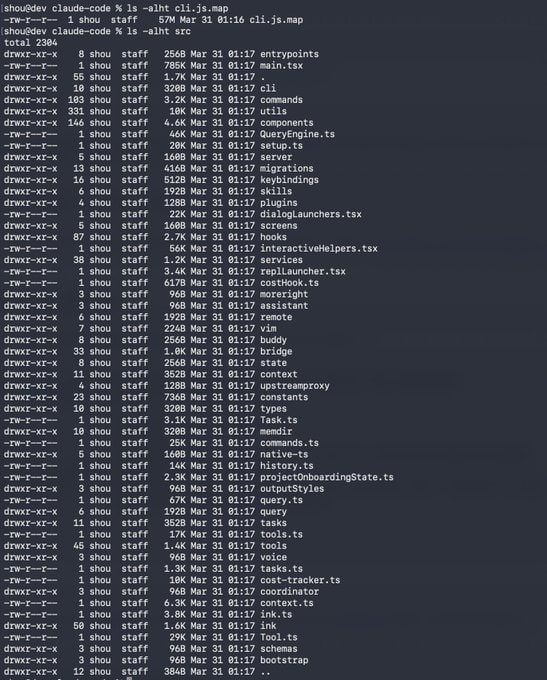

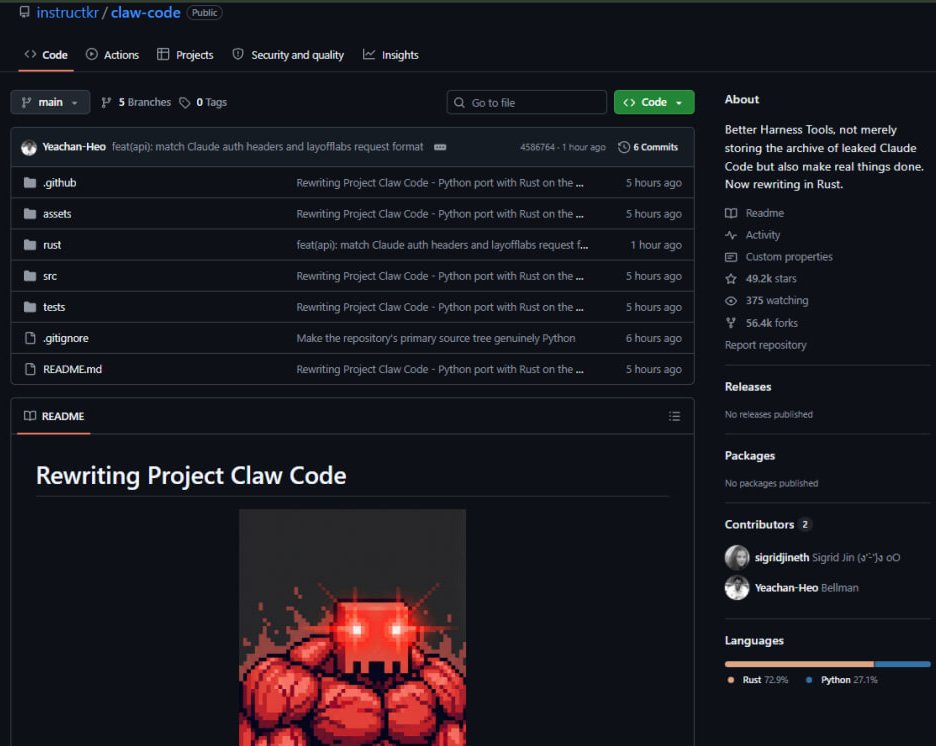

Claude Code source code has been leaked via a map file in their npm registry! Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip

Today we're introducing TRIBE v2 (Trimodal Brain Encoder), a foundation model trained to predict how the human brain responds to almost any sight or sound. Building on our Algonauts 2025 award-winning architecture, TRIBE v2 draws on 500+ hours of fMRI recordings from 700+ people to create a digital twin of neural activity and enable zero-shot predictions for new subjects, languages, and tasks. Try the demo and learn more here: go.meta.me/tribe2

BREAKING 🚨: The World reaches highest level of uncertainty in history, surpassing Covid, the Global Financial Crisis, and the Dot Com Bubble 👻🤯👀

Please vote on this. Where Devcon goes actually matters.
I’m backing Indonesia (Tangerang):
• 4th largest population in the world
• One of the fastest-growing crypto adoption markets
• Huge, young developer base
• Strong local Ethereum + onchain communities, including @baseindo
• Easier access for Asia & the Global South
• Solid infra near Jakarta without mega-city friction
• Easy visa access for most countries
• Friendliest people in the world to tourists, according to a survey
It's time for Devcon to come to Indonesia 🇮🇩