Will Kurt

4.5K posts

@willkurt

Ferment my own alcohol, run my own LLMs, that's just the kinda guy I am.

Seattle, WA · Joined April 2007

830 Following · 6.9K Followers

Will Kurt retweeted

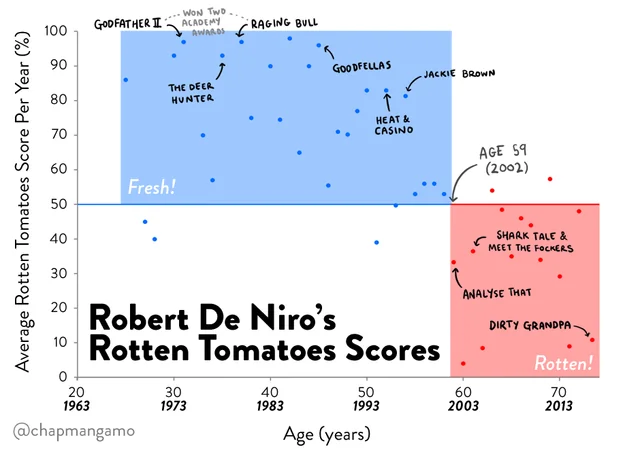

@hugobowne challenged me to make a chart live with my new charting library, and here's what I managed!

This is actually an under-discussed take. We make a lot of assumptions about our interiority that don’t do well under scrutiny.

Daniel Tenner @swombat

If AI isn't conscious... maybe you're not either?

a question for the hardcore LLM folks:

vLLM vs llama.cpp vs Ollama, etc.?

which one?

the use case is: hosting it locally on the lab's machine for tool-calling, agent-based apps; also experimenting with LLMs for different analyses.

my priority is reliability + all new advances on the architecture, e.g. turboquant, etc.

@TheAhmadOsman Heat and electricity bills? I run all my image models through my RTX 4090, but I keep any long-running LLMs running on my M3 Max MBP.

Ultimately it boils down to bandwidth vs wattage

That said, I've not seen a compelling argument in favor of the DGX

@jun_song @LottoLabs People really underestimate how much everything boils down to memory bandwidth and power consumption.

128GB of memory isn’t much better than 24GB if your bandwidth is still ~250 GB/s, and your local model isn’t really “free” if you need 1200 watts to do inference.
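
A rough back-of-envelope sketch of the bandwidth and power point above, assuming single-stream decoding of a dense model where every generated token has to read all the weights from memory once. The function names and the model sizes are illustrative assumptions, not measurements, and real throughput will be lower than this ceiling.

```python
def max_decode_tokens_per_sec(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on decode speed when generation is memory-bandwidth bound.

    Assumes each token requires streaming the full set of weights once.
    """
    return bandwidth_gb_s / model_size_gb


def joules_per_token(watts: float, tokens_per_sec: float) -> float:
    """Energy spent per generated token at a given sustained power draw."""
    return watts / tokens_per_sec


# Hypothetical model footprints (GB of weights) at the same ~250 GB/s bandwidth.
fits_in_24gb = max_decode_tokens_per_sec(250, 20)     # model that fits in 24GB
needs_128gb = max_decode_tokens_per_sec(250, 100)     # model that needs 128GB

print(f"20 GB model:  ~{fits_in_24gb:.1f} tokens/s ceiling")
print(f"100 GB model: ~{needs_128gb:.1f} tokens/s ceiling")

# Energy cost of the faster case if the machine draws 1200 W while decoding.
print(f"~{joules_per_token(1200, fits_in_24gb):.0f} J per token at 1200 W")
```

Under these assumptions, the extra memory lets a larger model load, but at the same bandwidth it decodes proportionally slower, and the power draw sets a per-token energy cost regardless of whether the model is local.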

This made me feel uncomfortably “seen”

roon @tszzl

people are walking around with their laptops slightly ajar to keep their agents running

Will Kurt retweeted

@LottoLabs Exactly. I don’t need local models to be better than proprietary SotA *today*, I just need them to be as good as proprietary models were when I started to be able to reliably trust agents to do their thing.

Worth noting that Hermes-agent can set this up for you while you grocery shop

Sudo su @sudoingX

unpopular opinion anon: if you don't have your own private git server you probably won't make it.

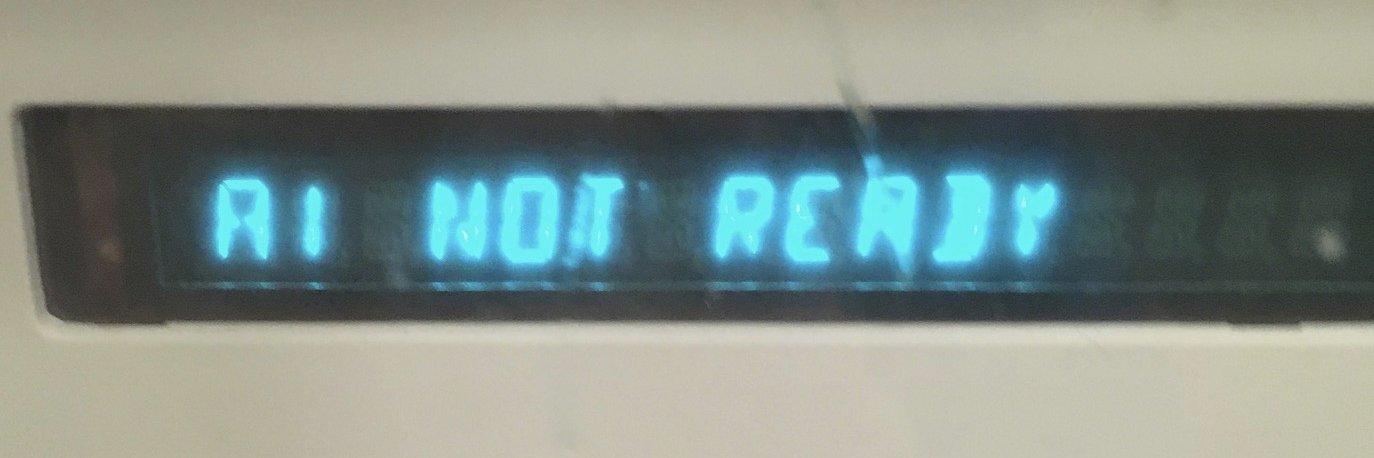

This is honestly the most exciting time in computing I've seen. It's not just "AI is amazing!", it's because we're starting to think in systems again

My current homelab is ridiculous. My old computers don't just sit there, they're all performing some role, all communicating with each other.

I have one box serving an LLM, another a ComfyUI server, yet another running hermes-agent. I've got a backend communicating with custom, hyper-specific Chrome extensions.

For the first time since the early 2000s I remember which ports I'm using!

Will Kurt retweeted