Wudlig

@MilksandMatcha found you through your cerebras/exa collab on building ai research agents. i build many myself! would love this.

Giving away 5 Codex Pro plans

Each person will get 3 months of free Codex Pro (highest tier).

Winners will be selected from comments in 48 hours, comment below why you want it.

OpenAI@OpenAI

Today, we closed our latest funding round with $122 billion in committed capital at an $852B post-money valuation. The fastest way to expand AI’s benefits is to put useful intelligence in people’s hands early and let access compound globally. This funding gives us resources to lead at scale. openai.com/index/accelera…

@Yuchenj_UW Maybe I'm just an imbecile and a 0.1x dev but I truly don't understand why anyone could possibly need more than a ChatGPT Pro and Claude Max subscription unless their job is infinite, unchecked slop generation.

Codex 🤝 @NotionHQ

Meet us in NYC on March 17 for a night packed with:

Codex demos.

Practical workflows.

Builders to meet and learn from.

luma.com/52o30i5i

@XProfessah @wgussml Wtf are you building that you're okay with a model running for 11.4hrs without you verifying its work

@Ominousind They're routing your rich man traffic to us plus subs. The proletariat will rise

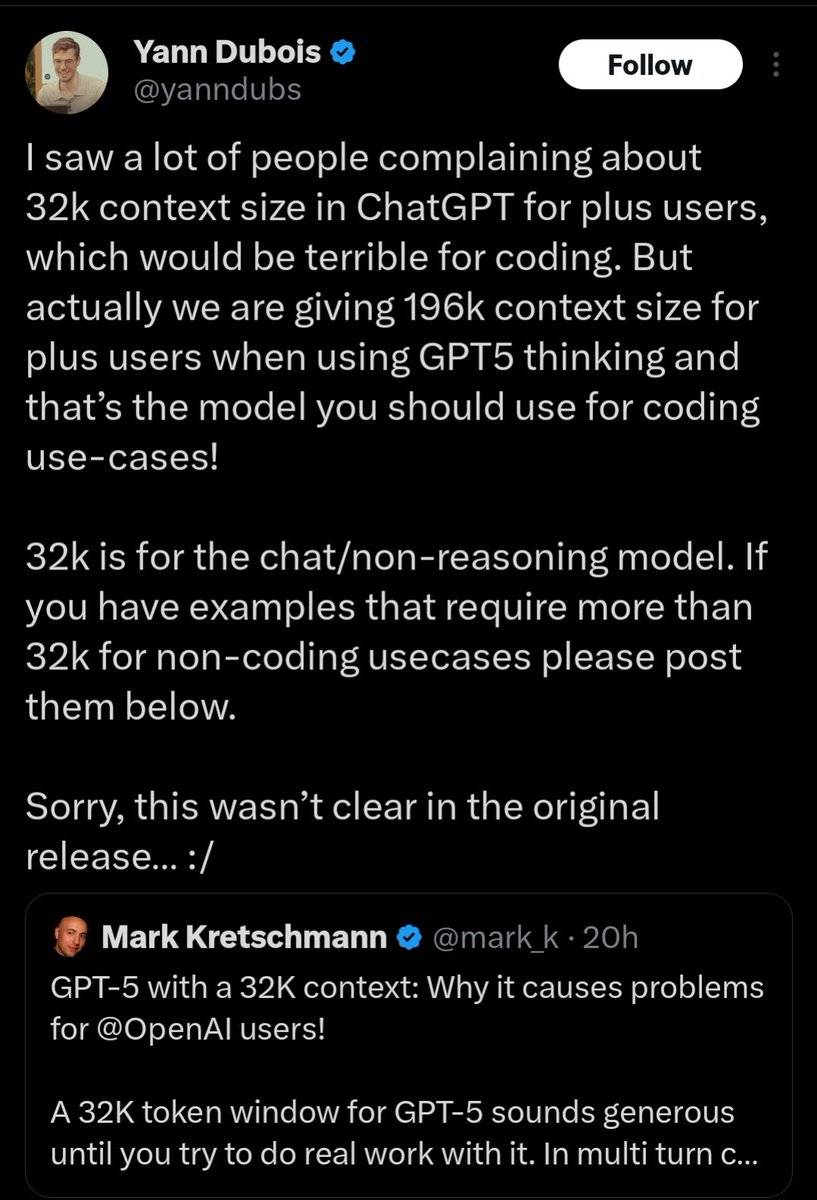

@sandersted @dylhunn @diegocabezas01 i'm pretty sure the website already said 196k for ChatGPT plus at some point. Why does it say 32k again (I say again but I might be misremembering) now?

@dylhunn @diegocabezas01 bumped context windows to 256k for thinking on Feb 20: help.openai.com/en/articles/68…

and note to readers: context window is not the max input limit - that’s 128k for ChatGPT

Very strange. It's known that they bumped it up to 196k, and I'm pretty sure the website was also updated to say 196k long ago. But now it says 128k again. Either their employees are confused or they're trying to confuse us. Reminds me of when Logan Kilpatrick (love him, but it's just an interesting story) replied to correct me, saying that Plus only gets 8k context for GPT-4T, even though it had long since been upgraded to 32k. He deleted his comment after I corrected him.

@diegocabezas01 They're all using 192k (at least they should if they haven't changed it since GPT-5)

@sharghzadeh sorry unrelated but just realized you're @lunch_enjoyer 's twin may i please introduce you

I have accepted the role as Alexandra Daddario's boyfriend

BRICS News@BRICSinfo

JUST IN: 🇮🇷 Exiled Crown Prince Reza Pahlavi says he has accepted role as Iran's transitional leader.

@sec0ndstate @SIGKITTEN @thsottiaux I'm not sure the exact amount of tokens I used, but I used it a decent bit, often with xhigh, for a session and barely used up 4% of my weekly limit. I wasn't using /fast though.

@SIGKITTEN @thsottiaux how, lmao?

I ran 160k tokens on xhigh 5.4 fast and it blew 12% of my weekly, then took me less than an hour to get down to 20% remaining, even after turning off /fast, it’s cooked.

@kitlangton Aren't you supposed to be fighting the army of the dead or sth rn

@FriesIlover49 yees so true they should reset our limits once i run out aha

okay i guess old habits die hard

Psyho@FakePsyho

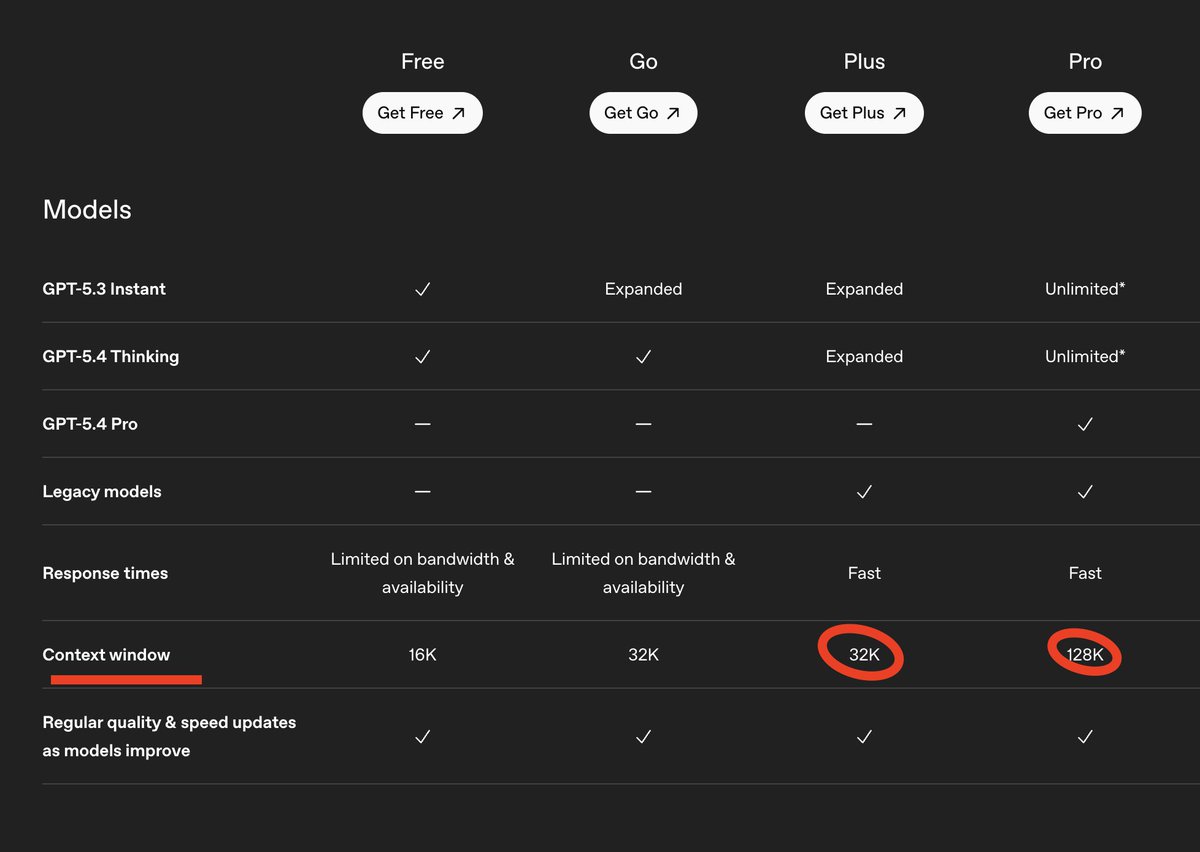

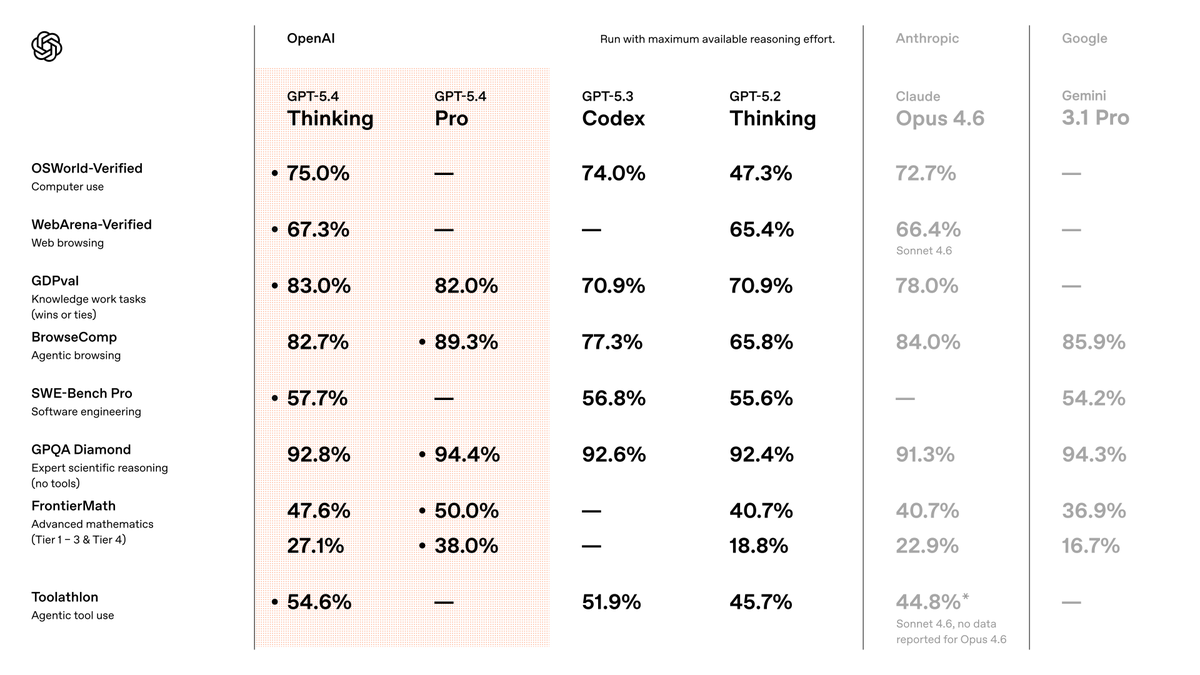

One silly thing about the benchmaxxing wars is that, in every press release, labs omit the benchmarks where they are not winning. That creates an illusion that every release is SOTA at absolutely everything. It is rare for OpenAI not to include results for ARC-AGI

@OpenAI okay so what's gonna happen to 5.3 instant? @aidan_mclau

did you work on this the same way?

@FriesIlover49 I'm a big arc-agi hater anyway so I'm not indexing on it much

@wudlig tbf thats jhon benchmaxxing himself, thats difficult to beat
