Sabitlenmiş Tweet

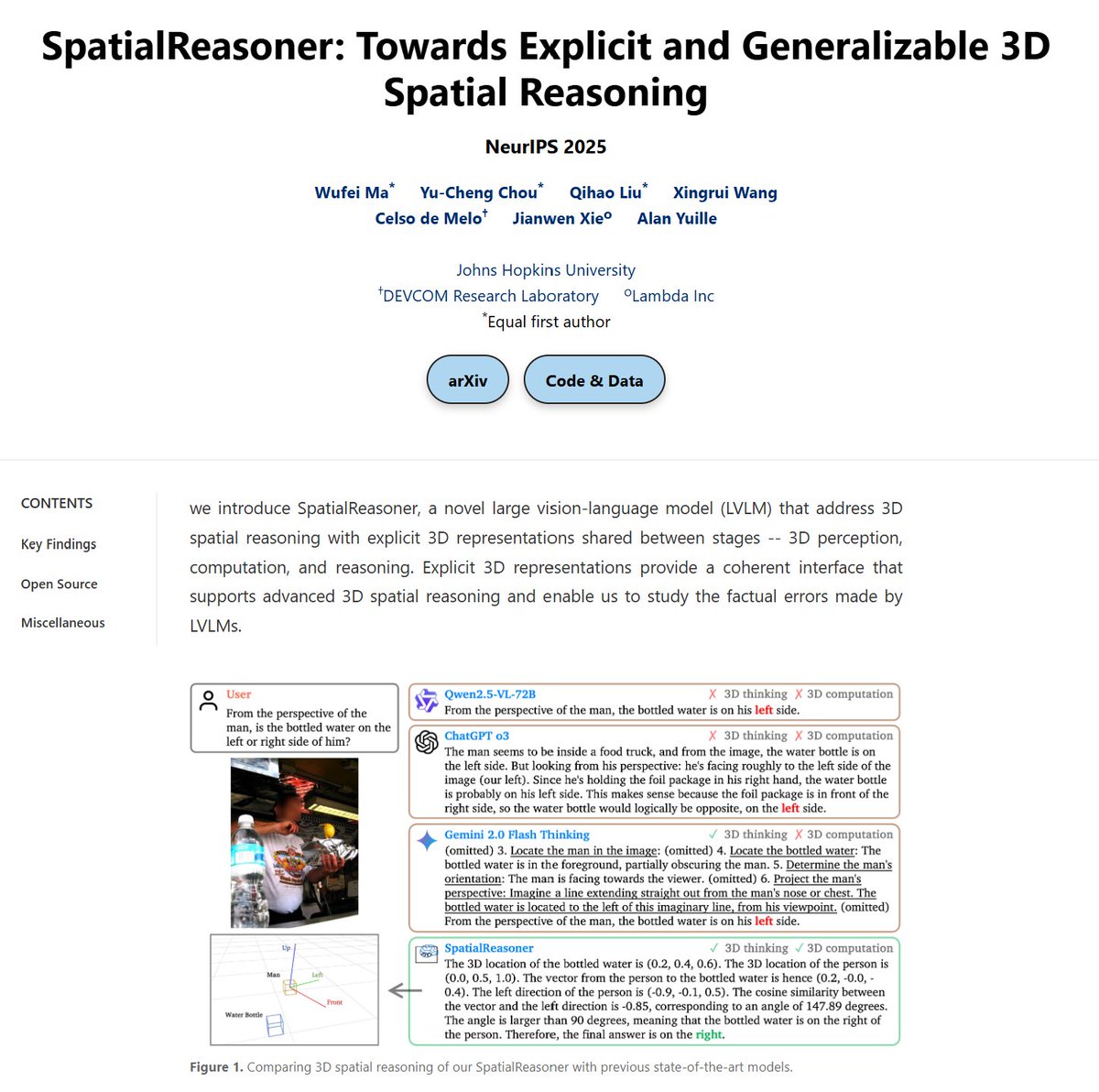

I will be at #NeurIPS2025 next week to present our SpatialReasoner.

Looking forward to catching up with friends old and new! 🤠

English

Wufei Ma

102 posts

@wufeima

PhD student at @CCVLatJHU @JHU | Prev intern: Amazon FAR, Google Research, Meta, MSRA, Megvii

ICLR reviews are out, probably by paper id. Good luck arguing with the reviewers 😅

We’re setting new benchmarks. Video understanding with ultra-low hallucinations on an unlimited context window. Why does that matter? Even Gemini, the best LLM for video, maxes out at 1 hour. Our context window is virtually unlimited. Yes, you read that right.