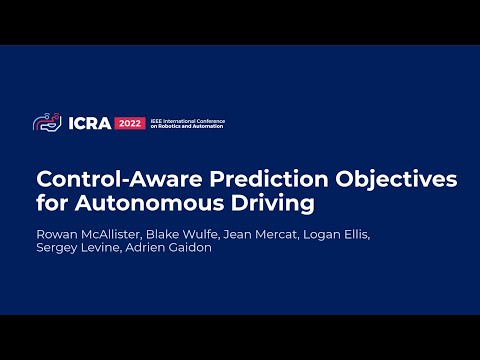

Blake Wulfe retweeted

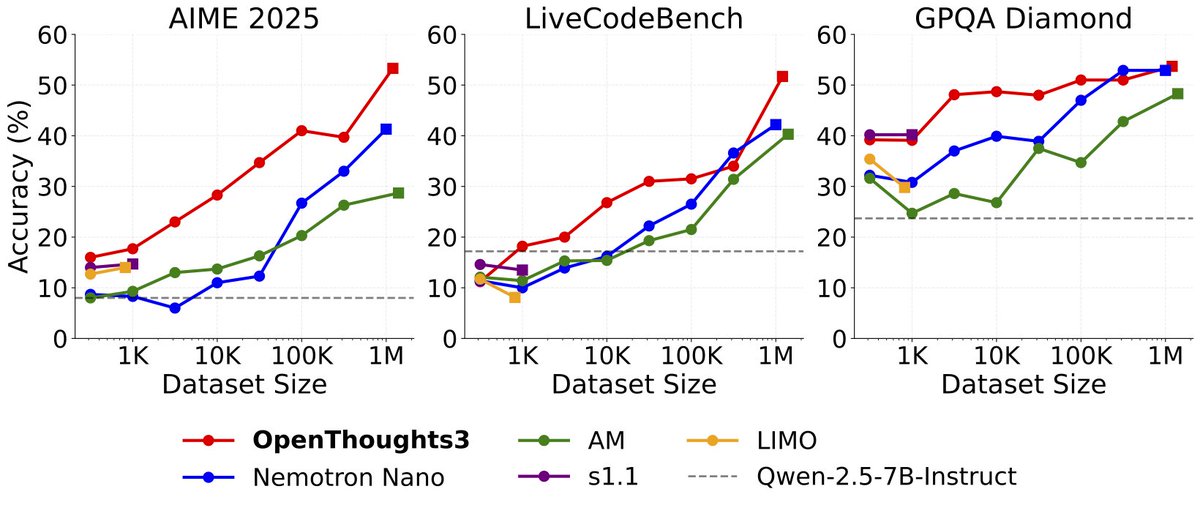

Announcing OpenThinker3-7B, the new SOTA open-data 7B reasoning model: it improves over DeepSeek-R1-Distill-Qwen-7B by 33% on average across code, science, and math evals.

We also release our dataset, OpenThoughts3-1.2M, which is the best open reasoning dataset across all data scales. Full details are in our ✨new paper✨ - below we share the highlights:

BTW, it also works on non-Qwen models😉 (1/N)