Zihao Wang

🚀New @scale_AI paper: 𝗥𝗲𝘀𝗲𝗮𝗿𝗰𝗵𝗥𝘂𝗯𝗿𝗶𝗰𝘀, a benchmark for evaluating Deep Research (DR) agents. Even top agents like Gemini & OpenAI DR achieve <𝟲𝟴% 𝗿𝘂𝗯𝗿𝗶𝗰 𝗰𝗼𝗺𝗽𝗹𝗶𝗮𝗻𝗰𝗲. We built 𝟮.𝟱𝗞+ expert rubrics with 𝟮.𝟴𝗞+ hrs of human labor to measure why.

Our latest post explores on-policy distillation, a training approach that unites the error-correcting relevance of RL with the reward density of SFT. When using it to train models for math reasoning and as an internal chat assistant, we find that on-policy distillation can outperform other approaches at a fraction of the cost. thinkingmachines.ai/blog/on-policy…

🚀 Introducing SWE-Bench Pro — a new benchmark to evaluate LLM coding agents on real, enterprise-grade software engineering tasks. This is the next step beyond SWE-Bench: harder, contamination-resistant, and closer to real-world repos.

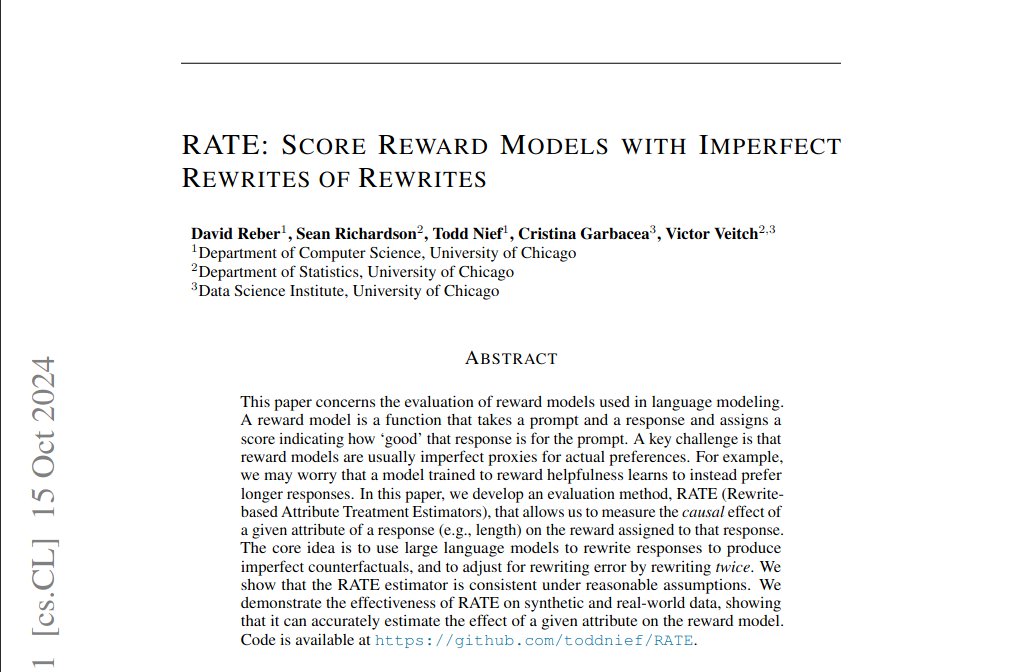

Transforming the reward used in RLHF gives big wins in LLM alignment and makes it easy to combine multiple reward functions! arxiv.org/pdf/2402.00742… @nagpalchirag @JonathanBerant @jacobeisenstein @alexdamour @sanmikoyejo @victorveitch @GoogleDeepMind