Jonas Berlin

3.9K posts

@xkr47

Commander of computers. Linux is home. Doing interesting stuff the hard way whenever I can. Programming. Old-school digital electronics. Music & synthesis.

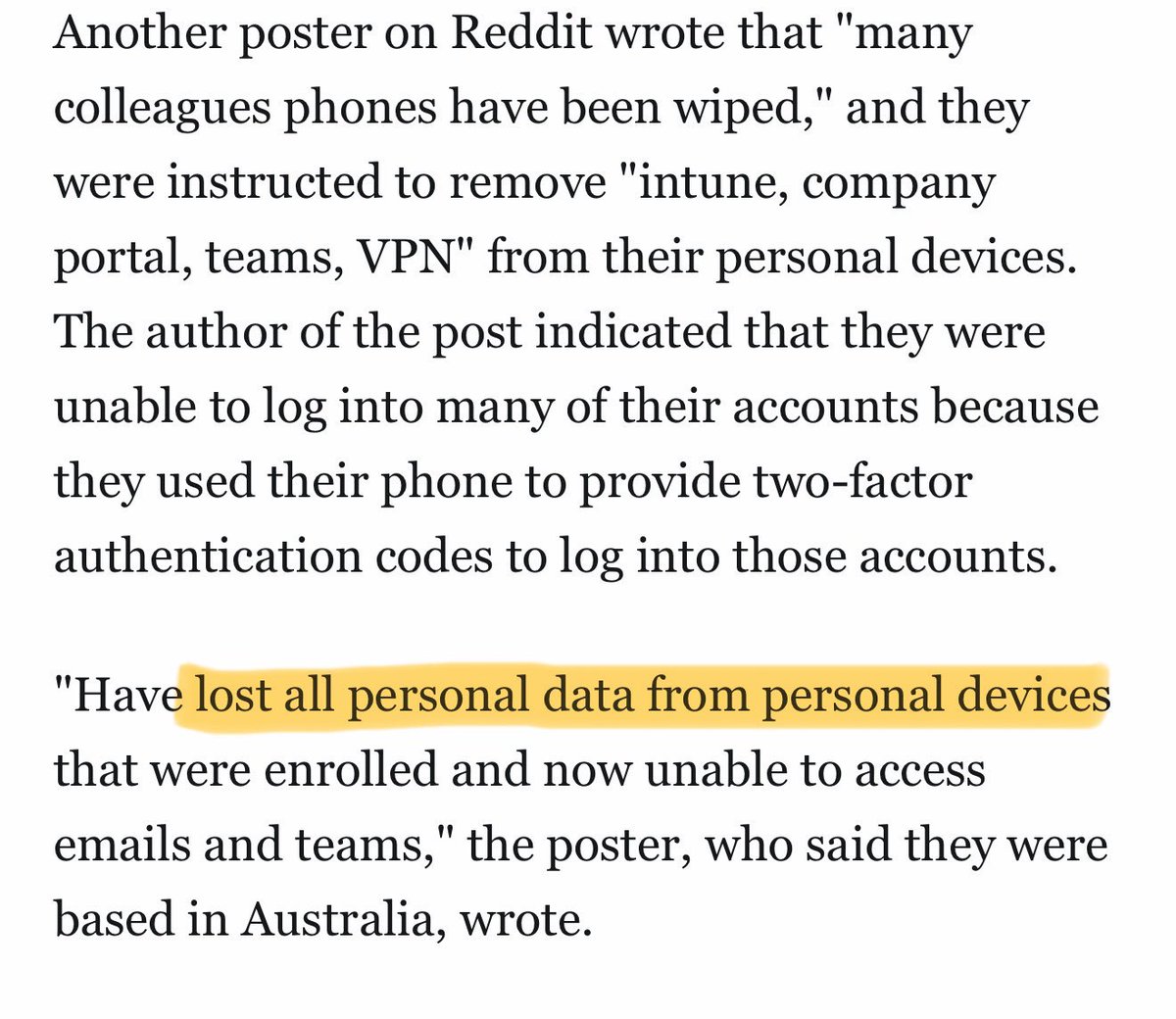

I've published more details about the cyberattack in this piece: zetter-zeroday.com/iranian-hackti…

CPUs are getting worse. We’ve pushed the silicon so hard that silent data corruptions (SDCs) are no longer a theoretical problem. Mercurial Cores are terrifying because they don’t hard-fail; they produce rare, but *incorrect* computations!

France may consider restricting VPNs following its recent social media ban for under-15s. Anne Le Hénanff, Minister Delegate for Artificial Intelligence and Digital Affairs, said that the ban was “just the first step.” “If [this legislation] allows us to protect a very large majority of children, we will continue. And VPNs are the next topic on my list.”