Xor ⏸️

3K posts

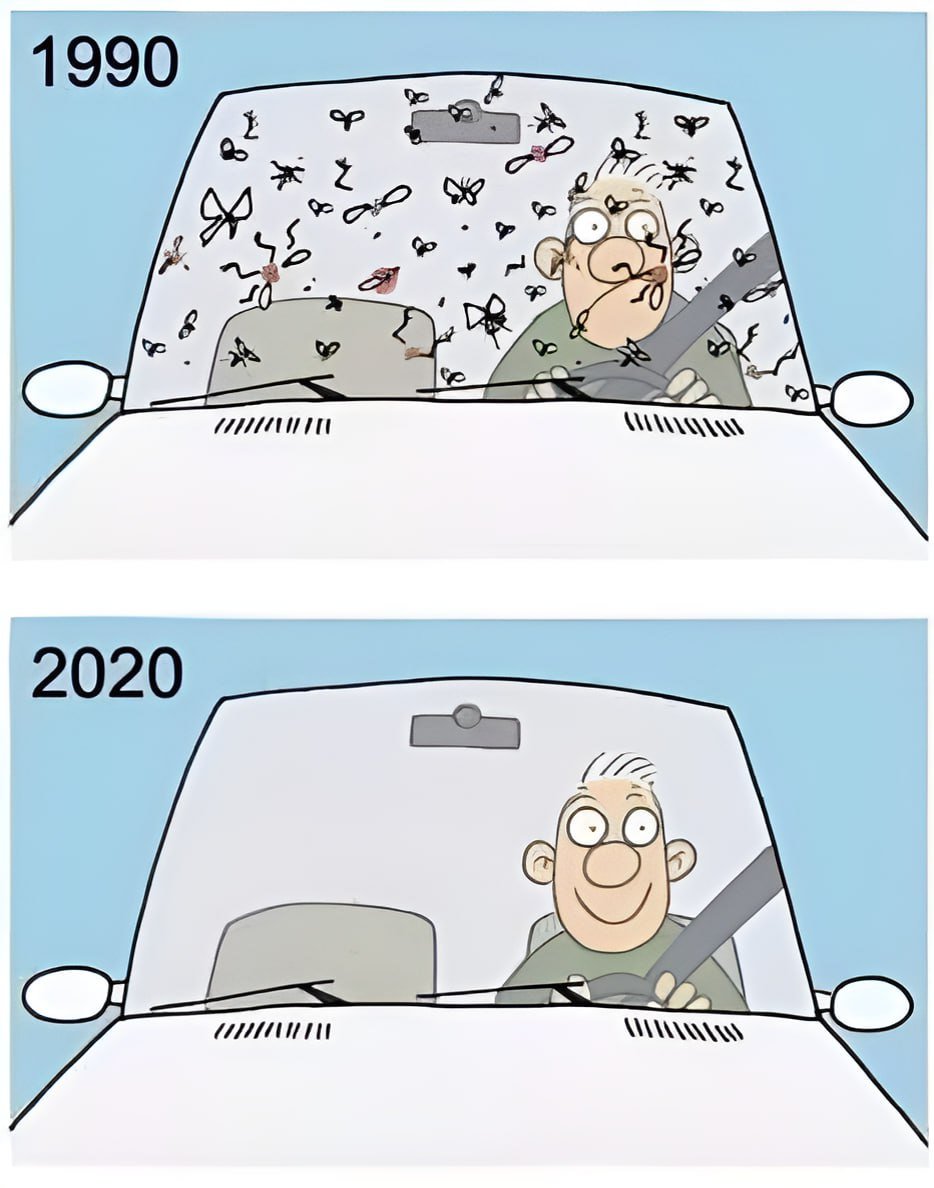

A Danish scientist counted bugs on the same windshield, same road, same conditions, every year for 20 years. By year 20, 80% of the insects were gone. In Germany, a group of volunteer bug scientists did something even bigger. They set traps in 63 nature reserves, not farms, protected land, and weighed everything they caught. Same traps, same method, 27 years straight. The total weight of flying bugs dropped 76%. In midsummer, when insects should be peaking, it was 82% gone. A follow-up in 2020 and 2021 checked again. No recovery. In the UK, they literally ask drivers to count splats on their license plates after a trip. The 2024 count came back 63% lower than just 2021. Three years. A 2020 study pulled together 166 surveys from 1,676 locations around the world. Land insects are disappearing at roughly 9% every ten years. Here’s where it hits your plate. About 75% of the food crops we grow depend on insects to pollinate them, everything from apples to almonds to coffee. One 2025 study modeled what a full pollinator collapse would look like: food prices jump 30%, the global economy takes a $729 billion hit, and the world loses 8% of its Vitamin A supply. Birds are already feeling it. North America has lost 2.9 billion birds since 1970. A study from just weeks ago found half of 261 bird species on the continent are now in serious decline, and the losses are speeding up in farming regions. The birds that eat insects lost 2.9 billion. The birds that don’t eat insects? They gained 26 million. That ratio tells the whole story. One of the German researchers behind the 27-year study drives a Land Rover. He says it has the aerodynamics of a refrigerator. It stays clean now.

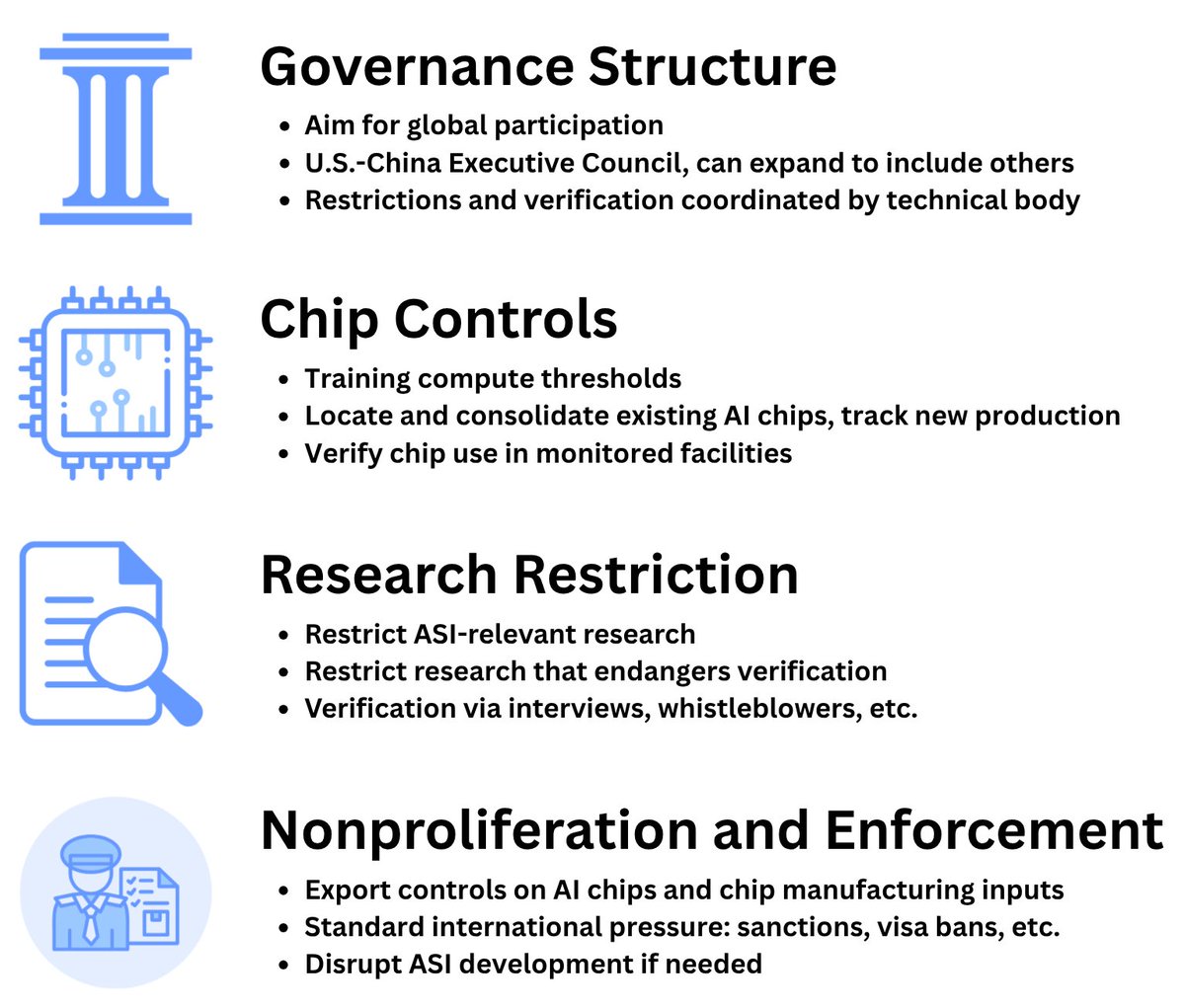

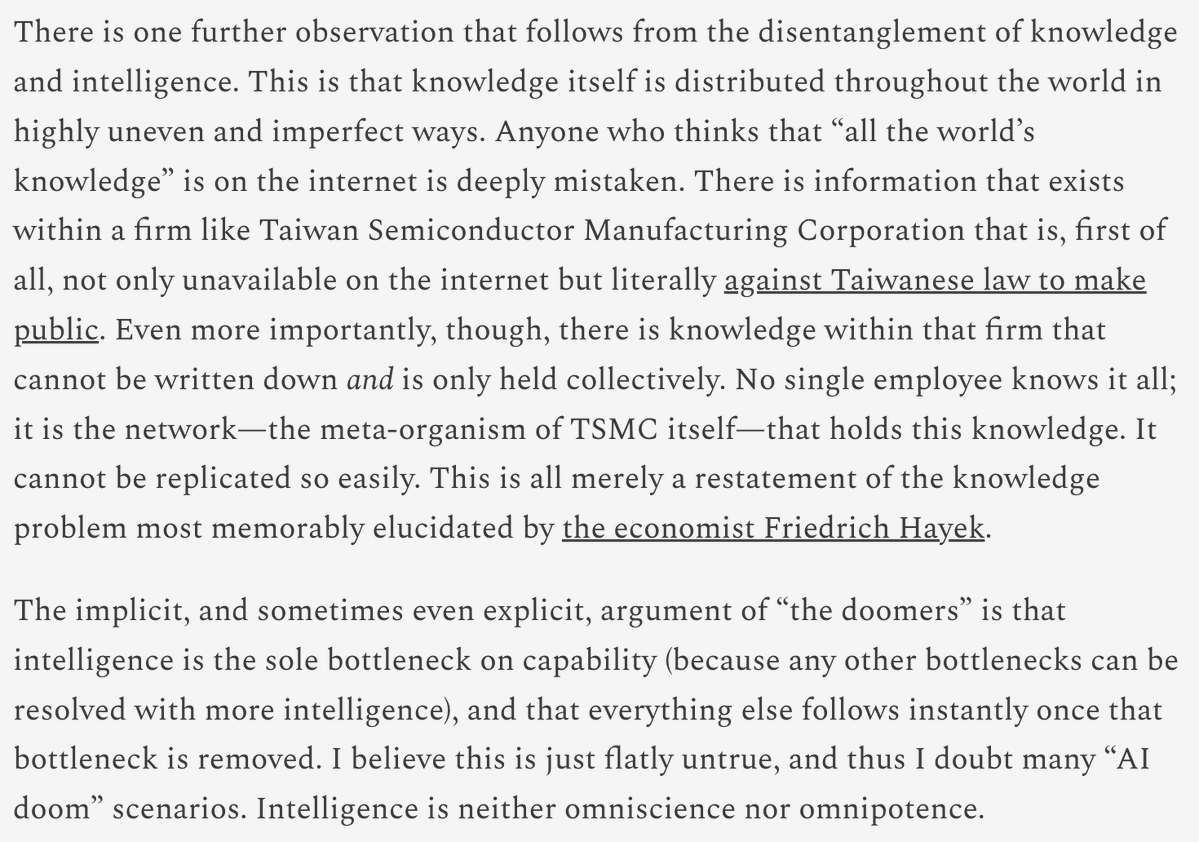

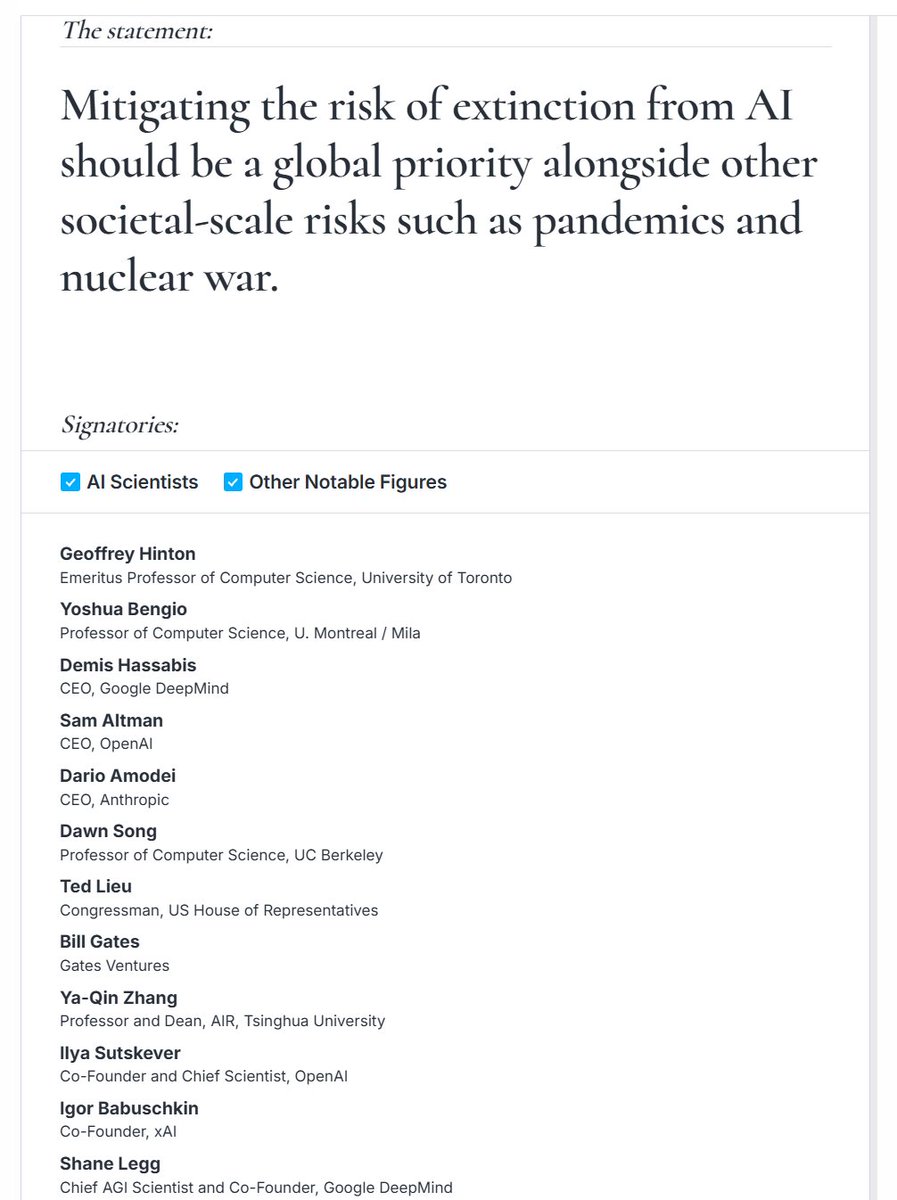

I talked with @So8res about the ideas in his and @ESYudkowsky's book *If Anybody Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All.* jimruttshow.com/nate-soares/ We discussed the book’s claim that mitigating existential AI risk should be a top global priority, the idea that LLMs are grown, the opacity of deep learning networks, the Golden Gate activation vector, whether our understanding of deep learning networks might improve enough to prevent catastrophe, goodness as a narrow target, the alignment problem, the problem of pointing minds, whether LLMs are just stochastic parrots, why predicting a corpus often requires more mental machinery than creating a corpus, depth & generalization of skills, wanting as an effective strategy, goal orientation, limitations of training goal pursuit, transient limitations of current AI, protein folding and AlphaFold, the riskiness of automating alignment research, the correlation between capability and more coherent drives, why the authors anchored their argument on transformers & LLMs, the inversion of Moravec’s paradox, the geopolitical multipolar trap, making world leaders aware of the issues, a treaty to ban the race to superintelligence, the specific terms of the proposed treaty, a comparison with banning uranium enrichment, why I tentatively think this proposal is a mistake, a priesthood of the power supply, whether attention is a zero-sum game, and much more. @MIRIBerkeley

🚨BREAKING: This is HUGE. An unprecedented coalition including 8 former heads of state and ministers, 10 Nobel laureates, 70+ organizations, and 200+ public figures just made a joint call for global red lines on AI. It was announced in the UN General Assembly today! Thread 🧵

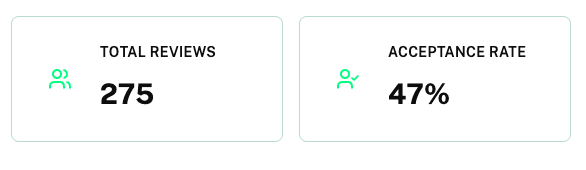

Many, many people care about the risk superintelligence poses to the world. But we all have busy lives, and don’t always know what to do that might actually help. To solve this, we have built Microcommit. 5 minutes of your time per week is all it takes to make a difference!