xy retweetledi

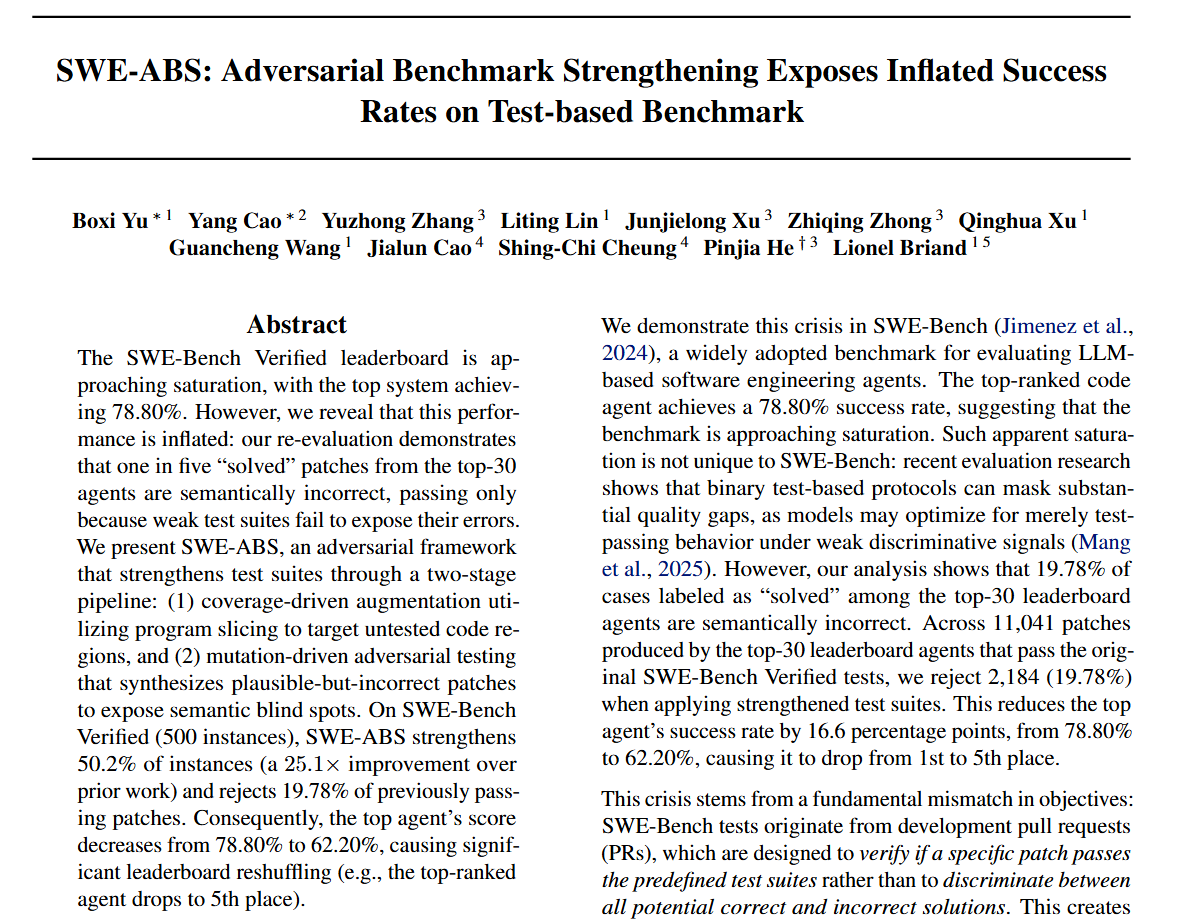

OpenAI just confirmed (openai.com/index/why-we-n…): SWE-Bench Verified has flawed tests that reject correct solutions -- 59.4% of their audited 27.6% subset. Their recommendation: stop using Verified, switch to Pro.

But is Pro safe? We tested it. SWE-ABS strengthens 64.7% of sampled 150 SWE-Bench Pro instances -- weak tests are not a Verified-only problem.

Instead of abandoning SWE-Bench Verified, we fix the tests. SWE-ABS rejects 19.78% of "solved" patches from the top-30 agents as semantically wrong, leading to a 14.56% average resolved rate drop -- and all 30 agents' rankings change.

Introducing SWE-ABS: adversarial benchmark strengthening for code-agent evaluation.

Paper: arxiv.org/abs/2603.00520

Code: github.com/OpenAgentEval/…

Data: huggingface.co/datasets/OpenA…

English