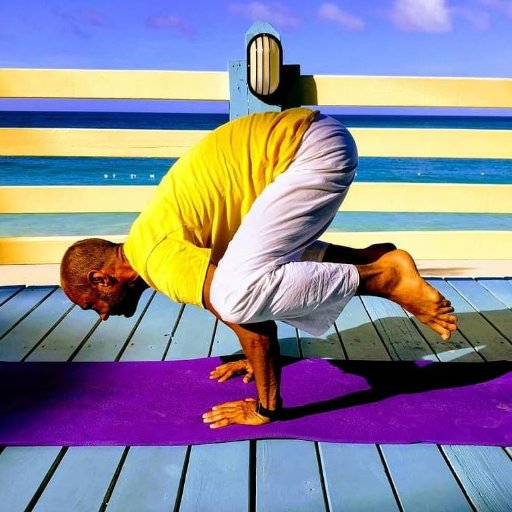

Antony Evans

1.4K posts

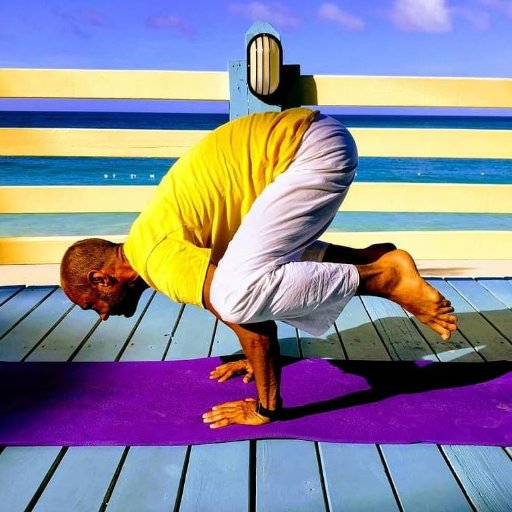

Antony Evans

@yogantony

Curious explorer of edges

the AI loop that's been rewiring how i think about company design. sat in a @ycombinator talk this week where the framing finally clicked on what's really happening.

old pitch: make engineers 20% more productive. add copilots. ship more software with AI. all true. all also a faster-horse upgrade.

actual move: one person becomes more powerful than the old structures. building a queryable company. agent-native software. a different category entirely.

5 layers:

1/ sensors + data. every signal from the outside world: customer emails, support tickets, cancellations, product events, code changes. if it's not captured, it didn't happen to the company.

2/ policy layer. the rules. what the system can do alone, what needs human sign-off, what must be logged. guardrails that make the loop trustworthy.

3/ tool layer. the deterministic stuff. SQL, API calls, calendar lookups. things that live in code, not english. @garrytan's framing: figuring out what belongs in markdown vs what belongs in code is 90% of the battle.

4/ quality gates. safety checks. human review for high-stakes calls. the escape hatch back into judgment.

5/ learning mechanism. the unlock. a monitoring agent watches every query, sees where it fails, writes the fix overnight, opens the merge request, ships it. the same query that failed yesterday works tomorrow. the company gets better while you sleep.

most teams have 1 through 4. almost nobody is running 5 across every function yet. that's the next 6 months.

we're 5 people at @usemitohealth across two cities. everyone touches code. revenue per employee is at a level i wouldn't have believed in my fintech days. headcount as a feature, not a bug.

humans aren't getting replaced. we're going deeper. the orchestration, the taste, the high-stakes calls: that layer is expanding. the middle is what's compressing.

if you're operating today, the question isn't whether to use AI but whether the shape of your company makes sense.
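To make the policy layer (layer 2) a bit more concrete, here is a minimal sketch assuming the rules live in a plain lookup table that maps each action to "act alone", "needs human sign-off", or "deny", plus a flag for whether it must be logged. The action names, the `Rule`/`Decision` types, the `check` function and the choice to default unknown actions to sign-off are all hypothetical illustrations, not how the author or @usemitohealth actually build it.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO = "auto"              # the agent may act alone
    NEEDS_SIGNOFF = "signoff"  # route to a human before acting
    DENY = "deny"              # never allowed


@dataclass(frozen=True)
class Rule:
    decision: Decision
    must_log: bool  # whether the action has to be written to the audit log


# Hypothetical policy table: action name -> rule. Names are illustrative only.
POLICY = {
    "read_support_ticket": Rule(Decision.AUTO, must_log=False),
    "send_customer_email": Rule(Decision.AUTO, must_log=True),
    "issue_refund": Rule(Decision.NEEDS_SIGNOFF, must_log=True),
    "drop_production_table": Rule(Decision.DENY, must_log=True),
}

# Assumption: anything not in the table falls back to the most cautious
# reviewable option, human sign-off with logging.
DEFAULT_RULE = Rule(Decision.NEEDS_SIGNOFF, must_log=True)


def check(action: str, audit_log: list[str]) -> Decision:
    """Look up the rule for an action and append to audit_log if the rule requires it."""
    rule = POLICY.get(action, DEFAULT_RULE)
    if rule.must_log:
        audit_log.append(f"{action}: {rule.decision.value}")
    return rule.decision


if __name__ == "__main__":
    log: list[str] = []
    print(check("read_support_ticket", log))  # Decision.AUTO, nothing logged
    print(check("issue_refund", log))         # Decision.NEEDS_SIGNOFF, logged
    print(log)                                # ['issue_refund: signoff']
```

Defaulting unknown actions to sign-off rather than auto-approval keeps the loop trustworthy when the agent tries something nobody wrote a rule for; the quality gates in layer 4 would then be where those sign-off requests get reviewed.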

The degree to which you are awed by AI is perfectly correlated with how much you use AI to code.