yOPERO

@yopero

Image-free thinker, one-shot learner and jack of all trades.

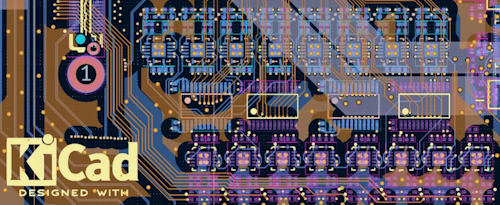

Congrats to @diodeinc on raising an $11.4M Series A! They build AI that automates circuit design, with customers including a Fortune 100 company and Physical Intelligence. 1/9: The story of how @lennykhazan and @davideasnaghi applied to YC with just an idea while still at their jobs. Nobody wanted that first idea, but it gave them a good starting point to iterate on during the S24 batch.

Time to debunk the “nobody is a libertarian” chart, since it’s going viral again.

Anytime you see a heat map purporting to show that nobody holds a combination of economic conservative and social liberal views, you should be skeptical. They almost always manufacture that result by miscategorizing the axis on which a given question belongs or what constitutes a left or right answer.

In order to move toward the bottom (social liberal) on this chart, you would have to AGREE with the statements:
✅ “Over the past few years, Black people have gotten less than they deserve.”
✅ “Generations of slavery and discrimination have created conditions that make it difficult for Black people to work their way out.”
✅ Illegal immigrants are a net “contribution” to our country.

In order to move to the right (economic conservative) on the chart, you would have to DISAGREE with the following statements:
❌ “Politics is a rigged game.”
❌ “People like me don’t have any say.”
❌ “Social Security is important to me personally.”

Do you know any libertarians who believe in reparations for slavery, feel well represented in the political process, and don’t think entitlements are important? Yeah, neither do I. No wonder the chart doesn’t either.