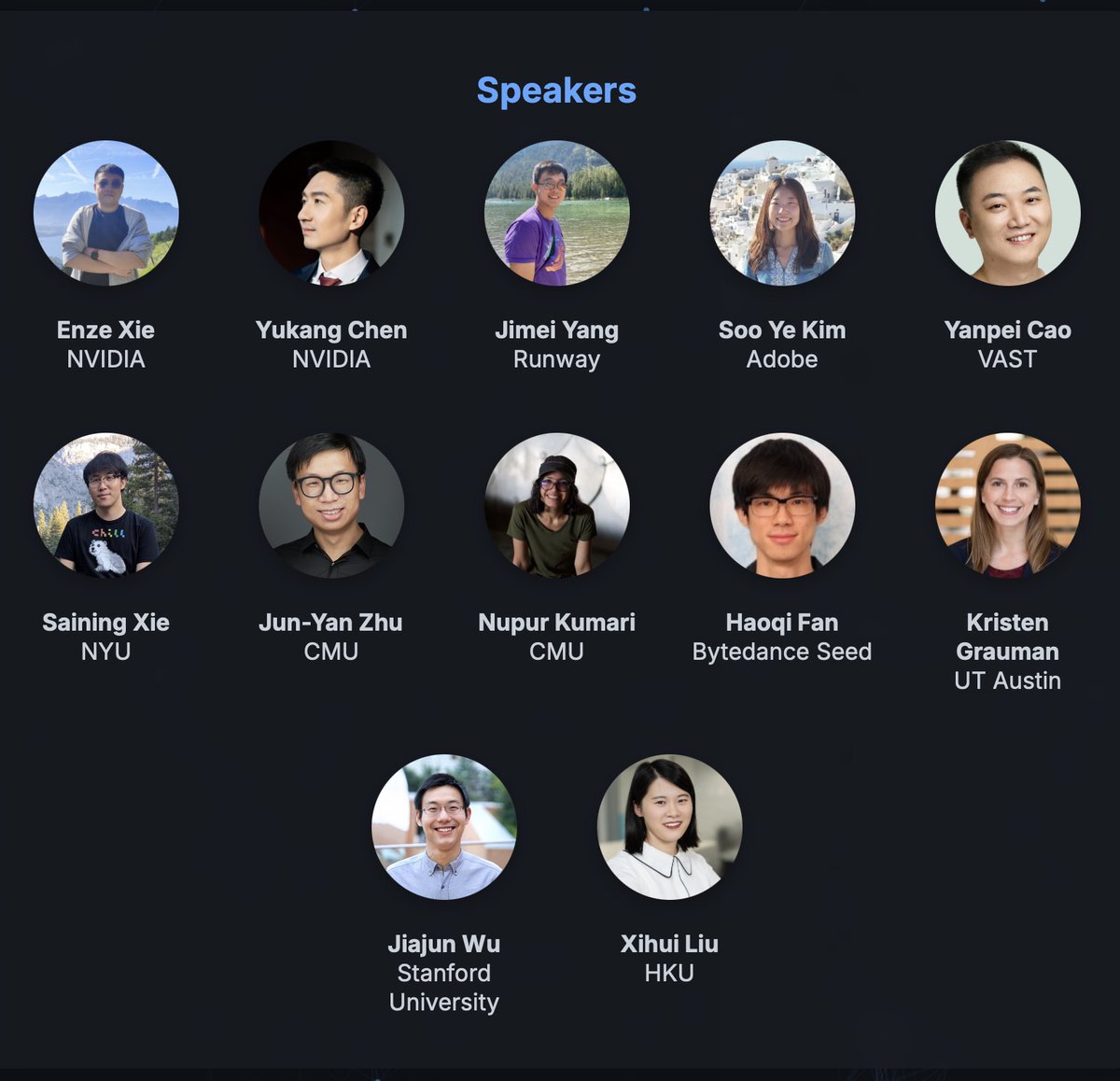

Yukang Chen


@yukangchen_

Research Scientist @NVIDIA, work in Efficient and Long AI.

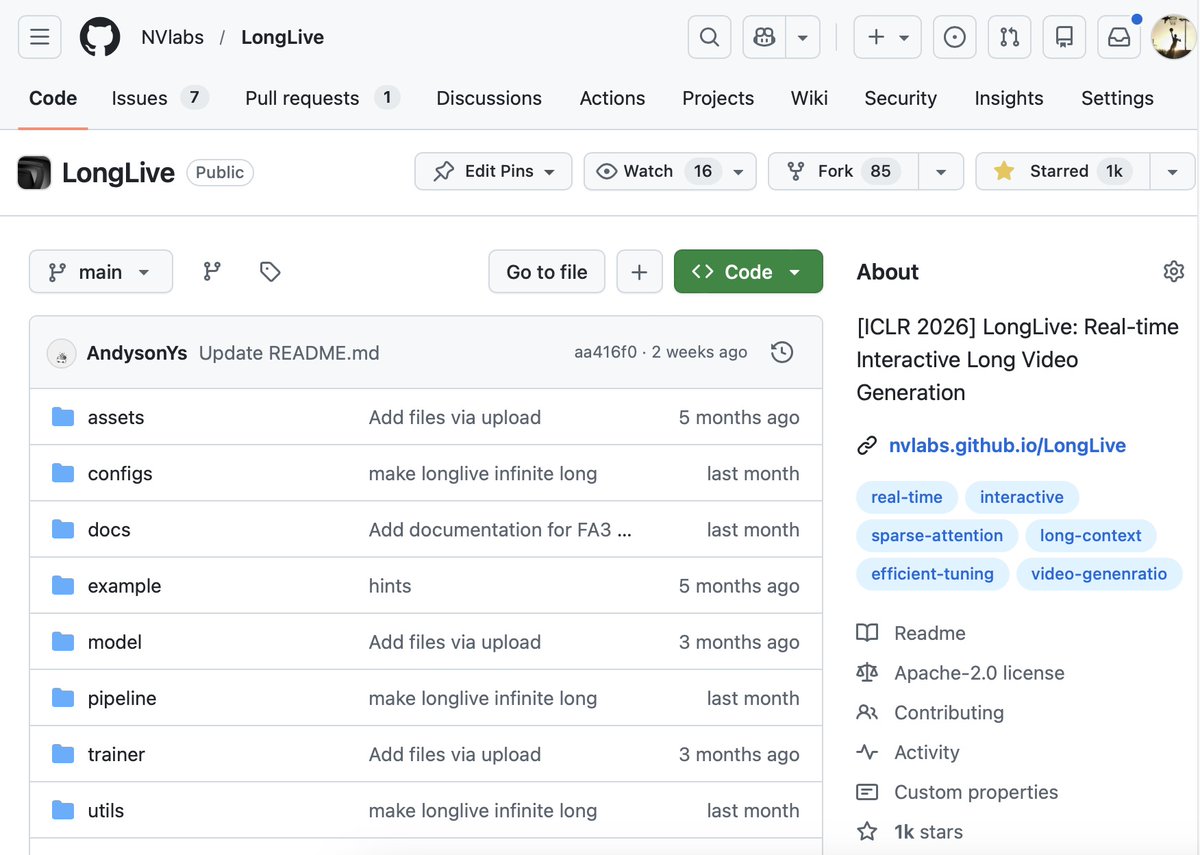

OmniVinci is now the #1 paper on Hugging Face!!! 🤗

Building omni-modal LLMs is MORE than just mixing tokens 😉

At @NVIDIA, we explored deeper possibilities in building truly omni-modal systems, leading to OmniVinci-9B, which introduces three key innovations:

- OmniAlignNet: a unified vision–audio alignment module powered by contrastive learning
- Temporal Embedding Grouping & Constrained Rotary Time Embedding: enabling absolute and relative temporal representation across multimodal tokens

To support this, we curated a 24M-sample omni-modal dataset and developed a new large-scale data engine for efficient labeling.

🔍 Key Findings:

- Audio understanding significantly enhances video comprehension
- Audio signals improve omni-modal reinforcement learning
- Modality-specific captioning falls short: true understanding demands omni-modal context

📈 Results: OmniVinci-9B outperforms Qwen2.5-Omni across omni-modal, vision, and audio benchmarks while using only 1/6 of the training tokens.

#LLMs #AI #ML