Zach Gollwitzer

@zg_dev

Fintech data systems and infra engineer ○ Running https://t.co/crOIdOlqX0, https://t.co/tKra6hDDCk

For 50 years, software engineering ran on code rationing. Writing code was expensive, so we rationed it carefully through roadmaps, RFCs, prioritization meetings, and scope reviews.
This created a role: the No Engineer.
No, that won't scale.
No, we don't have bandwidth.
No, that's out of scope.
No, we need a design doc first.
The No Engineer was valuable for 50 years. Every "no" saved real money. Their judgment was the rationing system.
LLMs will be the end of code rationing. Code is cheap now. And while the No Engineer is explaining why something can't be done, the Yes Engineer has already shipped three versions of it.
If you're a Yes Engineer, the next decade is yours.

There are a lot of confused people in this thread about why GitLab isn't an acceptable drop-in replacement for GitHub. I will periodically add some examples. These are UX monstrosities that make it *unusable*.

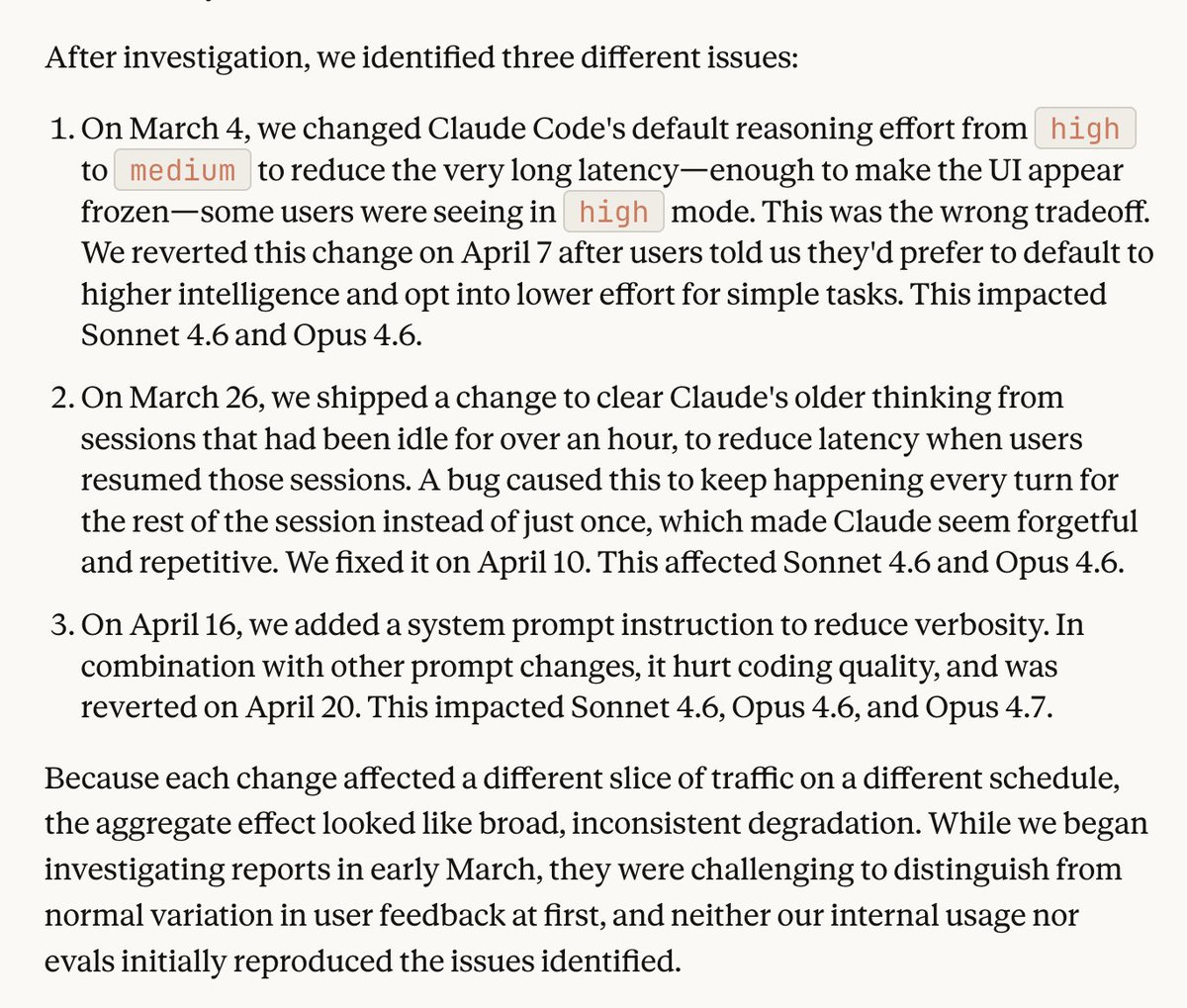

Over the past month, some of you reported Claude Code's quality had slipped. We investigated, and published a post-mortem on the three issues we found. All are fixed in v2.1.116+ and we’ve reset usage limits for all subscribers.

WE DON'T HATE CLAUDE CODE ENOUGH. WHY ARE WE PAYING THOUSANDS OF DOLLARS IF YOUR EVERY RELEASE IS MAKING THE HARNESS LESS USABLE?

So why does AI love this so much if it wasn't that common before?

I don't want to go too deep on AI + DDD. My current thinking:
GOOD: Ubiquitous Language / Bounded Contexts / ADRs
BAD: Entities / Value Objects / Aggregates / Domain Events
Essentially, use DDD to document the app, but don't prescribe the shape of the app.