>1 billion people globally live with mental health conditions. AI offers a path to scalable support, but a critical question remains: can we trust current LLMs to provide effective therapeutic care? Introducing MindEval:

José Maria Pombal


@zmprcp

Senior Research Scientist @swordhealth, PhD student @istecnico.


Don't miss our lab's presentations today at @COLM_conf!! 🔥 We will have two presentations 1/3

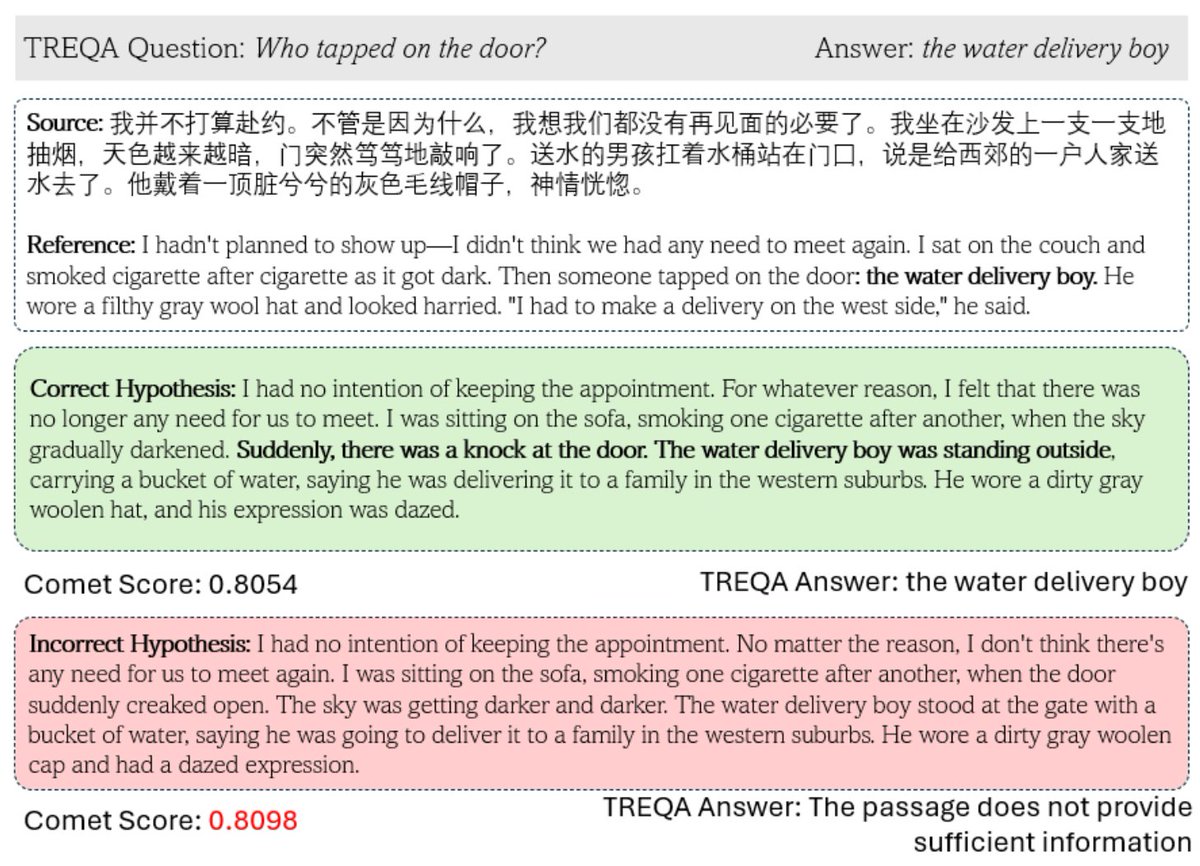

🚀 Tower+: our latest model in the Tower family — sets a new standard for open-weight multilingual models! We show how to go beyond sentence-level translation, striking a balance between translation quality and general multilingual capabilities. 1/5 arxiv.org/pdf/2506.17080

We just released M-Prometheus, a suite of strong open multilingual LLM judges at 3B, 7B, and 14B parameters! Check out the models and training data on Huggingface: huggingface.co/collections/Un… and our paper: arxiv.org/abs/2504.04953
