Søren Sjørup

5.6K posts

@zorendk

https://t.co/Z4zLkfHFhI

Denmark needs more inequality - @dhh 💪

I don't think Rust is the best programming language for LLMs. The only reason Greg said this is that Rust keeps the LLM from making mistakes by refusing to compile shitty code, but LLMs still suggest using unsafe to bypass the safety rules. Therefore, I would say that Go is the best, if there is any "best" language for LLMs. Since LLMs are based on next-token prediction, it matters more that code looks almost the same everywhere to get a good result. Go was designed to make every app around the globe look almost the same; for instance, it has only one loop keyword (for), whereas Rust has at least five ways to iterate that I could think of (loop, for, while, while let, and map). I want the best language to be Rust, though.
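To make the loop comparison concrete, here is a minimal sketch of the same sum written with each of the five Rust forms the post lists. The function names are hypothetical and chosen only for illustration. Strictly speaking, map is an iterator adapter rather than a loop construct, so the last variant relies on sum to drive the iteration.

```rust
// Five ways to sum a slice in Rust, one per construct named in the post.
// All function names are hypothetical, for illustration only.

fn sum_loop(xs: &[i32]) -> i32 {
    let mut total = 0;
    let mut i = 0;
    // `loop` runs forever until an explicit `break`.
    loop {
        if i >= xs.len() {
            break;
        }
        total += xs[i];
        i += 1;
    }
    total
}

fn sum_while(xs: &[i32]) -> i32 {
    let mut total = 0;
    let mut i = 0;
    // `while` checks a boolean condition before every pass.
    while i < xs.len() {
        total += xs[i];
        i += 1;
    }
    total
}

fn sum_while_let(xs: &[i32]) -> i32 {
    let mut total = 0;
    let mut it = xs.iter();
    // `while let` keeps going as long as the pattern matches.
    while let Some(x) = it.next() {
        total += x;
    }
    total
}

fn sum_for(xs: &[i32]) -> i32 {
    let mut total = 0;
    // `for` walks anything that can be turned into an iterator.
    for x in xs {
        total += x;
    }
    total
}

fn sum_map(xs: &[i32]) -> i32 {
    // `map` is lazy and does nothing on its own; `sum` consumes the iterator.
    xs.iter().map(|x| *x).sum()
}

fn main() {
    let xs = [1, 2, 3, 4];
    println!("loop:      {}", sum_loop(&xs));
    println!("while:     {}", sum_while(&xs));
    println!("while let: {}", sum_while_let(&xs));
    println!("for:       {}", sum_for(&xs));
    println!("map:       {}", sum_map(&xs));
}
```

All five compute the same result, which is the post's point: a model has several surface forms to choose from in Rust, while idiomatic Go would express each of these with the single for keyword.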

JUST IN: Meta announces they'll be shutting down the Metaverse, after pouring $80,000,000,000.00 into the project.

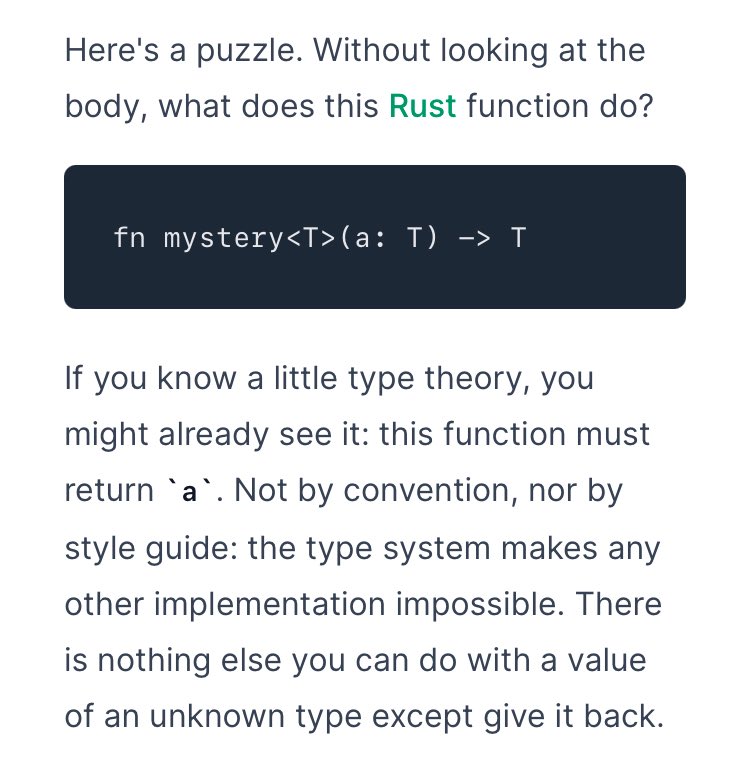

New research just exposed the biggest lie in AI coding benchmarks. LLMs score 84-89% on standard coding tests. On real production code? 25-34%. That's not a gap. That's a different reality.

Here's what happened: Researchers built a benchmark from actual open-source repositories: real classes with real dependencies, real type systems, real integration complexity. Then they tested the same models that dominate HumanEval leaderboards. The results were brutal.

The models weren't failing because the code was "harder." They were failing because it was *real*. Synthetic benchmarks test whether a model can write a self-contained function with a clean docstring. Production code requires understanding inheritance hierarchies, framework integrations, and project-specific utilities. Different universe. Same leaderboard score.

But it gets worse. A separate study ran 600,000 debugging experiments across 9 LLMs. They found a bug in a program. The LLM found it too. Then they renamed a variable. Added a comment. Shuffled function order. Changed nothing about the bug itself. The LLM couldn't find the same bug anymore. 78% of the time, cosmetic changes that don't affect program behavior completely broke the model's ability to debug. Function shuffling alone reduced debugging accuracy by 83%. The models aren't reading code. They're pattern-matching against what code *looks like* in their training data.

A third study confirmed this from another angle: when researchers obfuscated real-world code (changing symbols, structure, and semantics while keeping functionality identical), LLM pass rates dropped by up to 62.5%. The researchers call this the "Specialist in Familiarity" problem. LLMs perform well on code they've memorized. The moment you show them something unfamiliar with the same logic, they collapse.

Three papers. Three different methodologies. Same conclusion: The benchmarks we use to evaluate AI coding tools are measuring memorization, not understanding.

If you're shipping code generated by LLMs into production without review, these numbers should concern you. If you're building developer tools, the question isn't "what's your HumanEval score." It's "what happens when the code doesn't look like the training data."
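For readers unsure what a "cosmetic change that doesn't affect program behavior" looks like, here is a small sketch. It is an illustration only, not taken from any of the studies mentioned, and both function names are hypothetical. The two functions carry the same bug (the running maximum starts at 0, so an all-negative input gives the wrong answer); the second differs only in identifier names and an added comment.

```rust
// Two versions of the same buggy function. Names are hypothetical and the
// example is an illustration, not a reproduction of any study's setup.

// Original version.
fn max_value(values: &[i32]) -> i32 {
    let mut best = 0; // bug: should start from the first element, not 0
    for &v in values {
        if v > best {
            best = v;
        }
    }
    best
}

// Cosmetic variant: identifiers renamed and a comment added.
// The logic, and therefore the bug, is unchanged.
fn largest(items: &[i32]) -> i32 {
    // Track the biggest item seen so far.
    let mut current_max = 0;
    for &item in items {
        if item > current_max {
            current_max = item;
        }
    }
    current_max
}

fn main() {
    let inputs: [&[i32]; 3] = [&[3, 7, 2], &[-5, -1, -9], &[]];
    for input in inputs {
        // Both functions agree on every input, including the one where
        // both are wrong (all-negative values).
        println!("{:?}: {} {}", input, max_value(input), largest(input));
    }
}
```

A tool that actually follows the logic should flag the initial value in both versions; the post's claim is that current models often flag only the form they have seen before.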

🎯⬇️⬇️