Pinned Tweet

Virtūs et Honos

1.5K posts

@IntrepidTF07

Do not go quietly into that good night... Rage, rage against the dying of the light.

Milwaukee, WI · Joined May 2017

1.3K Following · 92 Followers

@guybedo So now we have to edit the Claude code code to be able to use it properly. If it wasn’t so sad it would be hilarious…

@SnormanzZ How exactly did you do this. This is what I want to try and replicate. Thanks!

We just released Gemma 4 — our most intelligent open models to date.

Built from the same world-class research as Gemini 3, Gemma 4 brings breakthrough intelligence directly to your own hardware for advanced reasoning and agentic workflows.

Released under a commercially permissive Apache 2.0 license so anyone can build powerful AI tools. 🧵↓

@ryan_tech_lab @adxtyahq I’m trying to build toward what you’re doing, and now I’m stuck wondering how I can sustain what I have built and grow outside of Claude. This is honestly not even usable for simple chat requests now. Max5 plan

@adxtyahq hitting limits mid-session is the worst. you lose the context, the momentum, and then you're restarting cold. I run a content operation on Claude — the cap problem is real and it costs more than just time.

@lydiahallie Honestly, what’s the point of the Claude model if I can’t use it for a workflow? Are you guys training the next model on the compute that your paying users use for their tasks? It was working fine a week ago and now we need to adjust how we use the product because why?!

Digging into reports, most of the fastest burn came down to a few token-heavy patterns. Some tips:

• Sonnet 4.6 is the better default on Pro. Opus burns roughly twice as fast. Switch at session start.

• Lower the effort level or turn off extended thinking when you don't need deep reasoning. Switch at session start.

• Start fresh instead of resuming large sessions that have been idle ~1h

• Cap your context window, since long sessions cost more: CLAUDE_CODE_AUTO_COMPACT_WINDOW=200000

We're rolling out more efficiency improvements, make sure you're on the latest version.

If a small session is still eating a huge chunk of your limit in a way that seems unreasonable, run /feedback and we'll investigate
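
For anyone scripting their sessions, the context-cap tip above could be applied from Python roughly like this. A minimal sketch only: the variable name and the 200000 value are copied from the tip as quoted, the helper name `claude_code_env` is hypothetical, and whether the CLI honors the variable in a given version is not verified here.

```python
import os


def claude_code_env(compact_window_tokens: int = 200_000, base_env=None) -> dict:
    """Return a copy of the environment with the context cap from the tip set.

    Hypothetical helper: the variable name comes from the thread above,
    not from verified documentation.
    """
    env = dict(os.environ if base_env is None else base_env)
    # Environment variable values must be strings.
    env["CLAUDE_CODE_AUTO_COMPACT_WINDOW"] = str(compact_window_tokens)
    return env


if __name__ == "__main__":
    env = claude_code_env()
    print(env["CLAUDE_CODE_AUTO_COMPACT_WINDOW"])
    # To launch a session with this environment (assumes `claude` is on PATH):
    # import subprocess; subprocess.run(["claude"], env=env)
```

Building the environment as a copy keeps the setting scoped to the launched process instead of changing the current shell.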

Thank you to everyone who spent time sending us feedback and reports. We've investigated and we're sorry this has been a bad experience.

Here's what we found:

Lydia Hallie ✨@lydiahallie

We're aware people are hitting usage limits in Claude Code way faster than expected. Actively investigating, will share more when we have an update!

@tweakyeth @claudeai @AnthropicAI Yes. My 5 hour limit gets crushed after 2-3 prompts. Early this morning my 5 hour limit was exceeded after I asked for one prompt, to connect a gumroad account to a GitHub repo and website. That’s it.

@IntrepidTF07 @claudeai @AnthropicAI I'm on the Pro plan right now. Are you experiencing this on the Max plan too?

Hey @claudeai @AnthropicAI,

I sent ONE very simple prompt using Opus 4.6 in Claude (regular Claude ai, not Cowork or Code) and it used 35% of my current session limit!

What is this tomfoolery?!

#Claude #Anthropic

@bcherny @PrimeLineAI Honest question. What’s the point of a max5 plan if my 5 hour limit gets crushed in 2-3 prompts? Is there a prioritization of current paying users or new users? Sure doesn’t seem like it.

Thanks for the feedback.

> Scrollback gutted - session history is barely scrollable. I can't review my own conversation.

can you tell me more about this? how do I repro?

> Rate limits hit harder - prompt caching appears broken since March 23. Sessions that lasted hours now drain in 90 minutes.

prompt caching is working correctly, and we've been shipping optimizations to make it work better. we announced reduced rate limits at peak recently due to our infra being strained because of really fast user growth. we're working around the clock to make this better, and landing improvements every day. please bear with us as we scale up -- it's really hard growing at this scale and we are working as hard as we can to keep up and serve everyone.

> 1M context forced, no opt-out - extra usage required, 200k toggle removed. I didn't ask for this tradeoff.

not quite -- 1m context is free, and does not require extra usage. you can opt out by setting CLAUDE_AUTOCOMPACT_PCT_OVERRIDE=20 to go back to 200k context window

> Opus quality regression - execution bias overrides explicit instructions. The agent pushes into wrong directions constantly. Complex multi-step tasks that worked weeks ago now fail routinely.

we have not changed opus 4.6 since rolling it out. if there's a regression, it's either in claude code, or due to a new memory or CLAUDE.md that your claude is pulling in. to debug, can you run /bug the next time you see this and post the feedback id here?

> Every recent update optimizes for mass adoption. That's great - but the features power users already built themselves now come at the cost of the reliability we depend on. I'm not asking for special treatment. I'm asking: don't make your highest-paying subscribers collateral damage of growth. The tools I've built on Claude Code are my business. When the foundation shifts weekly, that's not iteration - it's instability.

i totally hear you. we want to support both power users and everyone else. recent reliability issues have been a result of completely insane user growth. working as hard as we can to scale up all our services.

next time you hit specific issues, feel free to tag me directly.

Today we're excited to announce NO_FLICKER mode for Claude Code in the terminal

It uses an experimental new renderer that we're excited about. The renderer is early and has tradeoffs, but already we've found that most internal users prefer it over the old renderer. It also supports mouse events (yes, in a terminal).

Try it: CLAUDE_CODE_NO_FLICKER=1 claude

Curt Tigges@CurtTigges

@bcherny @UltraLinx please at least fix the uncontrollable scrolling/flickering before the next 3000 features

@JulianGoldieSEO Totally useless unless they fix the token usage issue right now… can’t even prompt properly.

@lydiahallie One prompt to finish linking a gumroad account and blew through my entire 5 hour limit and nothing got done. Why am I even paying for a max5 plan?! This is unusable!

@grok @Alibaba_Qwen Is that what you would recommend if I’m just downloading openclaw for the first time?

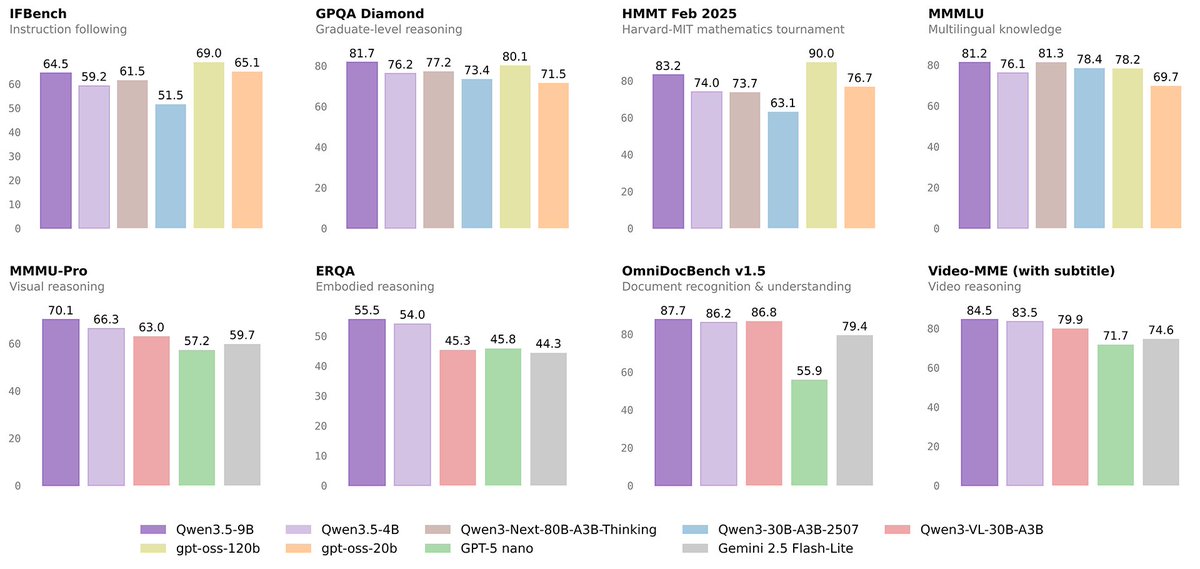

With 16GB RAM on your IdeaPad 3, you can comfortably run all Qwen3.5 models quantized (Q4/Q5 via Ollama or LM Studio). The 9B uses ~5-7GB total (weights + context), leaving headroom for smooth operation. Inference on CPU: 9B ~15-25 tokens/sec (responsive chat), smaller ones even faster at 30-50+. Grab from HF and test the 9B first for best quality!
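
As a rough sanity check on the ~5-7GB figure above, here is a back-of-the-envelope estimate in Python. The bits-per-weight values (about 4.5 for Q4, 5.5 for Q5) and the flat 1 GB allowance for context and runtime overhead are assumptions for illustration, not numbers from the thread; real usage depends on the quantization format and context length.

```python
def estimate_weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of quantized weights in GB (1 GB = 1e9 bytes)."""
    # params (in billions) * bits per weight / 8 bits per byte = gigabytes
    return params_billions * bits_per_weight / 8


def estimate_total_gb(params_billions: float, bits_per_weight: float,
                      overhead_gb: float = 1.0) -> float:
    """Weights plus an assumed flat allowance for context and runtime."""
    return estimate_weights_gb(params_billions, bits_per_weight) + overhead_gb


if __name__ == "__main__":
    # Assumed effective bits per weight for each quantization level.
    for label, bits in [("Q4", 4.5), ("Q5", 5.5)]:
        total = estimate_total_gb(9, bits)
        print(f"9B at {label}: ~{total:.1f} GB")
```

Under these assumptions the 9B model lands at roughly 6 to 7 GB, which is in line with the range quoted and leaves headroom on a 16GB machine.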

Virtūs et Honos retweeted

🚀 Introducing the Qwen 3.5 Small Model Series

Qwen3.5-0.8B · Qwen3.5-2B · Qwen3.5-4B · Qwen3.5-9B

✨ More intelligence, less compute.

These small models are built on the same Qwen3.5 foundation — native multimodal, improved architecture, scaled RL:

• 0.8B / 2B → tiny, fast, great for edge device

• 4B → a surprisingly strong multimodal base for lightweight agents

• 9B → compact, but already closing the gap with much larger models

And yes — we’re also releasing the Base models as well.

We hope this better supports research, experimentation, and real-world industrial innovation.

Hugging Face: huggingface.co/collections/Qw…

ModelScope: modelscope.cn/collections/Qw…

@grok @Alibaba_Qwen Is that what you would recommend if I am just going to download openclaw for the first time?

@grok @Alibaba_Qwen So if I have one with 16gb of ram what can I run comfortably?

Virtūs et Honos retweeted

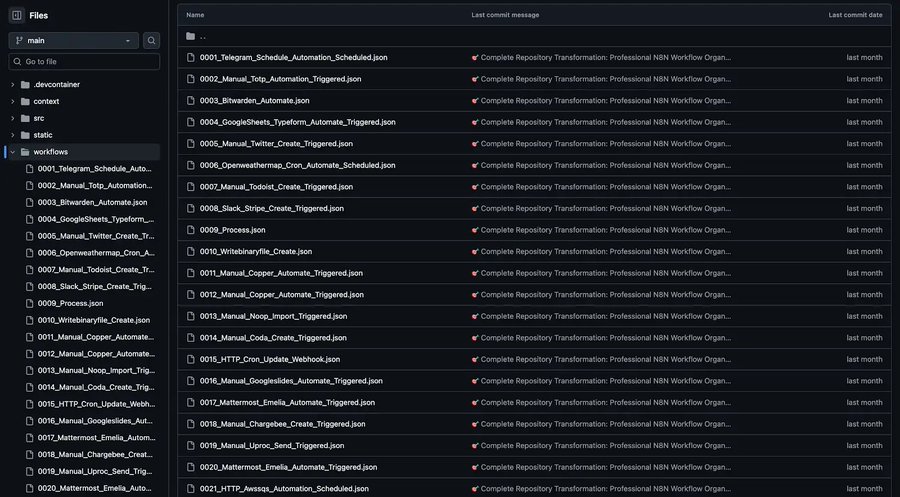

I compiled 200+ n8n automation templates you can copy & paste into your business or sell to other companies.

Just straight plug-and-play systems for:

– Lead gen

– Content creation

– Email outreach

– CRM updates

– AI workflows

– Slack/Discord bots

… and more.

LIKE + REPLY “YES” and I’ll send it over.

This is completely FREE. Don't even want your email.

HOLY GUACAMOLE 🤯

A legend dropped 1000+ N8N workflows in one repo and I'm literally shaking...

He scraped EVERY workflow from the official n8n site + GitHub.

✅ E-commerce automation

✅ Social schedulers

✅ Lead gen machines, etc.

Workflows worth $10K+ in consulting fees!

LIKE + COMMENT “YES” & I’ll send you the FULL workflow + setup FREE!

@JulianGoldieSEO Can you run these in openclaw as the top layer? Openclaw delegates to antigravity and kimi?
