Pinned Tweet

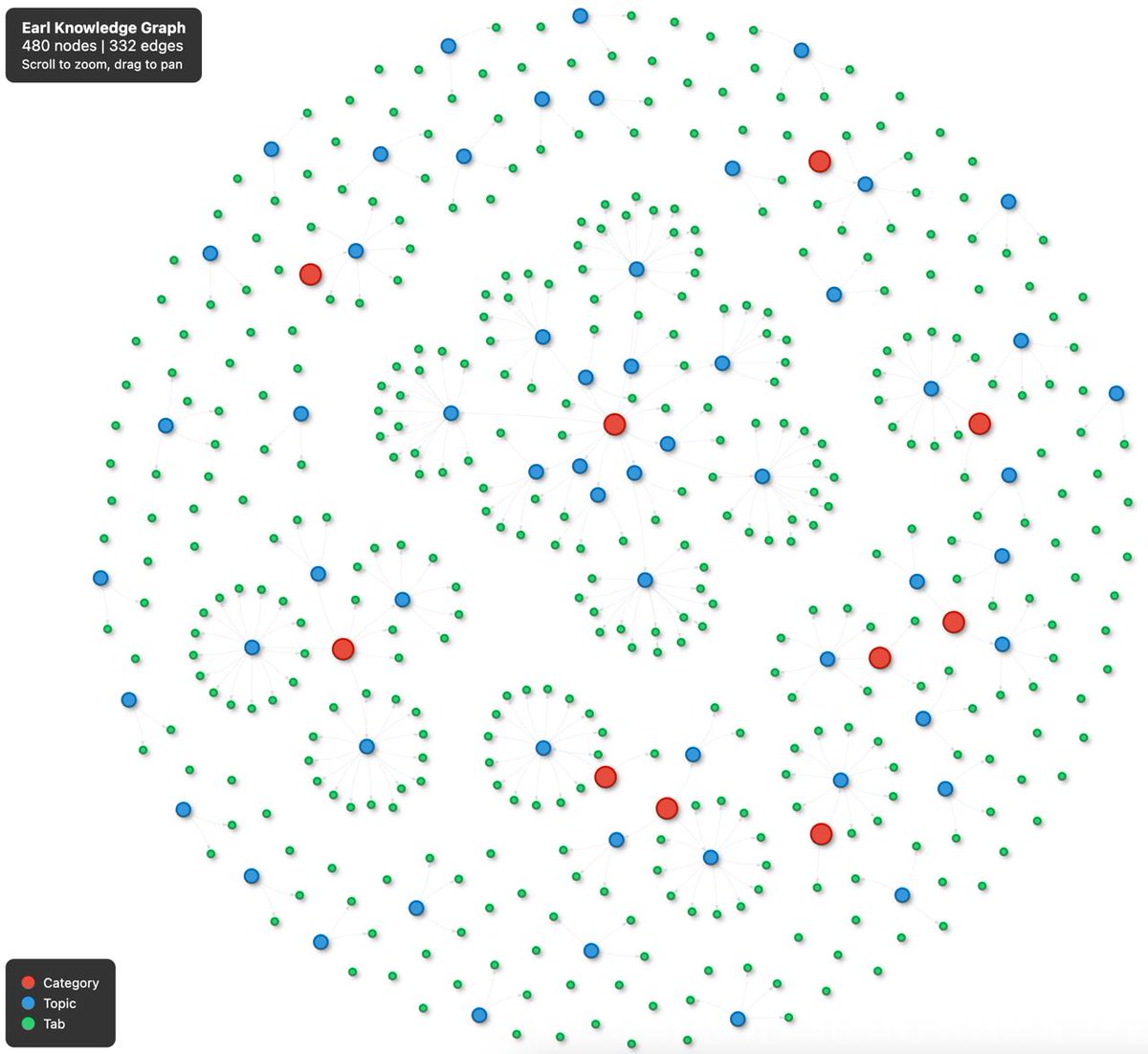

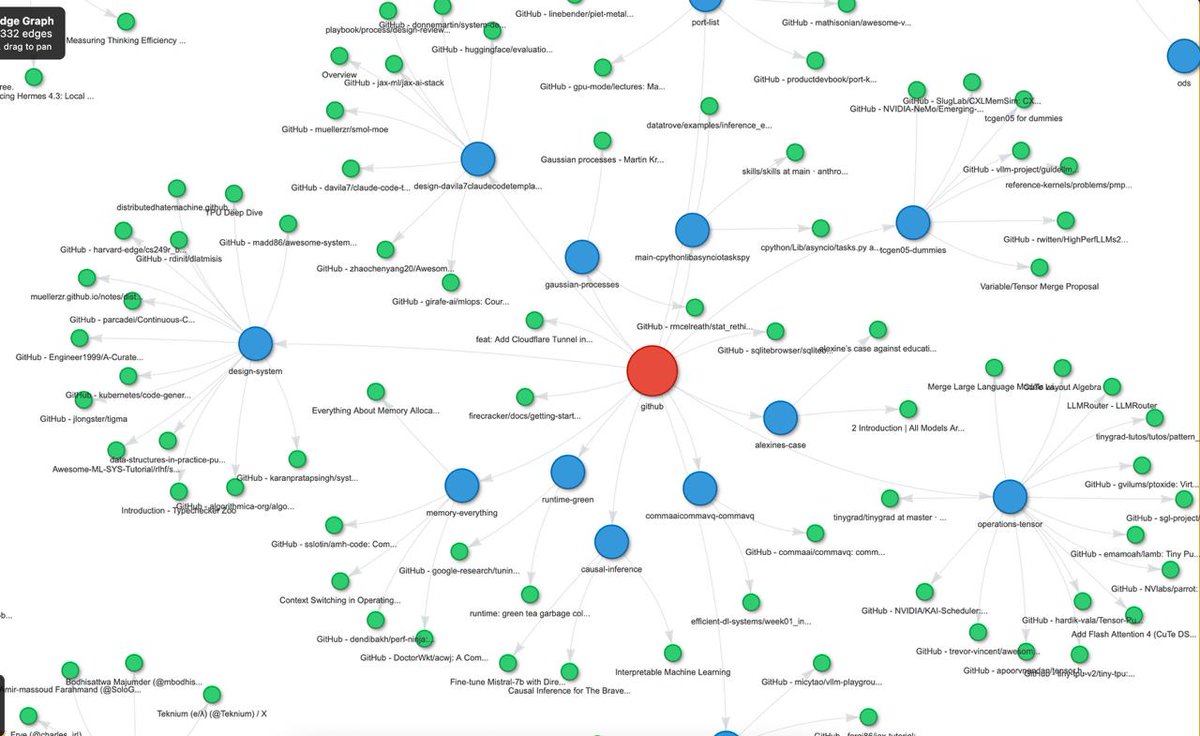

normal people read docs to learn @modal. i rebuilt the entire platform from scratch

distributedhatemachine.github.io/posts/modal

github.com/wtfnukee/minim…

@charles_irl pls hire

Egor Konovalov

Oh, you're using Copilot? Everyone's on Cursor now. Just kidding, we're all on Windsurf. We're using Cline. We're using Aider. We have an in-house MCP server mesh with custom tool schemas but wait, OpenCode just dropped so we're migrating to that instead. Our PM is on Gemini CLI. The team lead was on Codex but now she's back to copy-pasting into ChatGPT. If you're not on Amp, you're ngmi. Our intern is building on Goose for our internal tooling. Our CFO approved Claude Max so now we're porting our workflows to computer use. Our CTO is working on an agent-less RAG pipeline so we won't need vibe coding anymore. Our CEO thinks we're talking about actual vibrations. We're building clankercloud.