Sumit Datta

2.3K posts

@sumitdatta

41, engineer, founder, nocodo: Lovable for AI agents

Himalayan village, India · Joined March 2008

549 Following · 881 Followers

Pinned Tweet

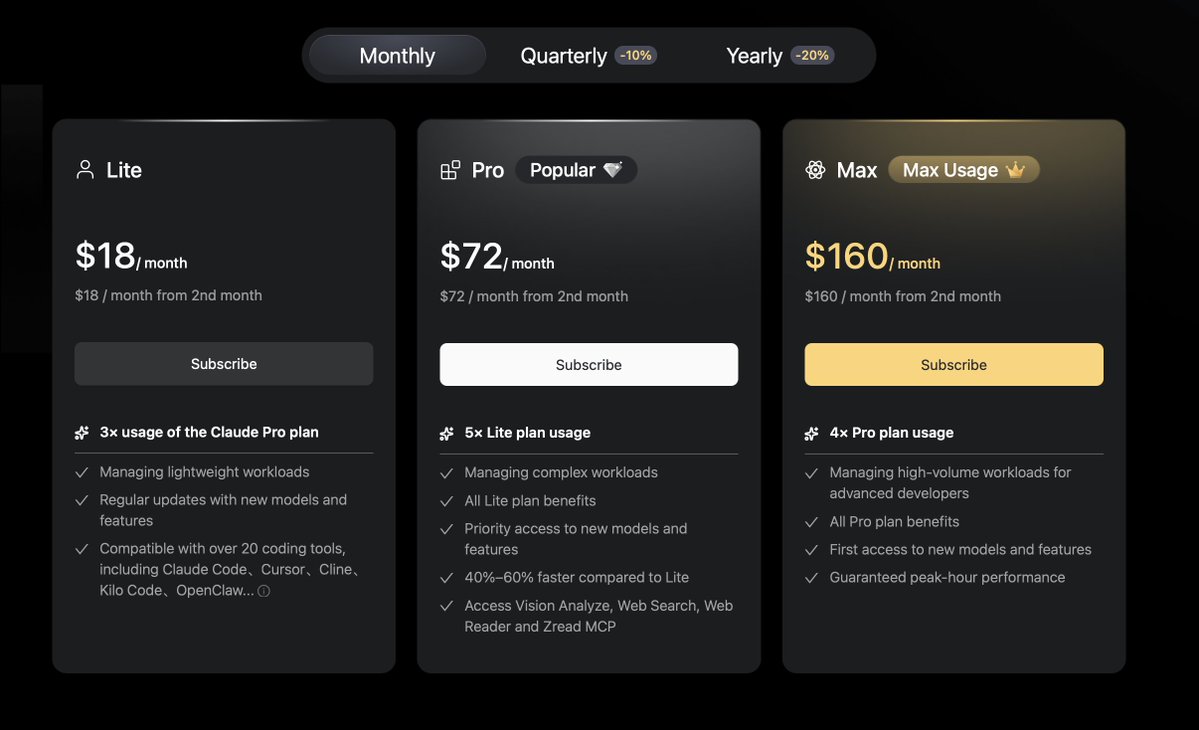

@ibocodes This is how pricing works. Look at the flagship killer Android phones.

The brands start with a mouth-watering price. In a year or two, they are selling at much higher prices.

Then there are new entrants.

@aryanlabde People also spend hundreds on movies, TV shows, rollercoaster rides.

For thousands of people who are now able to build anything at all by just talking in their human language - $200 is not much. It is the power of freedom.

And the tools are worse now than they will ever be

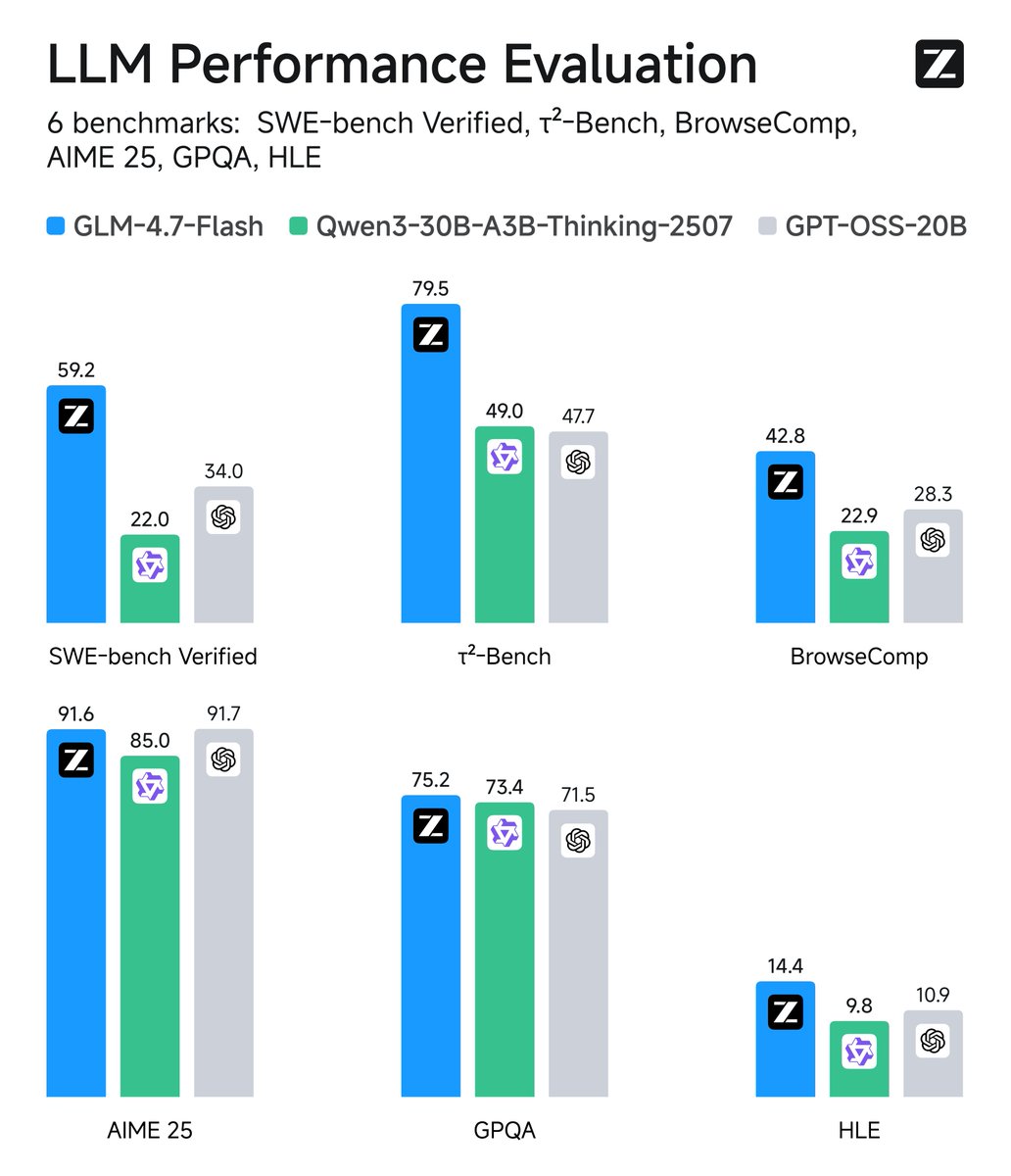

Who is gonna tell this guy?

Lol these people who cannot face reality. People really are sheep - they need a leader, they like the charm of OpenAI or Anthropic.

When other models are dominating and democratizing, these people will go out of their way to share scam.

Melvyn • Builder @melvynx

all people telling you to switch to this Chinese model as they are "as good as Opus for 1/10 of the price" are basically liars and incompetent. they don't use the tools, they make tweets for hype, because using this model for 2 minutes makes you realize how dumb they are. what for? seriously

@XSuxBro @sudoingX @pupposandro Tiny models are good at function calling. I use them to decide and branch out to specific agents for the given task. You have to plan your harness around tiny models.
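
A minimal sketch of the "decide and branch out" idea, not the author's actual harness. The agent names, `route`, and `keyword_picker` are all hypothetical; `keyword_picker` is a stand-in for the tiny model's function call so the example runs offline.

```python
from typing import Callable, Dict, List

# Hypothetical agents: each takes the task text and returns a result string.
def billing_agent(task: str) -> str:
    return f"[billing] {task}"

def search_agent(task: str) -> str:
    return f"[search] {task}"

def fallback_agent(task: str) -> str:
    return f"[fallback] {task}"

AGENTS: Dict[str, Callable[[str], str]] = {
    "billing": billing_agent,
    "search": search_agent,
}

def route(task: str, pick_agent: Callable[[str, List[str]], str]) -> str:
    """Ask a tiny model (pick_agent) for an agent name, then dispatch to it."""
    choice = pick_agent(task, sorted(AGENTS)).strip().lower()
    # Tiny models can return unexpected text, so validate before dispatching.
    return AGENTS.get(choice, fallback_agent)(task)

def keyword_picker(task: str, options: List[str]) -> str:
    """Stand-in for the tiny model (assumption): picks by keyword match."""
    for name in options:
        if name in task.lower():
            return name
    return "unknown"

if __name__ == "__main__":
    print(route("billing question about my invoice", keyword_picker))
    print(route("hello there", keyword_picker))
```

In a real harness, `pick_agent` would wrap a call to the small model with the agent names offered as the only valid choices. The validation step matters more as the model gets smaller.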

read this carefully anon. @pupposandro wrote a single fused CUDA kernel for all 24 layers of Qwen 3.5-0.8B. one kernel launch. absolutely zero CPU round trips between layers. the result?

a $900 RTX 3090 from 2020 hit 411 tok/s. apple's M5 Max hit 229. the 3090 won on speed AND efficiency. 1.55x faster than llama.cpp on the same hardware.

the gap between NVIDIA and Apple was never about silicon. it was software. generic frameworks waste cycles on kernel launch overhead, memory re-fetches, thread synchronization. when you fuse everything into one dispatch the hardware shows what it actually has.

this is the beginning of something bigger. we already proved that a 27B dense model on a single 3090 one-shots what $70K enterprise hardware cannot. now imagine what happens when someone writes kernels optimized specifically for the 3090 and the models that run best on it. not generic inference. hardware specific, model specific, fused from the kernel level up.

the 3090 is not a relic. it's an untapped research platform. and the people writing these kernels are proving it with data.

all open source and reproducible anon.

Sandro @pupposandro

@sudoingX @pupposandro Interesting. I am not from an ML background but when I saw "Convert ONNX models into native, backend-agnostic Burn [Rust] code" on github.com/tracel-ai/burn… - I thought, what if we create custom code for a GPU for a specific model. Would it improve performance?

I would love to try

Multiple entity extraction (financial transactions, orders, locations, events, persons, orgs...) now works with @Alibaba_Qwen Qwen 3.5 0.8b model - should work on any small laptop

(Tested on M4 Mac Mini 16 GB + @UnslothAI model on llama.cpp)

github.com/brainless/dwata

@rezoundous I use the M4 Mac Mini with 16GB system memory. Full time development setup with Zed, Claude Code, Codex or opencode.

I use Ollama + Ministral 3:3b in my own product github.com/brainless/dwata

Works without hiccups.

@0xSero Sorry I am not well versed, what do you mean by a pure logic agent? I understand having less knowledge.

Context: "prune down to 40% if you accept lots of knowledge loss and want a pure logic agent"

This is possibly the best we can get until another compression breakthrough pops up for LARGE MoEs

WEIGHTS:

- 50% reap + 3bit quant == 81.75% compression

KV-CACHE:

- turboquant 4

Basically you can run large MoEs with about 18-20% of the vram of the BF16

So for Deepseek (~671 GB) you will be able to run the weights in 127 GB and a 200k KV-cache in about 20-60 GB of VRAM.

Small models provide less savings. I'd say you can comfortably prune 20-25% of the experts and quantise to about 4-8 bits.

For 1T param models I think it's possible to prune down to 40% if you accept lots of knowledge loss and want a pure logic agent, IDK how this would look though
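
A rough check of the figures in this post, as a sketch under stated assumptions. It treats pruning and quantization as simple multipliers on a 16-bit baseline, which is an idealization and not the post author's method. The function and variable names are hypothetical.

```python
def remaining_fraction(keep_ratio: float, quant_bits: float, base_bits: float = 16.0) -> float:
    """Fraction of the original weight memory left after pruning and quantization."""
    return keep_ratio * (quant_bits / base_bits)

# Idealized case (assumption): keep 50% of all weights, 3 bits each instead of 16.
ideal = remaining_fraction(0.5, 3)

# Figures quoted in the post.
quoted_remaining = 1 - 0.8175      # "81.75% compression"
deepseek_ratio = 127 / 671         # 127 GB out of ~671 GB

# Average bits per kept weight that the quoted figure would imply at a 50% keep ratio.
implied_bits = quoted_remaining / 0.5 * 16

if __name__ == "__main__":
    print(f"idealized remaining:  {ideal:.4f}")
    print(f"quoted remaining:     {quoted_remaining:.4f}")
    print(f"127 GB / 671 GB:      {deepseek_ratio:.4f}")
    print(f"implied bits/weight:  {implied_bits:.2f}")
```

The quoted 18.25% and the 127 GB Deepseek estimate agree with the "18-20%" claim. The idealized multiplier comes out lower than the quoted figure, and the post does not explain the gap. Plausible causes, offered only as guesses, are that pruning applies to expert weights alone and that quantized formats carry per-block overhead.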

------------

My current plan:

- Prune GLM-5 50% Done

- Quantize to EXL3 w 3bits if no turboquant or if 4bits

- Train the new models to respond like the original

PEFT from GLM-5 --> GLM-5-358B-REAP --> REAP-GGUF-3BIT -> REAP-EXL-3BIT

Very few samples were enough to recover an 80% REAP to semi-coherent from completely broken.

I needed GliNER2 for a Rust-based desktop app. So I created a quick port of GliNER2 to Rust/Candle:

github.com/brainless/glin…

Sumit Datta retweeted

Introducing GLM-4.7-Flash: Your local coding and agentic assistant.

Setting a new standard for the 30B class, GLM-4.7-Flash balances high performance with efficiency, making it the perfect lightweight deployment option. Beyond coding, it is also recommended for creative writing, translation, long-context tasks, and roleplay.

Weights: huggingface.co/zai-org/GLM-4.…

API: docs.z.ai/guides/overvie…

- GLM-4.7-Flash: Free (1 concurrency)

- GLM-4.7-FlashX: High-Speed and Affordable

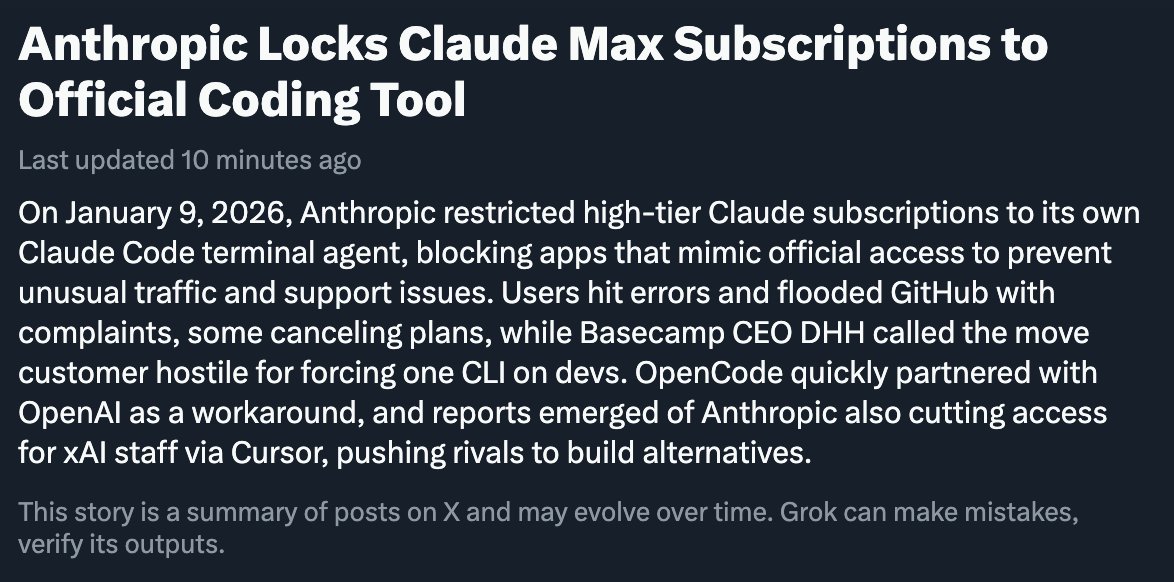

@burkov Anthropic's largest revenue stream is API usage, not Claude Code.

Enterprise has been their focus - agents. It is all about agents. Claude Code is only one such agent, of course, their own agent.

Being hyper focused on that one agent is not even helpful. They are a model maker

An absolutely right decision. Claude models were fine-tuned with reinforcement learning to work with a specific interface. This is why Claude is so effective in coding.

When you withdraw the model that was finetuned to work in a certain environment and put it into a different one, the consequences are absolutely unpredictable. Furthermore, the user experience is arbitrarily worse compared to the UX with the environment for which the model was finetuned.

I'm 100% certain that people who still claim that Gemini is better for coding than Claude used Claude in a non-native environment.

@trq212 The double standard in model pricing is ridiculous. Claude is a model, text in, text out. That is effing it.

Anthropic has two pricing tiers: one if you use CC (can use Claude subscription) and another for every other agent (API pricing, which is expensive).

Is CC a lock-in?

@timsoulo Because they lose customers as easily as they get them. It's easy to count up the payments for some months to show ARR but that's not actually what they get over a 12 month period.

Churn is super high. Changing platforms is not like changing a cloud provider. People build and leave

Why did Lovable need to raise $300m series B, when they're already at $200M ARR with just 100 people?

...and they raised $200M series A not long ago.

I mean:

- They didn't pay for ads all around New York and San Francisco like Clay.

- They didn't pay for Las Vegas Sphere and massive drone show like Gamma.

- They didn't pay to sponsor F1 and Arsenal like Airwallex (not an AI startup, but I needed a third one).

So what do they need so much money for?

⚠️ IMPORTANT: I'm not a hater!

I actually love using Lovable and I'm quite proud of my growing collection of vibe-coded apps.

But coming from the bootstrapped world I'm genuinely confused as to why they need so much money, when they already seem to be doing so well with their ARR.

Any theories?

@forgebitz My entire agent building platform runs on SQLite itself. And right now I'm focused on a good LLM based analyst for SQLite databases.

Feel free to check the progress: github.com/brainless/noco…

Sumit Datta retweeted

People ask what faster AI actually means for them. Here’s a concrete example. In the video, GLM-4.6 on @cerebras builds Space Invaders in ~15% of the time it takes Claude Sonnet 4.5 Thinking.

The point isn’t speed for its own sake.

When latency drops far enough, new classes of workflows become possible.

This is like Netflix.

Netflix didn’t build a $400 billion business because it mailed DVDs faster. Its entire business changed. It became a movie studio. This transformation was made possible when streaming became fast and reliable enough that people stopped thinking about buffering, downloads, and storage altogether.

At that point, behavior changed: people browsed, clicked, abandoned, re-tried - without friction.

Fast inference does the same thing for AI.

When responses are slow, you design around waiting.

@hxtxmu @TheAhmadOsman Generally I mention "opencode is a coding agent that's already installed" - to give some context. And it works well.

opencode with GLM fights the Rust compiler per file, runs code lint, format, etc. You can work at file or module level

Claude does higher level management.

@hxtxmu @TheAhmadOsman Let's say you refactor & multiple dependent files need updates. So in Claude Code, something like:

"We refactored abc.rs and multiple Rust files need update. Please check using cargo. Then use `opencode run "<prompt>"` to tell opencode to fix, one file at a time"
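
The same loop can be sketched as a standalone script, as an illustration and not the author's setup, where Claude Code does the orchestration from the prompt above. It assumes `cargo` and `opencode` are on the PATH. The function names and the prompt wording are hypothetical, and only `opencode run "<prompt>"` comes from the post.

```python
import json
import subprocess
from typing import List

def files_with_errors(cargo_json_output: str) -> List[str]:
    """Collect files with primary error spans from `cargo check --message-format=json` output."""
    files: List[str] = []
    for line in cargo_json_output.splitlines():
        line = line.strip()
        if not line.startswith("{"):
            continue  # skip anything that is not a JSON message
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue
        if event.get("reason") != "compiler-message":
            continue
        message = event.get("message", {})
        if message.get("level") != "error":
            continue  # ignore warnings and notes
        for span in message.get("spans", []):
            name = span.get("file_name")
            if span.get("is_primary") and name and name not in files:
                files.append(name)
    return files

def fix_one_file_at_a_time() -> None:
    """Run cargo check, then hand each failing file to opencode in turn."""
    check = subprocess.run(
        ["cargo", "check", "--message-format=json"],
        capture_output=True,
        text=True,
    )
    for path in files_with_errors(check.stdout):
        # Hypothetical prompt wording; adjust to the refactor at hand.
        prompt = f"We refactored abc.rs. Fix the compiler errors in {path} only."
        subprocess.run(["opencode", "run", prompt], check=False)

if __name__ == "__main__":
    fix_one_file_at_a_time()
```

Fixing one file can change the errors reported in others, so re-running `cargo check` between files is likely safer than working through a single stale list.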